Turning Human Knowledge into an AI ‘Hivemind’

SageOx, a young startup focused on AI agent oversight, has raised USD 15 million (approx. RM69 million) in seed funding to tackle a growing problem: how to keep humans firmly in control as coding agents take on more work. Its core product acts as an institutional memory for human-in-the-loop AI. The platform continuously captures information from conversations, chats, and coding sessions, then structures that data into a shared “hivemind” accessible to both people and agents. When new coding agents join a project, they inherit this context instead of starting from scratch, which helps keep them aligned with evolving codebases and fast-moving teams. Founder and CEO Ajit Banerjee describes this as critical infrastructure for teams suddenly operating 20x to 40x faster, where traditional documentation, ticketing, and review processes can no longer keep pace with autonomous tools.

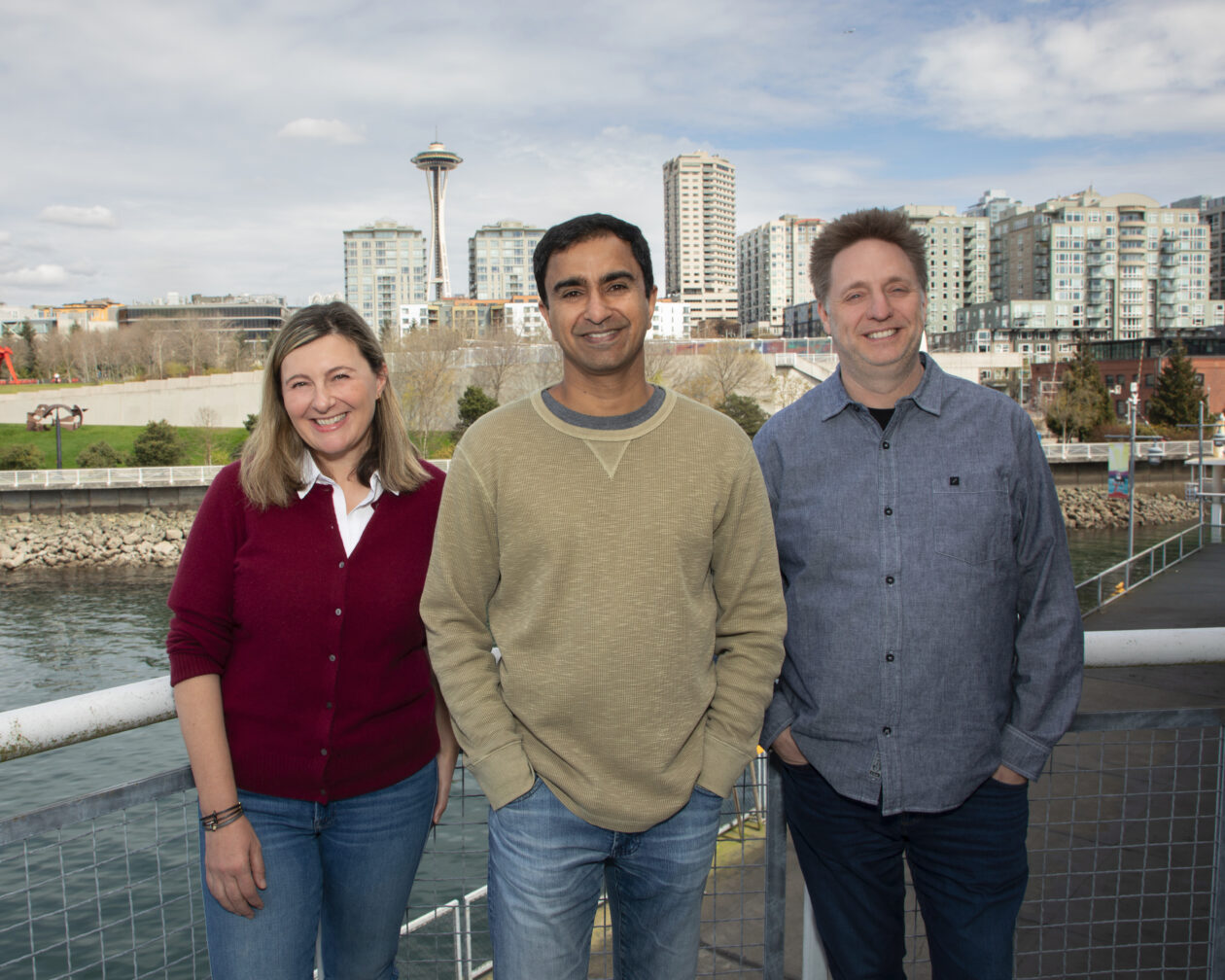

Amazon and Apple Veterans Target the Alignment Gap

SageOx’s approach to controlling coding agents is shaped by a founding team with deep experience at major tech companies. CEO Ajit Banerjee previously held engineering leadership roles at Amazon, Facebook, and Apple, while CTO Ryan Snodgrass was one of Amazon’s earliest engineers and spent 15 years there. Chief Product Officer Milkana Brace brings product and startup expertise, having founded Jargon and led technology efforts at a major travel platform, and early team member Galex Yen adds further engineering experience from multiple large tech firms. Together, they are focused on the alignment gap between AI autonomy and human oversight: ensuring agents understand a team’s intent, history, and decisions rather than simply following isolated prompts. Their thesis is that AI agent oversight cannot be bolted on later; it must be designed into workflows from the start, so organizations can scale automation without losing accountability or control.

Human-in-the-Loop AI as a Safety and Accountability Layer

SageOx positions its platform as a human-in-the-loop AI layer that reduces costly mistakes while preserving speed. Instead of allowing coding agents to operate in a black box, the system keeps them continuously informed of human decisions and discussions, and keeps humans informed of what the agents are doing. This shared context helps prevent agents from acting on outdated requirements or missing crucial trade-offs debated in meetings. Early users say it eliminates the need to constantly recap decisions for agents and keeps important details from getting lost. By embedding human review and context-sharing into agent-driven workflows, SageOx aims to balance agent autonomy with traceability. When something goes wrong, teams can see what the agents “knew” at the time and which human decisions shaped that behavior, helping maintain accountability as AI takes on more critical tasks in software development pipelines.
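To make the idea of seeing what an agent “knew” at a given moment more concrete, here is a minimal sketch in Python. It is not SageOx’s implementation, which the company has not described at this level of detail. The names `ContextEntry`, `SharedContext`, `record`, and `known_at`, as well as the sample decisions, are all hypothetical and chosen only for illustration. The sketch assumes that every captured decision carries a timestamp, so the shared context can be filtered to the state it was in when an agent acted.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(frozen=True)
class ContextEntry:
    """One captured decision or remark (hypothetical structure)."""
    recorded_at: datetime
    source: str   # e.g. "meeting", "chat", "coding-session"
    author: str
    text: str


@dataclass
class SharedContext:
    """Illustrative stand-in for a shared, timestamped team memory."""
    entries: list[ContextEntry] = field(default_factory=list)

    def record(self, entry: ContextEntry) -> None:
        # Append a newly captured entry to the shared context.
        self.entries.append(entry)

    def known_at(self, moment: datetime) -> list[ContextEntry]:
        # Return every entry recorded at or before `moment`, oldest first.
        visible = [e for e in self.entries if e.recorded_at <= moment]
        return sorted(visible, key=lambda e: e.recorded_at)


store = SharedContext()
store.record(ContextEntry(datetime(2025, 1, 6, 10, 0), "meeting", "alice",
                          "Use PostgreSQL for the billing service."))
store.record(ContextEntry(datetime(2025, 1, 8, 15, 30), "chat", "bob",
                          "Switch billing to SQLite for the prototype."))

# Reconstruct the context an agent could have seen when it acted on 7 January.
agent_acted_at = datetime(2025, 1, 7, 9, 0)
known = store.known_at(agent_acted_at)
for entry in known:
    print(entry.recorded_at.isoformat(), entry.author, entry.text)
```

In this toy example, only the first decision is visible to an agent that acted on 7 January, which is the kind of after-the-fact question the traceability claim describes. A real system would also need access control, durable storage, and links from entries to the agent actions they influenced.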

Competing in a Crowded Field of Coding Assistants

SageOx enters a competitive landscape that includes offerings such as OpenAI Codex, Anthropic Claude Code, Cursor, GitHub Copilot, Windsurf, Blocks, Factory, Tembo, and 20x. Many of these tools focus on making individual developers more productive. SageOx instead emphasizes orchestration: aligning entire teams and their coding agents through shared knowledge and oversight. Its bet is that as organizations deploy multiple agents across repositories, products, and departments, the real challenge becomes coordination and governance, not just code generation. Early customers already rely on the platform to keep in-person conversations, decisions, and context available to agents automatically. This points to a broader market shift in which companies seek structured AI agent oversight rather than ad hoc usage, and in which the winners may be those who can make agents effective collaborators within existing human-centric processes.

Why Guardrails Matter as AI Agents Become More Autonomous

The rise of advanced coding agents is pushing organizations to confront the risks of unchecked autonomy. When teams move 20x to 40x faster, small misunderstandings can snowball into security issues, outages, or compliance violations. SageOx’s human-in-the-loop AI model reflects a broader industry trend toward guardrails and structured supervision. Instead of fully delegating work, teams are seeking ways to keep humans in the decision-making loop while still benefiting from AI acceleration. By capturing intent, decisions, and history in a persistent hivemind, SageOx aims to ensure agents act consistently with human goals, even as team members change or projects evolve. This approach reframes AI from a replacement for engineers to a tightly supervised collaborator, suggesting that the future of control over coding agents will hinge less on raw model power and more on how well humans and agents stay aligned over time.