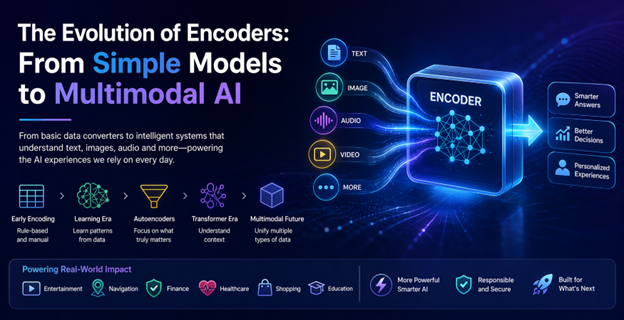

What Is an Encoder, Really?

Behind every impressive AI output sits an encoder doing the quiet work of understanding. In simple terms, an encoder turns messy real-world inputs—words, pixels, or sensor readings—into structured numerical representations that a model can reason over. Early on, this looked very mechanical. Engineers manually converted categories like “small,” “medium,” and “large” into numbers, or used basic tricks such as one-hot vectors for words. These systems handled data, not meaning: a recommendation engine could treat “running shoes” as a category, but had no idea they were related to “fitness watches” or “hydration packs” unless explicitly programmed. The arrival of neural networks changed this. Encoders started to learn patterns directly from data, discovering which features mattered most. Text and images were mapped into dense vectors that capture similarity and context, laying the foundation for large language encoders and the encoder decoder architecture that powers many modern AI tools.

From Single-Modal Text and Images to Multimodal AI Models

Once encoders could learn, they rapidly advanced in both language and vision. For text, neural encoders turned words into vectors where “cheap flights” lives close to “budget airfare,” enabling smarter search and classification. In images, encoders learned visual patterns like edges, textures, and shapes, making it possible to recognize objects without hand-coded rules. Autoencoders pushed things further by compressing inputs and reconstructing them, forcing encoders to capture the essence of data while discarding noise—useful for tasks like fraud detection or image compression. The transformer era was another turning point: transformer-based encoders can look at entire sentences or images at once, weighing what is most important in context. This ability to model relationships paved the way for multimodal AI models, where separate encoders for text and images share a common representation space, forming the backbone of today’s powerful vision language models.

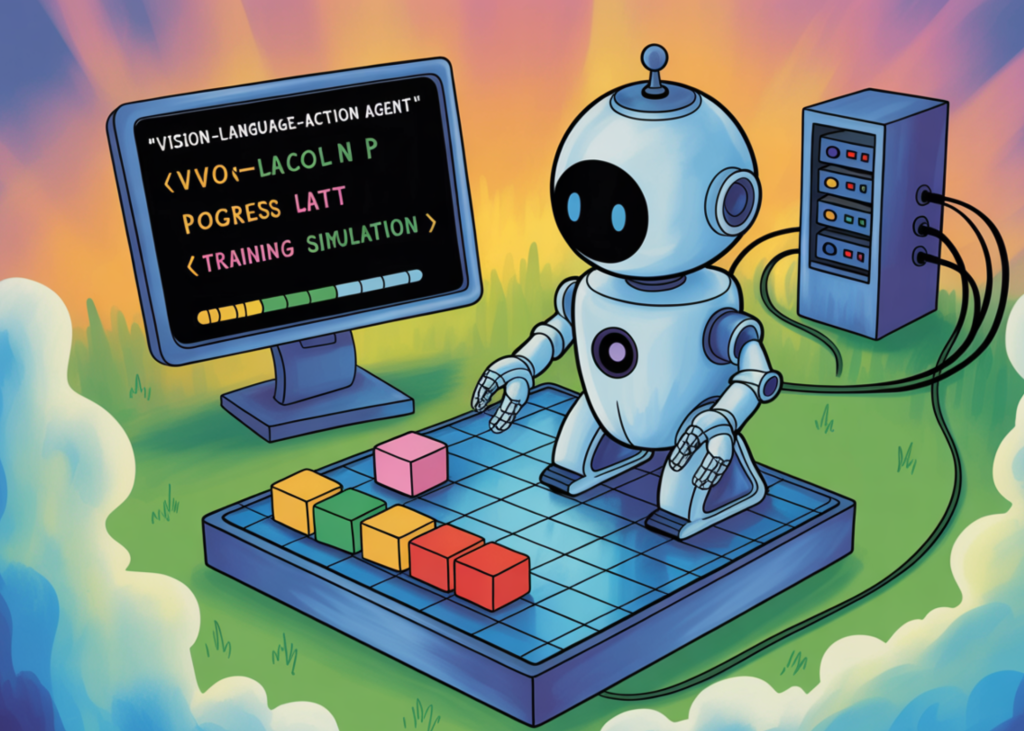

How Vision Language Models and Embodied Agents Use Encoders

Modern multimodal encoders sit at the heart of systems that can see, read, and act. In vision language models, an image encoder turns pixels into a visual embedding, while a language encoder processes your prompt. Because both use compatible representations, the model can align “a red mug on the left” with the correct region in an image. The same idea enables AI embodied agents—systems that perceive their environment and take actions. In a simple grid-world example, an image encoder can summarize a top-down RGB view of a world with an agent, obstacles, and a goal. A language or instruction encoder captures what you ask the agent to do. A control module then chooses actions like UP, DOWN, LEFT, RIGHT, or STAY, guided by these encodings and a learned internal world model, allowing lightweight vision-language-action systems to plan and move purposefully.

Everyday Applications: From Robots to Phone Assistants

For everyday users, the evolution of encoders shows up as tools that feel less like software and more like collaborators. In robotics, multimodal encoders enable natural language control: you can point a camera-equipped robot at a scene and say “go around the chair and pick up the blue box,” and its vision and language encoders jointly interpret your request. On phones and laptops, large language encoders help offline assistants understand long, messy voice queries and cross-reference them with photos, screenshots, or stored documents. Document-analysis tools similarly rely on encoders to read both text and embedded images, extracting structure from contracts, forms, or reports. Even recommendation systems and navigation apps are powered by encoders that learn patterns in your behavior. As encoders become more multimodal, search, chatbots, and productivity apps gain a richer understanding of both what you say and what you show them.

What Comes Next for Multimodal Encoders

The next wave of encoder research is about going beyond static text and images into richer streams of experience. Multimodal AI models are starting to incorporate video, audio, and continuous sensor data, requiring encoders that can track how the world changes over time. In embodied agents, encoders are being paired with latent world models and model predictive control, so an agent can imagine possible futures and choose actions accordingly, not just react frame by frame. This opens the door to robots that learn from demonstrations, home assistants that understand both your speech and your gestures, and industrial systems that fuse camera feeds with temperature, pressure, or motion sensors. For users, the payoff is more natural, context-aware interaction: devices that can watch, listen, read, and respond in real time. Encoders may stay invisible, but they will increasingly shape how we experience AI everywhere.