When a Helium Shock Exposed the AI Chip Supply Chain

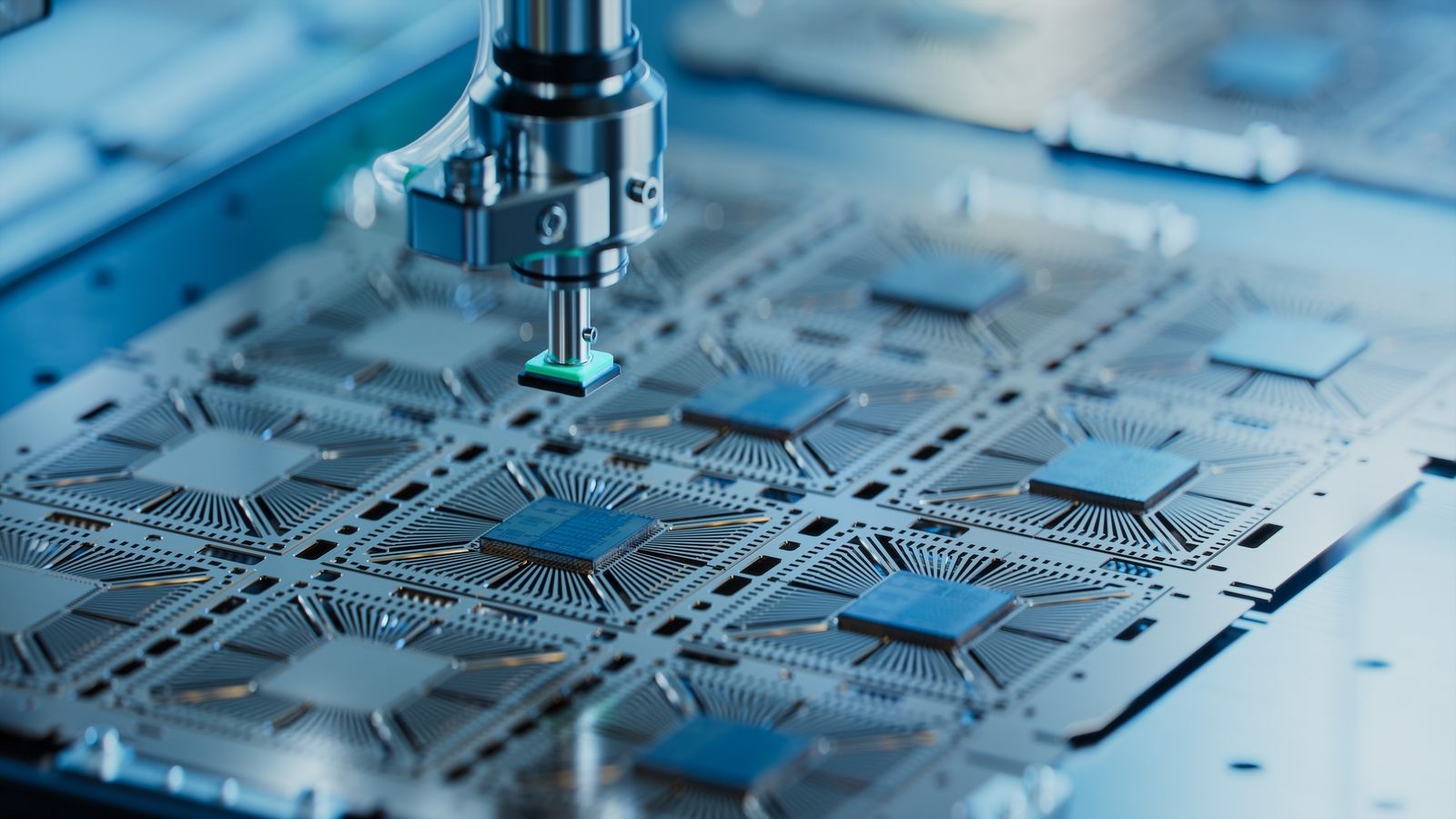

The AI chip supply chain has discovered an uncomfortable truth: its weakest links are often the least visible. During recent military operations that closed the Strait of Hormuz to commercial traffic, markets fixated on crude oil while a quieter crisis unfolded around helium. A major industrial hub responsible for roughly 30% to 38% of global helium output was hit and forced to halt operations, and helium spot prices roughly doubled over the following weeks. Helium is indispensable in semiconductor manufacturing, where it is used to cool wafers, purge process equipment, and detect microscopic leaks, and for these uses there is no practical substitute today. At the same time, high-bandwidth memory was already sold out through 2026, and lead times for advanced packaging had stretched to as long as two years. Investors had largely priced AI as a pure demand story, but the episode revealed that the AI hardware stack is constrained as much by fragile supplies of materials, gases, and processes as by the chips themselves.

Why Humble Hard Drives Became Strategic AI Infrastructure

As model sizes and training datasets balloon, storage and memory have become strategic assets. Data centers now account for the bulk of storage vendors’ shipments: 87% of one leading supplier’s volume in a recent quarter. Demand for high-capacity hard disk drives and solid-state drives has surged so sharply that this supplier has already sold out its top-capacity HDDs for the entire year, and hyperscale customers are pre-booking inventory for the next two years as well. A shortage of these drives pushed high-capacity HDD prices up 60% in just three months, while SSD prices climbed even faster. The payoff has been stronger margins: one vendor’s non-GAAP earnings jumped 53% year over year, and its guidance points higher still. For investors, this is a reminder that the AI hardware stack extends far beyond GPUs. For cloud providers, constrained storage is now both a cost driver and a competitive battleground, directly influencing data center efficiency and the pricing of AI services.

ZAM: A Lower-Power HBM Alternative Aiming at AI Workloads

The escalating importance of memory has opened the door to new AI memory technologies. SAIMEMORY, a subsidiary backed by a major telecom company and working with Intel, is developing Z-Angle Memory (ZAM) as a next-generation alternative to conventional high-bandwidth memory (HBM). ZAM builds on US-backed research and on Intel’s advances in DRAM stacking and bonding, using a vertically oriented architecture that targets higher capacity, greater bandwidth, and significantly lower power consumption. ZAM has been selected for subsidies under a government Post-5G infrastructure R&D project, which could cover a large share of its development costs. RIKEN is supporting evaluation and system-level integration, a sign that ZAM is being designed from the outset as part of the AI hardware stack rather than as a standalone chip. The core ambition is clear: replace today’s power-hungry memory with an HBM alternative tuned for AI workloads, easing the thermal and energy constraints that currently limit data center efficiency.

From GPUs to Bandwidth, Latency, and Energy Across the Stack

Taken together, these developments highlight a broader architectural shift. AI performance is no longer defined only by how many GPUs sit in a rack; it is increasingly shaped by bandwidth, latency, and data locality all the way from storage through memory to compute. Shortages of high-bandwidth memory and high-capacity drives show that moving data and keeping accelerators fed is now as challenging as raw computation. Helium constraints and long packaging lead times reveal how fragile the AI chip supply chain has become. Experimental approaches like ZAM aim to cut power use while sustaining bandwidth, in line with growing pressure to improve data center efficiency. As AI models grow, the cost and energy of shuttling data between disks, DRAM, HBM or its alternatives, and accelerators will be a key differentiator. Vendors that can optimize this end-to-end path, from new memory stacks to smarter storage tiers, stand to gain outsized advantages in both performance and operating costs.

Winners, Losers, and What Users Will Actually Notice

The emerging winners in this new phase of AI infrastructure may not be the usual GPU champions. Storage suppliers with locked-in demand and pricing power are already seeing margin expansion and strong earnings growth. If ZAM and similar HBM alternatives succeed, they could challenge incumbent DRAM vendors, shrink the power footprint of AI clusters, and reshape the economics of both training and inference. Lower memory power and better data locality would enable denser racks and more efficient data centers, potentially slowing energy cost inflation in cloud AI services. For enterprises and consumers, these shifts are likely to surface as tiered, AI-optimized cloud offerings, new device categories that advertise “AI-ready” storage and memory, and a much greater emphasis on energy-efficient AI in corporate roadmaps. In the next phase of the AI boom, bytes, bandwidth, and watts may matter as much as teraFLOPS.