GPT‑5.5 rolls out across ChatGPT and Copilot as a workhorse model

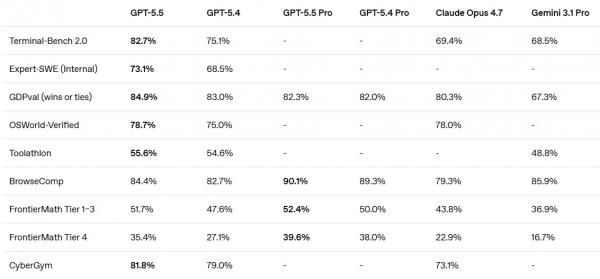

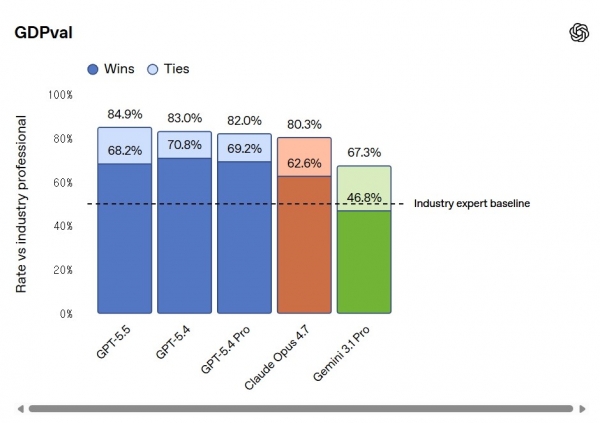

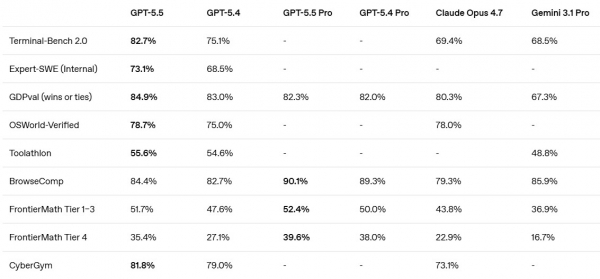

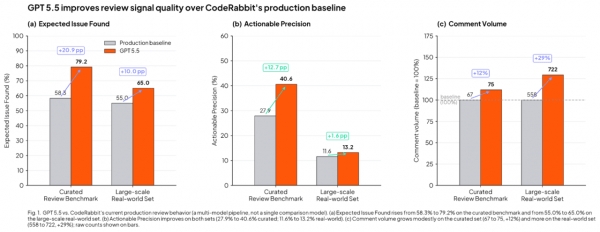

GPT‑5.5 is OpenAI’s most capable model yet for complex work, rolling out to Plus, Pro, Business, and Enterprise users of ChatGPT and Codex and increasingly embedded in Microsoft’s Copilot ecosystem. OpenAI pitches it as “smarter and more intuitive,” with a focus on handling multi‑step workflows such as coding, debugging, data analysis, document creation, and early‑stage scientific research with less hand‑holding. Internally codenamed “Spud,” GPT‑5.5 is the first fully retrained base model since GPT‑4.5 and is tuned for agentic coding and autonomous tool use. Benchmarks highlight its strength in planning and execution: it scores 82.7% on Terminal‑Bench 2.0 and 35.4% on the FrontierMath reasoning benchmark, while business‑oriented GDPval results underscore its suitability for knowledge work. At the same time, it still trails Anthropic’s Claude Opus 4.7 on SWE‑Bench Pro, suggesting competitive differentiation rather than outright dominance.

Inside OpenAI’s super app push: from chatbot to universal work interface

GPT‑5.5 sits at the center of OpenAI’s ambition to turn ChatGPT into a full‑blown super app. Instead of a conversational bot that only answers questions, the company is converging ChatGPT, Codex, and an AI browser into a single interface that can interpret messy prompts, plan workflows, and carry out tasks end‑to‑end. In practice, that means one surface where a user can draft contracts, refactor code, run command‑line tasks, analyse spreadsheets, and even pursue exploratory math or scientific problems without constantly switching tools. Executives describe humans acting as “orchestrators” while the model does the heavy lifting, especially in agentic coding and computer use. Token efficiency gains in coding workflows and stronger safeguards against cyber and bio misuse are meant to make this super‑app layer both economically viable at scale and more governable. For enterprises, OpenAI is signaling that ChatGPT is evolving into a primary work environment rather than a sidecar assistant.

Microsoft’s OpenAI deal pivots from exclusivity to multi‑cloud GPT access

Microsoft has reshaped its partnership with OpenAI by ending exclusive cloud rights and allowing GPT models to run on rival platforms such as AWS and Google Cloud. The company retains intellectual property licenses to OpenAI models and products until 2032, but these are now non‑exclusive, with OpenAI still debuting new products first on Azure. In return, Microsoft will no longer pass a revenue share from OpenAI model sales on Azure back to OpenAI through 2030, effectively keeping the 20% cut it had previously returned. This marks a strategic pivot from defending exclusivity—Microsoft had even weighed legal action over OpenAI’s AWS plans—to locking in guaranteed revenue and cementing Azure as the preferred, but not sole, launch partner. As GPT‑5.5 expands across GitHub Copilot, Microsoft 365 Copilot, Copilot Studio, and Azure AI Foundry, the new deal turns Microsoft into both a flagship channel and a toll collector in a broader, multi‑cloud GPT economy.

How multi‑cloud GPT access reshapes enterprise AI strategy and competition

Multi‑cloud GPT access changes the calculus for enterprise AI strategy. Organisations can increasingly standardise on GPT‑5.5 as a core capability while deploying it on their preferred infrastructure (Azure, AWS, or Google Cloud) alongside other models. That reduces vendor lock‑in and makes hybrid strategies more feasible: a bank might run GPT‑5.5 for document workflows, Claude for deep code‑base refactoring, where it leads on SWE‑Bench Pro, and Gemini for specific integrations, all governed under one policy framework. Yet the flexibility introduces new complexity: CIOs must harmonise security baselines, cost controls, and model evaluation across clouds and vendors. Benchmark splits between GPT‑5.5 and Claude underscore that no single model dominates every workload, pushing enterprises toward portfolio thinking rather than one‑vendor bets. For OpenAI and Microsoft, the upside is clear: GPT becomes a de facto standard layer, while rivals are forced to compete on specialisation, safety posture, and deployment options instead of pure access.

Regulatory pressure and the next phase of AI platform governance

The shift from exclusivity to a looser Microsoft‑OpenAI deal also reflects mounting regulatory scrutiny of alliances between hyperscalers and model providers. Concentrating frontier AI capabilities behind a single cloud wall invites antitrust concerns and worries over data concentration. By opening GPT access to multiple clouds while maintaining a first‑on‑Azure launch pattern, Microsoft and OpenAI can argue that competition and customer choice are expanding, not shrinking. At the same time, GPT‑5.5’s stronger safeguards and OpenAI’s testing with nearly 200 early‑access partners in sensitive sectors like finance and drug discovery speak to a parallel pressure: regulators want to see concrete controls on bio and cyber misuse as models grow more agentic. In a multi‑cloud world, compliance teams must now track cross‑border data flows, API usage, and model behavior across several providers. The emerging question for regulators is less which model wins, and more how this distributed but tightly interlinked AI stack is governed.