From Niche Gadget to Mass-Market Camera on Your Face

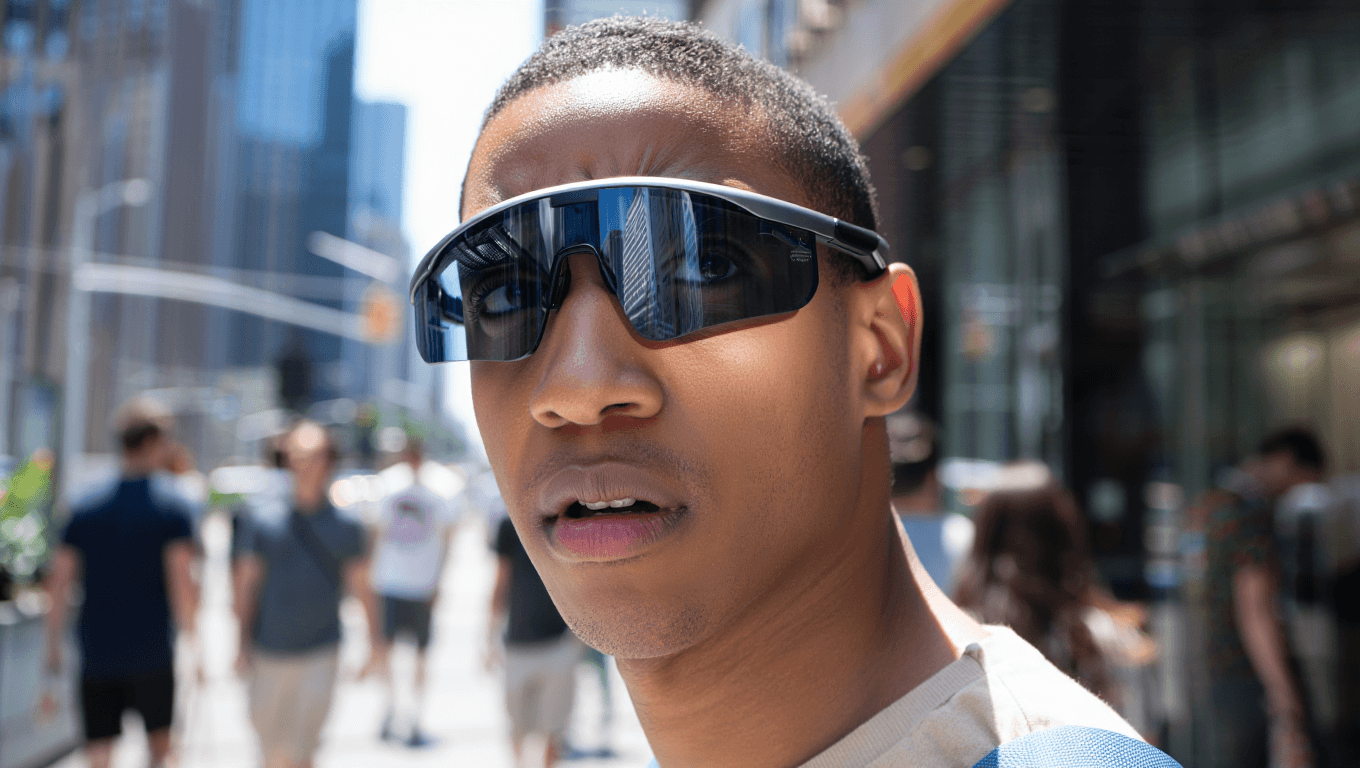

Smart glasses have leapt from experiment to everyday accessory, and no brand illustrates this more than Meta’s Ray‑Ban line. The company has shipped more than seven million pairs, giving it over 80% of the global AI eyewear market and making the glasses one of the fastest‑growing consumer electronics products on record. Their appeal lies in subtlety: camera lenses blend into familiar Wayfarer‑style frames, while open‑ear speakers and an AI assistant enable hands‑free photos, video, calls and prompts with a tap. Yet that same subtlety fuels concern. To bystanders, these devices look like ordinary eyewear; the small recording light is easily missed, especially in bright conditions. As Meta, Snap, Samsung and others push new models into stores, cameras are effectively “hiding in plain sight” in gyms, offices and public spaces, setting up a clash between convenience and expectations of privacy.

Covert Recording Becomes a Feature, Not a Fringe Case

The biggest smart glasses privacy risks stem from how easy it has become to film people without their knowledge. Early adopters use camera‑equipped frames to capture everyday moments, but others exploit their near‑invisibility for harassment and clout chasing. Reports describe women being approached by wearers who secretly film reactions to provocative questions, then upload the footage to social platforms where it can go viral before targets even realise they were recorded. Because photography in public spaces is often broadly lawful, victims frequently have limited legal recourse and must rely on voluntary takedowns, which are sometimes crudely treated as a “paid service.” As prices fall and more brands introduce consumer‑friendly designs, covert recording glasses are slipping into mainstream use. This normalisation raises the stakes for venues, employers and platforms that must decide whether to treat these devices like smartphones, banned cameras, or something entirely new.

Facial Recognition Smart Glasses: Consent on the Brink

The next wave of devices is poised to add facial recognition, turning passive recording into active identification. Reporting around Meta’s roadmap suggests forthcoming Ray‑Ban models could recognise people in real time, while leaks from other manufacturers emphasise AI‑heavy features layered onto familiar frames. Combined with constant video capture, these capabilities raise sharp questions: whose face data is being collected, how is it stored, and can individuals meaningfully opt out in public spaces? Biometric inferences—who you meet, where you go, what you react to—could be derived quietly from everyday interactions. Civil liberties advocates warn that, without clear smart glasses regulations, consent will become effectively impossible, especially in crowded environments where dozens of lenses may be scanning at once. What looks like a stylish accessory risks becoming a wearable surveillance node, blurring the line between personal convenience and systemic tracking.

BBC Investigation Exposes Holes in Today’s Safeguards

A recent BBC investigation underscores how early consumer models ship with thin guardrails. The report highlights how recording indicators are easy to miss and how users can upload clips of unsuspecting people with little friction. Behind the scenes, content moderators reviewing footage for AI training describe being exposed to highly sensitive material, including private acts that wearers did not realise had been captured or would later be shared for human review. Some buyers said they were unaware their recordings might be used this way, despite disclosures buried in the terms of service. This mismatch between technical capability, user understanding and bystander expectations intensifies public criticism. The BBC’s description of smart glasses as “an invasion of privacy” has become a rallying line, forcing regulators, workplaces and venues to reconsider whether existing camera and CCTV norms are sufficient when recording is so portable, ambient and opaque.

Regulators Scramble as 2026’s AR Push Outruns the Rulebook

Meta, Snap, Samsung and other firms are treating 2026 as the year smart glasses move from demos to real deployment. Samsung’s leaked Jinju prototype, for example, aims for familiar, lightweight frames, while other tech giants explore partnerships with fashion‑focused eyewear brands to accelerate mainstream adoption. This commercial momentum is forcing regulators to catch up quickly. Governments and watchdogs are only now beginning to debate bans in sensitive locations, new disclosure rules for recording, and limits on biometric processing in facial recognition smart glasses. Without clear policies, public spaces and workplaces may have to improvise by posting ad‑hoc notices, enforcing device‑off rules, or creating “no recording” zones. The risk is that norms will be set by whoever moves fastest, not by deliberation. Unless lawmakers close today’s regulatory gaps, the spread of everyday AR could settle by default into an era of normalised, poorly governed surveillance.