A New Stack of Realtime Voice Models

OpenAI is expanding its voice AI portfolio with three specialised realtime voice models aimed at developers building voice applications. GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper are all exposed through the GPT realtime API, giving teams a consistent way to handle live voice reasoning, translation, and transcription within a single platform. Rather than forcing one monolithic model to do everything, OpenAI has split the stack into distinct reasoning, translation, and speech-to-text tiers. This modular approach lets developers tune each workload independently, choosing where they need deep reasoning and where low latency or cost matters more. The models are positioned as infrastructure for live assistants, call flows, and tool-using voice agents that can keep speaking, manage interruptions, and stay in sync with users. As voice applications spread across mobile, automotive, and desktop experiences, this stack is meant to make voice a first-class interface for both consumer and enterprise workflows.

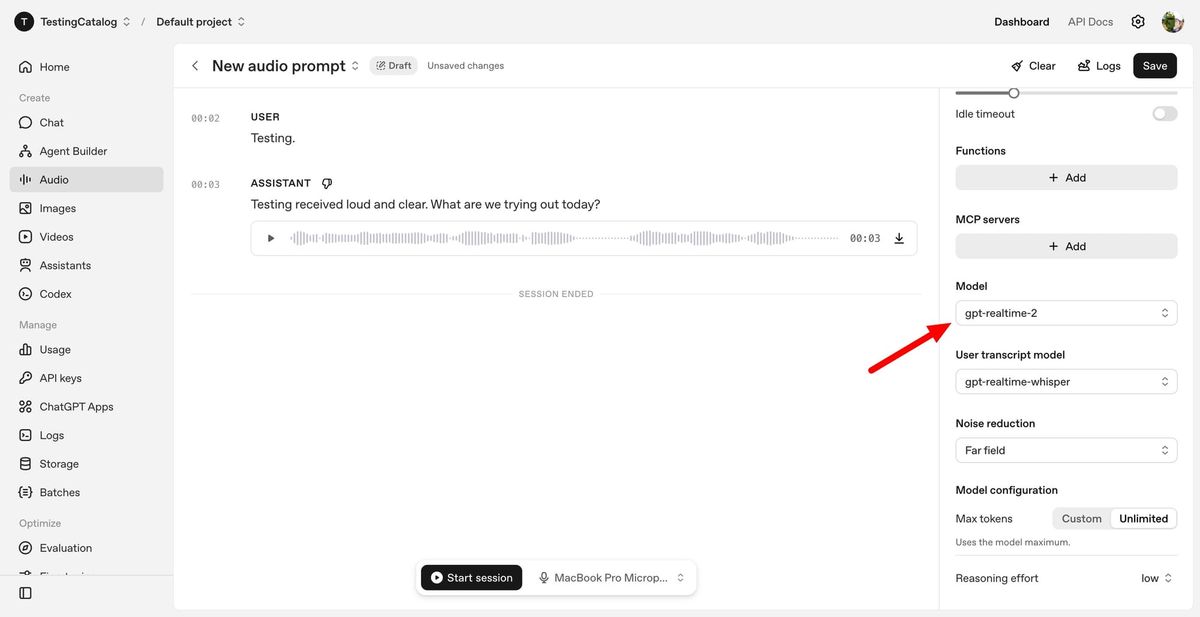

GPT-Realtime-2: GPT-5-Class Reasoning for Live Voice

GPT-Realtime-2 is the flagship reasoning model in OpenAI’s realtime voice lineup, bringing GPT-5-class reasoning to spoken conversations. Designed for live voice reasoning, it can track long, complex dialogues using a 128K-token context window, a jump from earlier 32K limits. The model is built to handle interruptions, topic shifts, and user corrections without collapsing into rigid call-and-response patterns. It supports parallel tool calls, allowing a voice agent to say short preambles like “let me check that” while it executes actions in the background. Developers can control reasoning effort from minimal to xhigh, trading latency for depth when tasks demand more deliberate thinking. OpenAI reports notable gains over GPT-Realtime-1.5 in audio intelligence and instruction following, with better recovery when tasks fail and clearer verbal feedback instead of silent errors. For developers, this reduces the orchestration overhead of keeping complex, tool-rich conversations coherent over time.
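The preamble-plus-parallel-tools pattern described above can be illustrated without any SDK. The following is a minimal sketch using only Python's `asyncio`; it does not call the GPT realtime API, and the tool functions (`check_order_status`, `check_refund_policy`) and the `handle_turn` helper are hypothetical names invented for illustration. It shows the ordering idea only: start the tool calls concurrently, speak a short preamble while they run, then report the results.

```python
import asyncio


async def check_order_status(order_id: str) -> str:
    # Hypothetical tool: stands in for a real backend lookup.
    await asyncio.sleep(0.02)
    return f"order {order_id}: shipped"


async def check_refund_policy(region: str) -> str:
    # Hypothetical tool: stands in for a policy service call.
    await asyncio.sleep(0.01)
    return f"refunds in {region}: 30 days"


async def handle_turn(spoken: list[str]) -> list[str]:
    # Start both tool calls concurrently so neither waits on the other.
    tasks = [
        asyncio.create_task(check_order_status("A123")),
        asyncio.create_task(check_refund_policy("EU")),
    ]
    # Speak a short preamble while the tools are still running.
    spoken.append("Let me check that.")
    # gather() returns results in the order the tasks were passed in.
    results = await asyncio.gather(*tasks)
    spoken.extend(results)
    return spoken


def run_demo() -> list[str]:
    return asyncio.run(handle_turn([]))
```

In a real voice agent the `spoken` list would be replaced by audio output, and the model itself would decide when to emit the preamble; the sketch only demonstrates why concurrent tool execution keeps the conversation from going silent.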

GPT-Realtime-Translate: Instant Multilingual Voice Translation

GPT-Realtime-Translate targets live multilingual scenarios where speed and comprehension must coexist. The model accepts speech in more than 70 input languages and produces spoken output in 13 languages while keeping pace with the speaker. It is engineered to cope with regional accents, rapid context shifts, and domain-specific vocabulary, making it suitable for customer support lines, cross-border sales calls, educational sessions, events, and media localization. Developers can pair translation with realtime transcription, enabling experiences where users see captions and hear translated audio simultaneously. Because GPT-Realtime-Translate is a separate tier in the GPT realtime API, teams can reserve heavy reasoning for GPT-Realtime-2 while leaning on this model purely for low-latency translation. Early experiments from telecom and media platforms suggest growing demand for voice applications that bridge languages in real time, turning previously text-bound workflows into fluid, conversational interactions.

GPT-Realtime-Whisper: Low-Latency Transcription for Live Workflows

GPT-Realtime-Whisper rounds out the stack as OpenAI’s streaming speech-to-text model for applications that need accurate transcription while conversations are still unfolding. It delivers continuous, low-latency recognition, making it well suited to live captions, meeting notes, assistive interfaces, and voice-driven workflows where text must update as people speak. By keeping transcription separate from reasoning and translation, OpenAI lets developers plug this model into existing pipelines without paying for capabilities they do not need. Voice-to-action, systems-to-voice, and voice-to-voice patterns all benefit: a meeting assistant can capture decisions in real time, a CRM system can log calls automatically, and a helpdesk agent can receive live transcripts for auditing or training. Because GPT-Realtime-Whisper sits in the same realtime framework as GPT-Realtime-2 and GPT-Realtime-Translate, teams can compose transcription, translation, and reasoning in different combinations, tailoring latency and complexity to each step of their voice application.
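To make the "text must update as people speak" idea concrete, here is a minimal sketch of a live-caption buffer. It assumes a hypothetical event shape (`{"type": "partial" | "final", "text": ...}`) that is not taken from the GPT realtime API; the actual event format may differ. The `LiveCaption` class is likewise an illustrative name. Partial results overwrite the in-progress text, while final results are committed and clear it.

```python
from dataclasses import dataclass, field


@dataclass
class LiveCaption:
    finalized: list[str] = field(default_factory=list)  # committed segments
    partial: str = ""  # in-progress text for the current utterance

    def apply(self, event: dict) -> str:
        # Hypothetical event shape: {"type": "partial" | "final", "text": str}
        if event["type"] == "partial":
            # A newer partial replaces the previous guess for this utterance.
            self.partial = event["text"]
        elif event["type"] == "final":
            # A final result is committed and the in-progress text is cleared.
            self.finalized.append(event["text"])
            self.partial = ""
        else:
            raise ValueError(f"unknown event type: {event['type']}")
        return self.render()

    def render(self) -> str:
        # Show committed segments followed by any in-progress text.
        parts = self.finalized + ([self.partial] if self.partial else [])
        return " ".join(parts)
```

A caption widget or meeting-notes tool could call `apply` on each incoming event and redraw with the returned string, so viewers see text settle as each utterance is finalized.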

Why Modular Realtime Voice Matters for Developers

The split between reasoning, translation, and transcription is more than a product naming exercise; it reflects how enterprise and consumer voice systems are evolving. Long-running calls, tool hops, and context switches have historically forced teams to maintain elaborate state management layers just to keep conversations coherent. With GPT-Realtime-2 handling live voice reasoning and turn management, GPT-Realtime-Translate providing dedicated multilingual translation, and GPT-Realtime-Whisper delivering focused transcription, developers can push more orchestration into the model layer itself. This modular design lets them dial up reasoning only when a workflow truly needs it, while keeping simpler turns fast and inexpensive. As voice interfaces expand into dashboards, phones, and desktops, the GPT realtime API gives builders a flexible foundation for assistants that listen, think, and respond in real time. The result is a new generation of voice applications that move beyond sounding human to actually getting work done while people talk.
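One way to picture "dialing up reasoning only when a workflow needs it" is a small routing function that picks a tier per task. This is a sketch under stated assumptions: the model identifier strings are guesses derived from the product names in this article, the `Route` type and `choose_route` function are hypothetical, and only the two reasoning-effort endpoints named above (`minimal` and `xhigh`) are used because any intermediate levels are not specified here.

```python
from dataclasses import dataclass
from typing import Optional

# Identifier strings are assumptions based on the product names in the article.
REASONING = "gpt-realtime-2"
TRANSLATE = "gpt-realtime-translate"
TRANSCRIBE = "gpt-realtime-whisper"


@dataclass(frozen=True)
class Route:
    model: str
    reasoning_effort: Optional[str]  # only set for the reasoning tier


def choose_route(task: str, needs_deep_reasoning: bool = False) -> Route:
    # Transcription and translation go to their dedicated tiers,
    # so simple turns never pay for reasoning they do not need.
    if task == "transcribe":
        return Route(TRANSCRIBE, None)
    if task == "translate":
        return Route(TRANSLATE, None)
    if task == "converse":
        # Trade latency for depth only when the workflow asks for it.
        effort = "xhigh" if needs_deep_reasoning else "minimal"
        return Route(REASONING, effort)
    raise ValueError(f"unknown task: {task}")
```

For example, `choose_route("converse", needs_deep_reasoning=True)` selects the reasoning tier at the highest effort, while `choose_route("transcribe")` stays on the speech-to-text tier with no reasoning setting at all.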