From Chat Windows to Working Agents

Google’s AI strategy is moving beyond simple question-and-answer chatbots toward autonomous AI agents that act on your behalf. Gemini started as a rebranded successor to Bard, focused on conversational responses and general-purpose language understanding. With Gemini Intelligence, Google is explicitly framing its assistant as “agentic,” emphasizing autonomous task execution rather than just dialogue. The system can already perform actions like ordering rides or takeout, but the new capabilities aim to go deeper into everyday workflows, from managing bookings to handling forms. This transition mirrors a broader industry shift toward agentic AI systems designed to operate independently within defined boundaries. Instead of waiting for prompts, these agents are meant to anticipate needs, act across apps and services, and stitch together complex tasks. The result is an assistant model that feels less like a chat companion and more like a digital co-worker embedded in your devices.

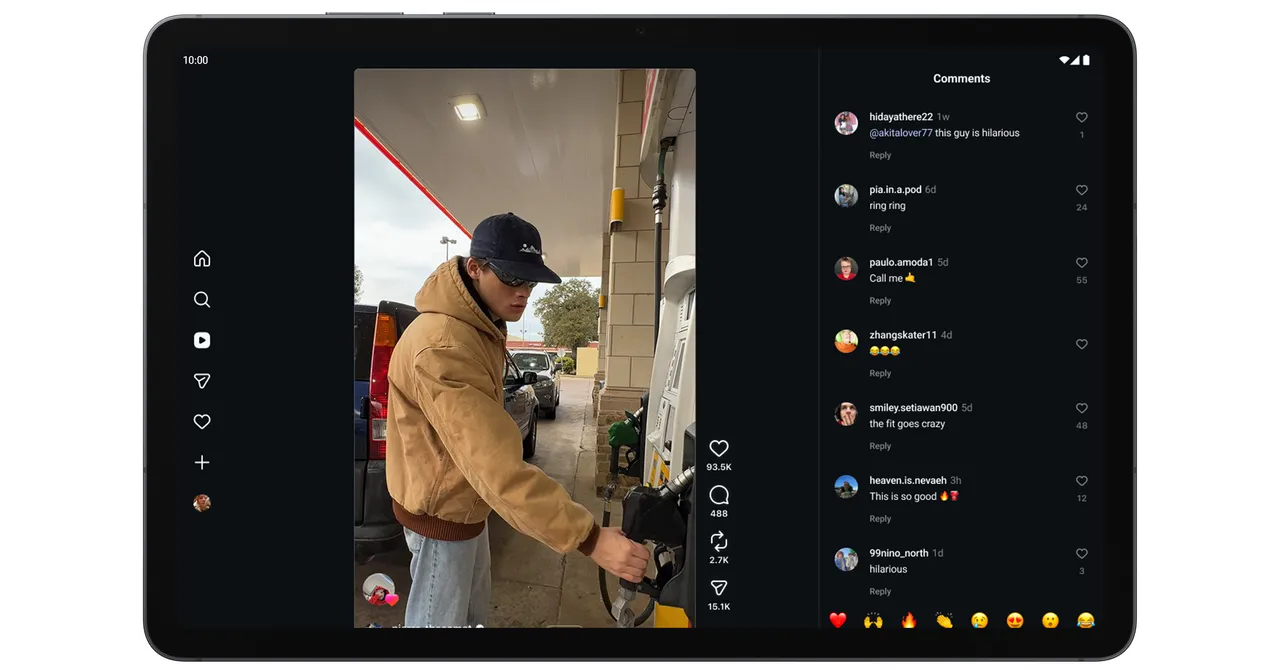

Gemini Intelligence on Android: Automation Built In

On Android 17, Gemini Intelligence features are turning the operating system into a platform for AI automation. Google highlights new agentic abilities that go far beyond traditional assistant shortcuts. Gemini can, for example, scan your Gmail for a class syllabus and then order the required books automatically, or reserve a front-row spot in your spin class without manual copy-and-paste. It can also act on image context: you might open a grocery list in your notes app and ask Gemini to add every item to an online cart, or snap a travel brochure and have it find a matching tour for your group. An upgraded autofill experience taps into Personal Intelligence to populate secure details, such as passport information, directly into travel forms via an explicit “Passport” button. These flows are strictly opt-in and can be disabled, but they illustrate how Android is becoming a playground for autonomous task execution rather than just smarter typing and search.

Remy: A 24/7 Personal Agent Inside Gemini

While Android showcases agentic behavior on devices, Google is also testing deeper autonomy in Gemini through an internal project called Remy. Described as a “24/7 personal agent,” Remy is being dogfooded by Google employees inside a staff-only version of the Gemini app. Unlike simple connected-app shortcuts, Remy is designed to integrate across Google services, monitor what’s most relevant, and handle complex tasks while learning user preferences over time. That means it could eventually coordinate calendar events, manage messages, or manipulate documents with minimal prompting. Details remain sparse: Google hasn’t disclosed Remy’s underlying model, how far its autonomy extends, or whether it can act without explicit confirmation. However, its design aligns with the emerging vision of autonomous AI agents that live inside productivity suites, constantly running in the background and orchestrating work across Gmail, Drive, Calendar, and other connected tools on behalf of users.

User Control, Privacy, and Personalized Proactivity

More autonomy inevitably raises questions about control and trust. Google is emphasizing that AI agents like Gemini Intelligence and Remy will operate within guardrails defined by users and policy. Gemini’s Privacy Hub is positioned as the central place to manage how agents interact with connected apps, what data they can access, and how long activity logs are retained. Users can review and delete Gemini Apps Activity, adjust auto-delete settings, and decide whether their data is used to improve Google’s AI. Google’s research and cloud guidance stress principles like well-defined human controllers, limited powers, observable actions, and transparency through logging. At the same time, features such as Remy’s preference learning and Gemini’s Personal Intelligence rely on persistent memory to deliver more personalized, proactive experiences. This tension between convenience and control will define how people experience next-generation assistants: always-on agents that feel tailored to them, yet remain constrained enough to respect boundaries and risk tolerance.

The Future of Assistants: Less Talking, More Doing

Taken together, Gemini Intelligence on Android and Remy inside the Gemini app signal a fundamental shift in how we’ll interact with AI assistants. The focus is moving from conversational flair to practical execution: agents that can fill out travel forms, polish dictation via features like Rambler, build custom widgets, or quietly coordinate tasks in the background. Instead of repeatedly asking for help, users may increasingly set goals, define constraints, and then let agents run within those boundaries. This aligns with a broader industry move toward agentic AI systems that are transparent, auditable, and limited by design, but still capable of complex, multi-step actions. For everyday users, the big change is psychological as much as technical: trusting software not just to suggest what to do, but to actually do it. As Google doubles down on AI agents that learn preferences and act autonomously, the smartphone may evolve into a hub for orchestrated, continuous assistance rather than occasional queries.