From WebXR Framework to No-Code VR Toolkit

Meta’s Immersive Web SDK (IWSDK) began as an open-source framework for simplifying WebXR development, handling essentials such as physics, hand tracking, movement, grab interactions, and spatial UI. The goal was clear: let creators focus on ideas rather than low-level engineering. The latest update pushes this vision further by weaving AI directly into the development pipeline. Rather than remaining a set of JavaScript utilities, IWSDK is becoming a full-stack environment for AI-assisted VR creation on the web. Experiences built with IWSDK run directly in the browser, so they can be tested and shared instantly via URL on desktop and on VR headsets. For many creators, this removes traditional hurdles such as long compile times, app store approvals, and complex deployment pipelines, making WebXR development feel more like publishing a web page than shipping a native app.

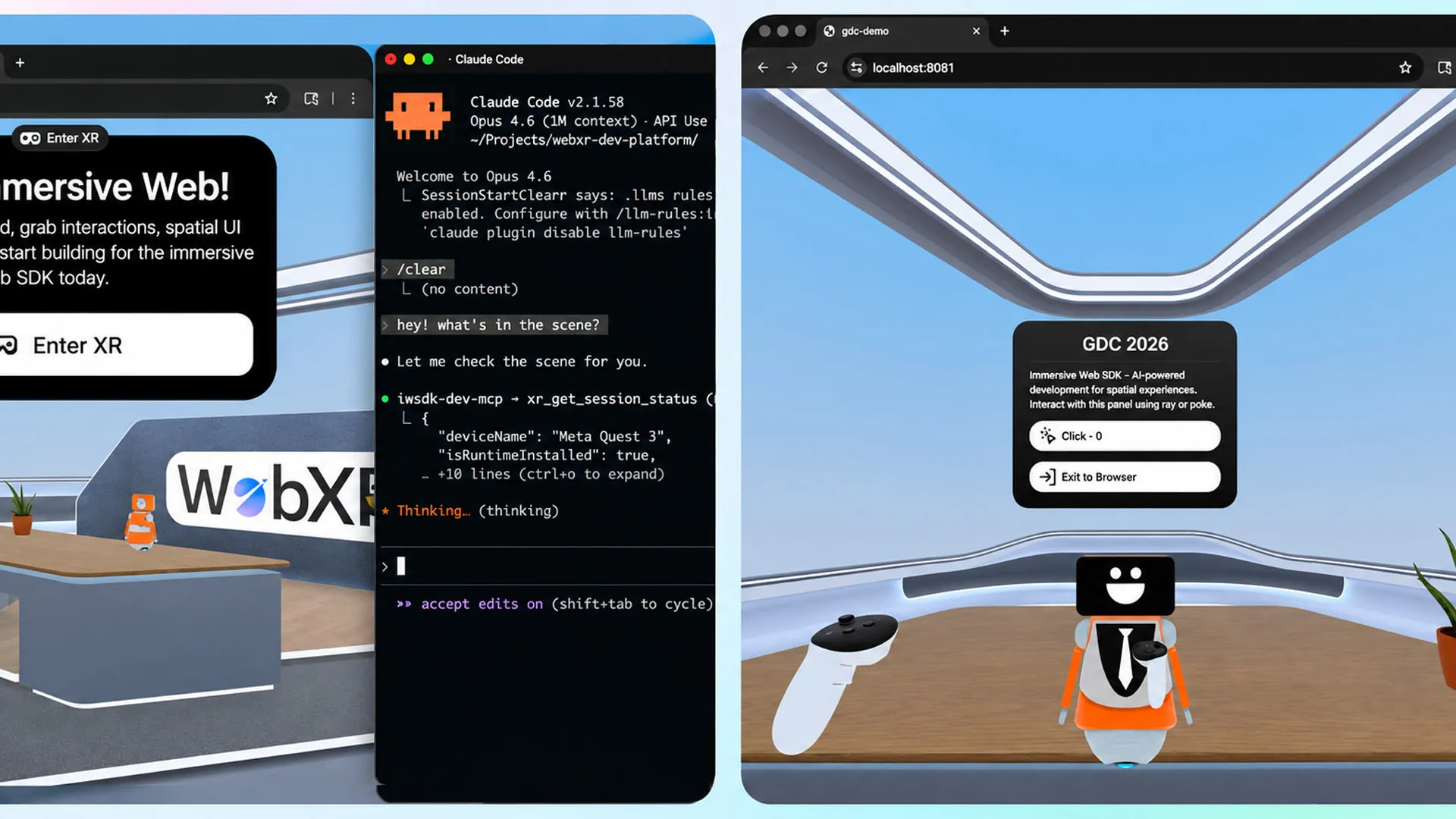

What Agentic AI Workflows Actually Do

The defining upgrade in IWSDK is its “agentic workflow,” an approach that treats AI as more than a code generator. IWSDK now integrates with AI coding assistants such as Claude Code, Cursor, GitHub Copilot, and Codex. These agents don’t just output snippets; they generate, test, and validate code iteratively in a closed loop. In practice, the AI can build entire interaction systems, such as grabbing objects, handling collisions, or setting up spatial menus, and then immediately run and refine them. Meta emphasizes that this workflow is designed for full, interactive VR experiences, not just boilerplate. For WebXR development, the result is a feedback loop in which creators describe what they want, see it running in the browser, and let the AI adjust behavior based on the results, all without manually editing code.
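The closed loop described above can be illustrated with a minimal TypeScript sketch. Everything in it is an assumption made for illustration: `agenticLoop`, `Generate`, `Validate`, and the toy stand-ins are hypothetical names, not part of IWSDK or any AI assistant's API, and a real agent would run asynchronously against a live browser session rather than the synchronous string checks used here.

```typescript
// Hypothetical sketch of a generate-test-refine loop. None of these names
// come from IWSDK or any AI assistant's API; they are illustrative only.

interface TestResult {
  passed: boolean;
  feedback: string; // description of what failed, fed into the next attempt
}

// Stand-in for an AI coding agent: receives the goal plus earlier feedback, returns code.
type Generate = (goal: string, feedback: string[]) => string;

// Stand-in for running the candidate code and checking its behavior.
type Validate = (code: string) => TestResult;

interface LoopOutcome {
  code: string | null; // null when no attempt passed validation
  attempts: number;
  feedback: string[];
}

function agenticLoop(
  goal: string,
  generate: Generate,
  validate: Validate,
  maxAttempts = 5,
): LoopOutcome {
  const feedback: string[] = [];
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const code = generate(goal, feedback); // generate
    const result = validate(code);         // test and validate
    if (result.passed) {
      return { code, attempts: attempt, feedback };
    }
    feedback.push(result.feedback);        // refine on the next pass
  }
  return { code: null, attempts: maxAttempts, feedback };
}

// Toy stand-ins so the sketch runs on its own (assumed behavior, not a real agent).
const toyGenerate: Generate = (goal, feedback) =>
  feedback.includes("missing collision handling")
    ? `// ${goal}\nenableGrab(); enableCollisions();`
    : `// ${goal}\nenableGrab();`;

const toyValidate: Validate = (code) =>
  code.includes("enableCollisions")
    ? { passed: true, feedback: "" }
    : { passed: false, feedback: "missing collision handling" };

const outcome = agenticLoop(
  "grabbable cube that collides with the floor",
  toyGenerate,
  toyValidate,
);
console.log(outcome.attempts, outcome.feedback);
```

With these toy stand-ins, the first attempt fails validation, its feedback is passed to the generator, and the second attempt passes. The attempt cap is a design choice of the sketch: it keeps a loop that never converges from running indefinitely.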

Rebuilding a Complex VR Demo in Hours, Not Weeks

To demonstrate what agentic AI workflows can achieve, Meta revisited its earlier VR gardening demo, Project Flowerbed. The original 2022 version reportedly comprised tens of thousands of lines of custom code, reflecting the complexity typical of high-fidelity WebXR experiences. Using IWSDK’s new AI workflow and reusing the existing art assets, the team recreated the entire application in just 15 hours. Meta stresses that this isn’t a case of fixing typos or auto-generating generic scaffolding; it’s a complete, interactive VR experience rebuilt by AI. For developers, this signals a shift in how no-code VR tools might operate: instead of relying on rigid templates, AI-driven agents can assemble and refine bespoke logic on demand. As more complex demos are reconstructed this way, best practices for AI-assisted VR creation may emerge, accelerating iteration and reducing technical risk.

Democratizing VR Creation for Non-Technical Creators

The biggest implication of Meta’s update is who can now participate in WebXR development. By offloading most programming tasks to agentic AI workflows, IWSDK lowers the barrier for designers, artists, educators, and brands with little coding background. They can describe scenes, interactions, or learning scenarios in natural language, then rely on AI-generated code and the underlying SDK to handle implementation details. Because experiences are delivered directly through URLs, creators can distribute prototypes and finished projects without navigating app store ecosystems. Meta notes that more than one million monthly users already access WebXR content on Quest, suggesting an audience large enough to make web-based XR commercially and creatively attractive. As no-code VR tools mature around IWSDK, the ecosystem may see a surge of lightweight, experimental experiences, as well as closer collaboration between technical and non-technical teams.

The Future of Web-Based XR: AI-Native Workflows

Meta’s AI-infused Immersive Web SDK hints at a future where WebXR development is inherently AI-native. Instead of treating AI as an optional helper, IWSDK embeds it into the core workflow, from scaffolding projects to iteratively testing behavior in the browser. This could reshape the skill set required for VR creation: prompt crafting, UX vision, and content strategy may become more important than writing low-level code. It also aligns with the strengths of web distribution, where instant testing and frictionless sharing favor rapid, AI-driven iteration. As more open-source contributions flow into the SDK’s MIT-licensed codebase, third-party tools may extend these agentic workflows by integrating asset pipelines, analytics, or multi-user features. If successful, Meta’s approach could redefine expectations for no-code VR tools, making AI-assisted VR creation a default path rather than an experimental shortcut.