Inside the Anthropic–SpaceX Colossus 1 Deal

Anthropic’s new agreement with SpaceX gives the Claude maker a powerful boost in AI data center infrastructure. The company has signed on to use all available compute at SpaceX’s Colossus 1 facility in Memphis, a site that now hosts more than 220,000 Nvidia GPUs and draws on over 300 megawatts of power capacity. Colossus 1 has more than doubled its original scale, with dense deployments of H100, H200, and next‑generation GB200 accelerators, turning it into a flagship hub for LLM infrastructure expansion. For Anthropic, the move offers a fast route to significantly more Claude compute capacity, complementing existing arrangements with cloud partners like Amazon and Google/Broadcom. Crucially, Anthropic isn’t waiting for future build‑outs: Colossus 1 is live, meaning the firm can tap its GPUs immediately to relieve current demand pressure and prepare for the next generation of Claude models.

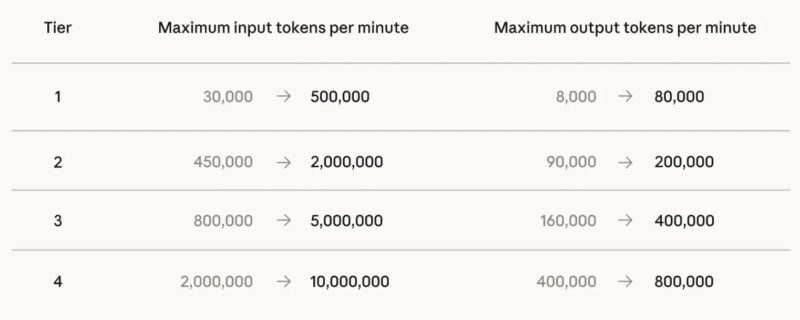

From Rate Limits to Reliability: What Users Will Notice

Anthropic’s SpaceX Colossus 1 deal is already translating into more generous usage policies for Claude users. At the Code with Claude developer event, chief product officer Ami Vora announced that rate limits on Claude Code and the Claude Platform are being raised. Specifically, Claude Code’s five‑hour limits are doubling for Pro, Max, Team, and seat‑based enterprise plans, while API limits for Claude Opus are being increased substantially. Anthropic is also ending its peak‑hour limit reductions on Claude Code for Pro and Max accounts, a change made possible by the expanded inference capacity. This is a direct response to recent strain: users had reported errors, strict rate caps, and perceived drops in quality as Claude Opus 4.7 adoption surged. With more GPUs online, Anthropic can better align model improvements with dependable availability, reducing friction for developers who now spend roughly 20 hours a week working inside Claude Code.

How Colossus 1 Changes Claude’s Performance Profile

Access to an entire large‑scale data center reshapes how Anthropic can operate Claude behind the scenes. Colossus 1’s 220,000‑plus GPUs effectively widen the “pipe” for both inference and experimentation, giving Anthropic more room to run heavy, long‑running jobs without choking user‑facing workloads. In practice, this should enable faster responses, higher concurrency, and more resilient handling of traffic spikes on Claude’s paid tiers. It also supports complex new capabilities such as multi‑agent orchestration, outcomes tracking, and the Dreaming feature, which lets Claude iterate on its own past sessions. While raw model benchmarks for Opus 4.7 show incremental gains over 4.6, the infrastructure leap is anything but incremental: it allows Anthropic to turn those modest capability improvements into a more consistent experience. The real test will be whether developers notice fewer errors and steadier throughput as Anthropic gradually shifts more of Claude’s traffic onto the Colossus 1 cluster.

Beating Grok to the Rack: Competitive Stakes with xAI

The Memphis‑based Colossus 1 facility is more than just another data center; it is precisely the kind of capacity xAI’s Grok would have wanted close at hand. By leasing the full Colossus 1 compute stack, Anthropic has effectively seized a strategic asset in the race for AI data center infrastructure. SpaceX turns idle or underused capacity into revenue ahead of a potential IPO, while Anthropic gains a shortcut around near‑term bottlenecks just as demand for Claude Pro and Claude Max surges. For xAI, the optics are tougher: Grok must now pursue alternative capacity while a rival LLM taps directly into a SpaceX‑run cluster. In a landscape where GPUs, power budgets, and latency increasingly define competitive advantage, Anthropic’s move signals that infrastructure deals can be as decisive as model architectures in determining which assistant feels faster, more available, and more trustworthy to end users.

Why Data Center Scale Is Now a Core LLM Differentiator

Anthropic’s Colossus 1 lease underscores a broad shift in how large language model providers compete. Model releases still capture headlines, but sustained performance now hinges on how much scalable, high‑density compute an AI lab can command. As Anthropic’s experience shows, even a strong model like Claude Opus 4.7 can frustrate users if demand outpaces infrastructure. Year‑over‑year API volume growth near 17x has pushed AI providers to seek ever larger clusters, including Anthropic’s expressed interest in future orbital AI compute projects with SpaceX. Data center capacity is becoming a gating factor for new features such as long‑running agents and self‑learning systems like Dreaming, which demand continuous, resource‑intensive processing. For enterprises choosing between Claude, Grok, and other rivals, questions about GPUs, megawatts, and redundancy are increasingly tied to practical concerns: Will this assistant be online, responsive, and affordable when we need it most?