From Passive Chatbots to Goal-Driven AI Agents

AI agents are rapidly evolving from text-only assistants into systems that can plan, act, and deliver outcomes. Instead of waiting for a prompt and replying with a paragraph, new autonomous AI systems are being designed to take continuous steps toward a user’s goal: creating an image, running a shopping search, or generating and deploying code, all without the user micromanaging each move. This shift changes what people expect from AI. The focus is no longer on clever conversation but on finished work products and multi-step workflows. Companies are experimenting with agents that stay active in the background, monitor tools, and adapt to feedback. In practice, that turns AI agents into engines for autonomous tasks, connecting models, apps, and data into something closer to an always-on digital worker than a glorified search box.
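To make the plan, act, observe cycle concrete, here is a minimal Python sketch of a goal-driven agent loop. Everything in it is hypothetical (`run_agent`, `plan_next_step`, and the toy tools are illustrative names, not any product's API), and it does not describe how Hatch or any other agent is actually built. The planner simply walks a fixed list of steps; a real agent would instead ask a model to choose the next tool call from the goal and the history of earlier results, which is where adapting to feedback happens.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical tool signature: a tool takes a text input and returns a text result.
Tool = Callable[[str], str]

@dataclass
class AgentState:
    goal: str
    # Each entry records (tool name, tool input, tool output) for later steps to inspect.
    history: List[Tuple[str, str, str]] = field(default_factory=list)
    done: bool = False

def plan_next_step(state: AgentState, plan: List[Tuple[str, str]]) -> Optional[Tuple[str, str]]:
    """Hypothetical planner that walks a fixed plan. A real agent would ask a
    model to pick the next (tool, input) pair from the goal and the history."""
    step = len(state.history)
    return plan[step] if step < len(plan) else None

def run_agent(goal: str, tools: Dict[str, Tool], plan: List[Tuple[str, str]],
              max_steps: int = 10) -> AgentState:
    state = AgentState(goal=goal)
    for _ in range(max_steps):  # step budget keeps a background agent bounded
        next_step = plan_next_step(state, plan)
        if next_step is None:  # planner has nothing left: treat the goal as met
            state.done = True
            break
        tool_name, tool_input = next_step
        output = tools[tool_name](tool_input)                   # act
        state.history.append((tool_name, tool_input, output))   # observe
    return state

# Usage with two toy tools standing in for real search and summarization services.
tools = {
    "search": lambda q: f"3 results for '{q}'",
    "summarize": lambda text: f"summary of: {text}",
}
plan = [("search", "running shoes under $100"), ("summarize", "top results")]
state = run_agent("find running shoes", tools, plan)
print(state.done, len(state.history))  # True 2
```

The step budget is the design choice worth noting: an agent that keeps working in the background needs a hard limit on how much it does before a person checks in.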

Meta’s Hatch Agent: Image Generation Meets Socially Grounded Automation

Meta’s upcoming Hatch agent illustrates how far consumer-facing agents are stretching. Hidden references in Meta’s codebase show that Hatch is being prepared behind a waitlist, hinting at a tightly controlled early rollout. Hatch is built to handle a wide range of autonomous tasks: image and video generation, shopping flows, learning sessions, research workloads, and even scheduled tasks with file generation. Crucially, Meta is weaving Hatch into Facebook and Instagram, so exploring feeds, discovering creators, or researching products can become agent-driven workflows rather than manual scrolling. Meta is also reportedly training Hatch in mock environments that mimic platforms such as Reddit and online marketplaces to sharpen its tool use. Initially backed by a mix of models, with Muse Spark expected to become its long-term backbone, Hatch signals Meta’s ambition to build agents that work quietly, day and night, to advance user goals rather than just chat on demand.

Hugging Face’s Agentic Toolkit: Plain English to Robot Actions

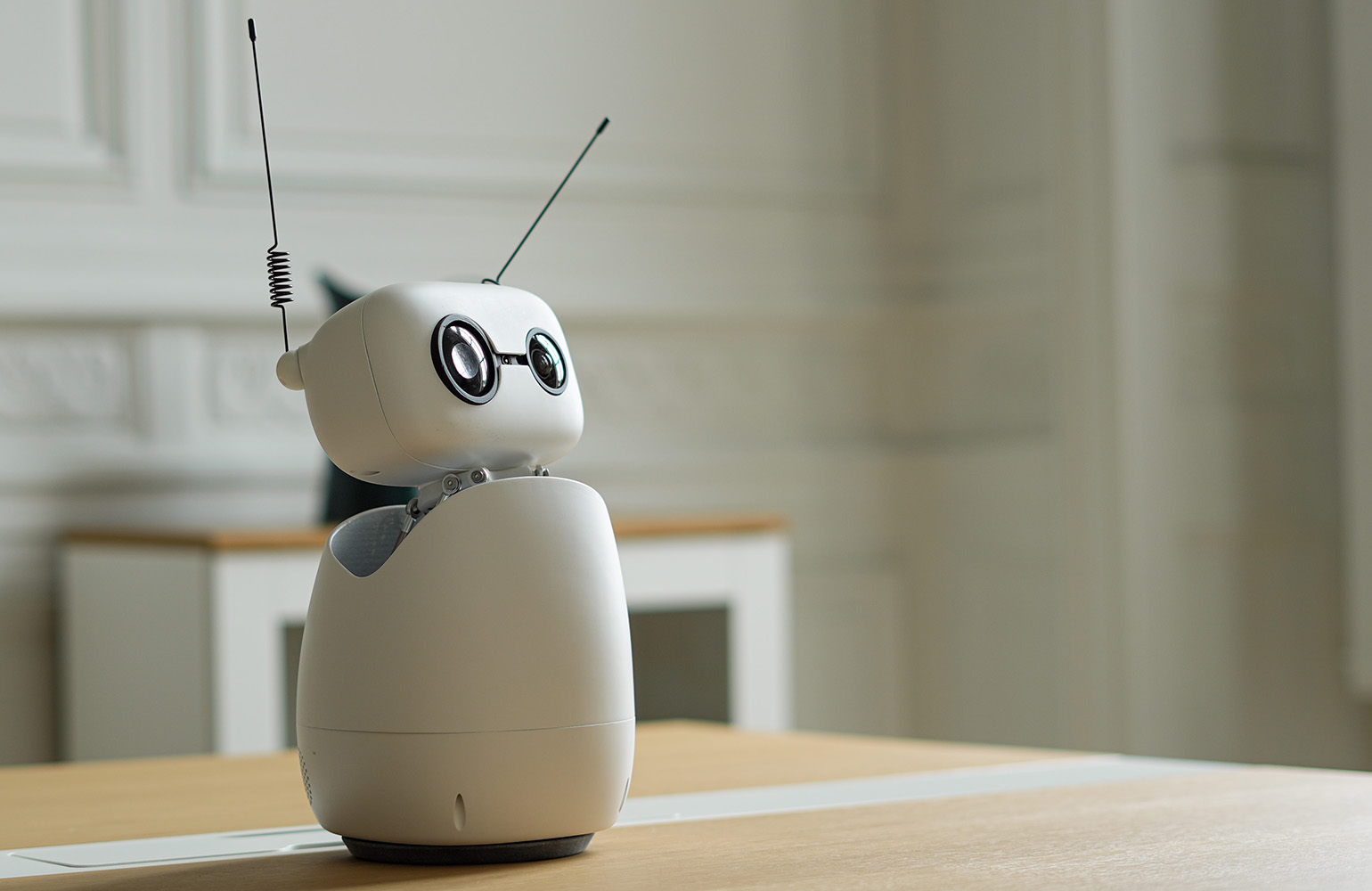

Hugging Face’s agentic toolkit for Reachy Mini shows what happens when AI code generation meets physical robots. Instead of writing programs, users describe the behavior they want in plain English, such as a helper that greets guests, answers questions, or teaches kids. An AI agent then writes, tests, and ships the code directly to the desktop robot, turning natural language into working software. This approach collapses three traditional barriers to robotics: specialized expertise, expensive bespoke hardware, and weeks of integration. Reachy Mini is a compact, open-source robot, and its apps are hosted on the Hugging Face Hub, where anyone can install, fork, or tweak them with a single click. Because every app also runs in a browser-based simulator, even people without the hardware can experiment. The result is a glimpse of autonomous AI systems that bridge text prompts and real-world motion, with no robotics background required.

From AI Code Generation to an App Ecosystem for Robots

The Reachy Mini ecosystem shows how AI code generation can fuel a thriving marketplace of autonomous behaviors. Users have already created more than 200 apps, ranging from a voice-controlled co-facilitator for CEO peer groups to a language tutor, an anti-procrastination assistant, and a chess companion that reacts emotionally to each move. In every case the underlying pattern is the same: a user describes a scenario, the agent writes and deploys the code, and the robot executes the behavior. The Hugging Face agentic toolkit turns what used to be a multi-week software project into a workflow that can be finished in under an hour. Because apps are searchable, forkable, and editable in natural language, the barrier to customizing robot behavior drops dramatically. It is a practical demonstration that AI agents performing autonomous tasks can extend beyond screens, powering physical interactions and shared, remixable experiences.
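The describe, write, test, ship pattern can be sketched as a small retry loop. This is a minimal Python illustration under loud assumptions: `build_app`, `simulate`, and `fake_generator` are hypothetical names, the code is not the Hugging Face toolkit's API, and it never talks to a Reachy Mini. The "simulator" here is only a stand-in that checks the generated source compiles and defines a callable `run`, and the generator is a fake whose first draft is deliberately broken.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class AppDraft:
    description: str
    source: str
    attempts: int

# Hypothetical stand-in for the code-writing model: takes the plain-English
# description, feedback from the last failed check (or None), and the attempt number.
CodeGenerator = Callable[[str, Optional[str], int], str]

def simulate(source: str) -> Optional[str]:
    """Hypothetical simulator check. Returns None on success, else an error message.
    It only confirms the source compiles and defines a callable `run`; a real system
    would sandbox execution and exercise the behavior in a robot simulator."""
    namespace: dict = {}
    try:
        exec(compile(source, "<app>", "exec"), namespace)
    except Exception as exc:
        return f"{type(exc).__name__}: {exc}"
    if not callable(namespace.get("run")):
        return "app must define a callable run()"
    return None

def build_app(description: str, generate: CodeGenerator,
              max_attempts: int = 3) -> Optional[AppDraft]:
    """Describe -> write -> test loop; returns a draft ready to ship, or None."""
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        source = generate(description, feedback, attempt)  # write
        feedback = simulate(source)                          # test
        if feedback is None:
            return AppDraft(description, source, attempt)   # ship (not modeled here)
    return None

# Usage: a fake generator whose first draft contains a syntax error.
def fake_generator(description: str, feedback: Optional[str], attempt: int) -> str:
    if attempt == 1:
        return "def run(:\n    pass\n"
    return "def run():\n    return 'Hello, welcome in!'\n"

draft = build_app("greet guests when they arrive", fake_generator)
print(draft.attempts)  # 2
```

Passing the failure message back to the generator is what lets an agent self-correct without asking the user, while the attempt cap keeps an unworkable description from looping forever.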

Plain English as the New Interface for Autonomous Workflows

What ties Meta’s Hatch and Hugging Face’s Reachy Mini together is a new interface paradigm: plain English as the control layer for complex workflows. In both cases, users set goals in everyday language, and the agent orchestrates tools, models, and services behind the scenes. For Hatch, that could mean running research workloads, generating media, and managing shopping flows autonomously inside social platforms. For Reachy Mini, it means using AI code generation to turn simple instructions into robot apps that run at a desk, in a classroom, or in an office. As these systems mature, agents look less like chat windows and more like orchestrators that chain many steps together without constant supervision. The result is a shift in how people interact with software: instead of clicking through menus or writing scripts, they describe what they want and let autonomous AI systems handle the rest.