From Ten Blue Links to Trusted AI Answers

For years, success in search meant climbing Google’s results page. Now the battleground is whether AI assistants mention a brand at all. Consumers increasingly ask systems like ChatGPT, Claude, Perplexity, Copilot, and Meta’s AI for direct recommendations on everything from software tools to skincare brands. Instead of scanning ten blue links, users receive a single, synthesized answer. That shift places immense power in how AI systems evaluate authority, relevance, and trustworthiness. Traditional SEO still matters, but it is no longer the sole gateway to discovery. Recommendation visibility, meaning being selected by an AI system as a credible answer, has become a new measure of a brand’s reach. As AI search platforms compete, they must differentiate not only on speed and accuracy, but also on how transparently they show their sources and how much users can trust their answers.

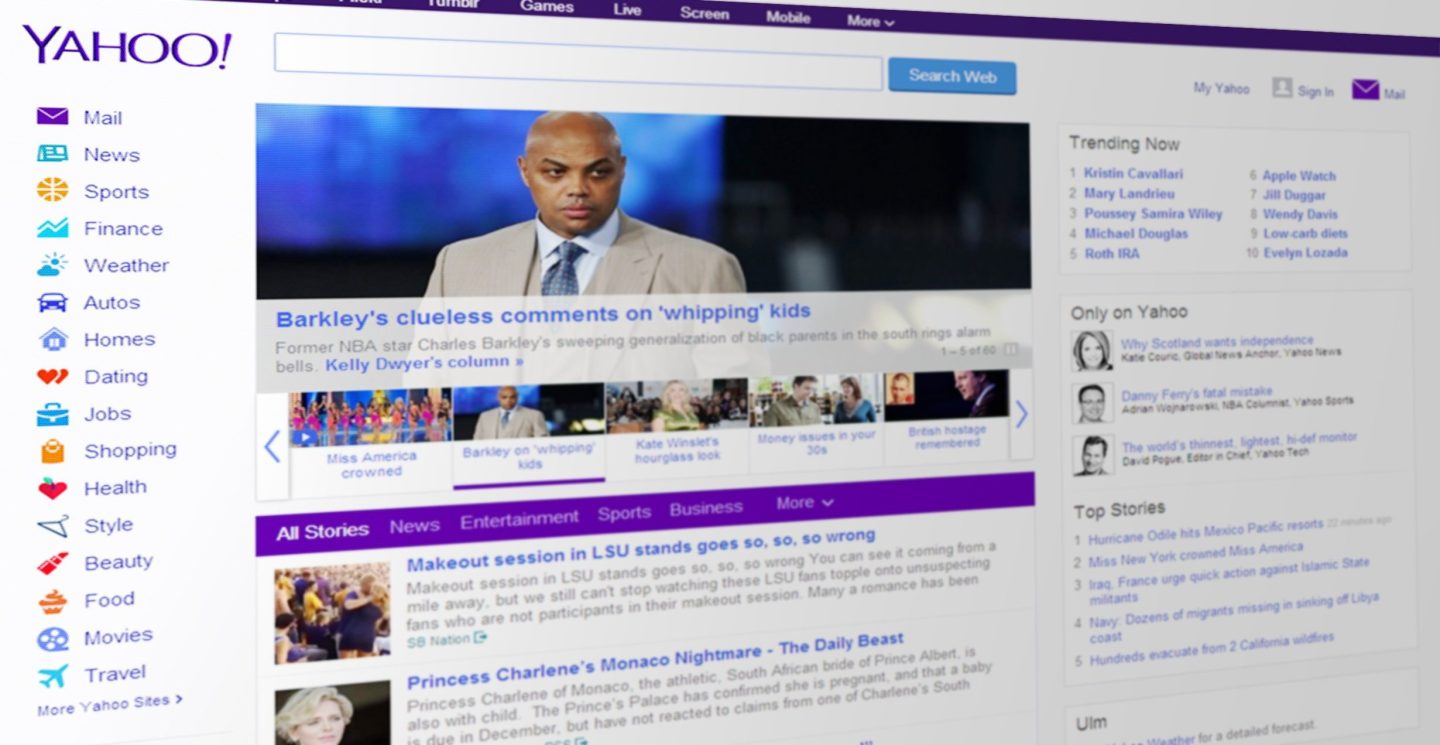

Yahoo Scout’s Pitch: Source Transparency as a Feature, Not a Footnote

Yahoo’s Scout AI assistant is positioning itself around one core promise: transparent, trustworthy answers. Built on Anthropic’s Claude and Microsoft’s Grounding with Bing search technology, Scout blends information from the open web with Yahoo’s extensive content network across Mail, News, Finance, Sports, and publisher partners. Rather than hiding its homework, Scout foregrounds it. Its marketing emphasizes concise responses that clearly display the underlying sources, a deliberate response to user concerns about AI hallucinations and opaque citations. Yahoo’s consumer research found trust to be the top requirement among users of answer engines, and Scout’s interface is designed to address that anxiety directly. By integrating Scout into existing services like Mail and Finance instead of isolating it on a separate AI search page, Yahoo is betting that familiar environments plus visible sources will encourage users to see Yahoo again as a dependable everyday information hub.

Everyday Questions, Everyday Trust: How AI Changes Credibility

Yahoo’s first campaign for Scout leans into everyday questions, such as how the internet reaches computers or how the Tooth Fairy finds children, to underscore a deeper shift in how credibility is established. Where traditional search relied on ranking signals and on users’ habit of cross-checking multiple links, AI assistants compress that research into a single conversational answer. That makes the provenance of information more critical than ever. By surfacing the sites, publishers, and data sources behind each response, Scout aims to make trust a visible part of the user experience rather than an invisible algorithmic calculation. Its access to 500 million user profiles, a knowledge graph exceeding one billion entities, and trillions of consumer events gives it a vast reference base, but the strategic differentiator is how openly it shows its work. In an era of AI skepticism, transparency becomes a brand promise, not just a technical feature.

AI Search Optimization: From Rankings to Recommendation Readiness

As AI answer engines shape discovery, brands are rethinking their optimization strategies. Instead of focusing solely on keywords and backlinks for traditional search, marketers now ask: what signals make AI systems comfortable recommending us? According to AHOD’s PR Boost platform, authoritative editorial coverage is emerging as a critical input for AI search transparency and source credibility. On this view, large language models lean heavily on trusted publications, structured brand signals, and clearly recognized entities when forming answers. That means AI search optimization increasingly overlaps with strategic PR: securing mentions in high-authority outlets, keeping brand narratives consistent, and building a strong, machine-readable presence across news ecosystems. The goal is not only to be indexed, but to be recognized as a credible, safe recommendation when users ask AI for the “best” or “top” option in a given category.

Why Source Transparency Is the Next Competitive Edge

In a crowded landscape of AI assistants (Google AI Overviews, ChatGPT with web retrieval, Gemini, Copilot, Grok, and others), how platforms handle sources may become a defining differentiator. Yahoo’s Scout is leaning into explicit citations, while services like AHOD focus on getting brands into publications with a domain authority of 70 or higher (DA70+) that are syndicated to Apple News and Google News, which serve as key training and retrieval pipelines. As conversational AI replaces traditional browsing for many users, opacity around sources risks eroding confidence. Users who understand that AI can be wrong will gravitate toward tools that show where information comes from and why it is being recommended. For brands, this means the battle is no longer only about where they rank, but about whether transparent, authoritative references to their business appear in the places AI systems trust. Trust in AI search is becoming a shared responsibility between platforms and the brands that feed them.