From Factory Floors to Living Rooms: Inside the Gemini Robotics Demo

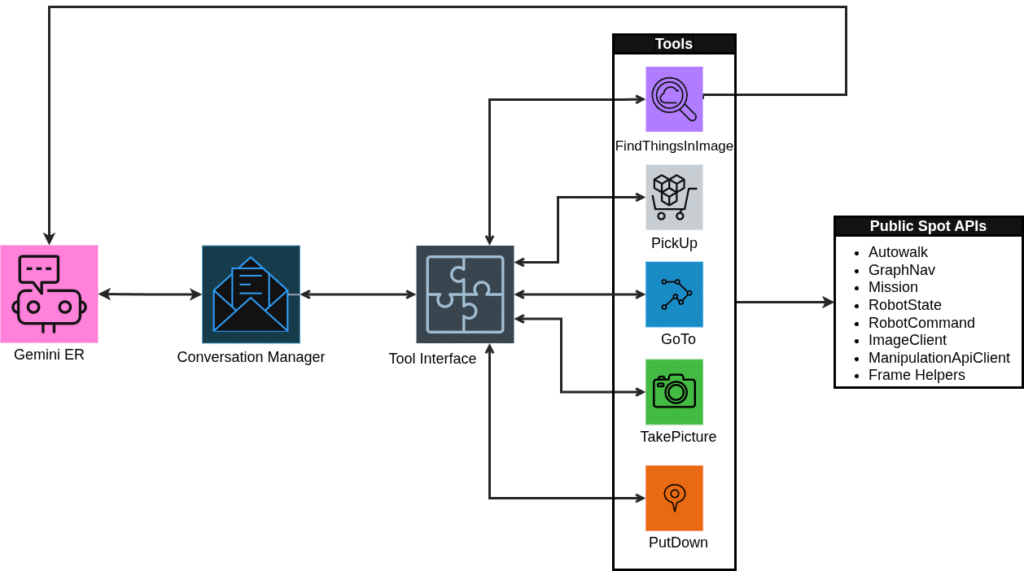

Boston Dynamics’ robot dog Spot is best known for industrial inspections, but its latest outing takes place in a living room. In a recent demo, Spot uses Google’s Gemini Robotics-ER 1.5 vision-language model to pick up scattered shoes and soda cans in a real home. Instead of running a rigid patrol script, the robot receives high-level written instructions like “Make sure all of the shoes at the front door are on the shoe rack.” Gemini Robotics interprets the instruction, analyzes images from Spot’s cameras, identifies relevant objects, and sequences navigation and grasping actions through Spot’s SDK and API. Boston Dynamics’ engineers didn’t hard-code every step; they defined a small set of “tools” such as going to locations, taking pictures, identifying objects, and placing items. Gemini Robotics acts like a remote operator and controller in one, deciding which tool to call next and chaining those calls together to execute multi-step chores with minimal custom code.
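To make the "tools" idea concrete, here is a minimal Python sketch of how a small tool set might be registered and chained. It is not Boston Dynamics' or Google's code: the function names, arguments, and the hand-written plan are all hypothetical stand-ins, and the stubs return strings where a real system would call the robot's SDK.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-ins for robot tools. A real system would call the
# robot's SDK here; these stubs only return a description of the action.
def go_to(location: str) -> str:
    return f"moved to {location}"

def take_picture(camera: str) -> str:
    return f"captured image from {camera} camera"

def identify_objects(label: str) -> str:
    return f"searched image for {label}"

def place_item(item: str, target: str) -> str:
    return f"placed {item} on {target}"

# The registry is the full set of actions the model is allowed to request.
TOOLS: Dict[str, Callable[..., str]] = {
    "go_to": go_to,
    "take_picture": take_picture,
    "identify_objects": identify_objects,
    "place_item": place_item,
}

Step = Tuple[str, Dict[str, str]]  # (tool name, keyword arguments)

def run_plan(plan: List[Step]) -> List[str]:
    """Execute tool calls in order and reject any tool not in the registry."""
    log: List[str] = []
    for name, args in plan:
        if name not in TOOLS:
            raise ValueError(f"unknown tool: {name}")
        log.append(TOOLS[name](**args))
    return log

# Hand-written plan standing in for what a model might produce for the
# instruction "Make sure all of the shoes at the front door are on the shoe rack."
plan: List[Step] = [
    ("go_to", {"location": "front door"}),
    ("take_picture", {"camera": "front"}),
    ("identify_objects", {"label": "shoe"}),
    ("place_item", {"item": "shoe", "target": "shoe rack"}),
]

for entry in run_plan(plan):
    print(entry)
```

The point of the registry is that the model can only request actions the engineers chose to expose, so anything outside that list fails loudly instead of reaching the robot.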

What Embodied AI Robots Actually Are

The Spot and Gemini Robotics demo is an example of embodied AI: models that don’t just process text or images, but reason and act through a physical body. Traditional Spot deployments rely on hand-crafted state machines and Autowalk missions, where engineers painstakingly script behaviors and waypoints. By contrast, Gemini Robotics combines large language model abilities with visual understanding and access to Spot’s sensors and arm via tools. Developers describe the robot’s capabilities in natural language (explaining, for instance, that the front cameras are too low to see elevated surfaces), and Gemini Robotics uses those descriptions to choose the right camera or motion sequence. This is similar in spirit to other cutting-edge robots that perceive, reason, and respond in complex physical environments, such as table tennis systems that adapt to human opponents in real time. The difference is that embodied AI robots like Spot are being pointed at everyday household tasks rather than just lab benchmarks.
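As a rough illustration of describing capabilities in natural language, the sketch below assembles plain-English camera notes into a prompt for a model. Everything here is assumed for illustration: the camera names, the arm camera's description, and the prompt wording are hypothetical, and only the note about the front cameras being too low comes from the demo description.

```python
from typing import Dict

# Plain-English notes about each sensor. Names and wording are hypothetical.
CAMERA_NOTES: Dict[str, str] = {
    "front_camera": (
        "Mounted low on the body; too low to see elevated surfaces "
        "such as tabletops."
    ),
    # Assumed for illustration, not taken from the demo description.
    "arm_camera": (
        "Mounted on the arm; can be raised to view elevated surfaces."
    ),
}

def build_capability_prompt(notes: Dict[str, str]) -> str:
    """Turn the capability notes into a block of text a model can read."""
    lines = ["You control a mobile robot. Available cameras:"]
    for name, note in notes.items():
        lines.append(f"- {name}: {note}")
    lines.append("Choose the camera whose description fits the task.")
    return "\n".join(lines)

print(build_capability_prompt(CAMERA_NOTES))
```

With this approach, changing the robot's behavior can be a matter of editing a sentence in the notes rather than rewriting a state machine, which is the contrast the demo draws with scripted missions.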

Chores a Home Robot Dog Could Handle—and What Stays Out of Reach

In the demo, the home robot dog handles narrow but useful tasks: locating clutter, grasping objects it recognizes, and moving them to a designated area. With embodied AI, Boston Dynamics Spot can realistically tackle chores like tidying shoes near the front door, gathering cans, or visually checking rooms against a written to‑do list. The key is that Gemini Robotics translates those instructions into sequences of navigation, perception, and manipulation, instead of engineers coding every path and grasp. However, the system still relies on a curated set of tools and carefully written prompts; seemingly simple commands like “put down an object” required extra context before the model behaved reliably. More delicate or open‑ended jobs—cooking, laundry folding in messy environments, or caring for children and pets—remain technologically challenging. Dexterity, safety, and robust understanding of cluttered, dynamic homes are still active research problems, so consumer robot dogs will likely start as focused helpers rather than general-purpose butlers.

Safety, Privacy and Trust When a Robot Dog Roams Your Home

Turning robot dog Spot into a home assistant raises new questions beyond technical capability. To work, embodied AI robots need constant sensing: cameras to observe objects and spaces, microphones for voice commands, and connectivity for running or updating foundation models. That creates a moving sensor platform inside bedrooms and living areas, potentially streaming images, conversations, and personal habits. Any consumer-ready home robot dog will need strict data practices, local processing where possible, and transparent controls over what’s recorded or uploaded. Reliability is another concern. Even in high-profile robotics feats, physical systems can misjudge environments and require human intervention, underscoring the risk of missteps around furniture, pets, or people. Well-defined virtual boundaries, conservative motion policies, and clear override options will be essential for making embodied AI feel safe in domestic spaces, not just impressive in demos.
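One way to picture virtual boundaries, conservative motion policies, and override options is a small check that every motion request must pass before it reaches the robot. The sketch below is a simplified assumption, not a description of any shipping safety system: the rectangular zone, the 0.5 m/s cap, and the function names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Zone:
    """Axis-aligned rectangle (in meters) the robot is allowed to enter."""
    x_min: float
    x_max: float
    y_min: float
    y_max: float

    def contains(self, x: float, y: float) -> bool:
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max

MAX_SPEED_M_S = 0.5  # assumed conservative indoor speed cap

def vet_motion(zone: Zone, x: float, y: float, requested_speed: float,
               override_stop: bool = False) -> Optional[float]:
    """Return the speed to use for a move to (x, y), or None to refuse it."""
    if override_stop:
        return None  # a human override always wins
    if not zone.contains(x, y):
        return None  # target lies outside the virtual boundary
    # Clamp the requested speed into the range [0, MAX_SPEED_M_S].
    return min(max(requested_speed, 0.0), MAX_SPEED_M_S)

living_room = Zone(x_min=0.0, x_max=5.0, y_min=0.0, y_max=4.0)
print(vet_motion(living_room, 2.0, 3.0, requested_speed=1.2))
print(vet_motion(living_room, 6.0, 3.0, requested_speed=0.3))
```

Keeping this check outside the model means a mistaken plan can be refused or slowed down regardless of what the model requested.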

From Industrial Testbed to Consumer Expectation for Future Robot Dogs

The Gemini Robotics demo positions Boston Dynamics’ Spot as a bridge between industrial automation and domestic embodied AI robots. In factories and power plants, Spot already performs autonomous inspections; now the same platform is being used to explore how natural language, visual reasoning, and tool-based APIs can convert written instructions into real-world chore execution. This mirrors a broader shift in robotics, where systems once confined to labs or competitive arenas, such as marathon-running or ping-pong-playing robots, are edging closer to everyday life. For consumers, the big change will be in expectations: instead of buying a robot dog pre-programmed for patrols or novelty tricks, people will expect a home robot dog that can learn new tasks from conversation and adapt to new layouts or objects. Over the next few years, that will likely mean pilot deployments and niche helper roles, gradually expanding as embodied AI systems prove they can be trustworthy, useful, and easy to direct.