From Static Arrow to AI Mouse Pointer

For more than half a century, the mouse pointer has been a passive on-screen arrow: it pointed, clicked, dragged, and not much else. Google DeepMind now wants to turn this humble icon into an AI mouse pointer that understands what you are doing, not merely where you are clicking. Rather than forcing users to open a separate chatbot, copy content, and craft a detailed prompt, DeepMind’s concept lets the AI come to the cursor. Hover over a table and ask for a chart, point at a PDF and request a summary, or select a recipe and say “double these ingredients.” The system, powered by Gemini, reads the pixels and context around the cursor and responds directly in place. It is a bid to make AI as ambient, constant, and unobtrusive as the pointer itself.

Show, Point, and Speak: How the Gemini Cursor Upgrade Works

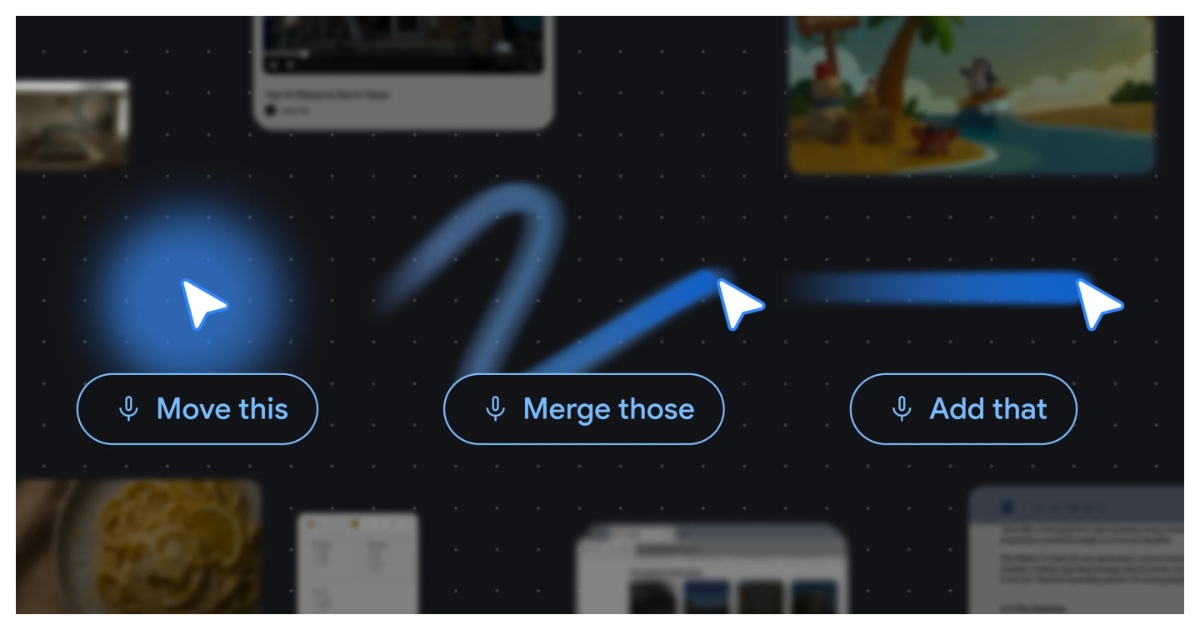

DeepMind’s design rests on four interaction principles that reimagine how a voice-controlled desktop could feel. First, AI should maintain the user’s flow by appearing wherever the pointer is, inside any app, instead of pulling you into a separate interface. Second, the system follows a show-and-tell model: Gemini sees what the cursor highlights, so you don’t need verbose prompts. Third, it embraces natural shorthand: people often point and say “fix this” or “move that,” and the Google DeepMind cursor interprets these short commands by combining visual context and speech. Finally, it turns pixels into entities, recognizing dates, places, objects, or even handwritten notes and making them interactive. Together, these ideas make the Gemini-powered cursor an intelligence layer on top of traditional navigation, where pointing and speaking replace much of the typing and app-hopping normally required for AI assistance.

Magic Pointer on Googlebook: A Proof of Everyday Integration

The most concrete expression of this vision is Magic Pointer on Googlebook, Google’s new Gemini-powered laptop category. Here, the cursor acts as an AI remote for the entire screen rather than being limited to a single browser or app. Users can select products on a webpage and ask Gemini to compare them, hover over a technical spec sheet and request a simplified explanation, or highlight a section of text and say “add this” or “merge those.” Because Magic Pointer is integrated at the operating-system level, the same voice-controlled desktop behavior applies across documents, videos, images, and web content. For everyone outside the Googlebook ecosystem, a more limited version appears as Gemini in Chrome, letting users point at specific webpage regions and ask questions. If this works smoothly, routine AI tasks may no longer start with a prompt box at all; they may instead begin with a cursor gesture and a few spoken words.

Why an AI Mouse Pointer Signals a New Interface Era

DeepMind’s AI-charged cursor is more than a clever feature; it signals a shift in how computing interfaces are conceived. Instead of treating AI as a separate destination, Google is weaving Gemini into every surface it controls: Search, Workspace, YouTube, Chrome, Googlebook, and now the pointer. The goal is a universal assistant that understands context wherever the user is working. If it succeeds, this would reduce the friction of constantly re-explaining tasks, because the system already knows what you are pointing at, what app you’re in, and what you likely want to do. That changes mental habits. Users may start thinking less about prompts and more about simple, conversational commands anchored to whatever is on screen. Challenges remain, including latency, accuracy, and privacy, but the direction is clear: the Google DeepMind cursor aims to turn a tiny arrow into the front door for everyday AI, redefining how we interact with computers.