From Autocomplete to Secure Code Assistants

AI coding assistants now span a spectrum that reaches far beyond the autocomplete pop-ups developers first met in their IDEs. At one end are cloud-based tools, marketed as developer productivity AI, that suggest snippets, explain errors, and generate tests in real time. At the other are tightly controlled secure code assistant deployments, built on domain-specific models that run entirely inside an organisation’s own infrastructure. These systems ingest internal codebases, documentation, and workflows so they can answer questions in context, draft boilerplate, and help refactor legacy systems without sending sensitive data outside the firewall. As a result, AI in software development is shifting from an optional add-on to a background utility. It is there when a developer explores a new API, onboards to an unfamiliar service, or writes technical documentation, and it increasingly shapes how teams approach design, review, and maintenance.

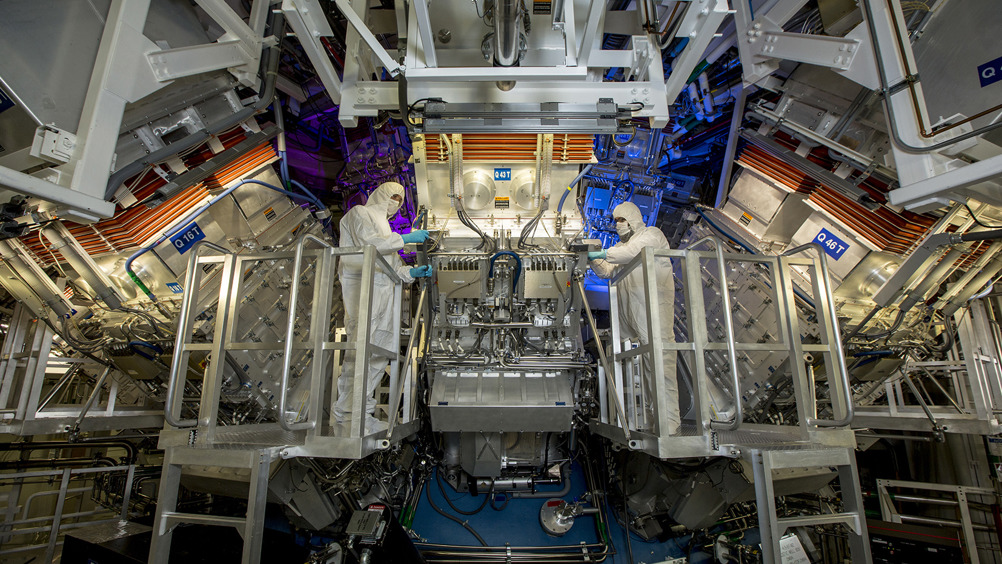

Inside First Light Fusion’s Air‑Gapped Copilot

Locai Labs’ deployment for First Light Fusion shows what high-stakes, domain-specific AI coding support looks like in practice. The two companies have rolled out a bespoke large language model (LLM), trained with Locai Labs’ Forget-Me-Not framework on First Light Fusion’s own scientific and engineering workflows and code. Crucially, this AI coding assistant runs in an air-gapped high-performance computing environment in Oxford, so proprietary data and intellectual property never leave the organisation’s systems. The deployment is not static: a long-term plan provides for two full retraining cycles each year, so the assistant can keep pace with ongoing fusion research and changing software stacks. Leaders at both organisations describe the tool as already a valuable asset. It is an example of how a carefully governed secure code assistant can accelerate research-intensive engineering work without sacrificing data sovereignty or security.

What Teams Gain: Speed, Support, and Safer Workflows

Deployments like First Light Fusion’s show why AI coding assistants are becoming everyday infrastructure. When tuned to specific workflows, they help engineers prototype faster by generating scaffolding code, simulation scripts, and experiment harnesses that follow existing standards. They support large refactors by suggesting consistent patterns and flagging likely integration pitfalls across sprawling codebases. Documentation, long a neglected chore, becomes more manageable when the assistant drafts docstrings, internal how-to guides, and explanations of complex routines for engineers to refine. New hires can query the system about architecture decisions and domain jargon, which eases onboarding in complex environments. All of this sits within strict security and privacy requirements. Running models inside an air-gapped environment and retraining them on internal workflows illustrate how mission-critical organisations insist that developer productivity AI be both powerful and tightly controlled, rather than a generic tool that lives only in the public cloud.

AI Tools for Students: From Cheating Risk to Literacy Coach

As enterprises normalise AI in software development, classrooms are learning to treat AI tools for students as skill builders rather than shortcuts. Educators warn that banning AI outright will not stop cheating but will prevent students from learning to use these tools responsibly. Instead, they are reframing AI as a thought partner: students can test arguments, generate justifications and rebuttals for essays, and then evaluate which sources and ideas are credible. AI is also becoming an on-demand editor, giving feedback on clarity, structure, and tone so learners can revise their own writing. Voice-to-text and related tools reduce the friction between ideas and expression, especially for English language learners and students with dyslexia, who can dictate their thoughts and then refine the resulting text. Teachers are increasingly focused on coaching students to prompt effectively, question AI output, and see the tools as supports rather than as authors of their work.

The New Literacy: Reviewing AI Output and Setting Guardrails

Across both fusion labs and classrooms, a new skillset is emerging: knowing how to read, review, and responsibly integrate AI-generated code and text. For professionals, that means treating suggestions from a secure code assistant as drafts that must pass the same code review standards as human-written changes. It also means maintaining code quality over time and resisting over-reliance on AI for design decisions. In schools, educators are teaching students to critique AI responses, check sources, and distinguish between assistance and plagiarism. Concerns about cheating and intellectual property are prompting both companies and educational institutions to write explicit acceptable-use policies that define when AI can be consulted, what data may be shared, and how attribution works. Looking ahead, AI coding assistants are likely to become even more document-centric, moving fluidly between technical specs, design docs, and code reviews, while users at every level learn to keep human judgment firmly in the loop.