What Tenstorrent Actually Demonstrated

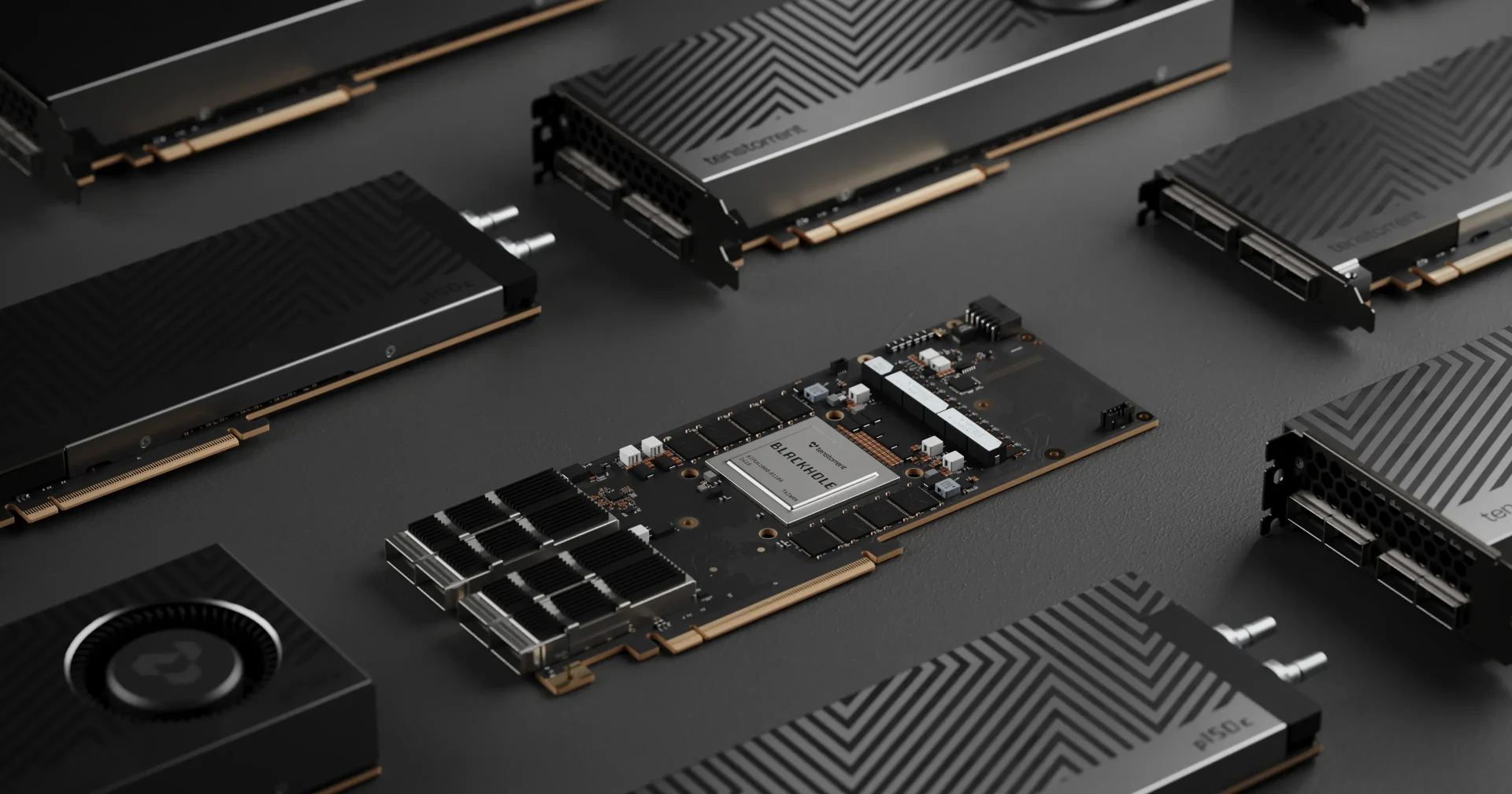

Tenstorrent recently showcased an optimized version of Wan2.2-14B, Alibaba’s open-weight video generation model, running on its Blackhole servers and hitting a headline-grabbing milestone: a 5‑second, 720p clip generated in as little as 2.4 seconds. In a live demo reported by EETimes, the system produced an 81‑frame video in about five seconds; Tenstorrent later cited a best run of 2.4 seconds for a similar 5‑second clip. That best run is roughly twice as fast as real time and, according to the company, about 10x quicker than competing setups. Under the hood, four Galaxy servers with 256 Blackhole accelerators, each packing 16 RISC‑V cores and 32 GB of GDDR6, handled the workload. Beyond raw speed, the demo highlights Tenstorrent’s open, unified architecture, in which compute, memory, and networking are tuned together to keep large models fed instead of relying on proprietary stacks.
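The headline numbers are easier to compare once they are turned into a real-time factor and cluster totals. The sketch below is plain arithmetic on the figures reported above; the constant and function names are illustrative and not part of any Tenstorrent tool, and the frames-per-second line assumes the 2.4-second best run also produced 81 frames.

```python
# Back-of-envelope arithmetic on the figures reported for the demo.
CLIP_SECONDS = 5.0        # length of the generated clip
BEST_RUN_SECONDS = 2.4    # best generation time Tenstorrent cited
LIVE_DEMO_SECONDS = 5.0   # approximate generation time in the live demo
FRAMES = 81               # frames in the live-demo clip (assumed same for best run)

ACCELERATORS = 256        # Blackhole chips across four Galaxy servers
RISCV_CORES_PER_CHIP = 16
GDDR6_GB_PER_CHIP = 32


def realtime_factor(clip_s: float, gen_s: float) -> float:
    """Seconds of video produced per second of compute; above 1 is faster than real time."""
    return clip_s / gen_s


if __name__ == "__main__":
    print(f"best run:  {realtime_factor(CLIP_SECONDS, BEST_RUN_SECONDS):.2f}x real time")
    print(f"live demo: {realtime_factor(CLIP_SECONDS, LIVE_DEMO_SECONDS):.2f}x real time")
    print(f"frames per second of compute (best run): {FRAMES / BEST_RUN_SECONDS:.1f}")
    print(f"aggregate GDDR6: {ACCELERATORS * GDDR6_GB_PER_CHIP} GB")
    print(f"aggregate RISC-V cores: {ACCELERATORS * RISCV_CORES_PER_CHIP}")
```

A factor of about 2 for the best run versus about 1 for the live demo shows why "faster than real time" depends on which number you quote.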

Why AI Video Is Harder Than Images

Static image generation already pushes hardware, but text‑to‑video adds a brutal twist: time. Instead of a single frame, models must generate dozens of coherent frames while tracking motion, lighting, and consistency from frame to frame. Tenstorrent’s demo, for instance, used 81 frames and 40 denoising steps, meaning the model refined the entire 81‑frame sequence 40 times. That multiplies compute and memory demands, especially at 720p and above. This is why video generation on consumer GPUs lags far behind image tools and why workloads quickly become expensive on rented general‑purpose cloud hardware. As realism improves (lip‑sync, animation, and scene coherence have improved by over 54% in two years), models grow more complex and resource‑hungry. In this context, a text‑to‑video pipeline that outpaces real time is less a party trick than a sign that specialized infrastructure is catching up with creators’ expectations.
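One rough way to see why time is so expensive is to scale a single 720p image up to the demo’s 81 frames. This is a naive sketch, assuming work grows linearly with pixel count and that full attention across all frames grows with the square of the frame count; real models such as Wan2.2 operate on a compressed latent representation, so absolute costs differ, but the direction of the scaling holds. The function names are illustrative.

```python
# Naive scaling from one image to a multi-frame video at the same resolution.
WIDTH, HEIGHT = 1280, 720   # 720p
FRAMES = 81                 # frames in Tenstorrent's demo
STEPS = 40                  # denoising steps in the demo


def pixels(frames: int, width: int = WIDTH, height: int = HEIGHT) -> int:
    """Total pixels the model must produce."""
    return frames * width * height


def frame_step_passes(frames: int, steps: int = STEPS) -> int:
    """Frame refinements across all steps: each denoising step revisits every frame."""
    return frames * steps


def attention_pair_growth(frames: int) -> int:
    """Growth in pairwise interactions if every frame's tokens attend to every other frame's
    (assumes full attention; self-attention cost is quadratic in token count)."""
    return frames ** 2


if __name__ == "__main__":
    print(f"pixels: image {pixels(1):,} vs video {pixels(FRAMES):,}")
    print(f"frame refinements: image {frame_step_passes(1)} vs video {frame_step_passes(FRAMES)}")
    print(f"naive attention growth vs one frame: {attention_pair_growth(FRAMES):,}x")
```

The linear terms grow 81x, while the naive attention term grows by 81 squared, which is one reason video models lean so heavily on compression and careful attention design.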

How Blackhole Servers Make Fast Text-to-Video Possible

Video generation speed hinges on how quickly a system can move data between compute cores, memory, and networked accelerators. Tenstorrent’s Blackhole servers tackle this by clustering hundreds of accelerators in a unified architecture, minimizing the bottlenecks that typically slow large models during inference. Each chip pairs its RISC‑V cores with 32 GB of GDDR6, providing both parallel compute and high‑bandwidth memory for dense workloads like video. Running an optimized Wan2.2‑14B across four Galaxy servers lets the system scale horizontally, effectively treating many chips as one large engine. For solo creators and agencies, the important part is that this capacity can be delivered from the cloud. You do not need a top‑tier GPU at home; instead, you can access Blackhole‑class performance through an API or hosted platform, paying per clip or by subscription rather than buying hardware outright.
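Why spread one model over many chips? A back-of-envelope memory estimate helps. This sketch assumes Wan2.2‑14B holds about 14 billion parameters (as its name suggests) stored at 2 bytes each (bf16), and that weights split perfectly evenly across chips; real deployments also need memory for activations and intermediate video latents, and the exact sharding scheme Tenstorrent used is not described in the demo reports.

```python
# Idealized weight-memory estimate; PARAMS and BYTES_PER_PARAM are assumptions.
PARAMS = 14e9            # assumption: ~14B parameters, taken from the model name
BYTES_PER_PARAM = 2      # assumption: bf16 weights
CHIP_MEMORY_GB = 32      # GDDR6 per Blackhole chip, as reported
CHIPS = 256              # accelerators in the demo cluster


def weights_gb(params: float, bytes_per_param: int) -> float:
    """Memory for model weights alone, in decimal gigabytes."""
    return params * bytes_per_param / 1e9


def per_chip_weights_gb(total_gb: float, chips: int) -> float:
    """Weight memory per chip under a perfectly even split (ignores replication)."""
    return total_gb / chips


if __name__ == "__main__":
    total = weights_gb(PARAMS, BYTES_PER_PARAM)
    print(f"weights: {total:.1f} GB vs {CHIP_MEMORY_GB} GB on one chip")
    print(f"headroom on one chip: {CHIP_MEMORY_GB - total:.1f} GB")
    print(f"even split over {CHIPS} chips: {per_chip_weights_gb(total, CHIPS):.3f} GB each")
    print(f"cluster total: {CHIPS * CHIP_MEMORY_GB} GB")
```

Under these assumptions the weights alone take about 28 GB, close to one chip’s 32 GB and leaving little room for an 81‑frame 720p sequence in flight; spread across 256 chips, each holds only about 0.11 GB of weights, freeing most memory for activations.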

What Faster AI Video Enables for Creators

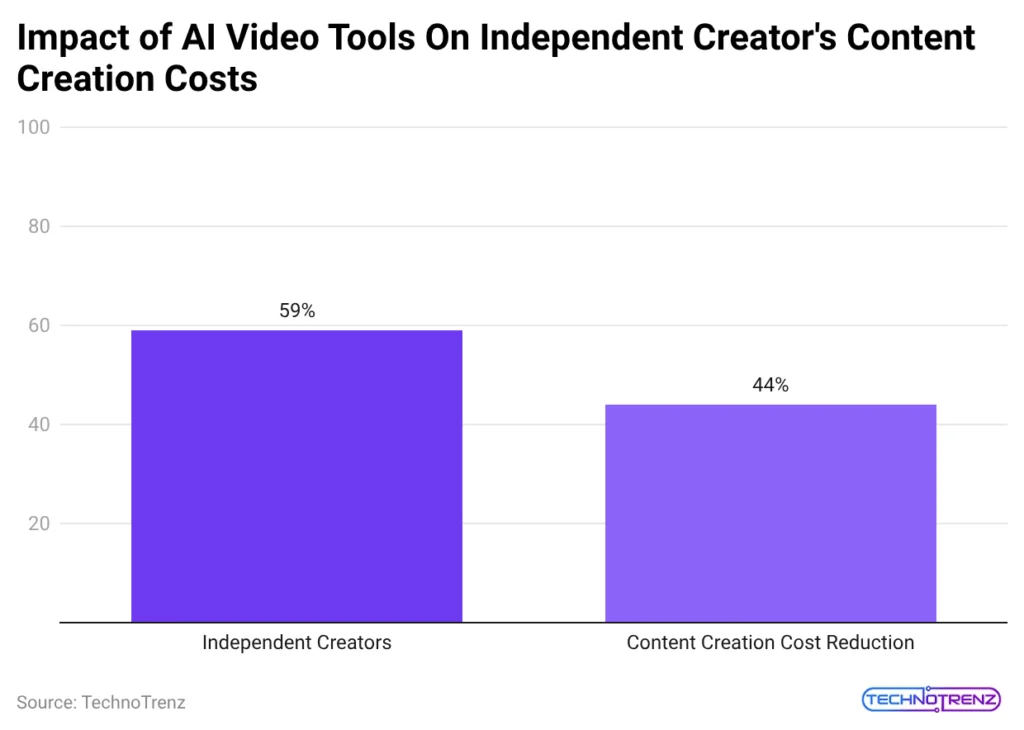

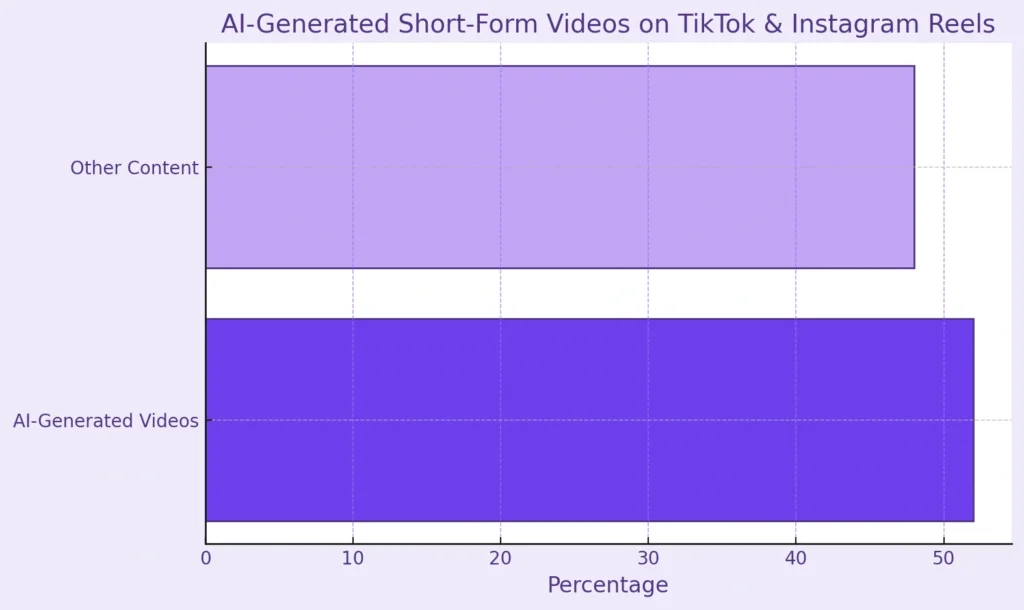

For everyday creators, the difference between waiting 30 seconds and 3 seconds per shot is huge. Faster generation means you can iterate on prompts, styles, and storyboards almost as quickly as you think of them. Agencies can prototype multiple variants of an ad, swap products or languages, and test formats before locking a final cut, which matters when 78% of social ads already lean on AI‑generated video and engagement gains can reach 45%. Since more than 81% of AI videos today are under 60 seconds, near‑real‑time generation opens the door to live or near‑live assets: reactive meme content, rapid A/B tests, or personalized clips aligned with trends on TikTok and Instagram Reels. With AI tools already cutting production time by up to 79% and costs by about 58%, shaving seconds off each render compounds into extra campaigns for the same budget.
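The compounding effect is simple arithmetic. This sketch counts how many drafts fit in an hour of pure generation time at 30 versus 3 seconds per shot, and how much more output a fixed budget buys after the roughly 58% cost cut cited above. It is illustrative only: it ignores prompt-writing and review time, which in practice often dominate.

```python
SECONDS_PER_HOUR = 3600


def drafts_per_hour(seconds_per_shot: float) -> float:
    """Renders that fit in an hour of pure generation time (no review time)."""
    return SECONDS_PER_HOUR / seconds_per_shot


def budget_multiplier(cost_reduction: float) -> float:
    """Output a fixed budget buys after a fractional cost cut, relative to before."""
    if not 0 <= cost_reduction < 1:
        raise ValueError("cost_reduction must be in [0, 1)")
    return 1 / (1 - cost_reduction)


if __name__ == "__main__":
    for seconds in (30, 3):
        print(f"{seconds:>2} s/shot -> {drafts_per_hour(seconds):.0f} drafts/hour")
    print(f"58% cost cut -> {budget_multiplier(0.58):.2f}x output per budget")
```

Going from 30 to 3 seconds per shot is a tenfold jump in drafts per hour, and a 58% cost cut means the same budget buys a bit more than twice the output.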

Demand, Costs, and the Speed–Quality Trade‑Off

The market signals that text‑to‑video AI is no longer niche. The sector is projected to grow from USD 323.7 million (approx. RM1.49 billion) to over USD 2,479.7 million (approx. RM11.4 billion), while 63% of marketers are already experimenting with AI‑generated videos and about 58% of YouTube ads use them. Cost pressures are real, too: traditional video can run USD 1,000–USD 50,000 (approx. RM4,600–RM230,000) per minute, while AI generation often starts around USD 0.50–USD 30 (approx. RM2.30–RM138) per minute. Budget platforms charge as little as USD 0.64 (approx. RM3) per 5‑second 720p clip, or USD 0.70–USD 1.00 (approx. RM3.20–RM4.60) per 10‑second 1080p clip. Tenstorrent‑class speed will not erase every constraint: longer clips still take longer to generate, cloud access adds network delays, and pushing models too hard for speed can hurt consistency or realism. For creators, the opportunity is to treat these systems as fast drafting tools, accepting some quality trade‑offs early and then spending extra time or budget only on the shots that warrant polish.
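Normalizing per-clip prices to a per-minute figure makes them comparable with traditional production rates. The sketch below uses the budget-platform prices quoted above; the `attempts_per_keeper` parameter is a hypothetical knob for the retries a drafting workflow typically burns through, not a figure reported by any platform.

```python
SECONDS_PER_MINUTE = 60


def cost_per_minute(price_per_clip: float, clip_seconds: float,
                    attempts_per_keeper: float = 1.0) -> float:
    """USD per finished minute of video.

    attempts_per_keeper is a hypothetical retry factor: how many generations
    you run, on average, for each clip you actually keep.
    """
    clips_per_minute = SECONDS_PER_MINUTE / clip_seconds
    return price_per_clip * clips_per_minute * attempts_per_keeper


if __name__ == "__main__":
    quotes = {
        "5 s 720p @ USD 0.64": (0.64, 5),
        "10 s 1080p @ USD 0.70": (0.70, 10),
        "10 s 1080p @ USD 1.00": (1.00, 10),
    }
    traditional_low = 1_000.0  # USD per minute, low end of traditional production
    for label, (price, seconds) in quotes.items():
        per_min = cost_per_minute(price, seconds)
        print(f"{label}: USD {per_min:.2f}/min, "
              f"{traditional_low / per_min:.0f}x below traditional low end")
        # Hypothetical drafting scenario: four generations per clip kept.
        print(f"  with 4 attempts per keeper: USD {cost_per_minute(price, seconds, 4):.2f}/min")
```

At one attempt per keeper, the 5‑second 720p price works out to about USD 7.68 per minute and the 10‑second 1080p range to USD 4.20–6.00 per minute, still more than 100 times below the USD 1,000 low end for traditional video; even a generous retry factor leaves a wide margin.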