From Pipelines to Graphs: Why Netflix Reframed Model Lifecycle Management

As machine learning became embedded across more products and teams, Netflix found its traditional tooling straining under the weight of complexity. Separate pipelines, feature stores, and experiment artifacts created a maze of machine learning dependencies that were hard to track and even harder to govern. Questions such as where a model’s data originated, who owned a feature, or what might break if a dataset changed became hard to answer, and that uncertainty turned into real operational risk. Netflix’s answer is the Model Lifecycle Graph, a graph-based architecture that treats models, datasets, features, evaluations, workflows, and production systems as interconnected entities rather than isolated steps. By elevating metadata and relationships to first-class concerns, the company aims to make model lifecycle management more transparent, scalable, and resilient, especially as enterprise ML operations expand across teams and applications.

How the Model Lifecycle Graph Maps Machine Learning Dependencies

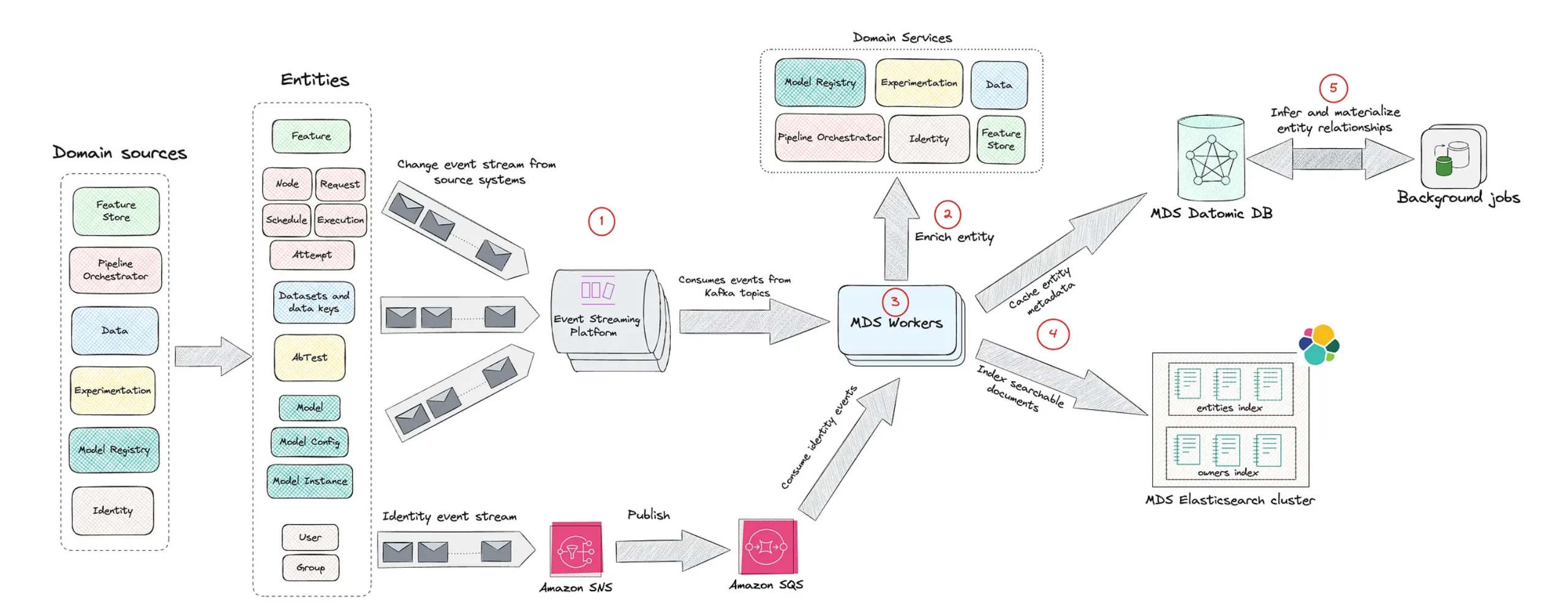

The Model Lifecycle Graph represents each ML asset (datasets, features, models, evaluations, workflows, and services) as a node connected to other nodes by explicit relationships. Instead of viewing a model as the end of a linear pipeline, Netflix captures the model’s full context: which datasets feed which features, which evaluations validate which models, and which production systems consume those models. The graph can be traversed in both directions, allowing engineers to move upstream to inspect data lineage or downstream to see where a model is deployed. That flexibility makes impact analysis far more precise than in conventional pipeline-centric views. When a dataset schema changes or a feature is deprecated, the graph reveals the exact models and workflows affected. In effect, Netflix turns its ML infrastructure into a navigable map of machine learning dependencies, making invisible couplings visible before they cause operational failures.
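To make the traversal idea concrete, here is a minimal sketch of a dependency graph with upstream and downstream queries. It is not Netflix's implementation: the `Asset` and `LifecycleGraph` classes, the edge direction (an edge points from an asset to whatever depends on it), and every asset name are assumptions chosen for illustration.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """A graph node. `kind` is e.g. 'dataset', 'feature', 'model' (hypothetical labels)."""
    kind: str
    name: str


class LifecycleGraph:
    """Directed graph: an edge source -> target means target depends on source."""

    def __init__(self):
        self._downstream = defaultdict(set)  # asset -> assets that depend on it
        self._upstream = defaultdict(set)    # asset -> assets it depends on

    def add_edge(self, source, target):
        self._downstream[source].add(target)
        self._upstream[target].add(source)

    @staticmethod
    def _reachable(start, adjacency):
        # Breadth-first search over one adjacency map; returns every
        # asset reachable from `start`, directly or transitively.
        seen = set()
        queue = deque([start])
        while queue:
            node = queue.popleft()
            for neighbor in adjacency.get(node, ()):
                if neighbor not in seen:
                    seen.add(neighbor)
                    queue.append(neighbor)
        return seen

    def downstream(self, asset):
        """Impact analysis: everything affected if `asset` changes."""
        return self._reachable(asset, self._downstream)

    def upstream(self, asset):
        """Lineage: everything `asset` was derived from."""
        return self._reachable(asset, self._upstream)


# Hypothetical assets, invented for this example.
views = Asset("dataset", "viewing_events")
watch_time = Asset("feature", "avg_watch_time")
ranker = Asset("model", "homepage_ranker")
ndcg = Asset("evaluation", "offline_ndcg")
homepage = Asset("service", "homepage_api")

graph = LifecycleGraph()
graph.add_edge(views, watch_time)   # dataset feeds feature
graph.add_edge(watch_time, ranker)  # feature feeds model
graph.add_edge(ranker, ndcg)        # evaluation validates model
graph.add_edge(ranker, homepage)    # service consumes model

affected = sorted(a.name for a in graph.downstream(views))
print(affected)
# ['avg_watch_time', 'homepage_api', 'homepage_ranker', 'offline_ndcg']
```

Keeping two adjacency maps, one per direction, is a design choice in this sketch: it lets lineage and impact queries share the same search routine at the cost of storing each edge twice.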

Visibility, Governance, and Reuse Across Enterprise ML Operations

Beyond pure dependency mapping, the Model Lifecycle Graph serves as a discovery and governance layer for enterprise ML operations. Because every asset is registered as a node with lineage and ownership, teams can search for existing datasets, features, or models before building new ones. This encourages reuse and reduces duplicated work, while also clarifying who is responsible for maintaining each component. Governance improves as well: compliance and operational teams can trace how data flows into models, how those models are evaluated, and where they are deployed. That end-to-end visibility supports reproducibility, auditability, and safer rollout processes. By organizing ML infrastructure around metadata and relationships, Netflix aligns its machine learning practice with broader software engineering trends that favor centralized visibility, consistent lifecycle management, and clearly assigned ownership over ad-hoc, team-specific solutions.
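The discovery side of this can be pictured as a searchable registry in which each asset carries an owner. The sketch below is an assumption-laden illustration, not a description of Netflix's system: `RegisteredAsset`, `AssetRegistry`, the tag-based search, and all team and asset names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegisteredAsset:
    """Metadata for one registered asset (all fields are illustrative)."""
    kind: str                      # e.g. 'dataset', 'feature', 'model'
    name: str
    owner: str                     # team responsible for maintenance
    tags: frozenset = frozenset()  # free-form labels used for discovery


class AssetRegistry:
    def __init__(self):
        self._assets = {}  # (kind, name) -> RegisteredAsset

    def register(self, asset):
        key = (asset.kind, asset.name)
        if key in self._assets:
            # Rejecting duplicates nudges teams toward reuse.
            raise ValueError(f"{asset.kind} '{asset.name}' is already registered")
        self._assets[key] = asset

    def search(self, kind=None, tag=None):
        """Return matching assets sorted by name; None means 'any'."""
        matches = (
            a for a in self._assets.values()
            if (kind is None or a.kind == kind)
            and (tag is None or tag in a.tags)
        )
        return sorted(matches, key=lambda a: a.name)

    def owner_of(self, kind, name):
        return self._assets[(kind, name)].owner


registry = AssetRegistry()
registry.register(RegisteredAsset(
    "feature", "avg_watch_time", "personalization-data", frozenset({"engagement"})))
registry.register(RegisteredAsset(
    "feature", "days_since_signup", "growth-analytics", frozenset({"tenure"})))
registry.register(RegisteredAsset(
    "model", "homepage_ranker", "ranking-team", frozenset({"engagement"})))

# Before building a new engagement feature, check what already exists.
existing = [a.name for a in registry.search(kind="feature", tag="engagement")]
print(existing)  # ['avg_watch_time']
print(registry.owner_of("feature", "avg_watch_time"))  # personalization-data
```

A real metadata platform would back this with persistent storage and access control; the in-memory dictionary here only shows the shape of the lookup.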

A Metadata-Centric Future for Scalable ML Infrastructure

Netflix’s Model Lifecycle Graph reflects a broader industry shift toward metadata-centric platforms as scaling ML infrastructure becomes a priority. Similar ideas underpin systems like LinkedIn’s DataHub, lineage-focused initiatives such as OpenLineage, and ML platforms like Uber’s Michelangelo, all of which emphasize central lifecycle management and reusable assets. The approach also echoes internal developer portals like Spotify’s Backstage, where graphs represent services, infrastructure, and ownership to give organizations a holistic operational view. Netflix’s emphasis on traceability and institutional visibility contrasts with newer trends that prioritize rapid experimentation over structure. The underlying message is that, as machine learning weaves deeper into core software stacks, metadata, lineage, and lifecycle governance are no longer optional. They become architectural foundations, enabling enterprises to innovate quickly without sacrificing reliability or losing control over how their ML systems actually work.