Plain English Programming Arrives for AI Agents

AI agents that understand natural language are transforming how software and robotics are built. Instead of writing boilerplate code, developers now describe desired behavior in everyday English and let autonomous code generation handle the rest. This shift is particularly visible in agentic AI development, where systems are expected not just to respond, but to plan, execute, and refine complex tasks. Plain English programming lowers the barrier for experimentation: people can prototype workflows, test ideas, and deploy autonomous agents without deep expertise in specific SDKs or frameworks. That accessibility is pulling more non-traditional developers (business operators, educators, and hobbyists) into the AI ecosystem. At the same time, it challenges toolmakers to make agents robust enough to interpret ambiguous instructions, generate safe code, and iterate based on feedback. The result is a new class of AI agents that users can direct in natural language, more like collaborators than tools.

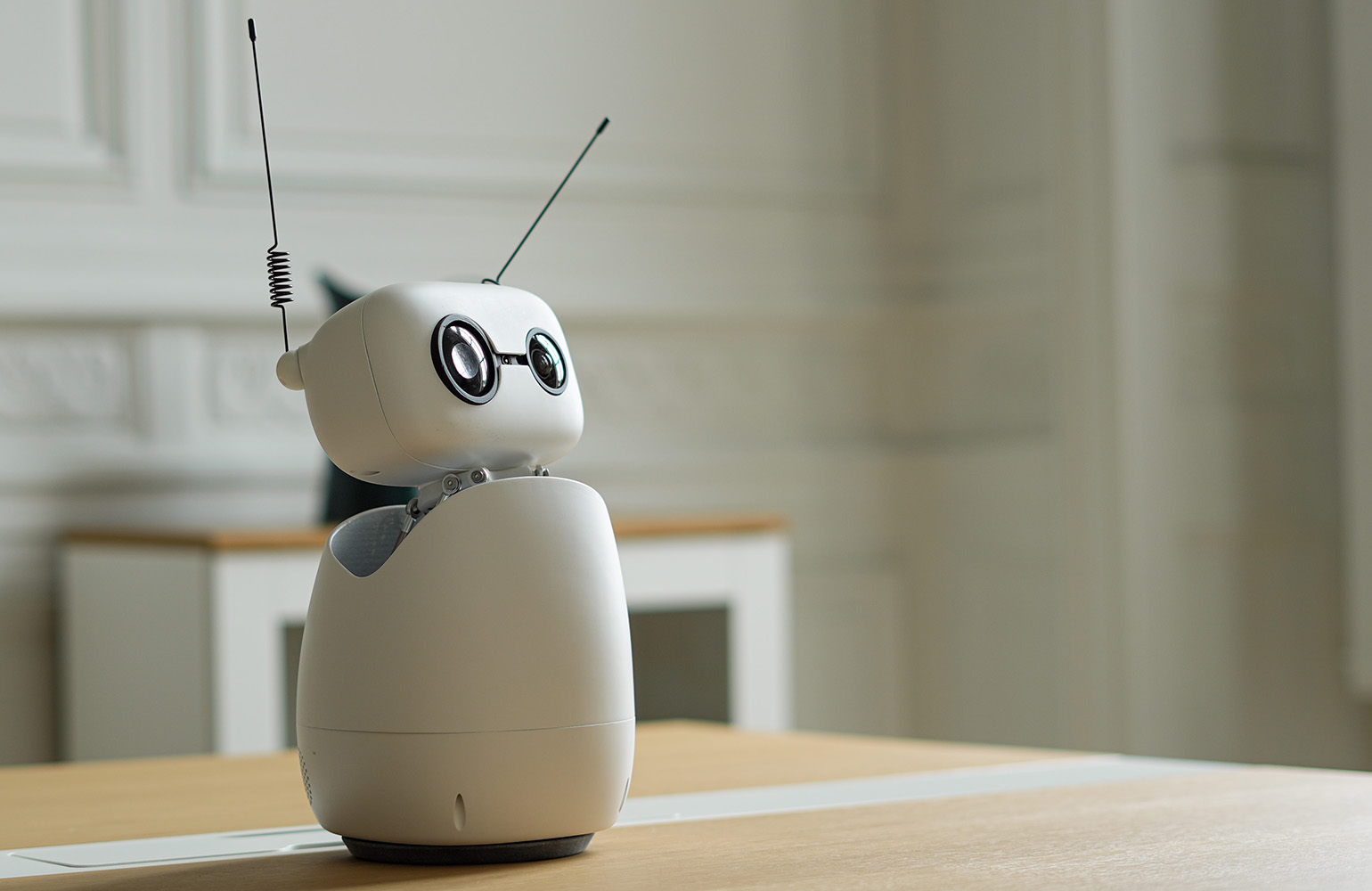

Hugging Face’s Toolkit Turns Robot Apps Into Plain English Projects

Hugging Face’s agentic toolkit for the Reachy Mini open-source robot shows how far natural language interfaces have come. Users simply describe what they want the robot to do in plain English, and an AI agent writes, tests, and ships the code automatically. Hugging Face frames this as collapsing three traditional blockers in robotics: expertise, expensive hardware, and lengthy integration work. The Reachy Mini platform pairs low-cost, open hardware with an AI-driven development flow that runs on a familiar web hub. Non-developers already use the toolkit to build sophisticated apps, such as a voice-controlled meeting facilitator that manages Zoom sessions, tracks participants, and generates questions on the fly. Every app also runs in a browser-based simulator, enabling experimentation without owning a robot. Combined with a searchable, forkable app catalog, this model turns agentic AI development into a remix culture powered by natural language prompts rather than manual coding.
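
The describe, write, test, and ship flow above can be pictured as a simple retry loop. The Python sketch below is illustrative only and is not Hugging Face's implementation: `build_from_prompt`, `fake_generate`, and `syntax_check` are hypothetical names, the "agent" is a stub standing in for a real model call, and the "test" is just a syntax check rather than a run in the simulator.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class Attempt:
    code: str       # source text produced by the generator
    passed: bool    # whether the checks accepted it
    feedback: str   # error text handed back to the generator on failure


def build_from_prompt(
    prompt: str,
    generate: Callable[[str, str], str],
    run_tests: Callable[[str], Tuple[bool, str]],
    max_rounds: int = 3,
) -> Optional[Attempt]:
    """Generate code from a plain English prompt, retrying with test feedback."""
    feedback = ""
    last: Optional[Attempt] = None
    for _ in range(max_rounds):
        code = generate(prompt, feedback)
        passed, feedback = run_tests(code)
        last = Attempt(code, passed, feedback)
        if passed:
            break  # only code that passes its checks would be shipped
    return last


# Hypothetical stand-ins so the sketch runs without a model or a robot.
def fake_generate(prompt: str, feedback: str) -> str:
    # First draft is deliberately broken; the "fix" arrives once feedback exists.
    return "nod()" if feedback else "nod("


def syntax_check(code: str) -> Tuple[bool, str]:
    # Stand-in for a real test run: accept anything that compiles.
    try:
        compile(code, "<agent>", "exec")
        return True, ""
    except SyntaxError as err:
        return False, str(err)


result = build_from_prompt("Make the robot nod", fake_generate, syntax_check)
```

Here the first draft fails the check, the error text is fed back to the generator, and the second draft passes. A real toolkit would replace the stubs with a model call and an actual test or simulator run.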

Meta’s Hatch Agent Pushes Socially Grounded Autonomy

Meta’s upcoming Hatch agent illustrates how natural language control is expanding into consumer-scale, socially grounded AI. Currently gated behind a waitlist, Hatch is designed as a mainstream, autonomous assistant capable of image and video generation, shopping flows, learning sessions, research tasks, and scheduled workflows such as file generation. What distinguishes Hatch is its deep integration with social platforms like Instagram and Facebook. Rather than pulling users into a separate chat app, the agent aims to live where they already scroll, turning feed exploration, creator discovery, and shopping research into agent-driven workflows. Internally, Meta is testing Hatch in mock environments resembling popular marketplaces and delivery services to refine its tool-use behavior. The company frames its vision as agents that work continuously toward user goals, suggesting a future where natural language AI agents quietly orchestrate media, commerce, and research in the background.

Natural Language Control Lowers Barriers for Non-Expert Developers

Natural language interfaces are rapidly reducing friction in building autonomous agents. Where traditional development requires knowledge of APIs, SDKs, and deployment pipelines, agentic platforms now let users specify goals conversationally and rely on autonomous code generation to fill in the technical gaps. The Reachy Mini ecosystem shows non-developers building reliable, voice-driven applications by iterating on English descriptions alone. Meanwhile, Meta’s Hatch agent hints at a future where people configure sophisticated, socially aware behaviors—like continuous shopping research or content curation—via simple prompts embedded in their feeds. This shift broadens who can participate in agentic AI development, inviting domain experts, educators, and creators to design workflows without becoming programmers. It also emphasizes new skills: articulating intent clearly, testing agent behavior, and supervising autonomous actions. As these tools mature, the line between “user” and “developer” blurs, turning natural language into a primary interface for building software.

Waitlists, App Stores, and the Maturing Agentic AI Ecosystem

Early access patterns suggest agentic AI is moving from experimental demos to structured platforms. Meta’s choice to launch Hatch behind a waitlist indicates both demand and caution: the company can refine capabilities and safety controls before broad release, while signaling that autonomous, socially integrated agents are nearly production-ready. On the robotics side, Hugging Face’s Reachy Mini app store already offers hundreds of apps, all discoverable, forkable, and modifiable via natural language. This combination of curated distribution and open remixing marks a new phase in natural language tooling for AI agents, one where ecosystems, not standalone models, define the experience. Developers and non-experts alike can build on proven templates, adjust behaviors using plain English programming, and redeploy agents in minutes. As more platforms adopt similar patterns of waitlisted rollouts, shared app catalogs, and browser simulators, agentic AI development is poised to become a standard part of everyday software creation.