From Ten Blue Links to Trusted AI Answers

Search behavior is undergoing a structural shift. Instead of scanning long lists of links, people ask AI assistants direct questions and expect concise, confident answers. This redefines what visibility means online: the question is no longer where a brand ranks, but whether AI systems mention it at all. ChatGPT, Google’s AI Overviews, Claude, Perplexity, Copilot, Meta AI and similar platforms are becoming primary gateways to information, products and services. In this environment, AI search transparency and clear source attribution are emerging as critical differentiators. Users have learned that generative models can hallucinate, so they now scrutinize where answers come from and how they are assembled. As AI answer engines compete for trust, the ability to show credible, traceable sources is becoming a core feature rather than a nice-to-have, and it adds a new layer of competition on top of traditional SEO.

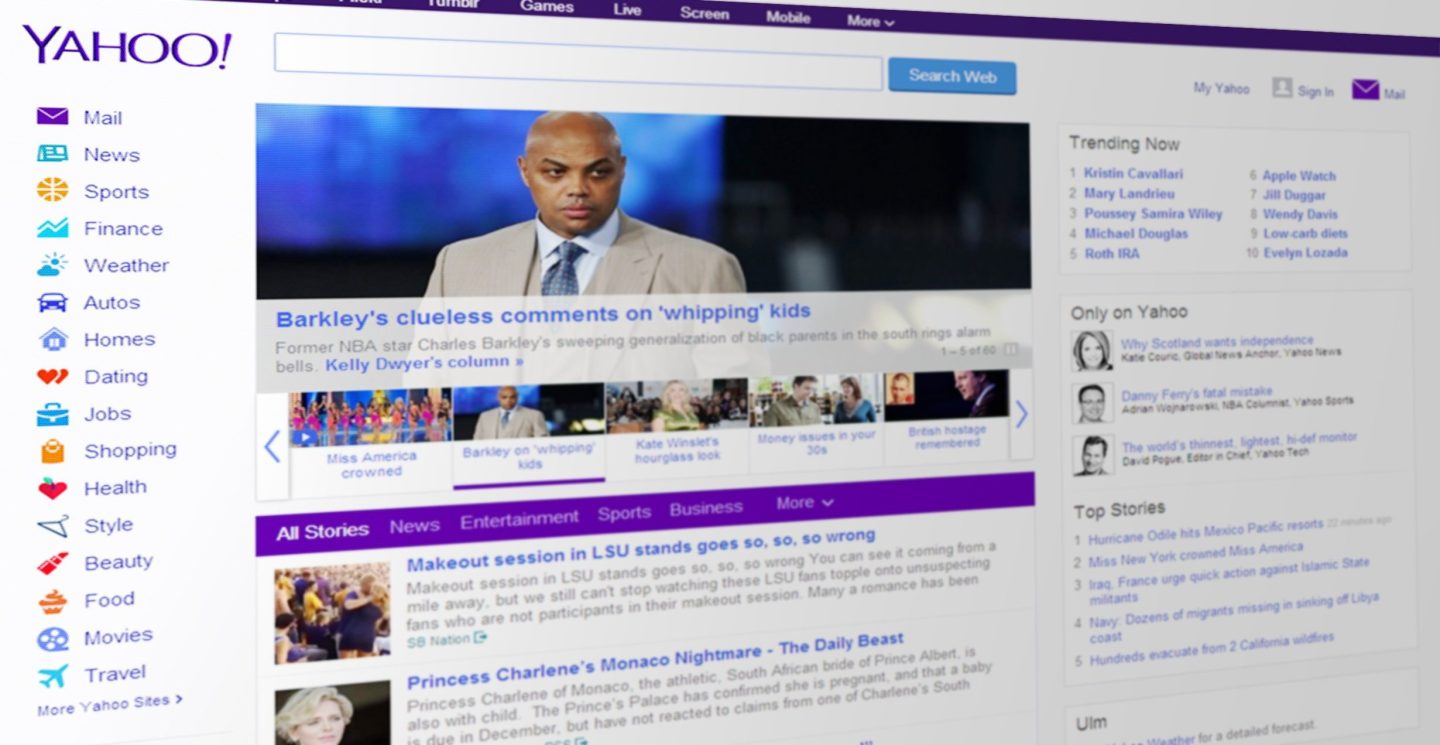

Yahoo Scout’s Bet on Source-Led AI Search

Yahoo’s Scout AI assistant is a prominent example of this source-first strategy. Launched in beta as an AI answer engine, Scout is designed to deliver concise responses with transparent sources instead of hiding the underlying web results. Built on Anthropic’s Claude and Microsoft’s Grounding with Bing, it combines public web data with Yahoo’s own network of Mail, News, Finance, Sports, publisher partners and rich user signals. Yahoo’s leadership positions Scout’s source display as its key point of difference, reflecting consumer research showing trust as the top need in AI search experiences. By integrating Scout directly into existing products rather than isolating it on a separate AI page, Yahoo is betting that familiar environments plus visible citations will encourage users to treat it as a reliable companion. This approach frames AI search transparency not just as a design choice, but as a branding strategy centered on credibility.

AI Search Optimization: Competing Beyond Google Rankings

As AI answer engines spread, brands are rethinking search strategies around being recommended, not just ranked. AI search optimization now involves influencing the knowledge and signals that large language models draw on when they construct answers. Authoritative web sources, entity recognition, structured brand data, backlinks and trusted media mentions all help determine which companies surface in AI-driven recommendations and Google AI Overviews. This is creating a parallel track to SEO, in which AI assistants and overview panels act as alternatives to the ranked results page and can capture user attention before traditional results are seen. For marketers, the implication is clear: an exclusive focus on classic keyword rankings is no longer sufficient. Brands must ensure they are present in the high-authority ecosystems that feed AI systems and that their expertise is easily interpretable by machines, with clear, consistent signals that reinforce trustworthiness and relevance across different AI platforms.
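One common way to make brand data machine-readable is schema.org markup embedded in a page as JSON-LD. The sketch below is illustrative only: it assumes a hypothetical brand ("Example Co") and placeholder URLs, and it uses the schema.org `Organization` type with its `name`, `url`, `description` and `sameAs` properties. Whether any particular AI platform reads this markup, or how much weight it gives it, is not something the sketch can establish.

```python
import json


def build_organization_jsonld(name, url, description, same_as):
    """Return a schema.org Organization description as a plain dict.

    All argument values are supplied by the caller; the ones used in the
    example below are hypothetical placeholders.
    """
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs lists other profiles of the same entity; duplicates are
        # dropped and the list is sorted so the output is stable.
        "sameAs": sorted(set(same_as)),
    }


def to_script_tag(data):
    """Serialize the dict into a JSON-LD <script> element for an HTML page."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so a stray "</script>" inside a value cannot close the tag;
    # "\/" is a valid JSON escape for "/".
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical brand details, for illustration only.
org = build_organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    description="Example Co makes illustrative widgets.",
    same_as=[
        "https://www.linkedin.com/company/example-co",
        "https://en.wikipedia.org/wiki/Example_Co",
        "https://www.linkedin.com/company/example-co",  # duplicate on purpose
    ],
)

if __name__ == "__main__":
    print(to_script_tag(org))
```

Keeping the name, URL and linked profiles identical wherever the brand appears is the point of the exercise: it gives machines one consistent entity to recognize rather than several near-matches.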

PR as an Engine for AI Visibility and Trust

The rise of AI search is pulling public relations into the center of digital discovery strategy. Services like AHOD PR Boost explicitly aim to increase AI visibility by securing placements in high-authority DA70+ publications syndicated to Apple News and Google News. These outlets are increasingly used as training and retrieval material for AI assistants, making editorial coverage a form of AI search optimization. Not all press is equal: mentions on low-quality blogs offer limited value compared with well-indexed, trusted publications that carry strong backlink and authority signals. By combining AI-optimized content, entity-aware storytelling and AI visibility tracking, PR programs are evolving into infrastructure for recommendation readiness. For brands, the message is stark: if AI systems are learning from trusted media, then earning space in those environments is no longer just about human audiences. It is also about being visible, attributable and trustworthy to machines.