What OpenAI’s Real-Time Voice Models Bring to Voice Apps

OpenAI’s new real-time voice models make voice a full application interface rather than a dictation feature. Through the OpenAI Realtime API, developers can use three specialized models: GPT-Realtime-2 for live reasoning, GPT-Realtime-Translate for live multilingual conversations, and GPT-Realtime-Whisper for low-latency, streaming speech-to-text. Rather than asking one monolithic model to do everything, OpenAI splits reasoning, translation, and transcription so teams can optimize each part of a voice workflow.

This split matters for voice app development because real conversations are messy. Users interrupt themselves, change topics, or need the system to call tools and services while they keep talking. The models are designed to keep the conversation moving, hold context over long interactions, and respond while tasks run in the background. The result is a foundation for live voice agents that can reason, translate, and transcribe in real time with less orchestration code on the developer’s side.

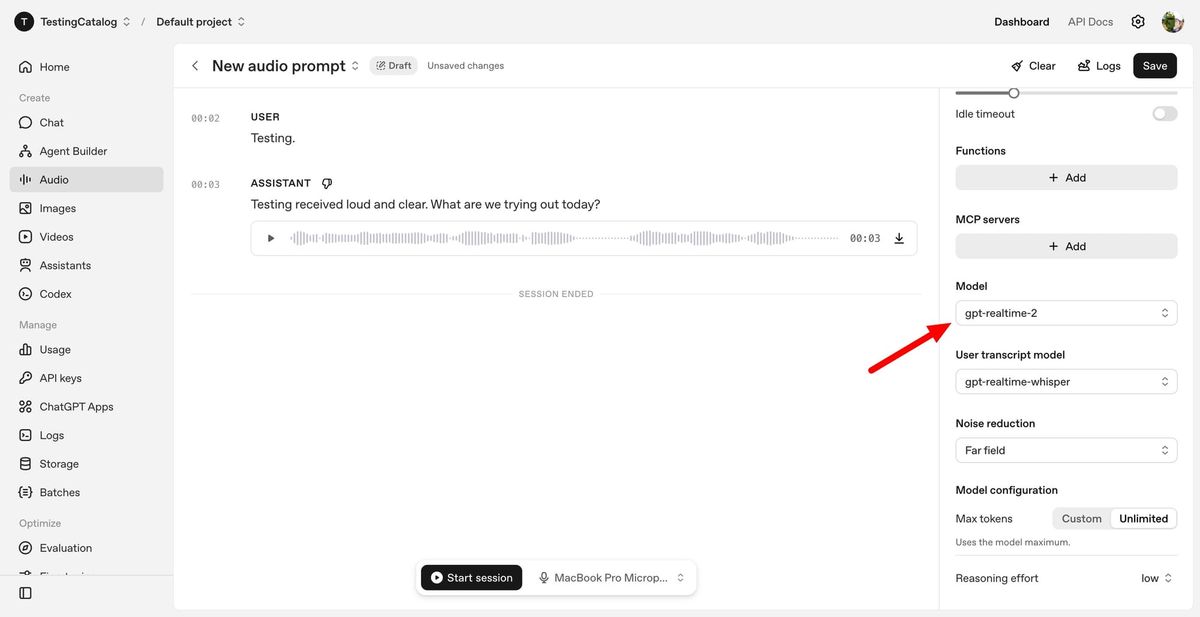

GPT-Realtime-2: GPT-5-Class Reasoning for Live Voice Agents

GPT-Realtime-2 is the reasoning engine of OpenAI’s real-time voice stack. Built with GPT-5-class reasoning, it enables voice agents that can handle complex requests, sustain long conversations, and react naturally when users interrupt or change direction. The model supports a 128K token context window, up from the previous 32K, so it can track lengthy sessions and multi-step workflows without losing the thread.

Key features include short spoken preambles such as “let me check that,” parallel tool calls that let the model query APIs or back-end systems while the conversation continues, and better failure recovery: rather than going silent, the model can explain what went wrong and suggest next steps. Developers can tune the reasoning level from minimal to xhigh, trading latency for deeper analysis when needed. This makes GPT-Realtime-2 well suited to live voice agents that coordinate tools, manage workflows, and reason in real time rather than handling only simple question-and-answer exchanges.
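The preamble, parallel tool calls, and failure recovery described above can be illustrated from the application side. The following is a minimal sketch that does not call the Realtime API: `lookup_order`, `lookup_refund_policy`, and `handle_turn` are hypothetical names, and the tools are simulated with short sleeps. It only shows the pattern of speaking a preamble first, running tools concurrently, and turning a tool failure into an explanation instead of silence.

```python
import asyncio
from typing import Dict, List


# Hypothetical stand-ins for back-end tools; real tools would call APIs.
async def lookup_order(order_id: str) -> Dict[str, str]:
    await asyncio.sleep(0.05)  # simulate network latency
    return {"order_id": order_id, "status": "shipped"}


async def lookup_refund_policy(region: str) -> Dict[str, object]:
    await asyncio.sleep(0.05)  # simulate network latency
    if region not in {"US", "EU"}:
        raise ValueError(f"no policy on file for region {region!r}")
    return {"region": region, "refund_days": 30}


async def handle_turn(order_id: str, region: str) -> List[str]:
    """Return, in order, the lines the agent would speak for this turn."""
    # The preamble is queued before any tool has finished.
    spoken = ["Let me check that."]

    # Run both tools concurrently. return_exceptions=True means a failed
    # tool comes back as an exception object instead of aborting the turn.
    order, policy = await asyncio.gather(
        lookup_order(order_id),
        lookup_refund_policy(region),
        return_exceptions=True,
    )

    if isinstance(order, Exception):
        spoken.append("I couldn't find that order. Could you repeat the number?")
    else:
        spoken.append(f"Order {order['order_id']} is {order['status']}.")

    if isinstance(policy, Exception):
        # Failure recovery: explain the problem and offer a next step.
        spoken.append(
            "I couldn't load the refund policy for your region, "
            "so I can connect you with a human agent."
        )
    else:
        spoken.append(f"Refunds are accepted within {policy['refund_days']} days.")

    return spoken
```

Calling `asyncio.run(handle_turn("A1", "US"))` returns the preamble followed by two answers, while an unsupported region replaces the second answer with an explanation. In a real agent the model itself decides when to speak and which tools to call; this sketch only mirrors that behavior in plain Python.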

GPT-Realtime-Translate: Live Voice Translation with Context Awareness

GPT-Realtime-Translate handles live voice translation across more than 70 input languages and 13 output languages. It is built for scenarios where people speak continuously and the system must keep up without pausing the conversation. Beyond word-for-word substitution, the model aims to preserve meaning, context, and tone while handling regional pronunciations, fast speech, and sudden topic changes.

For developers, this opens the door to translation features in customer support, sales, education, events, travel, and creator platforms. You can route incoming audio streams to GPT-Realtime-Translate, receive translated audio or text in near real time, and optionally pair it with a transcription feed. That supports translation overlays for calls, multilingual support lines, and real-time language bridges inside existing apps. Because translation is separated from reasoning, teams can decide when translation alone is enough and when segments should also go to GPT-Realtime-2 for deeper analysis or workflow control.
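Because the model accepts many more input languages than it produces as output, an app may want to check a requested language pair before opening a translation stream. The following is a minimal sketch of such a check. The language sets are small placeholders rather than the real supported lists, and `TranslationPlan` and `plan_translation` are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Placeholder sets for illustration only. The real supported-language
# lists should be taken from OpenAI's documentation.
INPUT_LANGS = {"en", "es", "fr", "de", "ja", "hi", "pt", "sw"}
OUTPUT_LANGS = {"en", "es", "fr", "de", "ja"}


@dataclass(frozen=True)
class TranslationPlan:
    source: str
    target: str
    supported: bool
    reason: Optional[str] = None  # set only when the pair is rejected


def plan_translation(source: str, target: str) -> TranslationPlan:
    """Decide whether a source/target language pair can be translated."""
    source, target = source.lower(), target.lower()
    if source not in INPUT_LANGS:
        return TranslationPlan(source, target, False, "unsupported input language")
    if target not in OUTPUT_LANGS:
        return TranslationPlan(source, target, False, "unsupported output language")
    if source == target:
        return TranslationPlan(
            source, target, False, "source and target are the same; transcribe instead"
        )
    return TranslationPlan(source, target, True)
```

With these placeholder sets, `plan_translation("sw", "en")` is supported while `plan_translation("en", "sw")` is rejected, which reflects the asymmetry between input and output coverage.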

GPT-Realtime-Whisper: Streaming Real-Time Transcription for Voice Workflows

GPT-Realtime-Whisper provides low-latency, streaming speech-to-text for applications that need continuous real-time transcription. Instead of waiting for a speaker to finish, the model transcribes audio as people talk, which suits live captions, meeting notes, webinar subtitles, and any workflow where text must appear while the conversation is still in progress.

For voice app development, GPT-Realtime-Whisper can serve as the input layer for downstream systems. Developers can feed its transcripts into GPT-Realtime-2 for reasoning, into analytics pipelines for summarization, or into search indexes for later retrieval. Because it is optimized for streaming, it reduces the lag between spoken words and visible text, which matters most for users who depend on live captions. Combined with GPT-Realtime-Translate, it can also support dual workflows, such as producing both same-language transcripts and translated text for multilingual audiences while the session is ongoing.
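The idea of using transcription as an input layer for several downstream systems can be sketched as a small fan-out. This is a minimal illustration, not the Realtime API's event format: `TranscriptEvent` is a hypothetical shape with a text field and a flag marking whether the segment is final, and `TranscriptFanOut` is a hypothetical helper. Caption sinks see every update, while sinks such as search indexes or reasoning calls only receive finalized segments.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class TranscriptEvent:
    """Hypothetical event shape: a partial or final piece of transcript."""
    text: str
    is_final: bool


class TranscriptFanOut:
    """Forward transcript events to caption sinks and final-only sinks."""

    def __init__(self) -> None:
        self._caption_sinks: List[Callable[[str], None]] = []
        self._final_sinks: List[Callable[[str], None]] = []

    def on_caption(self, sink: Callable[[str], None]) -> None:
        # Caption sinks receive every update, partial or final.
        self._caption_sinks.append(sink)

    def on_final(self, sink: Callable[[str], None]) -> None:
        # Final sinks receive only completed segments.
        self._final_sinks.append(sink)

    def feed(self, events: Iterable[TranscriptEvent]) -> None:
        for event in events:
            for sink in self._caption_sinks:
                sink(event.text)
            if event.is_final:
                for sink in self._final_sinks:
                    sink(event.text)


# Usage: lists stand in for a caption overlay and a search index.
captions: List[str] = []
indexed: List[str] = []
fan_out = TranscriptFanOut()
fan_out.on_caption(captions.append)
fan_out.on_final(indexed.append)
fan_out.feed([
    TranscriptEvent("hello", False),
    TranscriptEvent("hello world", True),
])
```

Keeping partial updates away from the final-only sinks avoids indexing or reasoning over text that the transcriber may still revise.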

Designing Real-Time Voice Apps: Practical Use Cases and Architecture Tips

The split design of OpenAI’s real-time voice models encourages developers to think in terms of voice pipelines. A typical architecture might send microphone audio to GPT-Realtime-Whisper for transcription, route key segments to GPT-Realtime-2 for reasoning and tool use, and optionally pass streams to GPT-Realtime-Translate for live translation. This lets teams apply high reasoning effort only where it is needed, keeping latency and cost under tighter control.

Use cases are emerging across business workflows: voice-to-action apps where users issue spoken commands and GPT-Realtime-2 orchestrates tools; systems-to-voice dashboards that narrate system status; and voice-to-voice assistants that translate, reason, and respond in real time. Developers building live call flows, in-car assistants, or productivity tools can use the Realtime API’s streaming audio support to maintain continuous interaction. By combining real-time transcription, live voice translation, and GPT-5-class reasoning, these models make it feasible to ship voice applications that reason and act while people are still speaking.
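The routing decision in such a pipeline can be sketched as a pure function over transcript segments. This is a minimal sketch under stated assumptions: only the "minimal" and "xhigh" effort levels are named above, so the intermediate levels are assumed; the keyword list is a hypothetical stand-in for whatever intent detection an app actually uses; and `Route` and `route_segment` are illustrative names, not part of any OpenAI SDK.

```python
from dataclasses import dataclass
from typing import Optional

# Only "minimal" and "xhigh" are named in the text above; the levels in
# between are assumed for illustration.
EFFORT_LEVELS = ("minimal", "low", "medium", "high", "xhigh")

# Hypothetical trigger words suggesting the user wants an action taken.
ACTION_WORDS = {"book", "cancel", "refund", "schedule", "transfer"}


@dataclass(frozen=True)
class Route:
    reason: bool                 # send the segment to the reasoning model?
    effort: Optional[str]        # reasoning effort, if reasoning is used
    translate_to: Optional[str]  # target language, if translation is needed


def route_segment(text: str, speaker_lang: str, listener_lang: str) -> Route:
    """Decide where one transcribed segment should go next."""
    words = {w.strip(".,!?").lower() for w in text.split()}
    hits = len(words & ACTION_WORDS)

    # Spend more reasoning effort only when the request looks compound.
    if hits == 0:
        effort = None
    elif hits == 1:
        effort = "low"
    else:
        effort = "high"

    # Translate only when speaker and listener languages differ.
    translate_to = listener_lang if listener_lang != speaker_lang else None
    return Route(reason=hits > 0, effort=effort, translate_to=translate_to)
```

For example, `route_segment("Please cancel my order.", "en", "es")` requests low-effort reasoning plus translation to Spanish, while small talk between two English speakers is routed nowhere beyond transcription. Keeping the decision in one small function makes the latency and cost trade-off easy to adjust and test.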