The ‘Data Wall’: Why Robots Are Starved of Real-World Experience

For decades, robot builders focused on motors, sensors, and metal. Today, the real bottleneck is data, not hardware. This ‘Data Wall’ describes the point where gathering and structuring enough high-quality interaction data becomes harder than designing the robot itself. Most physical AI models still rely on teleoperation or motion capture, in which humans either drive robots directly or perform tasks while their movements are recorded. Synapath AI points out that these approaches are slow, expensive, and locked to a single machine, making it nearly impossible to build a truly massive robot learning dataset. Worse, standard visual methods often miss fine-grained hand and object dynamics, leaving robots with an incomplete view of how the physical world actually works. Breaking the Data Wall requires a new kind of robotics data foundation: one that turns abundant raw video into structured, robot-ready experience at scale.

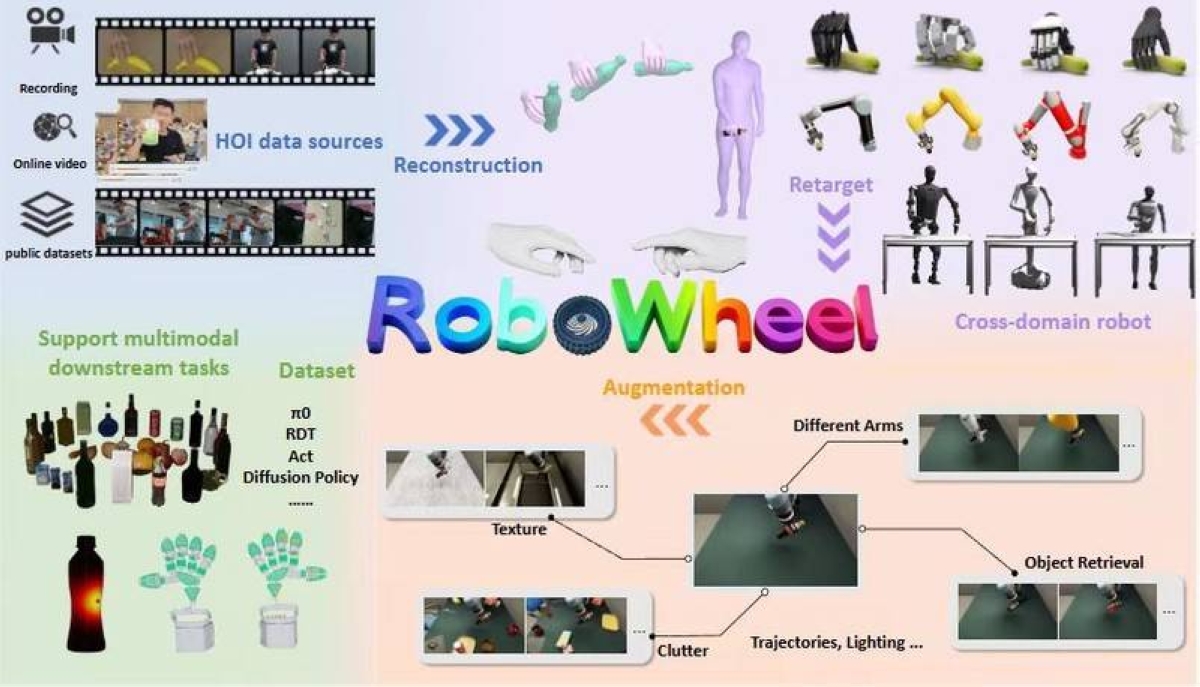

From Raw Footage to Robotics Data Foundation

A modern robotics data foundation is built on continuous streams of video, not isolated lab recordings. Cameras on robots, humans, and simulators capture everyday activities: reaching, grasping, opening, pouring, and navigating. AI robotics analytics pipelines then transform this unstructured footage into structured, queryable data. Synapath AI’s HORA project exemplifies this shift. Using its SynaData pipeline, the company reconstructs hand–object interactions from ordinary human-activity videos with sub-centimeter precision, keeping trajectory errors within ±0.5 cm. That level of detail shows exactly how fingers wrap around handles or how tools are oriented in space. Each clip is segmented into useful actions, labeled with object identities and poses, and indexed across modalities such as RGB-D streams and tactile signals. The result is a rich robot learning dataset that can be reused across many tasks, models, and robot types instead of being trapped in a single hardware setup.
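The segment-label-index step described above can be pictured as a record type plus a lookup structure. The sketch below is a minimal, hypothetical illustration, not SynaData's actual schema or API: `InteractionSegment`, `SegmentIndex`, and `max_position_error_m` are invented names, positions are assumed to be in meters, and the ±0.5 cm figure is interpreted here as a bound on the largest per-frame Euclidean distance between a reconstructed trajectory and a reference, since the source does not define the metric.

```python
import math
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical record for one segmented action clip. Field names are
# illustrative assumptions, not the real SynaData schema.
@dataclass(frozen=True)
class InteractionSegment:
    clip_id: str
    start_frame: int
    end_frame: int
    action: str               # e.g. "grasp", "pour"
    object_name: str          # object identity label
    modalities: frozenset     # e.g. frozenset({"rgb", "depth", "tactile"})
    object_positions: tuple   # one (x, y, z) per frame, in meters (assumed)


class SegmentIndex:
    """In-memory inverted index from labels to segments, so clips become queryable."""

    def __init__(self):
        self._all = []
        self._by_action = defaultdict(list)
        self._by_object = defaultdict(list)

    def add(self, seg):
        self._all.append(seg)
        self._by_action[seg.action].append(seg)
        self._by_object[seg.object_name].append(seg)

    def query(self, action=None, object_name=None, modality=None):
        # Use a posting list when a label filter is given, then filter on the rest.
        if action is not None:
            candidates = self._by_action.get(action, [])
        elif object_name is not None:
            candidates = self._by_object.get(object_name, [])
        else:
            candidates = self._all
        return [
            s for s in candidates
            if (object_name is None or s.object_name == object_name)
            and (modality is None or modality in s.modalities)
        ]


def max_position_error_m(estimated, reference):
    """Largest per-frame Euclidean distance between two equal-length 3-D trajectories."""
    if len(estimated) != len(reference):
        raise ValueError("trajectories must have the same length")
    return max((math.dist(p, q) for p, q in zip(estimated, reference)), default=0.0)


TOLERANCE_M = 0.005  # 0.5 cm, the error bound quoted for HORA (metric assumed)

if __name__ == "__main__":
    index = SegmentIndex()
    index.add(InteractionSegment(
        "clip_001", 120, 180, "grasp", "mug",
        frozenset({"rgb", "depth", "tactile"}),
        ((0.100, 0.000, 0.300), (0.120, 0.010, 0.280)),
    ))
    print(len(index.query(action="grasp", modality="tactile")))  # 1
    err = max_position_error_m([(0.10, 0.0, 0.30)], [(0.10, 0.0, 0.303)])
    print(err <= TOLERANCE_M)  # True
```

An inverted index is the simplest way to make "queryable" concrete: a training job can pull every tactile-annotated grasp without rescanning raw video. A production system would more likely use a database or columnar store.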

How Video-Based AI Training Teaches Physical Skills

Once video has been converted into a structured dataset, it becomes fuel for video-based AI training. In HORA, more than 150,000 high-quality trajectories link what the camera sees to how hands and objects actually move, including precise 6-DoF object poses and tactile information. This allows physical AI models to learn skills like grasping, in-hand manipulation, and tool use across many contexts. Because the data is hardware-agnostic and designed for cross-embodiment learning, the same dataset can train humanoids, dexterous hands, or multi-axis industrial arms. Learning is no longer tied to one lab’s robot; it generalizes across morphologies and environments. With enough diverse trajectories, models can infer robust control policies: how to adapt grasps to different object shapes, how to orient parts for assembly, or how to recover from small errors. In effect, the robotics data foundation becomes a shared motor memory of the physical world.
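The claim that one dataset can serve humanoids, dexterous hands, and industrial arms rests on storing motion in a frame that belongs to no single robot. A minimal sketch, assuming trajectories are stored as world-frame 6-DoF poses (a position plus a unit quaternion `(w, x, y, z)`): each robot re-expresses the same poses in its own base frame before its controller uses them. `Pose6D` and `to_robot_frame` are hypothetical names, and mapping these poses onto a particular arm or hand (inverse kinematics, grasp retargeting) is outside the scope of the sketch.

```python
import math
from dataclasses import dataclass


def quat_mul(a, b):
    """Hamilton product of quaternions given as (w, x, y, z)."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )


def quat_conj(q):
    w, x, y, z = q
    return (w, -x, -y, -z)


def rotate(q, v):
    """Rotate 3-vector v by unit quaternion q: v' = q * (0, v) * conj(q)."""
    _, x, y, z = quat_mul(quat_mul(q, (0.0, *v)), quat_conj(q))
    return (x, y, z)


@dataclass(frozen=True)
class Pose6D:
    position: tuple   # (x, y, z) in meters
    rotation: tuple   # unit quaternion (w, x, y, z)

    def compose(self, other):
        """Chain transforms: `other` is given in this pose's frame; result is in the parent frame."""
        p = rotate(self.rotation, other.position)
        return Pose6D(tuple(a + b for a, b in zip(self.position, p)),
                      quat_mul(self.rotation, other.rotation))

    def inverse(self):
        """Inverse rigid transform: rotation conj(q), translation -(R^T t)."""
        q_inv = quat_conj(self.rotation)
        p = rotate(q_inv, self.position)
        return Pose6D(tuple(-c for c in p), q_inv)


def to_robot_frame(world_T_base, world_poses):
    """Re-express world-frame object poses in one robot's base frame (hypothetical helper)."""
    base_T_world = world_T_base.inverse()
    return [base_T_world.compose(p) for p in world_poses]


if __name__ == "__main__":
    half = math.sqrt(0.5)
    # Robot base 1 m along world x, rotated +90 degrees about z.
    world_T_base = Pose6D((1.0, 0.0, 0.0), (half, 0.0, 0.0, half))
    mug = Pose6D((1.0, 1.0, 0.0), (1.0, 0.0, 0.0, 0.0))
    # Close to (1.0, 0.0, 0.0), up to floating-point rounding.
    print(to_robot_frame(world_T_base, [mug])[0].position)
```

Keeping the stored data in a shared world frame and pushing the per-robot transform to load time is what lets the same trajectory file feed very different bodies; only `world_T_base` changes from one robot to the next.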

Open-Source Tools Like HORA and the New Data Stack for Robotics

Building this kind of data infrastructure is expensive and complex, which is why open-sourcing HORA matters for the wider robotics community. Synapath AI positions itself as a neutral data infrastructure provider, standardizing how unstructured video is turned into structured, reusable training data. By releasing HORA and the ideas behind its SynaData pipeline, the company gives startups, universities, and labs (especially those without massive compute budgets) a way to build on a common robotics data foundation instead of reinventing it. This mirrors broader AI data infrastructure trends in cloud and data center ecosystems, where sophisticated analysis and protocol tools are becoming essential to move and inspect data at extreme speeds. In robotics, the parallel is clear: those who control scalable data pipelines and high-fidelity robot learning datasets will gain a durable edge, and teams in emerging markets can now plug into global, open physical AI resources rather than starting from scratch.