From Coding Assistants to Autonomous Agents

AI code generation has moved beyond autocomplete-style helpers into a new phase: autonomous agents. Instead of waiting for you to type a function, these systems take a goal stated in natural language and then plan, write, test, and ship code with minimal human input. This is more than a productivity boost for developers. It is a shift in who can build software at all. Today’s agentic tools can spin up apps, connect services, and even manage research or creative workflows without traditional programming. That makes them a bridge between classic software development and no-code development, where plain English replaces complex syntax. For professionals, AI-powered automation means routine coding and integration work can be delegated to machines, freeing time for architecture, strategy, and quality control. For non-programmers, it transforms software from something you buy or hire out into something you can instruct directly.

Meta’s Hatch: An Always-On Digital Operator for Everyday Tasks

Meta’s upcoming Hatch agent illustrates how autonomous agents are being designed for mainstream use. Clues buried in Meta’s products suggest that Hatch is being prepared behind a waitlist, which points to a gradual, controlled rollout. Unlike simple chatbots, Hatch is designed to run end-to-end tasks such as image and video generation, shopping flows, learning sessions, research workloads, scheduled tasks, and file generation. It is expected to tap deeply into services like Instagram and Facebook, turning feed exploration, creator discovery, and shopping research into agent-driven workflows that run continually on a user’s behalf. Strategically, Meta appears to be aligning Hatch with its broader vision of systems that work day and night toward user goals. That puts it in the same scope as enterprise-grade autonomous agents, but rooted in social platforms that billions of people already use. For developers, Hatch hints at future APIs and ecosystems. For everyday users, it suggests an on-demand digital operator managing complex tasks across their online life.

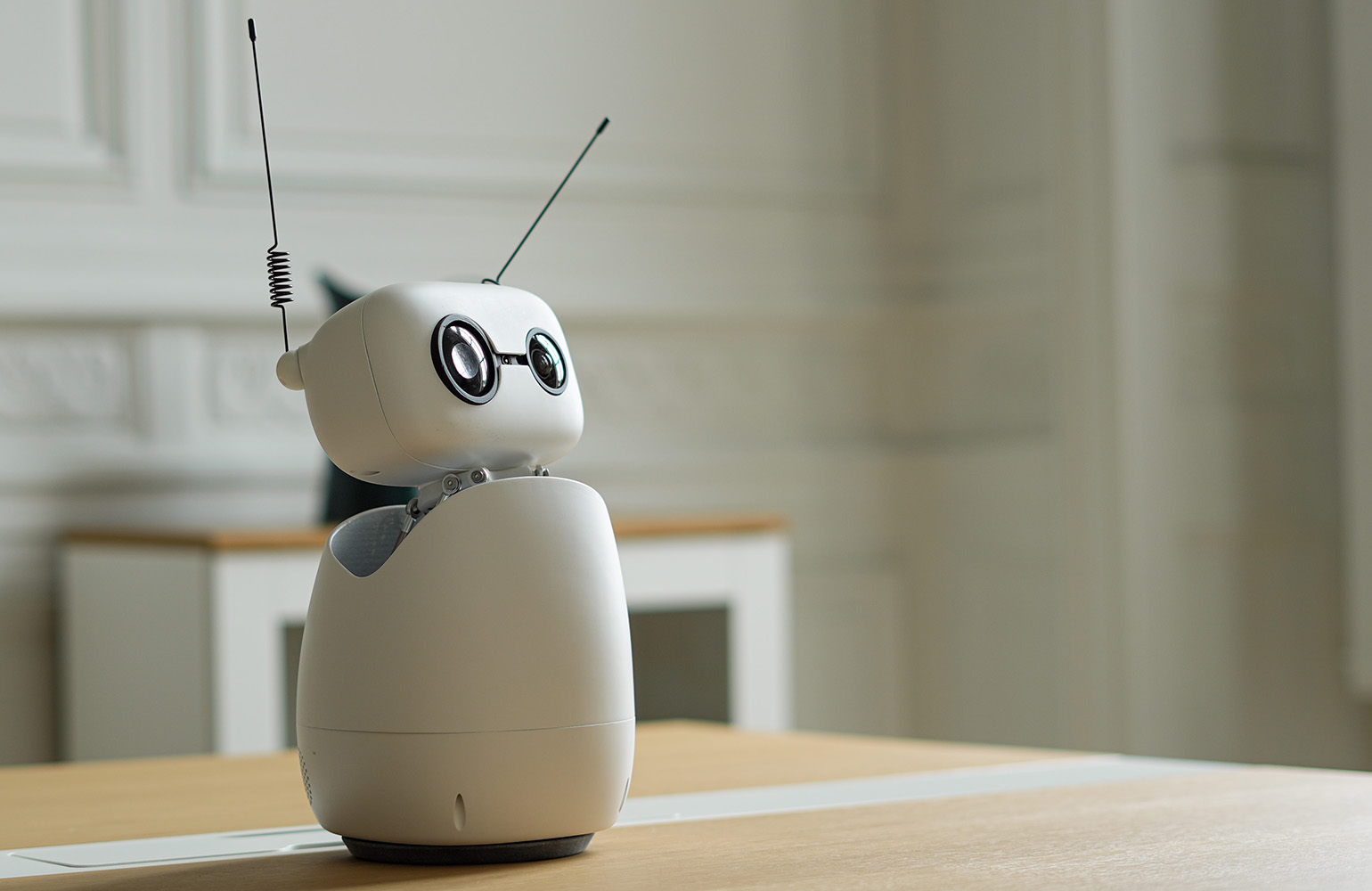

Hugging Face’s Reachy Mini: Coding Robots with Plain English

Hugging Face’s agentic toolkit for the Reachy Mini robot shows what happens when AI code generation meets physical devices. Instead of installing SDKs or learning robotics frameworks, users simply describe the behavior they want in plain English. An AI agent then writes, tests, and ships the code directly to the desktop robot, often in under an hour and without the user writing a single line of code. The company’s goal is to remove three historical barriers in robotics: specialist expertise, expensive custom hardware, and long integration cycles. Each barrier has a direct answer: the AI agent supplies the expertise, the robot is a compact, open-source platform anyone can buy, and deployment happens through a streamlined, one-click web flow. This is AI-powered automation in a tangible form: non-technical users can build interactive companions, tutors, or assistants that respond to voice, gestures, and context, all without ever opening a code editor.

What This Means for Non-Programmers: From Ideas to Apps

For non-programmers, these tools turn software and robotics development into a conversational, no-code experience. Joel Cohen, a retired marketing executive with no robotics background, used Hugging Face’s toolkit to build a voice-controlled co-facilitator for his CEO peer groups. By describing his needs in plain English, he created a robot that wakes on a cue phrase, greets participants by name, runs different facilitation modes, asks probing questions, and summarizes key themes. He never had to handle SDKs, IDEs, or wiring diagrams. The same conversational workflow extends to sharing and remixing: users can browse an app store of Reachy Mini projects, fork one they like, ask the AI agent to change it, and publish a new version in minutes. The result is a new kind of creative skill. Instead of learning to code, people learn to specify goals, constraints, and behaviors clearly enough for autonomous agents to implement them.

Implications for Developers and the Future of Work

For software professionals, autonomous agents are less a replacement than a reshaping of the job. As agents become capable of writing, testing, and deploying code, human developers shift toward defining architectures, governing security and reliability, curating prompts, and reviewing agent-generated changes. AI-powered automation will likely absorb repetitive integration work, while developers take on roles closer to product design and systems thinking. At the same time, non-technical colleagues gain the direct ability to prototype tools, dashboards, and robotic behaviors through no-code interfaces. That raises new questions about quality control, maintainability, and ethical use, especially as agents plug into social feeds, shopping flows, and real-world devices. The next wave of software creation will be collaborative: humans frame problems and constraints, autonomous agents handle implementation details, and developers and non-programmers alike share a common, conversational interface to build what they need.