From Inner Loop to Orchestration Layer: The Future of IDEs

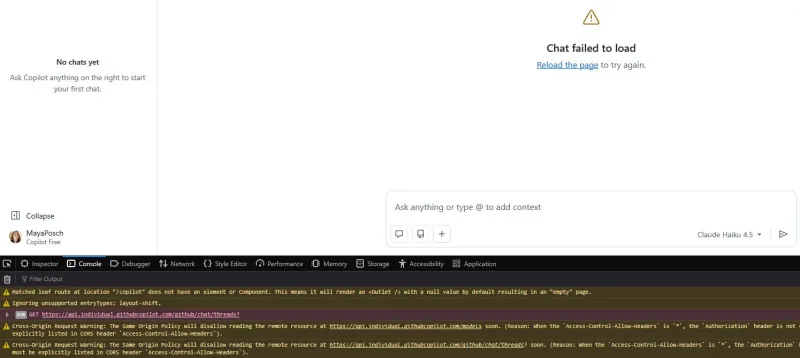

For decades, the IDE was the unquestioned home base: open a file, edit, build, debug, repeat. That tight inner loop shaped how developer workflow tools were designed. Now a different pattern is emerging. Agentic AI coding tools can plan changes, rewrite multiple files, run tests, and even submit pull requests with minimal human prompting. On platforms such as Conductor, Claude Code Web, and GitHub Copilot Agent, developers spend more of their time in a control plane that orchestrates agents than in any single editor window. Even IDE-like products are repositioning themselves. Cursor’s Glass interface, for example, treats agent management as the core experience, with the editor demoted to a detail view for when you need to inspect or tweak specific lines. This shift does not kill the IDE, but it does move the centre of gravity away from manual line-by-line editing and toward orchestration and workflow design.

Pair Programming With an LLM: Chat as Your New Coding Desk

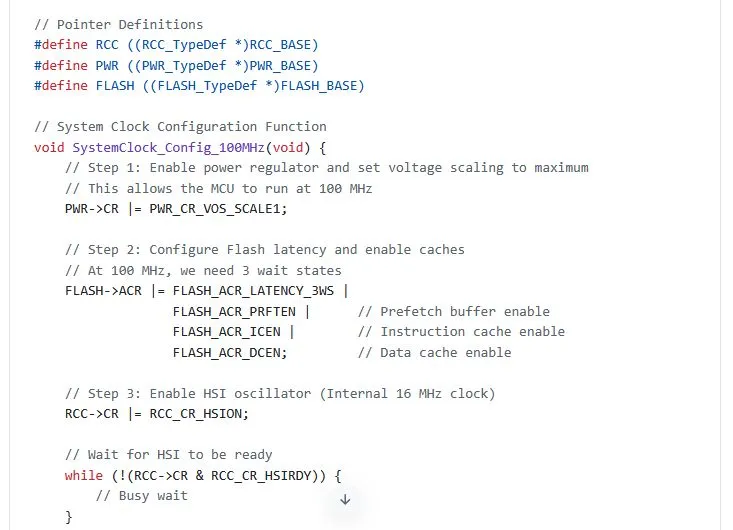

Pair programming no longer has to mean two humans at one keyboard. Many developers already treat an LLM coding assistant as a chat-based pair programmer: discussing architecture, asking for alternative designs, or iterating on refactors in natural language. A Hackaday experiment documented what this looks like in practice, using an LLM for C++ embedded STM32 work and Ada network development. The author, a self-described introverted lone developer, explored how a chatbot could help without the social friction of traditional pair programming. The experience was mixed. The assistant could suggest structures and boilerplate, but it also produced incorrect or mismatched output at times, leaving the human to review and correct the generated code. Still, the core interaction pattern was clear: rather than starting from a blank editor buffer, developers describe their intent to a chatbot and let it generate or adjust the code.

What Agentic AI Coding Really Means

Agentic AI coding moves beyond autocomplete into systems that behave more like autonomous teammates. Instead of responding to isolated prompts, AI agents can take a goal, plan the steps, and execute tasks end-to-end with limited guidance. In software development, that might mean an agent that reviews an issue, edits multiple files, updates dependencies, runs tests, and prepares a merge request. In more advanced setups, multiple agents collaborate: one focuses on code generation and refactoring, another on testing, another on deployment, all coordinated through an orchestration layer. Vendors such as Zencoder argue that the big productivity gains come from this multi-agent collaboration, not from a single smart suggestion box. These agents can integrate directly into CI/CD and version control, continuously monitoring pipelines, prioritising risky areas, and even generating new test cases. Developers shift from manually executing each step to supervising, constraining, and auditing what the agents do inside the codebase.

AI-First Developer Platforms: Beyond Code Suggestions

The future of IDEs is also being shaped by AI-native platforms that embed intelligence deeply into the stack. Tuya Smart’s upgraded Hey Tuya platform, unveiled at its Global Developer Summit, is one example. Rather than just sprinkling autocomplete into an editor, Hey Tuya expands AI assistance across smart home integration and everyday tools like Google Mail, Calendar, and Docs. Its Vibe Coding feature allows creators to build AI plus IoT applications using natural language instead of traditional code, effectively turning prompts into running services. For Tuya, this widens the developer base and pushes usage toward higher-value software and services. For developers, it hints at a future where the primary interface is a conversational or visual orchestration layer. You describe behaviours, workflows, and integrations, while AI agents generate and manage the underlying code, infrastructure, and glue logic behind the scenes.

Trade-Offs and Next Steps for Malaysian Developers

For working developers in Malaysia, these changes bring both opportunities and risks. AI pair programming and agentic AI coding can accelerate routine tasks: scaffolding services, refactoring legacy modules, or drafting tests. But relying too heavily on an LLM coding assistant can erode your familiarity with the codebase and make subtle bugs harder to catch. There are also security and privacy concerns: sending proprietary code or customer data to external models may breach company policies or regulations. To experiment safely, start with non-sensitive projects, keep agents read-only on critical repos at first, and enforce guardrails like code review and automated testing around any AI-generated changes. Skills that remain essential include strong fundamentals, system design, debugging, and the ability to evaluate AI output critically. To future-proof your workflow, treat AI tools as orchestration partners: learn their strengths, design robust review pipelines, and stay comfortable switching between chat, agents, and traditional editors as needed.
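The guardrails above can be written down as an explicit merge policy. The Python sketch below is one possible shape, under stated assumptions: the repo names, the `ProposedChange` fields, and the `merge_blockers` function are all hypothetical, and a real team would more likely enforce the same rules through its version control platform's branch protection and CI settings.

```python
from dataclasses import dataclass
from typing import List, Set

# Hypothetical list of critical repos where agents may read but not write.
READ_ONLY_FOR_AGENTS: Set[str] = {"payments-core", "customer-data"}


@dataclass(frozen=True)
class ProposedChange:
    """Minimal description of a change waiting to be merged (illustrative)."""
    repo: str
    author_is_agent: bool
    tests_passed: bool
    human_reviewed: bool


def merge_blockers(change: ProposedChange) -> List[str]:
    """Return the reasons a change may not merge; an empty list means go."""
    blockers: List[str] = []
    if change.author_is_agent and change.repo in READ_ONLY_FOR_AGENTS:
        blockers.append("agent is read-only on this repo")
    if not change.tests_passed:
        blockers.append("automated tests have not passed")
    if change.author_is_agent and not change.human_reviewed:
        blockers.append("AI-generated change needs human review")
    return blockers


if __name__ == "__main__":
    change = ProposedChange(
        repo="payments-core",
        author_is_agent=True,
        tests_passed=True,
        human_reviewed=False,
    )
    for reason in merge_blockers(change):
        print("blocked:", reason)
```

Returning a list of reasons instead of a single yes or no keeps the policy auditable: the developer can see exactly which guardrail stopped an agent's change and decide whether to loosen it as trust grows.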