Onboard Autonomy Redefines Drone Target Lock

Autonomous drone tracking is rapidly moving from concept to field capability as AI processors migrate onto the airframe itself. Maris-Tech’s Jupiter platform is a clear example, bringing AI target lock-on directly to unmanned systems so they can follow designated objects without constant operator input. By embedding intelligent video engines and compact computing modules on the drone, the platform maintains stable lock-on even as targets move unpredictably or visibility changes. This trend is reshaping how operators manage surveillance missions: rather than manually steering cameras and continuously clicking to reacquire a subject, they can simply designate a target and let the onboard system handle tracking. The result is more reliable autonomous surveillance drones that can sustain focus over longer mission windows, reduce human fatigue, and keep situational awareness high even in complex environments where multiple moving objects compete for attention.

Real-Time Drone Processing Cuts Latency and Operator Workload

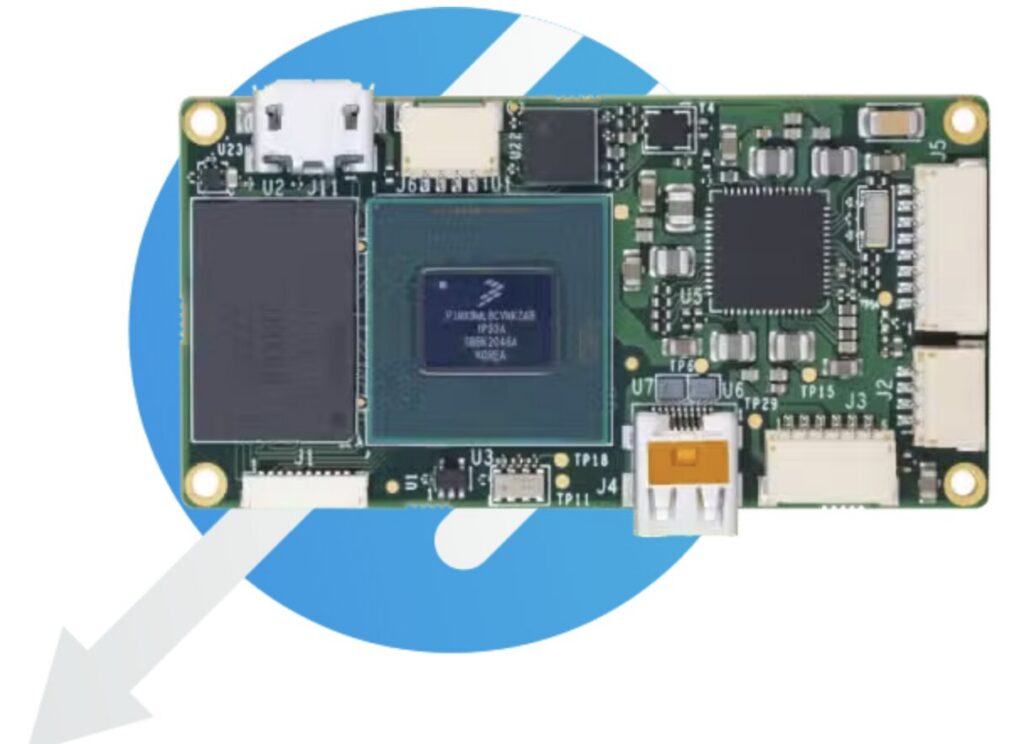

The shift toward real-time drone processing is central to making AI target lock-on practical in the field. Maris-Tech’s Jupiter family integrates video compression, multi-channel streaming, and onboard analytics to keep latency low while managing several camera feeds simultaneously. Jupiter-Drones can stream live video from two cameras, while Jupiter-AI adds a dedicated Hailo-8 processor for automated detection and tracking across multiple channels. For micro and constrained platforms, Jupiter-Nano and Jupiter Mini bring similar capabilities in smaller, power-efficient packages. This architecture enables the drone to analyze scenes locally, decide which targets to prioritize, and maintain lock without waiting for commands from a remote ground station. As a result, operators can supervise more platforms at once, intervene only when needed, and rely on autonomous surveillance drones to respond faster to new threats or opportunities than a human-controlled system could realistically achieve.
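To make the idea of holding lock locally more concrete, here is a minimal sketch of one common approach: matching each frame's detections to the designated target by bounding-box overlap (intersection over union) and coasting through a few missed frames before declaring the lock lost. This is an illustration only, not Maris-Tech's method. The `TargetLock` class, its thresholds, and the box format are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple

# Hypothetical box format: (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


@dataclass
class TargetLock:
    """Follows one designated box across frames (illustrative values)."""
    box: Box
    min_iou: float = 0.3   # assumed overlap needed to accept a match
    max_missed: int = 10   # assumed frames to coast before dropping lock
    missed: int = 0

    @property
    def locked(self) -> bool:
        return self.missed <= self.max_missed

    def update(self, detections: Sequence[Box]) -> bool:
        """Feed one frame of detections; returns True while lock is held."""
        best = max(detections, key=lambda d: iou(self.box, d), default=None)
        if best is not None and iou(self.box, best) >= self.min_iou:
            self.box = best      # follow the target to its new position
            self.missed = 0
        else:
            self.missed += 1     # occluded or out of view: keep last box
        return self.locked
```

An operator would designate the target once, for example `lock = TargetLock(box=(0, 0, 10, 10))`, and the onboard loop would then call `lock.update(detections)` for every frame. A fielded tracker would typically add motion prediction and appearance matching, which this sketch leaves out.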

High-Accuracy Targeting in Contested Electronic Warfare Environments

While onboard autonomy is critical, precision still depends on knowing exactly where a target is on the ground. BAE Systems GXP and Vantor are addressing this by bringing high-accuracy targeting to new drone platforms that must operate amid GPS spoofing, jamming, and degraded sensors. Vantor’s Raptor software suite, integrated into the GXP ecosystem, georegisters full-motion video from a drone camera against 3D terrain data in real time. Its Raptor Sync capability injects corrected KLV metadata directly into the video stream, overriding inaccurate telemetry and delivering absolute ground coordinates accurate to better than 3 meters. Even when inertial systems drift or GPS is unreliable, analysts can therefore stay confident in the coordinates used for intelligence and targeting workflows. In effect, it closes the loop between what the drone sees and where that target actually is, a prerequisite for any weapon-quality application.
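As a rough illustration of what overriding telemetry in a KLV stream involves, the sketch below replaces the frame-center latitude and longitude items in a decoded metadata set and re-encodes them as tag-length-value triplets. It is not Vantor's implementation. The tag numbers (23 and 24) and the signed 32-bit angle mapping reflect my reading of MISB ST 0601 and should be checked against the standard; the function names are hypothetical, and the 16-byte universal key, timestamp, and checksum that a complete packet requires are deliberately omitted.

```python
import struct
from typing import Dict

# Assumed MISB ST 0601 tag numbers; verify against the standard before use.
TAG_FRAME_CENTER_LAT = 23
TAG_FRAME_CENTER_LON = 24

INT32_MAX = 0x7FFFFFFF


def encode_ber_length(length: int) -> bytes:
    """BER length: short form below 128, long form otherwise."""
    if length < 0:
        raise ValueError("length must be non-negative")
    if length < 128:
        return bytes([length])
    n = (length.bit_length() + 7) // 8
    return bytes([0x80 | n]) + length.to_bytes(n, "big")


def encode_angle(degrees: float, half_range: float) -> bytes:
    """Map +/-half_range degrees onto a big-endian signed 32-bit integer."""
    if abs(degrees) > half_range:
        raise ValueError("angle out of range")
    return struct.pack(">i", round(degrees / half_range * INT32_MAX))


def override_frame_center(items: Dict[int, bytes],
                          lat_deg: float, lon_deg: float) -> Dict[int, bytes]:
    """Return a copy of the items with corrected frame-center coordinates."""
    corrected = dict(items)  # keeps the original item order
    corrected[TAG_FRAME_CENTER_LAT] = encode_angle(lat_deg, 90.0)
    corrected[TAG_FRAME_CENTER_LON] = encode_angle(lon_deg, 180.0)
    return corrected


def encode_local_set_payload(items: Dict[int, bytes]) -> bytes:
    """Serialize items as tag, BER length, value (payload only)."""
    out = bytearray()
    for tag, value in items.items():
        if not 0 < tag < 128:
            raise ValueError("sketch handles single-byte tags only")
        out += bytes([tag]) + encode_ber_length(len(value)) + value
    return bytes(out)
```

In this sketch the corrected coordinates would come from the georegistration step, and all other items in the set pass through unchanged, so downstream tools see the same stream with only the position fields replaced.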

From Manual Control to Autonomous, Coordinated Targeting Workflows

Together, autonomous drone tracking and high-accuracy georegistration are reshaping how operators plan and execute missions. With onboard AI maintaining target lock and Raptor-enhanced workflows preserving coordinate fidelity, human operators can step back from manual joystick control and focus on higher-level decisions. Drones can autonomously follow vehicles, people, or locations, while the GXP ecosystem fuses corrected metadata with other sensor inputs for multi-domain operations. This automation reduces cognitive load, shortens the time from detection to action, and keeps operational tempo high even when communications links are constrained or intermittent. As more unmanned platforms adopt integrated video engines, edge AI processors, and resilient targeting pipelines, the role of the operator shifts from direct control to supervision and mission management, setting the stage for more coordinated swarms, persistent surveillance networks, and faster, more precise responses in dynamic scenarios.