From Passive Cursor to Active AI Mouse Pointer

For more than half a century, the mouse pointer has done one thing: show where you are on the screen. Google DeepMind now wants it to do something radically different. Instead of merely tracking position, its experimental AI mouse pointer is designed to understand what you are pointing at and what you want to do next. Powered by Gemini, Google’s flagship AI model, the pointer becomes a gateway to assistance that appears exactly where your work is happening. Rather than opening a separate chatbot, copying content, and rewriting prompts, you simply hover and speak. The approach turns a static icon into a context-sensitive collaborator and signals a wave of interface innovation that embeds AI into familiar interactions.

AI That Comes to Your Work, Not the Other Way Around

DeepMind’s design goal is to remove the friction of traditional AI productivity tools. Today, using AI often means stopping your task, opening a separate window, and laboriously explaining what is on your screen. The AI mouse pointer reverses that flow. Hover over a data table and ask for a pie chart, select a recipe and say “double these ingredients,” or point at a dense PDF and request a summary ready to paste into an email. Because Gemini can see and interpret the visual and semantic context around the cursor, you no longer need to describe it in detail. AI becomes ambient: always there, following your pointer, ready for quick, natural commands that keep you inside your current app and in the mental flow of your work.

Four Interaction Principles: Shorthand, Context, and Flow

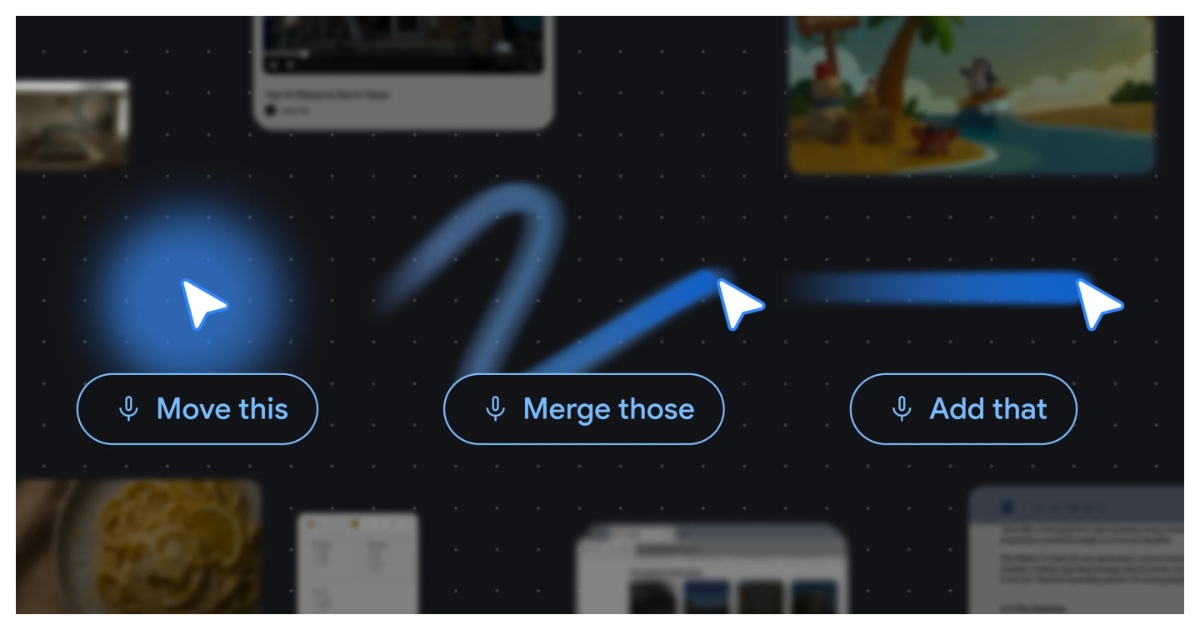

Under the surface, DeepMind’s concept rests on four interaction principles that rethink how people talk to computers. “Maintain the flow” keeps assistance inside the app you are already using, minimizing context switching. “Show and tell” means the system sees what you point at, so you do not have to write long prompts. “Embrace natural shorthand” reflects how people actually collaborate: they gesture and say things like “fix this” or “move that,” combining cursor position with brief speech. Finally, “turn pixels into entities” upgrades screen content from inert visuals into actionable objects, like recognizing a date, place, or handwritten note and turning it into something you can edit, schedule, or search. Together, these ideas recast the cursor as a conversational, multimodal bridge between human intent and machine capability.
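To make “embrace natural shorthand” and “turn pixels into entities” more concrete, here is a minimal Python sketch of how a short spoken command might be bound to whatever sits under the cursor. It is purely illustrative and not DeepMind’s implementation: the names `ScreenEntity`, `PointerRequest`, `classify`, `entity_at`, and `build_request` are hypothetical, and the regex-based date check is a stand-in assumption for whatever recognition Gemini actually performs.

```python
import re
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Hypothetical types for illustration only; not part of any real API.
@dataclass(frozen=True)
class ScreenEntity:
    kind: str                           # e.g. "date" or "text"
    text: str                           # content recognized on screen
    bounds: Tuple[int, int, int, int]   # x, y, width, height in pixels

@dataclass(frozen=True)
class PointerRequest:
    utterance: str                      # the short spoken command
    target: Optional[ScreenEntity]      # what "this" or "that" refers to

# Assumption: a simple ISO-date regex stands in for real entity recognition.
DATE_PATTERN = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")

def classify(text: str, bounds: Tuple[int, int, int, int]) -> ScreenEntity:
    """Turn a region of on-screen text into a typed, actionable entity."""
    kind = "date" if DATE_PATTERN.search(text) else "text"
    return ScreenEntity(kind, text, bounds)

def entity_at(entities: List[ScreenEntity], x: int, y: int) -> Optional[ScreenEntity]:
    """Return the first entity whose bounding box contains the cursor."""
    for entity in entities:
        ex, ey, width, height = entity.bounds
        if ex <= x < ex + width and ey <= y < ey + height:
            return entity
    return None

def build_request(utterance: str, entities: List[ScreenEntity],
                  x: int, y: int) -> PointerRequest:
    """Pair a short command with the entity under the cursor."""
    return PointerRequest(utterance, entity_at(entities, x, y))

if __name__ == "__main__":
    screen = [
        classify("Meet on 2025-03-14", (0, 0, 100, 20)),
        classify("Buy milk", (0, 30, 100, 20)),
    ]
    # Cursor at (10, 5) sits over the first region, so "this" is the date.
    print(build_request("schedule this", screen, 10, 5))
```

In this sketch the cursor position supplies the referent for “this,” so the spoken command can stay short; a real system would presumably pass richer visual context to the model rather than a single bounding-box lookup.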

Accessibility, Productivity, and the Future of Interface Design

If it works at scale, an AI-enhanced pointer could have significant implications for accessibility and productivity. For users who struggle with complex menus, dense documents, or fine motor control, being able to simply point and speak could lower barriers and streamline workflows. Everyday tasks such as summarizing pages, transforming data, or extracting action items from images could shrink to a couple of gestures. This fits a broader push inside Google DeepMind to make Gemini an ever-present universal assistant across Search, Workspace, YouTube, and now the operating system layer. The mouse pointer project hints at a future where core interfaces that seemed frozen for decades are reimagined with AI at their center. Instead of learning new tools, users may see their most familiar controls quietly become smarter, more responsive partners in getting work done.