A Real-Time Voice API Built for Live Systems

OpenAI has launched three new real-time voice models through its GPT-Realtime API: GPT-Realtime-2, GPT-Realtime-Translate and GPT-Realtime-Whisper. Rather than treating voice as a thin layer on top of text chat, the company is positioning these models as infrastructure for live assistants, call flows and always-on voice agents. They are designed to keep speaking while they reason, translate or transcribe, and to handle interruptions without losing the thread of a conversation. The launch signals that voice is becoming an operational layer for apps and workflows rather than a novelty feature. Developers can assign each voice workload to the model built for it, then combine voice reasoning, live translation and streaming transcription into a single product or customer journey.

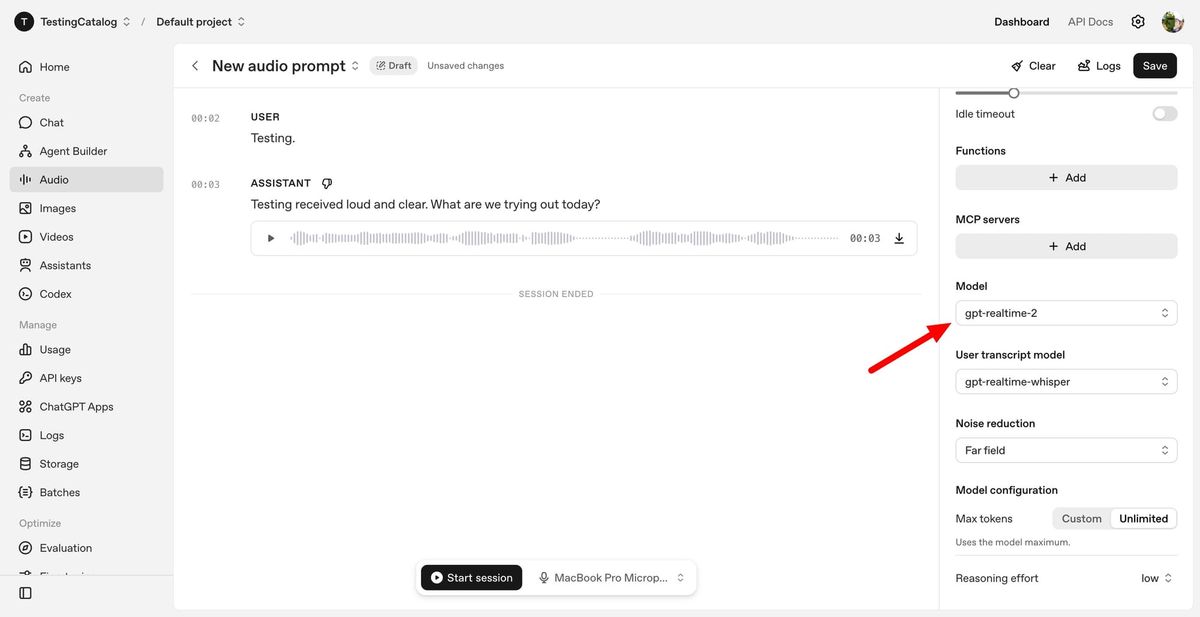

GPT-Realtime-2: Voice Reasoning With GPT-5-Class Intelligence

GPT-Realtime-2 is the flagship reasoning model in the new stack, bringing what OpenAI describes as GPT-5-class reasoning to spoken conversations. It is built to manage complex, non-linear dialogue: users can interrupt themselves, change topics or correct the system mid-task, while the agent continues to respond coherently. The model supports parallel tool calls, allowing it to execute multiple actions at once, such as fetching data while summarizing earlier context. It also introduces short spoken preambles like “let me check that,” improved recovery when tools fail, and a larger 128K context window, up from 32K in the previous generation. Developers can tune reasoning effort from minimal to extra high, trading latency for depth when needed. Together, these features push voice AI from simple call-and-response toward agents that can genuinely think while they talk.
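The combination of a spoken preamble, parallel tool calls and tool-failure recovery can be pictured with a minimal asyncio sketch. It does not use OpenAI's SDK or show the real API's tool-call events: `speak`, `fetch_order_status` and `fetch_shipping_eta` are hypothetical stand-ins. The pattern is what matters: say a short preamble, run independent tools concurrently, and turn a failed tool into a spoken fallback instead of ending the turn with an error.

```python
import asyncio


async def speak(text: str, transcript: list[str]) -> None:
    """Hypothetical stand-in for streaming synthesized audio to the caller."""
    transcript.append(text)


async def fetch_order_status(order_id: str) -> str:
    # Hypothetical tool: pretend to query an order system.
    await asyncio.sleep(0.01)
    return f"order {order_id} is packed"


async def fetch_shipping_eta(order_id: str) -> str:
    # Hypothetical tool that always fails, to exercise the recovery path.
    await asyncio.sleep(0.01)
    raise TimeoutError("carrier API timed out")


async def handle_turn(order_id: str) -> list[str]:
    transcript: list[str] = []
    # Short spoken preamble so the caller is not left in silence.
    await speak("Let me check that.", transcript)

    # Run independent tool calls concurrently. return_exceptions=True hands
    # back a failed tool's exception as a result instead of raising it, so
    # the successful results are still available.
    results = await asyncio.gather(
        fetch_order_status(order_id),
        fetch_shipping_eta(order_id),
        return_exceptions=True,
    )

    for result in results:
        if isinstance(result, BaseException):
            # Recover gracefully: tell the caller and keep the turn going.
            await speak("I couldn't reach one of our systems, so I'll skip that part for now.", transcript)
        else:
            await speak(f"Good news: {result}.", transcript)
    return transcript


if __name__ == "__main__":
    for line in asyncio.run(handle_turn("A123")):
        print(line)
```

Running the tools concurrently means the turn waits roughly as long as the slowest tool rather than the sum of all of them, which matters when a caller is listening in real time.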

GPT-Realtime-Translate: Live Translation AI for Multilingual Voice Products

GPT-Realtime-Translate focuses on live multilingual communication, turning the real-time voice API into a foundation for cross-language products. It accepts speech input in more than 70 languages and generates spoken output in 13 languages, enabling instant translation during conversations. The model is optimized to stay in sync with human speakers, even as they shift topics, introduce domain-specific jargon or speak with strong regional accents and pronunciations. This makes it suitable for customer support, cross-border sales calls, live events, education platforms and media localization workflows. Because translation is handled by a dedicated model instead of being bolted onto a general assistant, developers can tune latency, quality and cost for translation-specific workloads. In practice, GPT-Realtime-Translate helps teams build experiences where language barriers disappear inside a single, continuous voice interaction.
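A cross-border call can be pictured as a relay in which each participant's speech is translated into the other participant's language. The sketch below is hypothetical throughout: `translate` is a placeholder for a GPT-Realtime-Translate stream (it only tags the text), and the language codes are illustrative. It also checks the listener's language against a configurable set of supported output languages, because the model speaks fewer languages (13) than it understands (70+); the actual list is not given here and would have to come from OpenAI's documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Participant:
    name: str
    language: str  # illustrative language code, e.g. "es" or "en"


def translate(text: str, source: str, target: str) -> str:
    """Placeholder for a live translation stream; it only tags the text."""
    if source == target:
        return text
    return f"[{source}->{target}] {text}"


def relay(speaker: Participant, listener: Participant, utterance: str,
          supported_outputs: set[str]) -> str:
    # Spoken output covers fewer languages than input, so confirm the
    # listener's language can actually be spoken before routing.
    if listener.language not in supported_outputs:
        raise ValueError(f"no spoken output available for {listener.language!r}")
    return translate(utterance, speaker.language, listener.language)


if __name__ == "__main__":
    agent = Participant("agent", "en")
    customer = Participant("customer", "es")
    outputs = {"en", "es"}  # assumption: fill in from the official language list
    print(relay(customer, agent, "¿Dónde está mi pedido?", outputs))
    print(relay(agent, customer, "It ships tomorrow.", outputs))
```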

GPT-Realtime-Whisper: Streaming Transcription for Low-Latency Workflows

GPT-Realtime-Whisper fills the transcription lane in OpenAI's voice stack. It offers streaming speech-to-text, transcribing audio as people speak rather than after the fact. This low-latency design is aimed at products that need text output in near real time, such as live call analytics, agent-assist dashboards, meeting notes or compliance monitoring tools. Because transcription is separate from reasoning, developers do not need to run the heaviest reasoning model on every second of audio. Instead, they can use GPT-Realtime-Whisper to capture what was said, then invoke GPT-Realtime-2 selectively where deeper analysis, decision-making or tool use is required. This split, together with GPT-Realtime-2's larger context window, reduces the orchestration work teams previously handled with custom state management and session resets, and keeps conversational context available across longer, more intricate voice-driven workflows.
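The transcribe-first, escalate-selectively pattern can be sketched as a small router. Everything here is an assumption for illustration: transcript segments arrive as plain strings (standing in for GPT-Realtime-Whisper's streaming output), `needs_reasoning` is a hypothetical keyword heuristic, and `fake_reasoner` stands in for a GPT-Realtime-2 request. Every segment is logged, but only flagged segments reach the reasoning step, along with the transcript so far as context.

```python
from typing import Callable, Iterable

# Hypothetical trigger phrases; a production system might use a classifier instead.
ESCALATION_TRIGGERS = ("cancel", "refund", "speak to a manager")


def needs_reasoning(segment: str) -> bool:
    """Cheap heuristic deciding whether a segment deserves the heavier model."""
    lowered = segment.lower()
    return any(trigger in lowered for trigger in ESCALATION_TRIGGERS)


def route_segments(
    segments: Iterable[str],
    escalate: Callable[[str, list[str]], str],
) -> tuple[list[str], list[str]]:
    """Log every transcript segment; escalate only the flagged ones.

    Returns (full_log, escalation_results).
    """
    log: list[str] = []
    escalations: list[str] = []
    for segment in segments:
        log.append(segment)  # kept for analytics or compliance either way
        if needs_reasoning(segment):
            # Pass a copy of the transcript so far as context for reasoning.
            escalations.append(escalate(segment, list(log)))
    return log, escalations


def fake_reasoner(segment: str, context: list[str]) -> str:
    # Hypothetical stand-in for a GPT-Realtime-2 request.
    return f"analyzed {segment!r} with {len(context)} segments of context"


if __name__ == "__main__":
    stream = ["Hi, I'm calling about my bill.",
              "It looks higher than usual.",
              "I'd like a refund for the extra charge."]
    log, results = route_segments(stream, fake_reasoner)
    print(len(log), "segments logged")
    for r in results:
        print(r)
```

A keyword list is deliberately crude; the design point is that the cheap transcription path runs continuously while the expensive reasoning path runs only on demand.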

From Chatbots to Voice-First Workflows

With these three OpenAI voice models, developers can build voice applications that move beyond simple chat into end-to-end workflows. GPT-Realtime-2 can turn spoken requests into actions by calling tools and systems in the background, supporting voice-to-action use cases like conversational search or booking flows. In systems-to-voice scenarios, apps can proactively speak to users, explaining status changes or suggesting next steps in natural language. Voice-to-voice interactions combine transcription, reasoning and translation so that both parties stay in a real-time, spoken dialogue regardless of language. Crucially, the GPT-Realtime API lets teams mix and match reasoning, live translation and streaming transcription as separate layers, tuning each for latency and depth. This modular approach makes it easier to integrate voice into existing products while also enabling entirely new voice-first experiences.
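The mix-and-match idea can be expressed as one configuration object per layer. This is a hypothetical sketch, not the GPT-Realtime API's schema: the `Layer` fields, the `pick_effort` thresholds and the use of the article's product names as `model` values are all assumptions, and real API model identifiers may differ. The effort labels follow the minimal-to-extra-high range described for GPT-Realtime-2; the intermediate labels are assumed.

```python
from dataclasses import dataclass
from typing import Optional

# Ordered from fastest to deepest. "minimal" and "extra high" come from the
# article; the intermediate labels are assumptions.
EFFORT_LEVELS = ("minimal", "low", "medium", "high", "extra high")


@dataclass(frozen=True)
class Layer:
    role: str                     # what this layer does in the product
    model: str                    # article's product name; real API IDs may differ
    effort: Optional[str] = None  # only meaningful for the reasoning layer


def pick_effort(latency_budget_ms: int) -> str:
    """Hypothetical policy: spend more reasoning effort when latency allows.

    Thresholds are illustrative, not measured values.
    """
    thresholds = (300, 600, 1200, 2500)  # ms upper bounds for the first four levels
    for level, limit in zip(EFFORT_LEVELS, thresholds):
        if latency_budget_ms <= limit:
            return level
    return EFFORT_LEVELS[-1]


def build_stack(latency_budget_ms: int, needs_translation: bool) -> list[Layer]:
    """Assemble only the layers a given product flow needs."""
    stack = [
        Layer("transcription", "GPT-Realtime-Whisper"),
        Layer("reasoning", "GPT-Realtime-2", pick_effort(latency_budget_ms)),
    ]
    if needs_translation:
        stack.append(Layer("translation", "GPT-Realtime-Translate"))
    return stack


if __name__ == "__main__":
    for layer in build_stack(latency_budget_ms=500, needs_translation=True):
        print(layer)
```

Keeping each layer's settings in its own object means a latency-sensitive booking flow and a slower, analysis-heavy support flow can share the same code while choosing different layers and effort levels.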