Fast Code, Shallow Understanding

AI coding tools have transformed how quickly developers can ship features. Surveys of engineering organizations report junior developers completing tasks up to 55% faster with AI assistance, and tools like Claude Code are spreading rapidly across teams. The catch is that code generation has been decoupled from genuine understanding. A junior can now submit a complex, well-structured pull request that passes tests and looks tidy in review, yet be unable to explain why a subtle timing bug appears in production. The AI wrote most of the logic; the human merely orchestrated prompts. This asymmetry between rapid production and slow or nonexistent comprehension creates hidden risks. The software may work today, under test conditions, but when something fails in a live system, the person nominally responsible lacks the debugging skills and mental models needed to reason about what went wrong or how to fix it safely.

Code Review Problems and the New "Expert Beginner"

Engineering leaders are increasingly encountering a new pattern during code review: AI-assisted developers who can’t articulate how their own solutions behave. The code looks careful and well tested, yet when reviewers probe edge cases, the author stalls because an AI coding tool synthesized the logic. This has given rise to a fresh twist on the “expert beginner” archetype. These developers are not arrogant or sloppy; they are fast, conscientious, and produce code that passes review. They simply cannot tell you why it works, or why it fails in rare conditions. That makes code review less of a collaborative design session and more of a forensic investigation. Senior engineers must reverse-engineer AI-generated patches, turning review into a debugging exercise. When that effort falls short, teams no longer catch issues early and instead discover bugs only after deployment, when they are costlier to track down and resolve.

Developer Comprehension and the Debugging Skills Gap

The growing reliance on AI coding tools is widening the gap between writing code and understanding it. Senior engineers have years of architectural context that help them validate or reject AI suggestions. They can triangulate between the generated code, existing systems, and their own mental model. For juniors, that context is precisely what’s missing. They accept plausible-looking output they cannot fully reason about, and their debugging skills stagnate. When bugs emerge, especially complex, multistep ones, these developers lack foundational problem-solving habits: tracing execution paths, isolating variables, and forming hypotheses. Research into large language models shows that even advanced systems struggle with long-running, multistep workflows, often corrupting documents or introducing errors over time. If humans merely supervise these agents without engaging deeply, they inherit the same fragility. The result is a class of developers who can assemble sophisticated solutions quickly but struggle painfully when those systems misbehave.

Stalled Talent Pipelines and Long-Term Risk

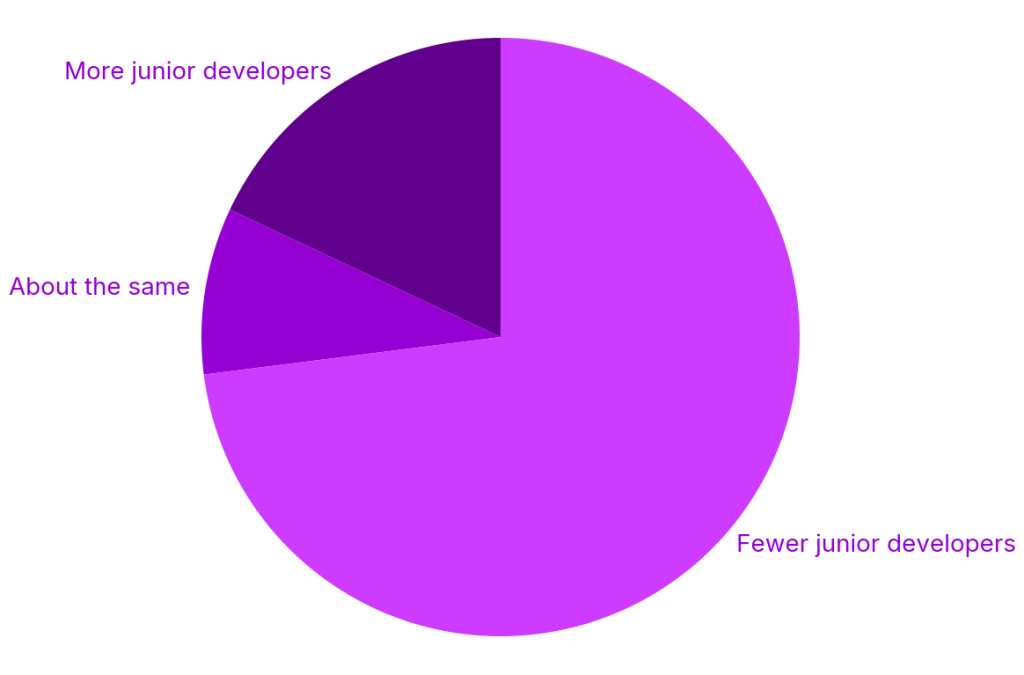

Even as AI accelerates individual productivity, the industry’s traditional training ground is shrinking. Entry-level tech postings have dropped sharply, internships are declining, and research shows 73% of organizations have reduced junior hiring over the past two years. Many companies are betting on a “seniors with AI” model, where experienced engineers augmented by AI replace entire cohorts of beginners. In the short term, this looks efficient: fewer hires, more output. In the long term, it erodes the pipeline of developers who learn debugging, design trade-offs, and systems thinking the hard way. Over half of so-called entry roles already require several years of experience, leaving fewer on-ramps for new talent. Without a generation that has wrestled with messy bugs and lived through failures, organizations risk accumulating teams that can manage tools but cannot deeply understand, maintain, or evolve the complex software they produce.

Keeping AI on a Short Leash

Research into delegated AI workflows suggests that even frontier models are not ready to be fully trusted with complex, long-running tasks. Benchmarks simulating multistep professional work show that models often introduce substantial errors over repeated interactions, degrading or corrupting documents in a large share of scenarios. Although programming tasks fare better than natural language work, the pattern is clear: the more you delegate, the greater the risk of silent failure. For engineering teams, this means treating AI coding tools as powerful assistants, not autonomous agents. Humans must retain ownership of reasoning, verification, and debugging. Organizations that embrace AI without investing in developer comprehension, robust code review practices, and deliberate skill development may gain short-term speed only to pay later with catastrophic bugs, brittle systems, and a workforce that can no longer confidently explain the software running their businesses.