A New Stack for Real-Time Voice Intelligence

OpenAI has expanded its voice API with three real-time voice models tailored to different audio workloads: GPT-Realtime-2, GPT-Realtime-Translate and GPT-Realtime-Whisper. Rather than treating voice as a single monolithic feature, OpenAI is splitting reasoning, translation and transcription into distinct models that can be mixed and matched in production. All three are exposed through the Realtime API. They target live assistants and call flows that must keep talking while handling interruptions and tool calls, as well as streaming transcription products. The launch signals a shift in voice app development: voice is increasingly an operational layer for applications, workflows and customer interactions, not just a friendly chat interface. By giving developers fine-grained control over latency, context and reasoning depth, OpenAI is positioning these models as infrastructure for voice agents that can act, not just talk.

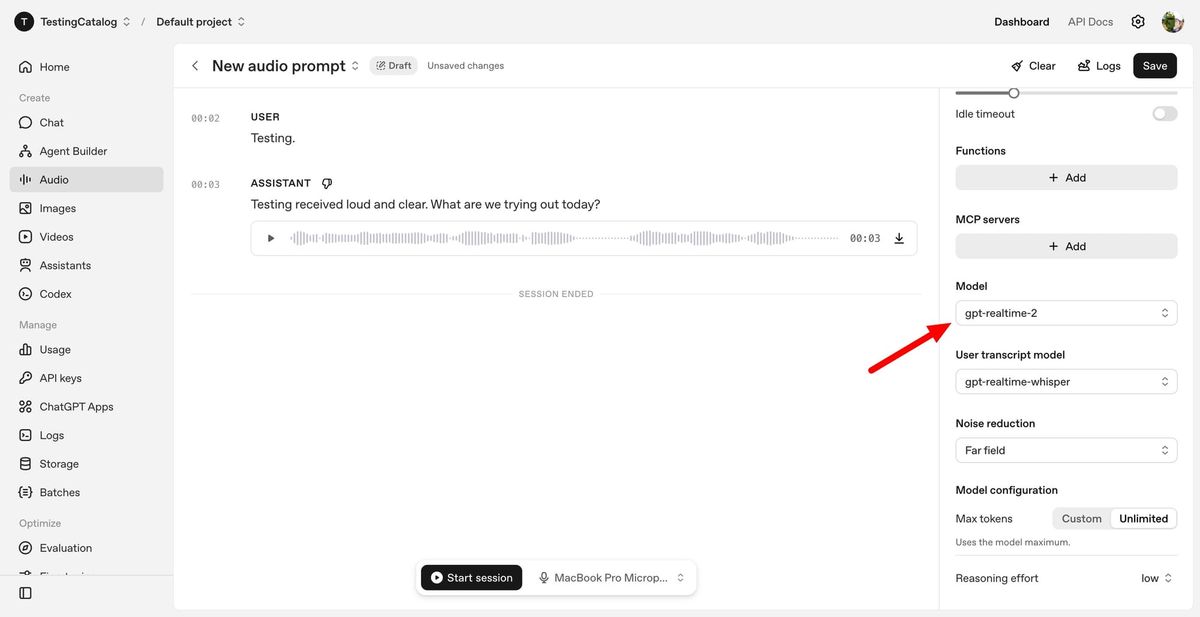

GPT-Realtime-2: GPT-5-Class Reasoning for Live Conversations

GPT-Realtime-2 is the reasoning engine of the new lineup, bringing what OpenAI describes as GPT-5-class reasoning directly into live spoken interactions. The model is designed to handle real-world conversational chaos: users interrupt themselves, change their minds or switch topics mid-sentence. To cope, GPT-Realtime-2 supports parallel tool calls, short spoken preambles such as “let me check that,” and clearer verbal cues about what it is doing while work happens in the background. Its context window has been expanded from 32K to 128K tokens, enabling longer, more coherent dialogues without constant state resets. Developers can tune reasoning effort from minimal to xhigh, choosing between lower latency and deeper reasoning depending on how complex the workflow is. OpenAI reports gains over GPT-Realtime-1.5 in audio intelligence, instruction following and conversation control, including better recovery when tasks fail. For voice app development, this means voice agents can move beyond brittle call-and-response behavior into sustained, task-oriented conversations.
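When the model issues several tool calls at once, the application still has to execute them. The sketch below shows one way to do that on the client side, assuming a hypothetical `ToolCall` record and two made-up tools (`search_listings`, `check_calendar`); none of these names come from the Realtime API, whose actual event schema may differ. The sketch speaks a short preamble, runs the calls concurrently with `asyncio.gather`, and keeps failures as values so the agent can explain them instead of stalling.

```python
import asyncio
from dataclasses import dataclass
from typing import Any, Awaitable, Callable, Dict, List


@dataclass
class ToolCall:
    """Hypothetical tool-call record; the real Realtime API payload may differ."""
    call_id: str
    name: str
    arguments: Dict[str, Any]


async def search_listings(city: str, max_price: int) -> List[str]:
    """Hypothetical tool standing in for a real listings search backend."""
    await asyncio.sleep(0.05)  # simulate network latency
    return [f"{city} listing under {max_price}"]


async def check_calendar(day: str) -> List[str]:
    """Hypothetical tool standing in for a real scheduling backend."""
    await asyncio.sleep(0.05)  # simulate network latency
    return [f"{day} 10:00", f"{day} 14:00"]


TOOLS: Dict[str, Callable[..., Awaitable[Any]]] = {
    "search_listings": search_listings,
    "check_calendar": check_calendar,
}


async def run_tool(call: ToolCall) -> Any:
    """Look up and await one tool; an unknown tool name raises KeyError here."""
    return await TOOLS[call.name](**call.arguments)


async def handle_parallel_calls(
    calls: List[ToolCall], speak: Callable[[str], None]
) -> Dict[str, Any]:
    # Give the caller an audible cue before any slow work starts.
    speak("Let me check that.")
    # Run all requested tools concurrently instead of one after another.
    # return_exceptions=True turns a failing tool into a value, not a crash.
    results = await asyncio.gather(
        *(run_tool(c) for c in calls), return_exceptions=True
    )
    # gather preserves input order, so results line up with their call IDs.
    return {c.call_id: r for c, r in zip(calls, results)}


if __name__ == "__main__":
    spoken: List[str] = []
    demo_calls = [
        ToolCall("c1", "search_listings", {"city": "Austin", "max_price": 500_000}),
        ToolCall("c2", "check_calendar", {"day": "Friday"}),
        ToolCall("c3", "book_viewing", {}),  # not registered, so it returns a KeyError
    ]
    print(asyncio.run(handle_parallel_calls(demo_calls, spoken.append)))
    print(spoken)
```

Because `gather` waits for every call, the slowest tool sets the response time; an agent that wants to speak partial results sooner could process calls with `asyncio.as_completed` instead.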

GPT-Realtime-Translate: Live Voice Translation at Conversational Speed

GPT-Realtime-Translate targets live translation scenarios that demand both speed and nuance. The model accepts speech in more than 70 input languages and produces translated speech in 13 output languages, keeping pace with speakers during fast-moving exchanges. Target use cases include customer support hotlines, cross-border sales calls, education platforms, events, creator tools and media localization. Beyond raw language conversion, GPT-Realtime-Translate focuses on maintaining context, handling regional accents and domain-specific terminology, and following mid-conversation topic shifts without losing meaning. It can also produce real-time transcriptions alongside spoken translations, helping developers build hybrid experiences such as bilingual captions or compliance logs. As organizations adopt more global voice interfaces, this dedicated translation tier lets teams offload multilingual complexity while using other models, such as GPT-Realtime-2, for higher-level reasoning and workflow orchestration.
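Building bilingual captions means lining up the original-language transcript with the translated output. Here is a minimal sketch, assuming the application has already collected both streams as timestamped segments. The `Segment` type and its fields are hypothetical, not the API's output format. Each translated segment is attached to the source segment it overlaps most in time, because translated speech typically trails the original slightly.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Segment:
    """Hypothetical timed text segment collected by the application."""
    start_ms: int
    end_ms: int
    text: str
    lang: str


def overlap_ms(a: Segment, b: Segment) -> int:
    """Length of the time interval shared by two segments (0 if disjoint)."""
    return max(0, min(a.end_ms, b.end_ms) - max(a.start_ms, b.start_ms))


def fmt_ts(ms: int) -> str:
    """Format milliseconds as MM:SS.mmm."""
    minutes, rem = divmod(ms, 60_000)
    return f"{minutes:02d}:{rem / 1000:06.3f}"


def bilingual_captions(source: List[Segment], translated: List[Segment]) -> List[str]:
    # One bucket of translated segments per source segment.
    buckets: List[List[Segment]] = [[] for _ in source]
    for t in translated:
        # Attach each translated segment to the source segment it overlaps most.
        best = max(range(len(source)), key=lambda i: overlap_ms(source[i], t), default=None)
        if best is not None and overlap_ms(source[best], t) > 0:
            buckets[best].append(t)

    lines: List[str] = []
    for src, matches in zip(source, buckets):
        # Show a placeholder while the translation has not arrived yet.
        target = " ".join(m.text for m in matches) or "[pending]"
        target_lang = matches[0].lang if matches else "?"
        lines.append(f"[{fmt_ts(src.start_ms)}] {src.lang}: {src.text} | {target_lang}: {target}")
    return lines
```

The same paired records could be written to a compliance log instead of rendered as caption lines; only the final formatting step would change.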

GPT-Realtime-Whisper: Low-Latency Streaming Transcription

GPT-Realtime-Whisper completes the stack with streaming speech-to-text designed for continuous, low-latency transcription. Instead of waiting for a speaker to finish, the model transcribes audio as people talk, enabling live captions, meeting notes and voice-driven workflows that stay in sync with the conversation. This makes it suitable for voice platforms that need persistent awareness of what is being said, whether to power downstream analytics, automate documentation or trigger tools and actions in real time. By separating transcription from reasoning and translation, OpenAI lets developers choose where they need heavier models and where lightweight, responsive text output is sufficient. Combined with GPT-Realtime-2 and GPT-Realtime-Translate, GPT-Realtime-Whisper helps developers build voice agents that can listen, understand, act and respond at the same time, with each capability optimized for latency, cost and reliability in live production environments.
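On the client side, streaming transcription usually means folding many small text fragments into a caption that updates as the speaker talks, then locking each utterance in once it is final. Below is a minimal sketch, assuming a simplified, hypothetical event shape with `delta` and `final` types keyed by an `item_id`. The actual Realtime API event names and payloads should be taken from OpenAI's documentation. The optional `on_final` callback is where downstream analytics, note-taking or tool triggers would hook in.

```python
from typing import Callable, Dict, List, Optional


class LiveTranscript:
    """Folds streaming transcription events into a live caption string.

    Hypothetical event shape: {"type": "delta" | "final", "item_id": str, "text": str}.
    """

    def __init__(self, on_final: Optional[Callable[[str], None]] = None) -> None:
        self.final_lines: List[str] = []
        # item_id -> text received so far; dicts preserve insertion order.
        self._partials: Dict[str, str] = {}
        self._on_final = on_final

    def apply(self, event: dict) -> None:
        item_id = event["item_id"]
        if event["type"] == "delta":
            # Append the new fragment to whatever has arrived for this utterance.
            self._partials[item_id] = self._partials.get(item_id, "") + event["text"]
        elif event["type"] == "final":
            # The final text replaces the accumulated deltas, which may differ slightly.
            self._partials.pop(item_id, None)
            self.final_lines.append(event["text"])
            if self._on_final is not None:
                self._on_final(event["text"])  # e.g. analytics, notes or tool triggers

    def caption(self) -> str:
        # Finalized text first, then any utterances still in progress.
        return " ".join(self.final_lines + list(self._partials.values()))
```

Replacing the partial text with the final text, rather than trusting the concatenated deltas, keeps the stored transcript clean even if the streaming fragments were provisional.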

From Simple Chatbots to Operational Voice Agents

OpenAI’s new real-time voice models push voice AI from casual conversation into serious business workflows. GPT-Realtime-2 enables voice-to-action scenarios in which users speak naturally while tools run in the background, such as searching for homes, applying filters or scheduling appointments through dialogue alone. Systems-to-voice patterns arise when software surfaces updates, alerts or status changes through a spoken interface. Meanwhile, GPT-Realtime-Translate supports voice-to-voice interactions such as multilingual conversations, and GPT-Realtime-Whisper adds real-time transcription within the same application. Developers can orchestrate all three models through the Realtime API to build voice agents that reason over long contexts, translate on the fly and produce low-latency transcripts. This modular design aims to reduce orchestration overhead and make it easier to deploy enterprise-ready voice agents that maintain context across interruptions, tool hops and long calls, ultimately turning voice into a first-class interface for complex digital workflows.
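The modular split can be made concrete with a small routing policy that decides which models a given session needs. This is a hypothetical sketch. The model ID strings are placeholders derived from the product names, not confirmed API identifiers. The rules are one possible policy, not OpenAI guidance: translate when caller and agent languages differ, use the reasoning model when tools must run, and add a transcription model only when no translation stage already produces a transcript.

```python
from dataclasses import dataclass
from typing import List

# Placeholder identifiers based on the product names; confirm exact model IDs in the docs.
REASONING = "gpt-realtime-2"
TRANSLATE = "gpt-realtime-translate"
TRANSCRIBE = "gpt-realtime-whisper"


@dataclass(frozen=True)
class CallRequirements:
    caller_lang: str        # language the caller speaks
    agent_lang: str         # language the agent or staff work in
    needs_actions: bool     # tools must run: search, filters, scheduling, ...
    needs_transcript: bool  # captions, notes or compliance logs


def plan_pipeline(req: CallRequirements) -> List[str]:
    """Pick which models a session needs (hypothetical routing policy)."""
    stages: List[str] = []
    if req.caller_lang != req.agent_lang:
        stages.append(TRANSLATE)
    if req.needs_actions:
        stages.append(REASONING)
    # The translation model can emit transcripts alongside its speech output,
    # so a separate transcription model is added only when no translation stage exists.
    if req.needs_transcript and TRANSLATE not in stages:
        stages.append(TRANSCRIBE)
    return stages
```

Keeping the policy as a pure function over a small requirements record makes it easy to test and to adjust as latency or cost targets change.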