From Autocomplete to Orchestrated AI Coding Agents

A new wave of AI coding tools is shifting focus from generating snippets quickly to orchestrating entire development workflows. GitHub’s Spec-Kit, OpenAI’s Symphony, and Anthropic’s Petri 3.0 all treat AI coding agents as components inside structured systems rather than as clever autocompletes. Instead of a single prompt and a burst of code, they emphasize spec-driven development, governance, and integration with existing tools such as ticketing systems and test harnesses. Each project is open source, but none is framed as a plug‑and‑play productivity gadget. They are reference architectures and toolkits that encode process: how work is specified, dispatched, implemented, and audited. The common thread is that, as AI coding agents become more capable, the bottleneck is no longer raw model speed but how safely and predictably those agents fit inside a human‑supervised code generation workflow.

GitHub Spec-Kit: Spec-Driven Development Before Any Code Is Written

GitHub’s Spec-Kit pushes teams to formalize intent before asking an AI to write a single line of code. The toolkit turns feature ideas into specs, plans, tasks, and implementation steps, enforcing a staged flow of “Specify → Plan → Tasks → Implement.” Its Specify CLI and template library guide users through detailed specification writing and planning, while slash commands support constitution drafting, task breakdown, issue conversion, and implementation handoff. Optional commands for clarification, analysis, and checklists encourage teams to surface missing information and testing needs early, so that AI coding agents operate inside an explicit design rather than improvising from a vague prompt. Spec-Kit, now at v0.8.7, has already attracted more than 90,000 GitHub stars and over 8,000 forks, which suggests a growing appetite for slower, more deliberate spec-driven development when it yields more reliable, auditable AI-assisted code.
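
To make the staged flow concrete, here is a minimal Python sketch of a stage gate that refuses to run a later stage before the earlier ones have produced an artifact. It is not Spec-Kit's code or API: the `Feature` class, its methods, and the example feature name are hypothetical, and only the four stage names come from the flow described above.

```python
from dataclasses import dataclass, field

# Stage names follow the "Specify → Plan → Tasks → Implement" flow.
STAGES = ("specify", "plan", "tasks", "implement")


@dataclass
class Feature:
    """Hypothetical record of one feature moving through the staged flow."""

    name: str
    artifacts: dict = field(default_factory=dict)  # stage name -> written artifact

    def next_stage(self):
        """Return the first stage without an artifact, or None when all are done."""
        for stage in STAGES:
            if stage not in self.artifacts:
                return stage
        return None

    def complete(self, stage, artifact):
        """Record an artifact, refusing to skip ahead of the current stage."""
        expected = self.next_stage()
        if stage != expected:
            raise ValueError(f"cannot run {stage!r}; next stage is {expected!r}")
        if not artifact.strip():
            raise ValueError(f"stage {stage!r} needs a non-empty artifact")
        self.artifacts[stage] = artifact


feature = Feature("export-to-csv")  # illustrative feature name
feature.complete("specify", "Users can export any report as CSV.")

# Jumping straight to implementation is rejected because no plan exists yet.
try:
    feature.complete("implement", "def export(): ...")
    skipped = True
except ValueError:
    skipped = False
```

The point of the gate is that the implementing agent only ever sees a feature whose spec, plan, and task list already exist, which is the ordering the staged flow is meant to enforce.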

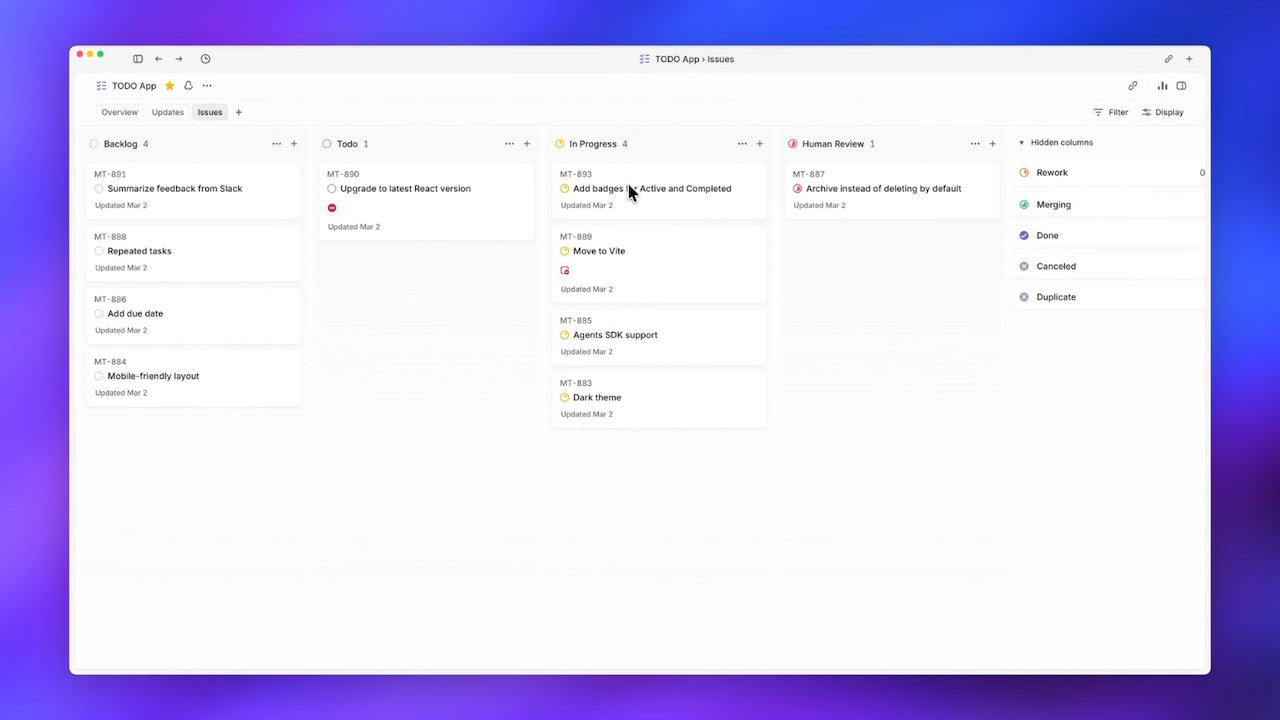

OpenAI Symphony: Autonomous Ticket-Driven Code Generation Workflow

OpenAI’s Symphony tackles a different bottleneck: human supervision limits how many AI coding agents can run in parallel. Symphony is an open-source specification and Elixir reference implementation that lets Codex agents pull tickets directly from Linear, treat the backlog as a state machine, and work autonomously until pull requests are merged. Each ticket gets its own agent, and the system respawns agents that crash mid-task, removing humans from the dispatch loop while keeping them in review roles. OpenAI reported a sixfold increase in merged pull requests for internal Codex teams during Symphony’s first three weeks, indicating that orchestrating agents around the ticketing system can unlock significant throughput. Symphony is explicitly shipped as a reference, not a maintained product, underscoring its primary value as a pattern for integrating AI coding agents into existing code generation workflows rather than a turnkey service.
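
The dispatch pattern described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Symphony's implementation (which is written in Elixir): the state names, the `advance` and `run_ticket` helpers, the retry limit, and the ticket ID are all hypothetical. The sketch shows a backlog ticket moving through an explicit state machine, one agent per ticket, with a crashed agent replaced by a fresh attempt and the ticket handed to human review rather than merged automatically.

```python
from enum import Enum


class State(Enum):
    # Hypothetical ticket states; a real tracker defines its own.
    TODO = "todo"
    IN_PROGRESS = "in_progress"
    IN_REVIEW = "in_review"
    MERGED = "merged"


# Allowed transitions; anything else is rejected.
TRANSITIONS = {
    State.TODO: {State.IN_PROGRESS},
    State.IN_PROGRESS: {State.IN_REVIEW},
    State.IN_REVIEW: {State.IN_PROGRESS, State.MERGED},  # a reviewer may send work back
    State.MERGED: set(),
}


def advance(current, target):
    """Move a ticket to a new state only if the transition is allowed."""
    if target not in TRANSITIONS[current]:
        raise ValueError(f"illegal transition {current.name} -> {target.name}")
    return target


def run_ticket(ticket_id, agent, max_attempts=3):
    """Run one agent per ticket, starting a fresh attempt if the agent crashes."""
    state = advance(State.TODO, State.IN_PROGRESS)
    for attempt in range(1, max_attempts + 1):
        try:
            agent(ticket_id, attempt)
        except Exception:
            continue  # crash: ticket stays IN_PROGRESS and a new attempt starts
        # Work finished: hand off to human review instead of merging directly.
        return advance(state, State.IN_REVIEW), attempt
    return state, max_attempts  # still IN_PROGRESS after exhausting attempts


def flaky_agent(ticket_id, attempt):
    """Stand-in for a coding agent that crashes on its first attempt."""
    if attempt == 1:
        raise RuntimeError("simulated crash")


final_state, attempts = run_ticket("TICKET-1", flaky_agent)
```

In this sketch the only path to `MERGED` runs through `IN_REVIEW`, which mirrors the idea of removing humans from dispatch while keeping them in review.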

Anthropic Petri 3.0: Modular AI Alignment Testing for Production Systems

Anthropic’s Petri 3.0 addresses the other end of the pipeline: verifying that deployed AI systems behave as intended. The update, handed to nonprofit steward Meridian Labs, transforms Petri into a more modular, production-aware AI alignment testing toolbox. A key change is structural: the auditor model is now cleanly separated from the target model, letting teams tune the judge’s behavior without rebuilding tests around each new system under review. Petri 3.0 also adds Dish and Bloom-based behavior checks, expanding how it probes real-world deployments for problematic or off-spec behavior. Within Meridian’s broader open evaluation stack, alongside tools like Inspect and Scout, Petri becomes part of a layered framework for AI alignment testing. Rather than chasing faster code generation, it focuses on governance: how AI behavior is measured, compared, and made easier for external researchers and public-sector teams to trust and run.
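
The value of separating the auditor from the target is easier to see in code. The Python sketch below does not use Petri's API; the `Target` and `Auditor` type aliases, the `run_audit` function, and the toy models are hypothetical stand-ins. It shows only the structural idea: when the audit loop depends on two narrow interfaces, the same auditor and prompts can be reused against a new target without rewriting the tests.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical interfaces: a target maps a prompt to a response, and an
# auditor judges a (prompt, response) pair, returning True when it flags it.
Target = Callable[[str], str]
Auditor = Callable[[str, str], bool]


@dataclass
class Finding:
    prompt: str
    response: str
    flagged: bool


def run_audit(target: Target, auditor: Auditor, prompts: List[str]) -> List[Finding]:
    """Send each prompt to the target and let the auditor judge the response."""
    findings = []
    for prompt in prompts:
        response = target(prompt)
        findings.append(Finding(prompt, response, auditor(prompt, response)))
    return findings


# Two toy targets standing in for different systems under review.
def echo_target(prompt: str) -> str:
    return f"echo: {prompt}"


def shouting_target(prompt: str) -> str:
    return prompt.upper()


# One toy auditor, reused unchanged across both targets.
def flags_uppercase(prompt: str, response: str) -> bool:
    return response.isupper()


prompts = ["hello", "status report"]
echo_flags = [f.flagged for f in run_audit(echo_target, flags_uppercase, prompts)]
shout_flags = [f.flagged for f in run_audit(shouting_target, flags_uppercase, prompts)]
```

Swapping `shouting_target` in for `echo_target` changes the findings but touches neither the auditor nor the prompt list, which is the kind of decoupling the structural change is described as providing.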

A Governance-First Future for AI Coding Workflows

Taken together, Spec-Kit, Symphony, and Petri signal a maturing view of AI coding: capability alone is not enough. GitHub is encoding spec-driven development into the front of the pipeline, forcing structure before code; OpenAI is encoding dispatch and lifecycle management into the middle, letting AI coding agents own tickets while humans review results; Anthropic and Meridian are encoding AI alignment testing and behavioral audits at the back. All three center human oversight, but at clear checkpoints rather than in constant babysitting loops. They integrate with established tools (issue trackers, specs, test frameworks) instead of replacing them. This move toward orchestration, governance layers, and end‑to‑end code generation workflows suggests that the industry’s next gains will come less from bigger models and more from carefully designed systems that keep AI agents accountable, observable, and aligned with team processes.