Why 1‑Bit LLMs Make Local AI Actually Practical

If you want to run a local LLM on a desktop GPU, VRAM is usually the hard limit. Traditional FP16 models eat gigabytes quickly, but 1‑bit LLMs like PrismML’s Bonsai flip that equation with extreme quantization. In Bonsai’s Q1_0_g128 format, each weight is stored as a single bit that encodes its sign, and every group of 128 weights shares one FP16 scale. That works out to 1 + 16/128 = 1.125 bits per weight, yet still preserves enough structure for useful text generation. For Bonsai‑1.7B, the memory footprint drops from 3.44 GB in FP16 to around 0.24 GB with Q1_0_g128, more than fourteen times smaller. Suddenly, running a local LLM on an 8 GB gaming GPU stops being a fantasy and starts looking like just another high‑end workload alongside your games. This 1‑bit LLM guide focuses on using that efficiency to build a fast, private desktop GPU AI setup.
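To make the 1.125‑bit arithmetic concrete, here is a minimal Python sketch of group‑wise 1‑bit quantization. It is not PrismML’s implementation: using the mean absolute weight as each group’s scale, and decoding bit 1 as +scale and bit 0 as -scale, are assumptions for illustration. The 1.72 billion parameter count is simply what 3.44 GB at two bytes per FP16 weight implies.

```python
"""Group-wise 1-bit quantization sketch in the spirit of Q1_0_g128.

Assumptions (not PrismML's code): each group's scale is the mean absolute
weight, bit 1 decodes to +scale, and bit 0 decodes to -scale.
"""

GROUP_SIZE = 128  # weights sharing one scale
SCALE_BITS = 16   # the shared scale is stored as FP16


def bits_per_weight(group_size: int = GROUP_SIZE, scale_bits: int = SCALE_BITS) -> float:
    """One sign bit per weight plus the scale amortized over its group."""
    return 1 + scale_bits / group_size


def footprint_gb(n_params: float, bits: float) -> float:
    """Weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits / 8 / 1e9


def quantize_group(weights: list[float]) -> tuple[float, list[int]]:
    """Return (scale, bits) for one group; bits[i] is 1 when weights[i] >= 0."""
    scale = sum(abs(w) for w in weights) / len(weights)
    bits = [1 if w >= 0 else 0 for w in weights]
    return scale, bits


def dequantize_group(scale: float, bits: list[int]) -> list[float]:
    """Decode each stored bit back to +scale or -scale."""
    return [scale if b else -scale for b in bits]


def quantize(weights: list[float], group_size: int = GROUP_SIZE) -> list[tuple[float, list[int]]]:
    """Split a flat weight list into groups and quantize each one."""
    return [quantize_group(weights[i:i + group_size])
            for i in range(0, len(weights), group_size)]


if __name__ == "__main__":
    n_params = 1.72e9  # implied by 3.44 GB of FP16 weights at 2 bytes each
    print(f"bits/weight: {bits_per_weight():.3f}")                              # 1.125
    print(f"FP16:        {footprint_gb(n_params, 16):.2f} GB")                  # 3.44
    print(f"Q1_0_g128:   {footprint_gb(n_params, bits_per_weight()):.2f} GB")   # 0.24
```

Decoding a group is just a sign choice times one shared scale. A production format would pack the bits eight to a byte rather than keep Python lists, but the storage arithmetic is the same.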

Hardware and CUDA AI Setup on a Gaming PC

To run local LLM workloads comfortably, treat your rig like a compact AI workstation. An 8 GB GPU can hold a small 1‑bit model such as Bonsai‑1.7B entirely in VRAM, while 12–16 GB cards leave more headroom for longer contexts, RAG indexes, and future model experiments. A modern multi‑core CPU helps with token sampling and file I/O, and 16 GB or more of system RAM keeps background tasks from interfering with inference. SSD storage matters because a collection of model files, embedding indexes, and document stores can easily span many gigabytes, even when individual models are aggressively compressed. On the software side, a typical CUDA AI setup follows the pattern of the Bonsai tutorial: install a recent NVIDIA driver, CUDA, and compatible libraries; download or build llama.cpp with CUDA support; then grab a Bonsai GGUF model in Q1_0_g128 format. On Windows or Linux, the process mostly comes down to matching your CUDA version to your driver and binaries so that llama.cpp’s GGUF backend can offload as much work as possible to the GPU.
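Whether a given context length fits on an 8 GB or a 16 GB card comes down to weights plus KV cache plus overhead. The sketch below estimates that budget for an FP16 KV cache. The layer count, KV‑head count, head dimension, and 1 GB overhead allowance are hypothetical placeholders, not Bonsai‑1.7B’s published configuration, so substitute the values your model’s metadata reports.

```python
"""Rough VRAM budget: 1-bit weights + FP16 KV cache + fixed overhead.

Every architecture number in EXAMPLE is a hypothetical placeholder, not
Bonsai-1.7B's published configuration.
"""
from dataclasses import dataclass

GB = 1e9  # decimal gigabytes, matching the figures in the text


@dataclass
class ModelShape:
    n_params: float         # total number of weights
    bits_per_weight: float  # 1.125 for Q1_0_g128
    n_layers: int
    n_kv_heads: int         # key/value heads (fewer than query heads with GQA)
    head_dim: int


def weights_gb(m: ModelShape) -> float:
    return m.n_params * m.bits_per_weight / 8 / GB


def kv_cache_gb(m: ModelShape, n_ctx: int, bytes_per_elem: int = 2) -> float:
    # One K and one V tensor per layer, each n_ctx x n_kv_heads x head_dim elements.
    return 2 * m.n_layers * n_ctx * m.n_kv_heads * m.head_dim * bytes_per_elem / GB


def fits(m: ModelShape, n_ctx: int, vram_gb: float, overhead_gb: float = 1.0) -> bool:
    """overhead_gb is an assumed allowance for compute buffers and the desktop."""
    return weights_gb(m) + kv_cache_gb(m, n_ctx) + overhead_gb <= vram_gb


EXAMPLE = ModelShape(n_params=1.72e9, bits_per_weight=1.125,
                     n_layers=28, n_kv_heads=8, head_dim=128)  # hypothetical shape

if __name__ == "__main__":
    for n_ctx in (4096, 32768, 131072):
        kv = kv_cache_gb(EXAMPLE, n_ctx)
        print(f"ctx={n_ctx:>6}: KV {kv:5.2f} GB | fits 8 GB: {fits(EXAMPLE, n_ctx, 8)}"
              f" | fits 16 GB: {fits(EXAMPLE, n_ctx, 16)}")
```

Under these placeholder numbers, the KV cache (about 0.47 GB at 4,096 tokens and 3.76 GB at 32,768) quickly outgrows the 0.24 GB of weights, which is why extra VRAM mostly buys context length rather than room for the model itself.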

From GGUF to Tokens: Installing and Benchmarking Bonsai

Once CUDA is working, the next step is to run local LLM inference. Bonsai ships as GGUF model files designed for llama.cpp, which loads the quantized weights, runs the transformer layers, and streams tokens. After placing the Bonsai GGUF file in your models folder, point your llama.cpp build or Python wrapper at it and run a simple prompt to confirm everything works. Performance is best measured in tokens per second. The Bonsai tutorial includes a benchmark function that, for example, generates 128 tokens several times and reports the average throughput. In published results, an RTX 4090 with CUDA delivers around 674 tokens per second on the TG128 (128‑token generation) benchmark. Your own numbers will vary with your GPU, drivers, and settings, but this gives a useful reference point. You can tweak context length, batch size, and sampling parameters to trade speed for quality. Watching how those changes affect tokens per second helps you tune your setup for either snappy chat or slower, higher‑quality generations.
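A benchmark in the spirit of the one described above can be sketched in a few lines of Python. The `generate(n)` interface is an assumption: any callable that asks the model for up to `n` new tokens and returns how many it actually produced. The commented llama‑cpp‑python wiring uses a placeholder model path and assumes your build of that wrapper supports the Bonsai format; this is not the tutorial’s own function.

```python
"""TG128-style throughput benchmark: average tokens/second over several runs.

`generate(n)` is a hypothetical interface: any callable that asks the model
for up to n new tokens and returns how many it actually produced.
"""
import statistics
import time
from typing import Callable


def benchmark(generate: Callable[[int], int], n_tokens: int = 128,
              runs: int = 5, warmup: int = 1) -> float:
    """Return mean tokens/second across `runs` timed generations."""
    for _ in range(warmup):
        generate(n_tokens)  # untimed: the first call often pays one-off setup costs
    rates = []
    for _ in range(runs):
        start = time.perf_counter()
        produced = generate(n_tokens)  # may be < n_tokens if the model stops early
        elapsed = time.perf_counter() - start
        rates.append(produced / elapsed)
    return statistics.mean(rates)


def fake_generate(n_tokens: int) -> int:
    """Stand-in model that "produces" tokens at roughly 1,000 tokens/second."""
    time.sleep(n_tokens / 1000)
    return n_tokens


# With llama-cpp-python (the model path is a placeholder), a real generator could be:
#   from llama_cpp import Llama
#   llm = Llama(model_path="models/<bonsai-file>.gguf", n_gpu_layers=-1)
#   generate = lambda n: llm("Hello", max_tokens=n)["usage"]["completion_tokens"]

if __name__ == "__main__":
    print(f"{benchmark(fake_generate, runs=3):.0f} tokens/s")
```

Counting the tokens actually produced, rather than assuming all 128 arrived, keeps early stops from inflating the result. llama.cpp’s own `llama-bench` tool also runs a tg128 test by default, which makes a convenient cross‑check.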

Real‑World Uses: Chat, JSON Tools, and Lightweight RAG

With a tuned 1‑bit Bonsai model, your gaming PC becomes a flexible offline assistant. A simple multi‑turn chat wrapper can accumulate the system prompt, user messages, and previous replies into a rolling context, giving you a privacy‑friendly alternative to cloud chatbots. By constraining both the prompt and the decoding (for example, with llama.cpp’s grammar‑based sampling), you can nudge the model to emit structured JSON for tasks like note extraction, to‑do lists, or quick prototyping of tool‑calling workflows. For many enthusiasts, the most exciting workflow is lightweight retrieval‑augmented generation (RAG) over local files. By pairing Bonsai with an embedding model and a small vector store, you can index documents, code, or notes, then feed the retrieved snippets into the prompt. Because 1‑bit quantization keeps the model compact, most of your GPU and RAM budget stays free for context and retrieval. This is an effective way to run features such as search, summarization, and Q&A entirely on your own hardware, with full control over your data.
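A lightweight RAG loop needs little more than similarity search and careful prompt assembly. The sketch below retrieves the most similar snippets with cosine similarity over an in‑memory list, keeps a rolling window of recent chat turns, and pulls a JSON object out of a reply. The toy vectors stand in for a real embedding model, the function names are illustrative, and the message format assumes the OpenAI‑style role/content dictionaries that llama‑cpp‑python’s `create_chat_completion` accepts.

```python
"""Lightweight RAG helpers: retrieval, rolling chat context, JSON parsing.

Assumptions: vectors come from a separate embedding model (toy values here)
and messages use OpenAI-style {"role", "content"} dicts.
"""
import json
import math


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], store: list[tuple[str, list[float]]], k: int = 2) -> list[str]:
    """Return the k stored texts whose vectors are most similar to the query."""
    ranked = sorted(store, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_messages(system: str, history: list[dict], question: str,
                   snippets: list[str], max_turns: int = 4) -> list[dict]:
    """System prompt + last `max_turns` user/assistant pairs + grounded question."""
    recent = history[-2 * max_turns:] if max_turns > 0 else []
    context = "\n".join(f"- {s}" for s in snippets)
    return ([{"role": "system", "content": system}] + recent +
            [{"role": "user", "content": f"Context:\n{context}\n\nQuestion: {question}"}])


def parse_json_reply(text: str):
    """Parse the span from the first '{' to the last '}' as JSON, else None."""
    start, end = text.find("{"), text.rfind("}")
    if start == -1 or end < start:
        return None
    try:
        return json.loads(text[start:end + 1])
    except json.JSONDecodeError:
        return None


if __name__ == "__main__":
    store = [("The GPU has 8 GB of VRAM.", [1.0, 0.0, 0.0]),
             ("Bonsai weights use Q1_0_g128.", [0.0, 1.0, 0.0]),
             ("Models live on the SSD.", [0.0, 0.0, 1.0])]
    hits = top_k([0.9, 0.1, 0.0], store, k=1)
    print(hits)  # ['The GPU has 8 GB of VRAM.']
    print(build_messages("Answer from the context.", [], "How much VRAM?", hits))
    print(parse_json_reply('Sure: {"todo": ["buy an SSD"]}'))  # {'todo': ['buy an SSD']}
```

In a real loop you would pass the returned messages to `llm.create_chat_completion(messages=...)`, read the reply from `["choices"][0]["message"]["content"]`, and append the question and reply to `history`. Post‑hoc parsing like `parse_json_reply` is a fallback; grammar‑constrained decoding can enforce valid JSON while the model is generating.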

Troubleshooting and Why PC Builders Should Care

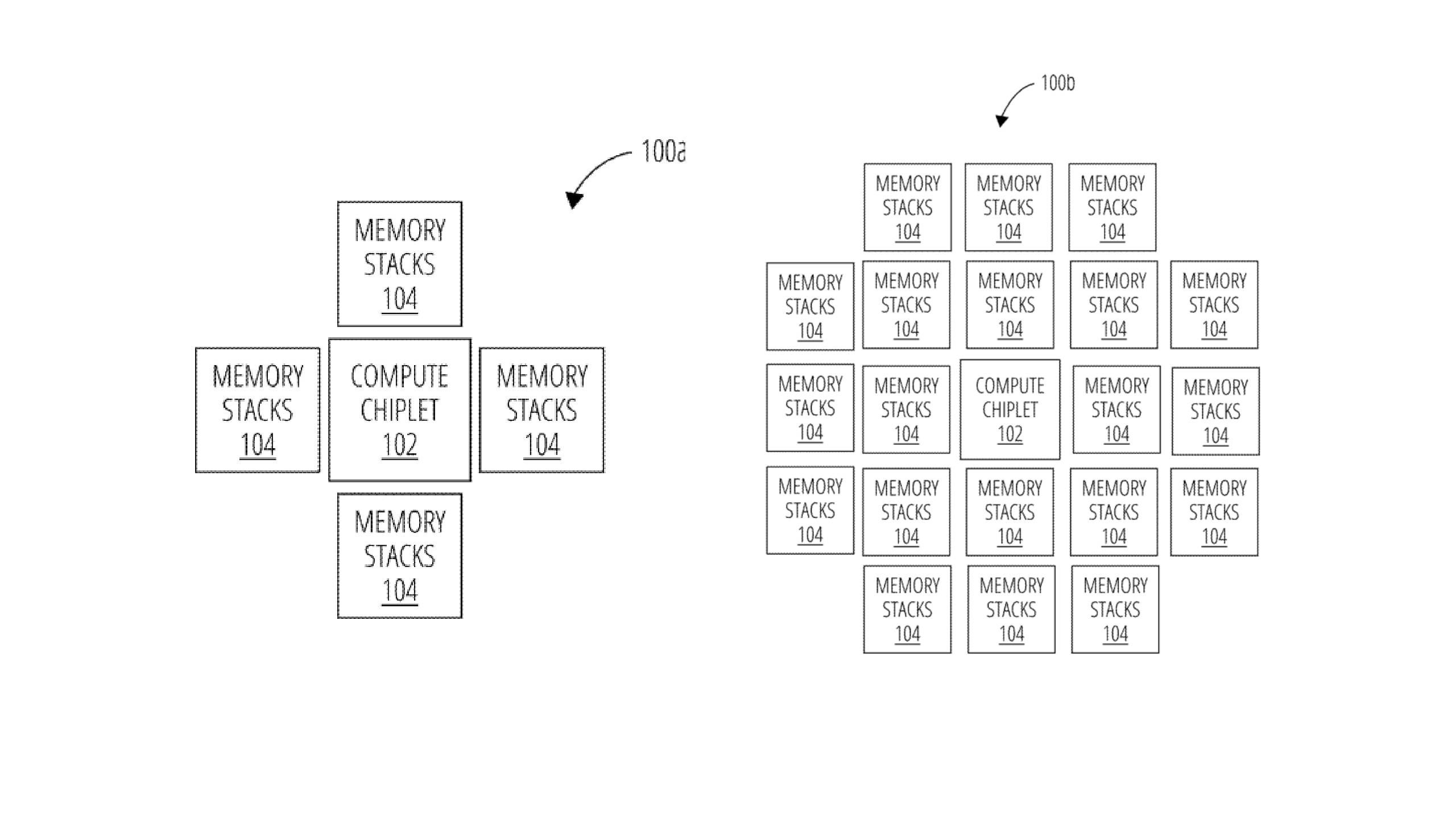

Running a 1‑bit LLM is still a real GPU workload, so expect some tuning and troubleshooting. Mismatched CUDA and driver versions, missing CUDA runtime or cuBLAS libraries, and llama.cpp binaries compiled for the wrong compute capability are common culprits when the model fails to load or refuses to use the GPU. Checking that your CUDA toolkit matches your installed driver, validating the GGUF model filename and quantization type, and watching the console logs for explicit error messages go a long way toward resolving issues. From a hardware perspective, local AI is another argument for caring about VRAM capacity, cooling, and power delivery. Even with extreme compression, longer contexts and multi‑model setups benefit from more memory, echoing the broader AI industry’s push to stack more memory close to compute. For PC enthusiasts, that means GPU choice and airflow matter not just for frame rates, but also for how smoothly you can run local LLM experiments, RAG pipelines, and other GPU‑accelerated AI tools.
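Before digging into driver versions, it can help to rule out the model file itself. This sketch checks that a file starts with the four GGUF magic bytes, that its name mentions the expected quantization type, and that `nvidia-smi` is reachable on your PATH. The filename convention and function names are assumptions for illustration; the authoritative quantization type lives in the GGUF metadata, which llama.cpp typically prints when it loads a model.

```python
"""Pre-flight checks before blaming CUDA for a model that will not load.

GGUF files begin with the 4-byte magic b"GGUF". The filename test is only a
heuristic that assumes the quantization type appears in the file name.
"""
import shutil
import tempfile
from pathlib import Path


def check_gguf(path: str, expected_quant: str = "Q1_0_g128") -> list[str]:
    """Return a list of problems found; an empty list means nothing obvious."""
    p = Path(path)
    if not p.is_file():
        return [f"{p} does not exist or is not a file"]
    problems = []
    with p.open("rb") as f:
        if f.read(4) != b"GGUF":
            problems.append("missing GGUF magic: wrong file type or corrupt download")
    if expected_quant.lower() not in p.name.lower():
        problems.append(f"filename does not mention {expected_quant}")
    return problems


def nvidia_smi_on_path() -> bool:
    """True if nvidia-smi (normally installed with the NVIDIA driver) is on PATH."""
    return shutil.which("nvidia-smi") is not None


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        fake = Path(tmp) / "bonsai-1.7b-Q1_0_g128.gguf"  # hypothetical file name
        fake.write_bytes(b"GGUF" + (3).to_bytes(4, "little"))
        print(check_gguf(str(fake)))  # []
    print("nvidia-smi found:", nvidia_smi_on_path())
```

A file that fails the magic check is often an HTML error page or a truncated download saved under a `.gguf` name, which no amount of CUDA reconfiguration will fix.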