From Power-Hungry Brains to Brain-Like AI Chips

The biggest AI hardware breakthrough on the horizon may have nothing to do with bigger models and everything to do with how they’re powered. Researchers at the University of Cambridge have built a brain-like AI chip using a nanoelectronic device called a memristor, based on a modified hafnium oxide film. Instead of shuttling data endlessly between separate compute and memory units, this neuromorphic design stores and processes information in the same place, much like neurons in the brain. Early results suggest AI systems built on such hardware could cut energy use by up to 70% while maintaining highly stable operation over many switching cycles. This makes the technology especially promising for always‑on workloads, from recommendation engines to on‑device assistants, where today’s power-hungry accelerators are hitting practical limits in data centers and mobile devices alike.
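
To make "stored and processed in the same place" concrete, here is a minimal sketch of the idealized textbook model of a memristor crossbar, not the Cambridge team's actual design. Weights are held as conductances, inputs arrive as row voltages, and each column current is the sum of voltage times conductance, so the multiply-accumulate happens where the weights live. The function name and the numbers are hypothetical, and real devices add noise, wire resistance and nonlinearity that this ignores.

```python
def crossbar_mvm(conductances, voltages):
    """Idealized crossbar: column current I_j = sum over rows i of V_i * G[i][j]."""
    rows = len(conductances)
    cols = len(conductances[0])
    if len(voltages) != rows:
        raise ValueError("one input voltage per row is required")
    currents = [0.0] * cols
    for i in range(rows):
        for j in range(cols):
            # Ohm's law per cell; currents on a shared column wire add up.
            currents[j] += voltages[i] * conductances[i][j]
    return currents


# Hypothetical 3x2 array of stored weights (as conductances) and 3 inputs.
G = [[1.0, 2.0],
     [3.0, 4.0],
     [5.0, 6.0]]
V = [1.0, 0.5, 2.0]
print(crossbar_mvm(G, V))  # [12.5, 16.0]
```

In this model the weights never leave the array. Only the input voltages and the output currents cross its boundary, which is where the energy saving is expected to come from.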

Why Next-Gen AI Memory Technology Matters as Much as GPUs

Raw compute only goes so far if memory can’t keep up. NEO Semiconductor’s 3D X-DRAM proof of concept points to a shift in AI memory technology that mirrors the move from 2D to 3D NAND in storage. Their test chips show that vertically stacked DRAM can be built using existing 3D NAND infrastructure while delivering sub-10 nanosecond read/write latency, long data retention at high temperatures and endurance above 10¹⁴ cycles. This combination promises much denser, lower-cost memory tailored for AI workloads. For large language models in particular, feeding parameters to the processor fast enough is a major bottleneck. Denser, more efficient 3D DRAM could keep more of the model closer to the compute units, boosting throughput and cutting energy use. The same approach can shrink and harden memory for edge devices, enabling faster, more reliable inference without constant trips to the cloud.
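
A rough back-of-the-envelope calculation shows why parameter feeding becomes the bottleneck. The sketch below assumes a simplified model in which generating each token requires reading every weight from memory once, so memory bandwidth caps the token rate. The function name and all the numbers are hypothetical, chosen only for illustration. They are not figures for 3D X-DRAM or any specific product, and the model ignores caching, batching and compute time.

```python
def max_tokens_per_second(num_params, bytes_per_param, bandwidth_bytes_per_s):
    """Upper bound on token rate if every weight is read once per token."""
    model_bytes = num_params * bytes_per_param
    return bandwidth_bytes_per_s / model_bytes


# Hypothetical example: 7 billion parameters at 2 bytes each (16-bit),
# served from memory that delivers 1e12 bytes per second.
rate = max_tokens_per_second(7e9, 2, 1e12)
print(f"at most about {rate:.0f} tokens per second")

# Under this model, doubling memory bandwidth doubles the ceiling,
# regardless of how much raw compute is available.
print(max_tokens_per_second(7e9, 2, 2e12) / rate)
```

Under these assumptions the ceiling is set entirely by memory, which is why faster, denser memory placed closer to the compute units can matter as much as a faster processor.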

The Wearable AI Chip That Breaks the Old Rules

Anker’s THUS chip shows how a wearable AI chip can turn under‑the‑hood innovation into visible benefits. Built as a compute‑in‑memory (CIM) processor, THUS performs neural network calculations directly inside NOR flash memory cells instead of relying on a traditional CPU–memory dance. Because the model weights and the math sit in the same physical location, data movement, and with it energy use, drops sharply. NOR flash is already known for low‑power reads, making it well suited to the tiny batteries in Bluetooth earbuds and other wearables. By fitting larger models on-device, THUS points to a future in which many assistants, translators and health monitors run locally rather than streaming everything to remote servers. Users gain lower latency, offline reliability and better privacy, while device makers unlock richer features without sacrificing battery life, all thanks to a fundamental rethinking of how and where AI computation happens.

Infrastructure, Not Just Models: Fabs, Labs and Quantum Tools

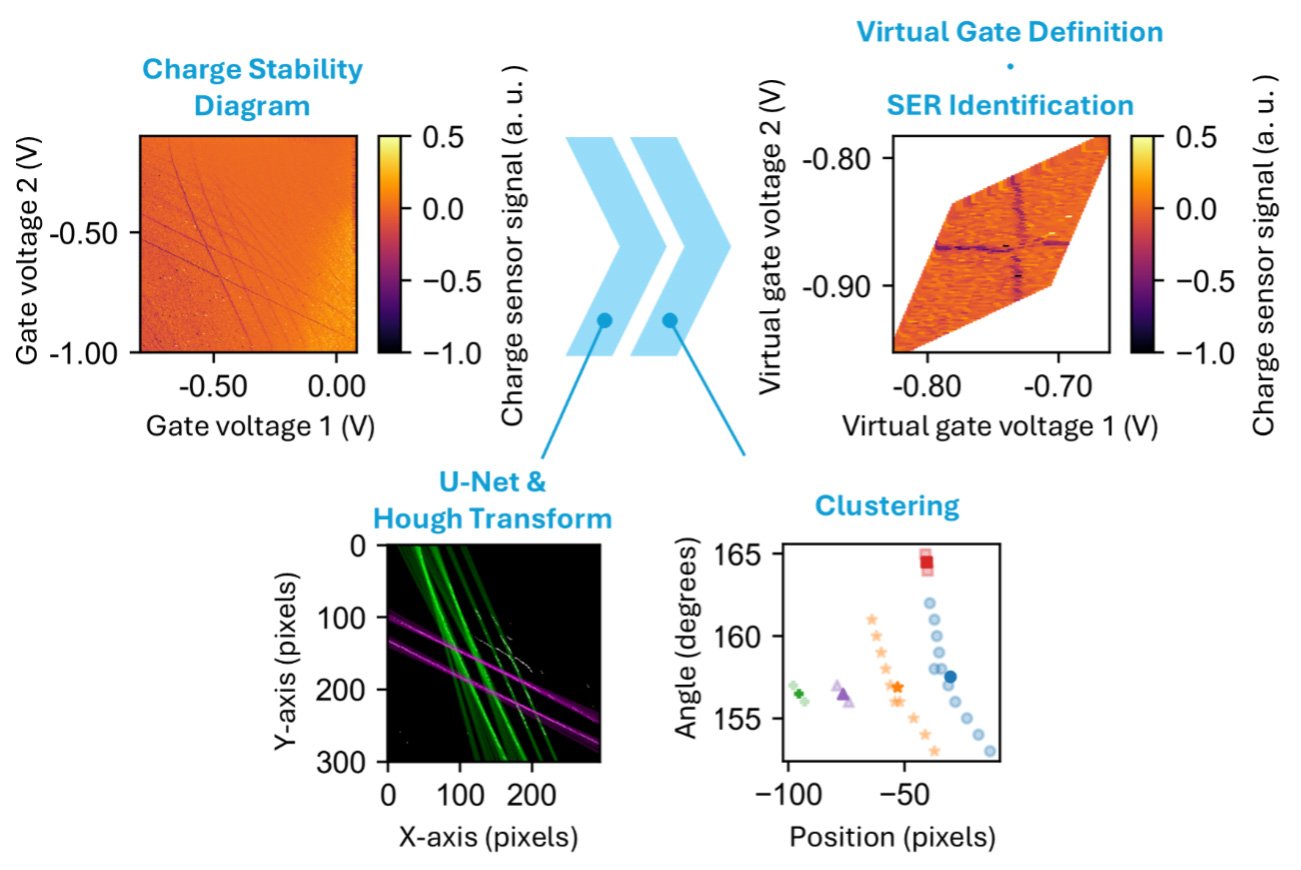

Across the industry, a pattern is emerging: the next AI wave is being built through infrastructure, not just clever model architectures. One example is Musk’s Terafab initiative, where Tesla, SpaceX and xAI plan to use Intel’s advanced 14A process to develop AI chips, backed by a research fab capable of producing a few thousand wafers per month. This kind of vertical integration aims to secure massive, tailored compute for robotics and autonomous systems. In parallel, Cognizant’s AI Lab is attacking costs on the training side, using evolution strategies to fine‑tune large language models more efficiently than traditional reinforcement learning. Even quantum hardware is getting an AI boost: researchers are applying U‑Net models to automatically tune semiconductor spin qubits by extracting charge transition lines from complex diagrams. Together, these efforts signal an ecosystem shift where specialized fabs, smarter training pipelines and AI‑assisted hardware tuning become core drivers of progress.
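
For readers unfamiliar with evolution strategies, the sketch below shows the general idea on a toy problem. It is not Cognizant's method, and the function names, hyperparameters and reward are all hypothetical. The loop perturbs the parameters with random noise, scores each perturbed copy, and moves the parameters toward the perturbations that scored better than average. No gradients of the reward are needed, only scores.

```python
import random


def evolution_strategy(reward, theta, sigma=0.1, lr=0.05,
                       population=50, steps=200, seed=0):
    """Basic Gaussian-perturbation ES with normalized rewards (toy sketch)."""
    rng = random.Random(seed)
    theta = list(theta)
    dim = len(theta)
    for _ in range(steps):
        # Sample one noise vector per population member.
        noises = [[rng.gauss(0.0, 1.0) for _ in range(dim)]
                  for _ in range(population)]
        # Score each perturbed copy of the parameters.
        rewards = [reward([t + sigma * e for t, e in zip(theta, eps)])
                   for eps in noises]
        mean = sum(rewards) / population
        std = (sum((r - mean) ** 2 for r in rewards) / population) ** 0.5 or 1.0
        # Step toward noise directions that scored above average.
        for d in range(dim):
            step = sum(((r - mean) / std) * eps[d]
                       for r, eps in zip(rewards, noises)) / population
            theta[d] += lr * step
    return theta


# Toy reward standing in for a model's score: highest at the target point.
TARGET = [1.0, -2.0]


def toy_reward(params):
    return -sum((p - t) ** 2 for p, t in zip(params, TARGET))


start = [0.0, 0.0]
tuned = evolution_strategy(toy_reward, start)
print(toy_reward(start), toy_reward(tuned))
```

In a fine‑tuning setting, the toy reward would be replaced by a score computed from the model's outputs, and the parameter vector would be far larger. The appeal is that each population member can be evaluated independently, using only forward passes.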

From Digital Rails to Everyday Devices: What Changes for You

The convergence of AI and digital public infrastructure suggests that the most important breakthroughs may arrive quietly, embedded in the systems we already use. Digital identity, instant payments and consent-based data exchanges form shared rails that public and private providers build on. As AI is layered onto these rails to help translate languages, detect patterns and personalize services, reliability, interoperability and trust matter as much as raw model intelligence. At the same time, brain-like AI chip designs, advanced memory and wearable AI chips will let more capabilities run at the edge. Consumers can expect cheaper, more efficient AI features on phones, earbuds and home devices, with less reliance on always‑connected cloud services. Enterprises gain new options for keeping data local, cutting latency and controlling infrastructure costs. The trade‑offs will revolve around where to run which workloads, balancing privacy, performance and power across a rapidly evolving AI infrastructure.