From Vision Pro Centerpiece to Smart Glasses First

Apple is quietly reshaping its spatial computing strategy, shifting engineering priority away from enclosed Vision headsets and toward lightweight smart glasses and other wearables. The once-separate Vision Products Group was dismantled over a year ago, with staff folded back into broader hardware and software teams. Former headset lead Mike Rockwell now spends most of his time on a combined Siri and visionOS organization, signaling that software and intelligence matter more than a rapid hardware sequel. Apple has reportedly scaled back Vision Pro production after weak demand at a USD 3,499 (approx. RM16,000) entry price and canceled a cheaper “Vision Air” variant, with any new enclosed headset said to be at least two years away. Internally, leadership now frames Vision Pro as a technical stepping stone, not the endgame. The ultimate goal is everyday AR glasses that look and feel like normal eyewear.

AI at the Core of Apple’s Spatial Computing Strategy

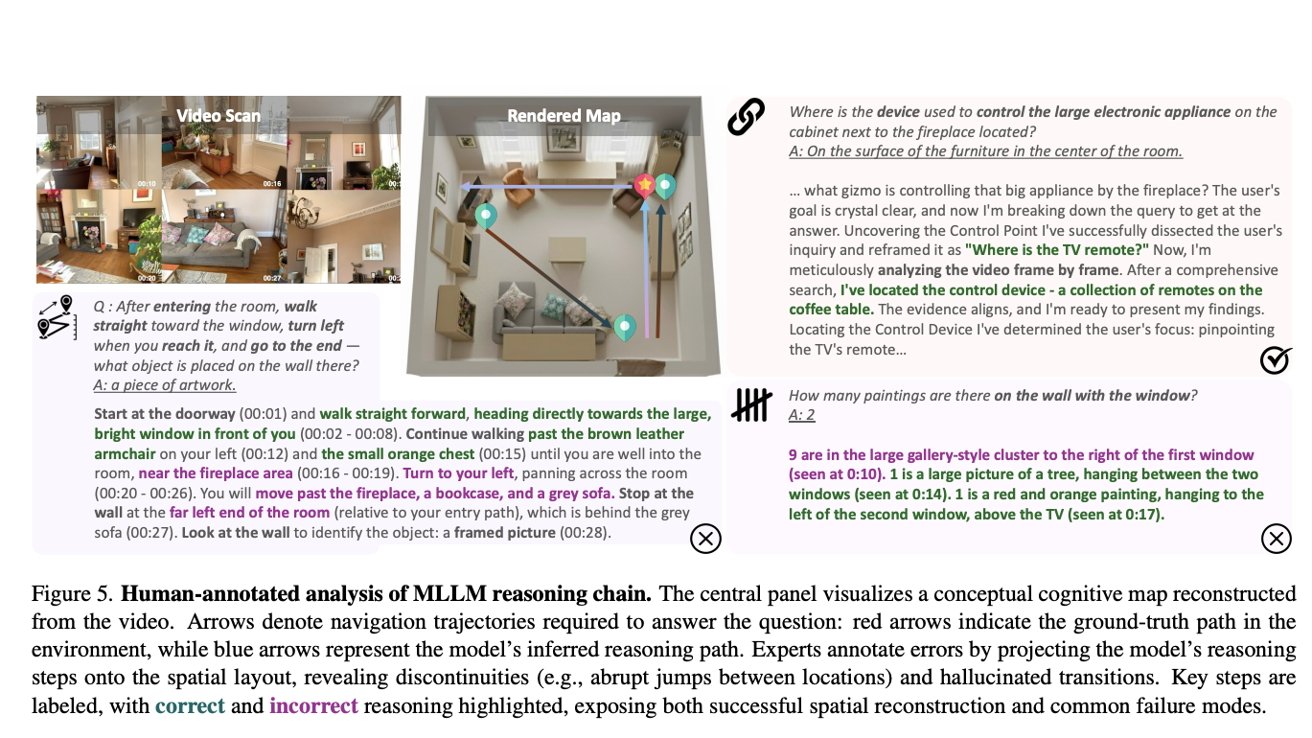

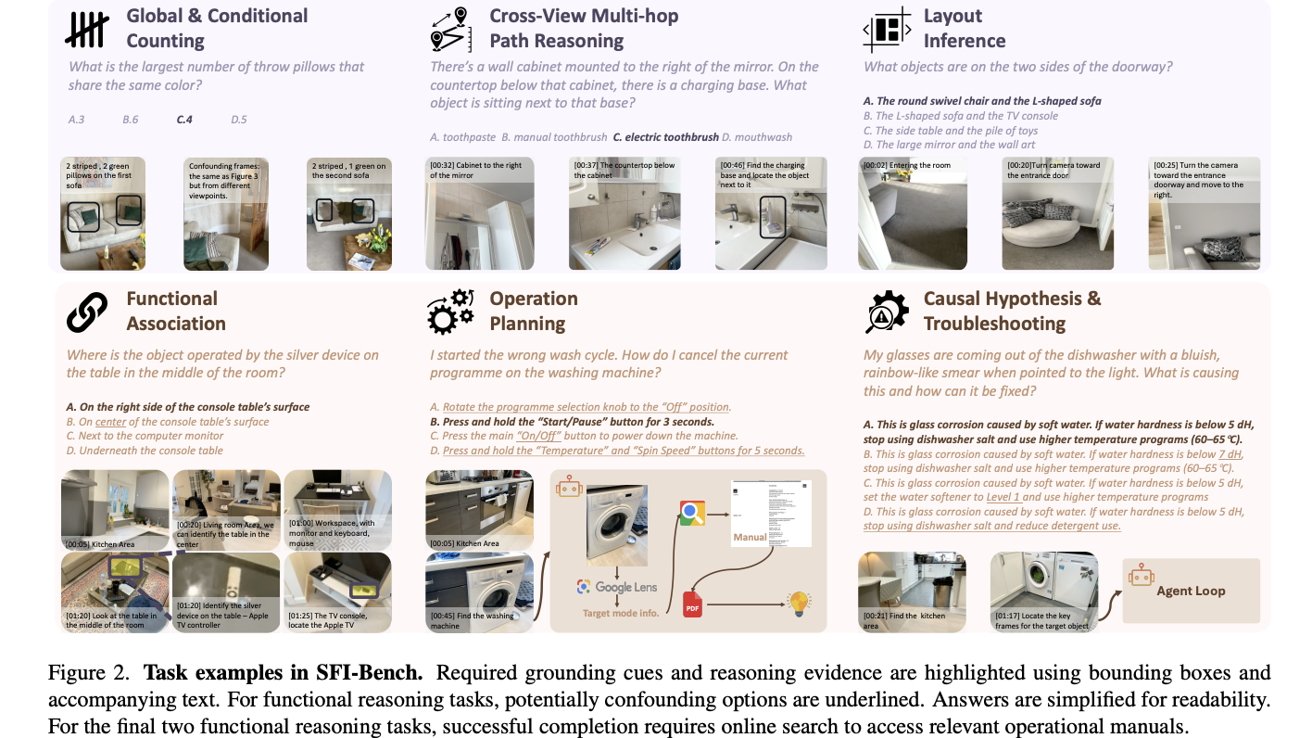

Even as Apple deprioritizes a near-term Vision Pro follow-up, its investment in AI-driven spatial computing is accelerating. Recent studies from Apple’s machine learning teams focus on giving multimodal large language models a human-like understanding of physical spaces. One project introduces the Spatial-Functional Intelligence Benchmark (SFI-Bench), a video-based test suite that probes how well models grasp not just where objects are, but what they are for and how they are operated or fixed. This type of spatial-functional reasoning is crucial for AR glasses that must interpret a user’s environment in real time and offer relevant guidance, such as identifying a device, locating its controls, or suggesting troubleshooting steps. Rather than betting solely on immersive VR, Apple appears to be building the AI foundation for context-aware assistants that live inside subtle, always-on wearables.

Sign Language, Accessibility, and the Future of Apple Wearables

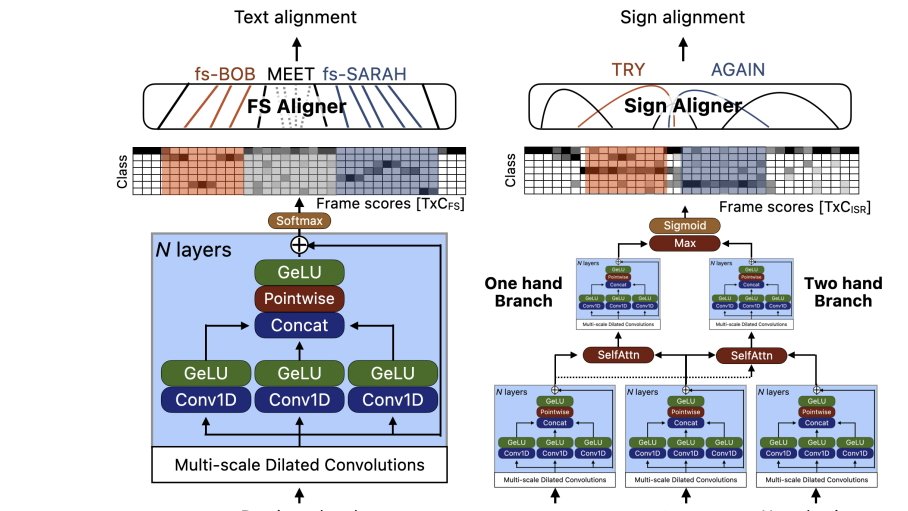

Apple’s spatial computing research also points toward more inclusive and practical wearables. A separate study explores using AI models to bootstrap sign language annotation from video, combining signed footage with English input to generate likely glosses, fingerspelled words, and classifiers. The team reports state-of-the-art performance on key sign language datasets using relatively modest GPU resources. This work could underpin real-time or near-real-time sign language understanding in camera-equipped devices, tying into long-rumored AirPods with built-in cameras that feed environmental context to Siri and Apple Intelligence. Taken together, the spatial reasoning benchmark and the sign language research suggest Apple is less focused on spectacular VR showpieces and more on everyday assistance (translation, instructions, accessibility) delivered through lightweight AR glasses and subtle audio wearables rather than a bulky headset.

What the Pivot Means for the AR Glasses Market

Apple’s move away from enclosed headsets aligns with a broader industry turn toward practical AR glasses over fully immersive VR. By treating Vision Pro as a necessary stepping stone instead of a mass-market endpoint, Apple is signaling that mainstream spatial computing must be comfortable, socially acceptable, and useful in short, frequent interactions. Updates to visionOS are expected to remain modest as engineering attention shifts to AI models, Siri integration, and camera-equipped wearables that can power future Apple smart glasses. For the AR glasses market, this suggests competition will center less on resolution and field of view, and more on spatial-functional intelligence, accessibility features, and how seamlessly devices mesh with daily life. Consumers should expect fewer “VR worlds” and more ambient, context-aware help woven through the devices they already wear.