From Operating System to Intelligence System

With Android 17, Google says the platform is no longer just an operating system but an “intelligence system,” and Gemini is the reason. The new release puts Gemini at the center of everyday phone use, going beyond voice commands and simple suggestions. Instead of requiring you to hop through menus manually, Gemini-powered agents are designed to quietly manage tasks in the background, stepping in when they can save you time. As a result, Android 17 features are increasingly framed around what Gemini can do on your behalf, not just which apps you can open. The reorientation is a strategic bid to define the next era of mobile computing around AI control of the smartphone, positioning Gemini as the primary way you get things done while apps and system settings recede further into the background.

Gemini Intelligence: Deeper App Control and Automation

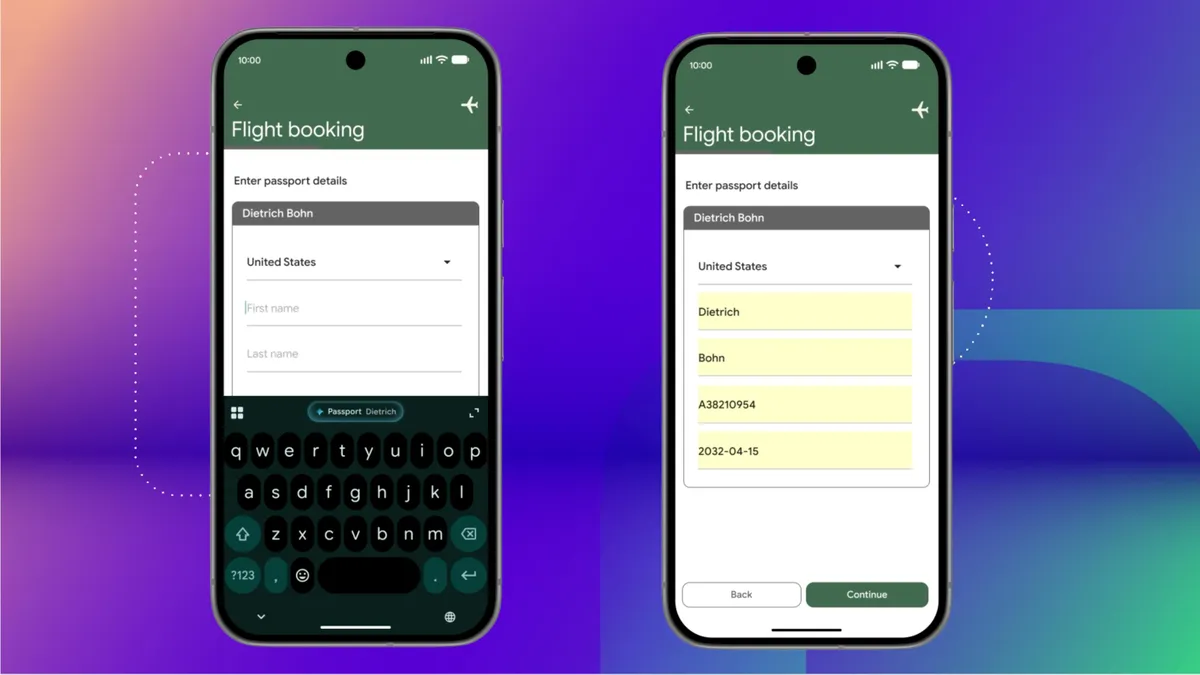

Gemini Intelligence is Google’s name for the expanded layer of smarts running across Android 17. Instead of merely answering questions, Gemini on Android can now coordinate multiple apps at once. You can ask it to schedule a dentist appointment, build a grocery delivery order from a list in your notes app, or scan a photo of a brochure and find a tour that matches your group size. It can autofill complex forms by pulling data from services like Google Drive, including details such as passport or driver’s license numbers, and even generate custom widgets from a simple prompt to show exactly the information you care about. Underneath familiar Android screens, Gemini becomes an agent that understands context, pulls the right information from connected apps, and executes multi-step actions end to end, so your phone behaves more like a proactive assistant than a collection of isolated icons.

AI-First Smartphone Interaction and Design

This shift to Gemini Intelligence is also reshaping Android’s look and feel. Google’s Material Expressive design refinements aim to make Gemini “melt into the background,” surfacing only when needed. Subtle visual cues now indicate when Gemini is listening, thinking, or working on a task, so you can see at a glance whether the AI is still processing or has finished. Rather than flashy effects, the design focuses on clarity and trust, which matter when an AI is making decisions inside your most personal device. Features like Chrome Auto Browse, which can plan a party or hunt down hard-to-find items for you, reinforce the idea that you start with a goal and let Gemini figure out which apps and services to use. The deeper the integration, the more Android 17 feels like an AI-first experience, where tapping icons becomes optional rather than essential.

What Users Can Expect as Gemini Spreads Across Devices

Gemini Intelligence is rolling out first to premium Android phones, including recent Google Pixel and Samsung Galaxy models. Google describes it as a unified layer that also extends to Android Auto, Wear OS, and even smart glasses. For users, the promise is consistent assistance that understands personal context across screens: start planning an event on your phone, get driving help through Android Auto, and receive timely nudges on your watch without re-explaining what you need. Google is positioning this as a free upgrade on supported high-end devices, creating an incentive to stay in the Android ecosystem as AI control of the phone becomes a differentiator. As Apple and Microsoft prepare their own responses, Android 17’s Gemini Intelligence push is setting the expectation that your next phone won’t just run apps. It will coordinate them for you, often before you even ask.