From Data Desks to Deskside Robots: A New Role for AI Agents

AI agents have mostly lived in the digital realm, automating tasks like summarizing documents, drafting emails, or writing code. Now they are beginning to step out of the browser and into the physical world, orchestrating robots in real time. This marks a pivotal shift in robot control: instead of merely advising humans, AI agents are increasingly directing the movements and behaviors of physical machines. The implications go beyond convenience. When software that once managed data workflows starts reading sensors and driving actuators and motors, automation becomes far more tangible, and potentially more disruptive. The shift is driven by advances in natural language programming, where users describe what they want in everyday language and an AI system translates that intent into robot behavior. The result is a new generation of AI agents for robotics that blur the line between software automation and real-world action.

Hugging Face’s Agentic Toolkit: Natural Language as a Robot Remote

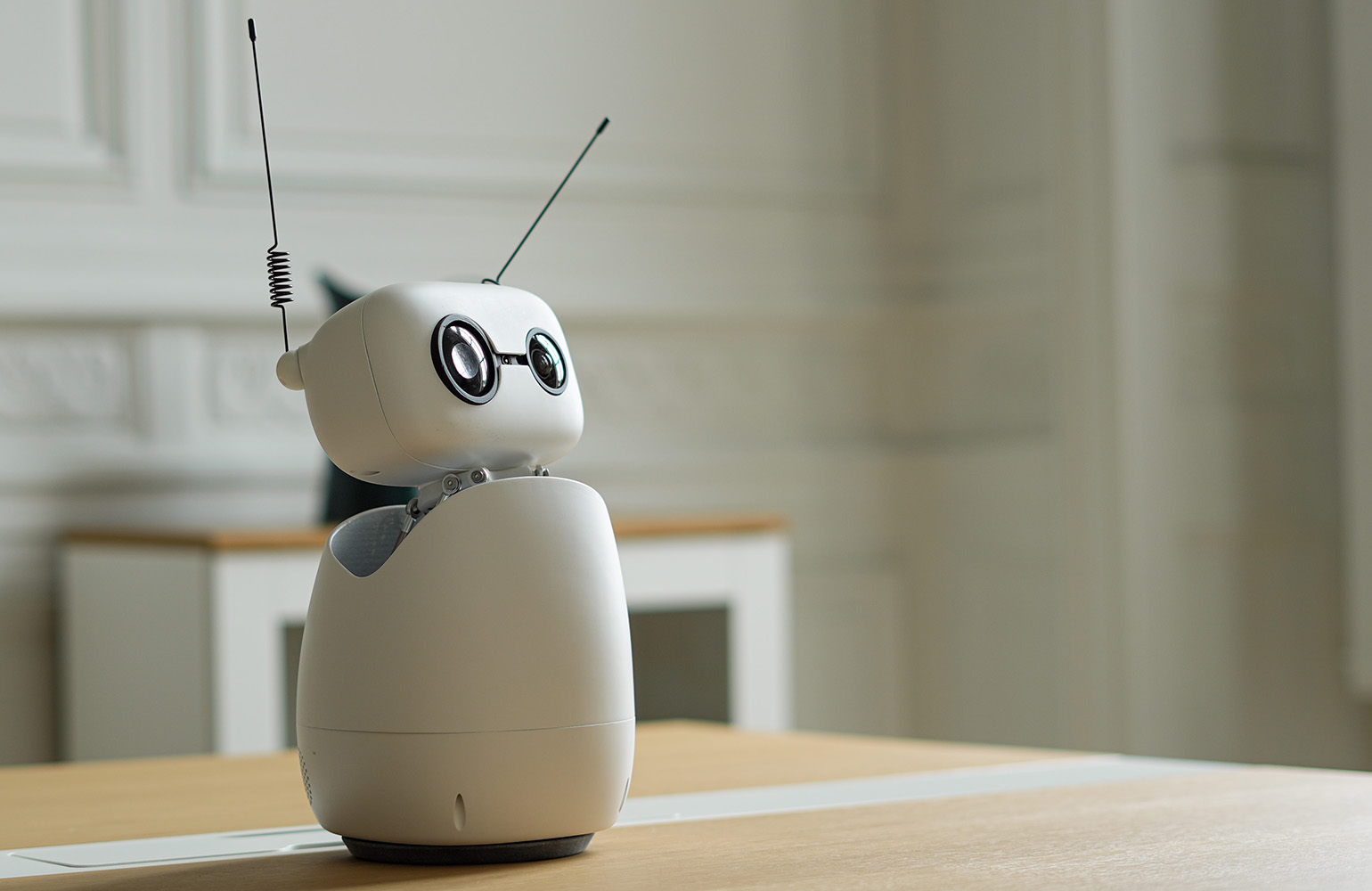

Hugging Face’s agentic toolkit for the Reachy Mini desktop robot offers an early, concrete glimpse of AI agents for robotics in action. Users describe the behavior they want in plain English, and an AI agent writes, tests, and deploys the code directly to the robot, often in under an hour and without a single line of manual programming. By cutting the expertise, integration time, and hardware knowledge required, the toolkit turns natural language programming into a practical interface for physical robots. An open app store hosted on the Hugging Face Hub lets people share, fork, and customize robot apps with one click, further lowering the barrier to experimentation. Every app can also run in a browser-based simulator, so creators can prototype robot behavior before touching any hardware. This architecture positions AI agents not just as coding assistants, but as full-stack orchestrators of physical robot behavior.

Real-World Example: A Non-Programmer Builds a Robotic Co‑Facilitator

The potential of AI-controlled physical systems is best illustrated by early users. One retired marketing executive, with no robotics or software background, assembled a Reachy Mini Lite and then built a voice-controlled “co‑facilitator” for his online CEO peer groups. Using only natural language descriptions, he had an AI agent generate the entire application: no SDK, no manual coding. The small desktop robot now wakes on a spoken cue, greets participants by name, manages question banks, challenges shallow answers, and summarizes key themes mid-session. In effect, a non-programmer created a tailored, embodied assistant that blends conversation, memory, and motion. This case shows how AI agents can convert human intent into nuanced physical behaviors, turning robots from static gadgets into adaptable collaborators. It also shows robot control moving out of engineering labs and into everyday professional workflows.

Enterprises Eye AI-Controlled Robotics as Summits Showcase New Demos

While desktop robots showcase accessibility, larger industrial and enterprise players are also signaling interest in AI-guided physical systems. Technology vendors such as QNX are highlighting robotics demonstrations at industry summits, using real hardware to show how AI-driven control stacks can orchestrate sensors, actuators, and safety-critical software in concert. These events serve as testbeds for combining traditional real-time operating systems with AI agents that interpret high-level goals and translate them into safe, deterministic robot commands. For enterprises, the appeal lies in flexible automation: instead of reprogramming robots line by line, operators specify desired outcomes and let AI handle the low-level coordination. If further demonstrations establish reliability and robustness, this approach moves closer to production environments such as manufacturing lines, logistics hubs, healthcare, and service robotics, expanding the footprint of AI agents beyond cloud dashboards into the heart of operational infrastructure.
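The division of labor described here, in which an AI agent proposes a goal and a deterministic layer decides what the hardware actually does, might look roughly like the following Python sketch. The `JointLimit` type, the `safe_target` function, and every numeric limit are hypothetical illustrations, not part of QNX's or any other vendor's software.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JointLimit:
    """Hypothetical per-joint bounds; real limits come from the robot vendor."""
    min_deg: float
    max_deg: float
    max_step_deg: float  # largest allowed change per control tick


def safe_target(current_deg: float, proposed_deg: float, limit: JointLimit) -> float:
    """Clamp an agent-proposed angle to the joint's range and per-tick step."""
    # First keep the requested angle inside the joint's absolute range.
    bounded = max(limit.min_deg, min(limit.max_deg, proposed_deg))
    # Then limit how far the joint may move in a single control tick.
    step = max(-limit.max_step_deg, min(limit.max_step_deg, bounded - current_deg))
    return current_deg + step


# Assumed example values: the agent asks for 140 degrees, well outside the range.
head_pan = JointLimit(min_deg=-90.0, max_deg=90.0, max_step_deg=5.0)
print(safe_target(current_deg=0.0, proposed_deg=140.0, limit=head_pan))  # prints 5.0
```

Because the clamp is plain arithmetic with no learned component, the same input always produces the same output, which is the property a safety-critical layer needs regardless of what the agent requests.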

Lower Barriers, Higher Stakes: What Comes Next for Physical AI Systems

Natural language interfaces are rapidly lowering the barriers to designing and customizing robot behavior. When anyone can turn instructions like “act as a language tutor” or “monitor me for distractions” into a functioning robot app, the creative potential of AI agents in robotics expands dramatically. But so do the stakes. Physical robots can knock things over, interrupt workflows, or misinterpret ambiguous commands, making safety, transparency, and oversight critical. The next phase of robot control will likely focus on guardrails: simulation environments to test behaviors, permission systems to constrain actions, and clear logging of AI-generated code. As tools like Hugging Face’s agentic platform and enterprise demos from companies such as QNX mature, we can expect a growing ecosystem where domain experts define goals in natural language while AI agents handle the engineering. The result could be a democratized, yet tightly governed, era of physical automation.
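Two of the guardrails mentioned above, a permission system and logging of AI-generated code, can be illustrated with a minimal Python sketch. It uses only the standard library. The `AppPermissions` class, the `record_generated_code` function, and the action names are assumptions made for illustration and do not describe Hugging Face's toolkit or any existing product.

```python
import hashlib
import logging
from dataclasses import dataclass

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("robot_guardrails")


@dataclass(frozen=True)
class AppPermissions:
    """Hypothetical allow-list of actions a generated robot app may perform."""
    allowed_actions: frozenset = frozenset()

    def check(self, action: str) -> bool:
        """Return True only for allow-listed actions, logging every decision."""
        permitted = action in self.allowed_actions
        log.info("action=%s permitted=%s", action, permitted)
        return permitted


def record_generated_code(source: str) -> str:
    """Log a SHA-256 digest of AI-generated code so it can be audited later."""
    digest = hashlib.sha256(source.encode("utf-8")).hexdigest()
    log.info("generated code sha256=%s", digest)
    return digest


# Assumed example: a language-tutor app may speak and nod, nothing else.
tutor_app = AppPermissions(allowed_actions=frozenset({"speak", "nod"}))
tutor_app.check("speak")     # allowed
tutor_app.check("move_arm")  # denied, because it is not on the allow-list
record_generated_code("print('hello from the robot')")
```

An allow-list denies anything not explicitly granted, so an ambiguous or misinterpreted command fails closed instead of reaching the motors.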