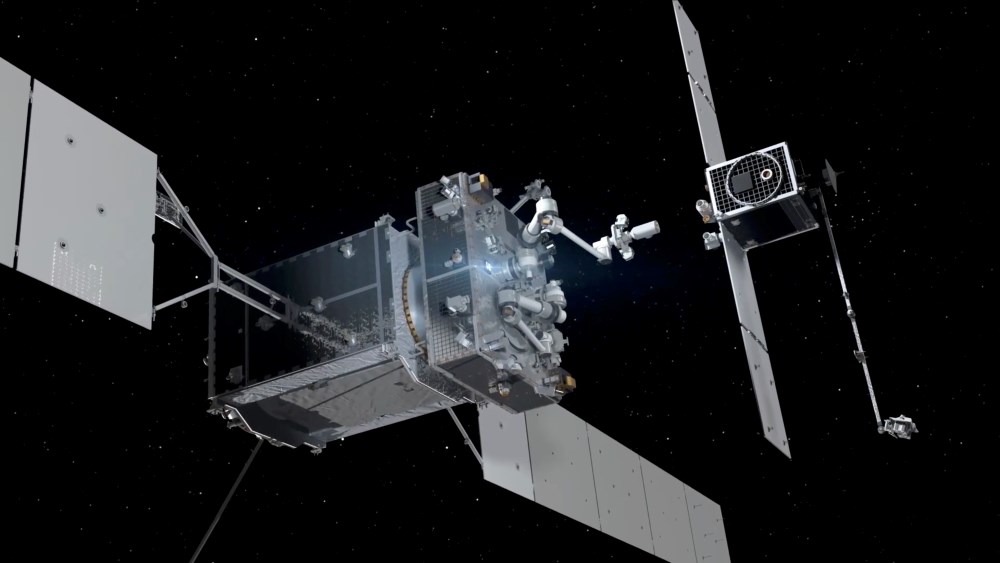

AI in aerospace: from years to hours in spacecraft design

In space engineering, AI is turning once‑intractable physics problems into near real‑time decisions. Northrop Grumman has teamed with Flexcompute, using NVIDIA’s PhysicsNeMo platform to build an AI model that predicts thruster impingement – the complex interaction between exhaust plumes and nearby structures. Analyses that previously took days now run roughly 100 times faster, effectively shrinking design cycles from long engineering stretches into work that can be executed between meetings. That speed matters for satellite servicing, orbital docking, and space robotics, where every thruster miscalculation risks damaged hardware or failed missions. The company frames this as life‑cycle compression: faster simulations enable more design iterations, earlier fault discovery, and ultimately more ambitious missions without stretching schedules indefinitely. For AI spacecraft design, this signals a shift from one‑off simulations to continuously updated digital engineering pipelines, where models run in the background and quietly de‑risk the next generation of orbital infrastructure.

Morphing wings and adaptive control: AI reshapes the aircraft itself

Aerospace engineers are also using AI to rethink the basic physics of flight. Under the morphAIR programme, the German Aerospace Centre (DLR) has flight‑tested AI‑controlled morphing wings on its PROTEUS unmanned aircraft. Instead of traditional flaps, slats and ailerons that deflect air in discrete steps, the wing surface itself flexes smoothly, preserving a clean aerodynamic profile. An adaptive AI controller monitors behaviour in real time and continuously updates the wing shape to match actual airflow, turbulence and structural loads. Early results show cleaner flow, lower drag, and more precise control in demanding conditions, moving morphing aerodynamics from the wind tunnel into real airspace. This is AI in aerospace as a deeply embedded control layer, not a cockpit gadget: software that understands the aircraft’s physics well enough to subtly reshape its structure mid‑flight. Over time, such systems could cut fuel burn, reduce noise, and unlock unconventional airframe layouts that traditional control surfaces cannot support.

Tesla’s AI spending wave and the new capex logic of autonomy

On the ground, AI’s shift from experiment to infrastructure is transforming capital budgets. Tesla has told investors it expects capital expenditures to exceed USD 25 billion (approx. RM115 billion) this year, nearly triple the USD 8.5 billion (approx. RM39 billion) it spent previously, with around USD 2.5 billion (approx. RM11.5 billion) already deployed in the first quarter. Executives link this surge directly to AI, as the company pivots from a pure car maker toward businesses built on autonomy, custom AI semiconductors and its Optimus humanoid robot. The move lands in a broader context where tech giants are projected to spend nearly USD 700 billion (approx. RM3.22 trillion) on capital expenditure, much of it AI‑related. For industrial AI, the signal is clear: autonomy, advanced manufacturing and robotics are now treated as long‑horizon infrastructure bets, even at the cost of near‑term free‑cash‑flow pressure, and other big players are unlikely to reduce their own AI spending while this arms race is underway.
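The scale of the jump is easier to judge with the ratios worked out. The short sketch below uses only the figures quoted above; the variable names are illustrative, and because the guidance is stated as "exceed USD 25 billion", the results are a floor rather than a forecast.

```python
# Figures as quoted in the paragraph above (USD billions, RM billions).
capex_guidance_usd = 25.0   # stated floor for this year's capital expenditure
capex_prior_usd = 8.5       # previously reported spend
capex_q1_usd = 2.5          # already deployed in the first quarter
capex_guidance_rm = 115.0   # the article's ringgit conversion of the guidance

# How many times larger the guidance is than the earlier spend.
growth_multiple = capex_guidance_usd / capex_prior_usd

# Share of the guided minimum already spent in the first quarter.
q1_share = capex_q1_usd / capex_guidance_usd

# Average spend per remaining quarter needed just to reach the floor.
remaining_per_quarter = (capex_guidance_usd - capex_q1_usd) / 3

# Exchange rate implied by the article's own conversion.
implied_rm_per_usd = capex_guidance_rm / capex_guidance_usd

print(f"Guidance vs. earlier spend: {growth_multiple:.2f}x")
print(f"Q1 share of guidance: {q1_share:.0%}")
print(f"Needed per remaining quarter: USD {remaining_per_quarter:.1f} billion")
print(f"Implied exchange rate: RM{implied_rm_per_usd:.2f} per USD")
```

On these numbers the first quarter accounts for only a tenth of the guided minimum, so the remaining three quarters would each need to average about three times the first‑quarter pace to reach it.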

AI retail technology: from shelf‑edge vision to cloud‑wide product intelligence

Retail is experiencing a quieter but equally profound AI transition, focused on inventory accuracy and product discovery rather than flashy chatbots. Pensa Systems has won the RetailTech Breakthrough "Shelf Monitoring Solution of the Year" award for four consecutive years as its Vision AI expands from shelf monitoring to full‑store visibility, across back‑room inventory, case packs, top‑stocks, promotions, pricing and signage. The company reports automating up to 70% of manual shelf activities and driving up to a 20% revenue lift through better space planning and key item availability. Salsify’s Intelligence Suite, named "RetailTech AI Innovation of the Year," embeds AI directly into product experience workflows, cutting content validation per item from 20–30 minutes to around five. Brands can now translate, rewrite and optimize thousands of SKUs with human‑in‑the‑loop control and governance. Complementing these tools, StorageChain’s cross‑cloud AI intelligence layer unifies data across Microsoft, Amazon, Dropbox and more, eliminating silos so merchandising and operations teams can query real‑time information without migrating a byte.

Guardrails for AI autonomous agents and what comes next

As organisations deploy AI autonomous agents into real workflows, cost and safety controls are becoming as critical as model accuracy. Portal26’s new Agentic Token Control module is one example: it lets enterprises monitor, cap and dynamically throttle the tokens their agents consume. That addresses a new operational risk where agents loop on tasks, inflate token usage, and generate unpredictable bills or unstable processes. Finance and IT teams can now set policy‑based thresholds, pause or terminate agents in real time, and see usage patterns across workflows and departments. Set alongside the aerospace, retail and cross‑cloud examples above, this points to a clear pattern. The next phase of AI will be defined less by standalone chatbots and more by embedded, task‑specific systems: thruster models sitting inside spacecraft design tools, controllers inside morphing wings, vision stacks behind store shelves, and governance layers behind agentic platforms. The most powerful AI may increasingly be the kind users never see but rely on every day.
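The monitor, cap and throttle pattern described above can be sketched in a few lines of Python. This is a minimal illustration of policy‑based thresholds in general, not Portal26’s API: `TokenBudget`, `Action`, `run_agent` and the limits used here are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    THROTTLE = "throttle"
    TERMINATE = "terminate"


@dataclass
class TokenBudget:
    """Hypothetical per-agent policy: a soft limit throttles, a hard cap terminates."""
    soft_limit: int
    hard_cap: int
    used: int = 0

    def record(self, tokens: int) -> Action:
        """Add one step's token usage and return the policy decision."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        self.used += tokens
        if self.used >= self.hard_cap:
            return Action.TERMINATE
        if self.used >= self.soft_limit:
            return Action.THROTTLE
        return Action.ALLOW


def run_agent(step_costs, budget):
    """Replay per-step token costs and stop at the first TERMINATE decision."""
    decisions = []
    for cost in step_costs:
        action = budget.record(cost)
        decisions.append(action)
        if action is Action.TERMINATE:
            break  # a looping agent is cut off here instead of running up the bill
    return decisions


# An agent that keeps spending 400 tokens per step, as a looping agent might.
budget = TokenBudget(soft_limit=1000, hard_cap=1500)
decisions = run_agent([400, 400, 400, 400, 400], budget)
print([d.value for d in decisions], budget.used)
```

In this sketch the agent is throttled on its third step and terminated on its fourth, so the fifth step never runs. A production system would presumably also aggregate usage across agents, workflows and departments, which the example leaves out.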