The Perception Trap: Why “Faster” Isn’t Always Better

Teams adopting AI coding tools often report faster cycles, but few can prove those gains are real, sustained, or visible in business outcomes. Industry figures vary widely: controlled trials show task-level speed-ups, forecasts promise future productivity gains, and surveys find that only a minority of teams see large benefits. These numbers are frequently treated as interchangeable, even though they measure different things in different contexts and over different time horizons. This is how engineering leaders fall into the perception trap: assuming that happier developers and quicker tasks automatically translate into durable productivity. Demonstrating real ROI from AI coding tools takes more than anecdotes about “feeling faster.” It requires connecting developer velocity metrics to codebase changes, delivery performance, and ultimately business value. Without a structured approach, AI gets credited for improvements that might come from unrelated workflow changes, or blamed for issues that stem from deeper organizational bottlenecks.

Start with Foundations: AI as an Amplifier, Not a Fix

The latest DORA research frames AI as an amplifier of whatever system it enters. Strong internal platforms, clear workflows, and aligned teams see their strengths magnified. Struggling organizations, by contrast, see their existing chaos intensified: more code, more handoffs, more instability. This means AI implementation success depends less on tool selection and more on foundational engineering practices. Before declaring victory on AI ROI, leaders should assess basics like version control hygiene, automated testing, deployment pipelines, and the accessibility of internal data to AI systems. DORA’s value model explicitly routes AI benefits through these capabilities before they show up in delivery metrics, developer experience, or user outcomes. Put simply: if you can’t ship reliably today, AI will not fix that; it will shine a spotlight on your bottlenecks. Measuring AI impact therefore begins with measuring how robust your engineering foundations are.

Using DORA Metrics to Separate Signal from Noise

The DORA metrics framework gives engineering leaders a common language for measuring productivity: deployment frequency, lead time for changes, change failure rate, and time to restore service. In the AI context, these metrics help distinguish perceived productivity from actual outcomes. For example, AI-assisted coding might increase deployment frequency and shorten lead times, indicating higher throughput. At the same time, research shows it can raise delivery instability, with more frequent failures or outages. Treat this as a trade-off to be measured, not a surprise. The goal is to track how AI affects each DORA metric over time, rather than relying on raw output like lines of code or commit counts. Combined with non-financial indicators such as developer experience and user satisfaction, DORA metrics map the path from AI-driven workflow changes to real business value, and highlight where governance, testing, or automation must catch up.
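As a rough illustration of tracking these four metrics, the sketch below computes them from a list of deployment records. The `Deployment` record shape, its field names, and the choice of medians are assumptions made for this example, not a DORA-defined schema; adapt them to whatever your CI/CD and incident tooling actually exports.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape (an assumption for this sketch).
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if known


def hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def dora_metrics(deployments: list[Deployment], window_days: int) -> dict[str, Optional[float]]:
    """Summarize the four DORA metrics over one observation window."""
    if not deployments or window_days <= 0:
        raise ValueError("need at least one deployment and a positive window")

    lead_times = [hours(d.deployed_at - d.committed_at) for d in deployments]
    failures = [d for d in deployments if d.failed]
    restore_times = [
        hours(d.restored_at - d.deployed_at)
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deployments_per_day": len(deployments) / window_days,
        "median_lead_time_hours": median(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        # None when no failed deployment has a recorded restore time.
        "median_time_to_restore_hours": median(restore_times) if restore_times else None,
    }


# Illustrative data only: four deployments in a 7-day window, one of which failed.
t0 = datetime(2024, 1, 1)
sample = [
    Deployment(t0, t0 + timedelta(hours=10)),
    Deployment(t0 + timedelta(days=1), t0 + timedelta(days=1, hours=20)),
    Deployment(
        t0 + timedelta(days=2),
        t0 + timedelta(days=2, hours=30),
        failed=True,
        restored_at=t0 + timedelta(days=2, hours=32),
    ),
    Deployment(t0 + timedelta(days=4), t0 + timedelta(days=4, hours=40)),
]
summary = dora_metrics(sample, window_days=7)
```

Running the same function over a pre-adoption window and several post-adoption windows gives a like-for-like comparison, which is more informative than commit counts or lines of code.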

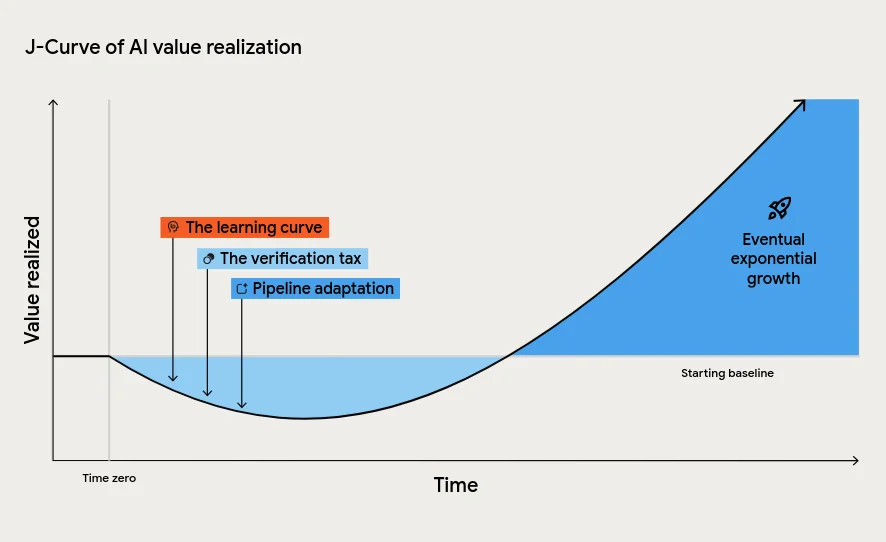

Mind the J-Curve: Planning for the Productivity Dip

DORA’s updated ROI model introduces the J-Curve of AI value realization: a predictable dip in productivity before long-term gains appear. Three forces drive this dip. The first is the learning curve, as engineers adapt their habits, prompts, and workflows. The second is the verification tax: AI-generated code must still be reviewed, tested, and integrated, which initially slows teams down. The third is downstream capacity: testing, approvals, and release management often can’t handle the higher volume of changes without retooling. Leaders who misinterpret this tuition cost as failure may pull funding at the very moment when structural improvements are needed. Instead, plan for the J-Curve by investing in continuous integration, automated tests, and smaller batch sizes. Track DORA metrics through this phase so you can see whether dips are temporary adaptation costs or signs of deeper instability that require rethinking how AI is woven into your delivery pipeline.
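One simple way to tell a temporary dip from a persistent one is to compare post-adoption measurements against the pre-AI baseline. The sketch below is an illustrative heuristic, not part of DORA's model: the function name, the three labels, and the early-half versus recent-half split are all assumptions chosen for brevity.

```python
from statistics import mean


def classify_j_curve(
    baseline: float,
    post_adoption: list[float],
    higher_is_better: bool = True,
) -> str:
    """Label a metric's post-adoption trend relative to its pre-AI baseline.

    Hypothetical heuristic: split the post-adoption series into an early half
    and a recent half, then compare their averages with the baseline.
    """
    if len(post_adoption) < 4:
        raise ValueError("need at least four post-adoption data points")

    # Flip the sign for metrics where lower is better (e.g. lead time),
    # so that "larger" always means "better" in the comparisons below.
    sign = 1 if higher_is_better else -1
    midpoint = len(post_adoption) // 2
    early = sign * mean(post_adoption[:midpoint])
    recent = sign * mean(post_adoption[midpoint:])
    base = sign * baseline

    if recent >= base:
        return "at_or_above_baseline"
    if recent > early:
        return "recovering"
    return "not_recovering"


# Illustrative numbers only: weekly deployments against a baseline of 10 per week.
dip_then_recovery = classify_j_curve(10, [7, 6, 7, 8, 9, 9])
steady_decline = classify_j_curve(10, [9, 8, 7, 7, 6, 6])
# Lead time in hours (lower is better) against a 24-hour baseline.
lead_time_trend = classify_j_curve(24, [30, 34, 32, 28, 26, 25], higher_is_better=False)
```

A "recovering" label is consistent with adaptation costs, while "not_recovering" over a long enough period suggests looking at downstream bottlenecks rather than waiting it out. How long is long enough is a judgment call this sketch does not settle.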

From Metrics to Money: Calculating AI Coding Tools ROI

To turn developer velocity metrics into a credible ROI story for AI coding tools, you need a consistent value model. DORA’s approach links improvements in engineering capabilities and delivery metrics to non-financial outcomes (like better developer and user experience) and finally to financial impact. Value is then quantified using the classic ROI formula: value minus investment, divided by investment. In this model, AI’s costs are no longer dominated by infrastructure; falling inference costs shift the burden to governance, verification, and upskilling. Tools like Engineering Throughput Value (ETV) extend this by scoring each commit against a pre-AI baseline, grounding claims in what actually changed in the codebase. Combined, these methods let finance and engineering speak the same language: instead of vague claims about “x% faster coding,” teams can show how AI shifted specific metrics, cleared concrete bottlenecks, and contributed measurable business value over time.
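The ROI formula above is simple enough to write down directly. In the sketch below, the cost categories follow the ones named in the text (governance, verification, upskilling) plus a licensing line, and every dollar figure is a made-up placeholder for illustration, not a benchmark.

```python
def roi(value: float, investment: float) -> float:
    """Classic ROI: (value - investment) / investment."""
    if investment <= 0:
        raise ValueError("investment must be positive")
    return (value - investment) / investment


# Placeholder annual costs (assumed figures, for illustration only).
costs = {
    "licenses": 40_000,
    "governance": 20_000,
    "verification": 35_000,  # extra review and testing of AI-generated code
    "upskilling": 25_000,
}
investment = sum(costs.values())  # 120_000

# Placeholder estimate of value attributed to AI, derived from delivery metrics.
estimated_value = 180_000

result = roi(estimated_value, investment)  # 0.5, i.e. a 50% return
```

Keeping the investment as an itemized breakdown rather than a single number makes it easier to see when verification or upskilling, rather than tooling, is driving the cost side.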