What Agentic AI Workflows Actually Do for Builders

Agentic AI workflows go beyond chat-style “prompt and wait” tools. Instead of giving you a single code snippet and stopping, an agent decomposes your request into a sequence of steps, executes them inside your real toolchain, checks the results, and iterates until it hits a defined goal. For developers and makers, that means less time on boilerplate and glue work, and more time on design and validation. The pattern is consistent across new tools: agents read requirements, operate directly inside engineering or editing environments, then self-correct. They are particularly effective for repetitive, structured work such as configuring systems, wiring components together, or generating first-draft assets. However, they are not autonomous coworkers. Human review remains essential to confirm safety, performance, and product fit. The practical mindset shift is to treat agents as junior assistants who are very fast, somewhat naïve, and best pointed at clearly bounded tasks rather than open-ended product decisions.
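The decompose, execute, check, and iterate loop described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular product works: `run_agent`, `StepResult`, and the `execute` callback are hypothetical names, and a real agent would call a model and real tools where the callback sits.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class StepResult:
    step: str
    ok: bool
    detail: str = ""


def run_agent(
    steps: List[str],
    execute: Callable[[str, int], StepResult],
    max_attempts: int = 3,
) -> List[StepResult]:
    """Run each planned step, retrying a failed step up to max_attempts times."""
    history: List[StepResult] = []
    for step in steps:
        for attempt in range(1, max_attempts + 1):
            result = execute(step, attempt)  # hypothetical: do the work, then check it
            history.append(result)
            if result.ok:
                break  # step met its check, move on to the next one
        else:
            # Every attempt failed: stop here and hand back to a human reviewer.
            break
    return history


def fake_execute(step: str, attempt: int) -> StepResult:
    """Stand-in executor: 'wire components' fails once, then succeeds."""
    ok = not (step == "wire components" and attempt == 1)
    return StepResult(step, ok, f"attempt {attempt}")


if __name__ == "__main__":
    plan = ["generate scaffold", "wire components", "run tests"]
    for r in run_agent(plan, fake_execute):
        print(r.step, "ok" if r.ok else "failed", r.detail)
```

The retry cap is the important design choice: a bounded loop that stops and escalates keeps the agent in the "fast junior assistant" role rather than letting it iterate indefinitely.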

Meta’s WebXR AI Tools: From Prompts to Playable Scenes

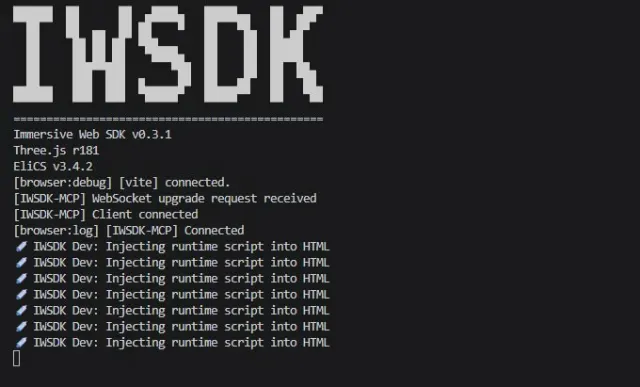

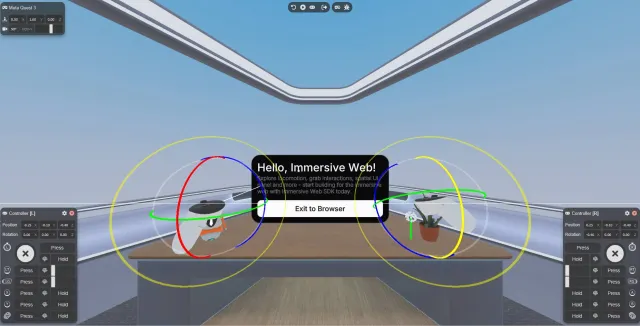

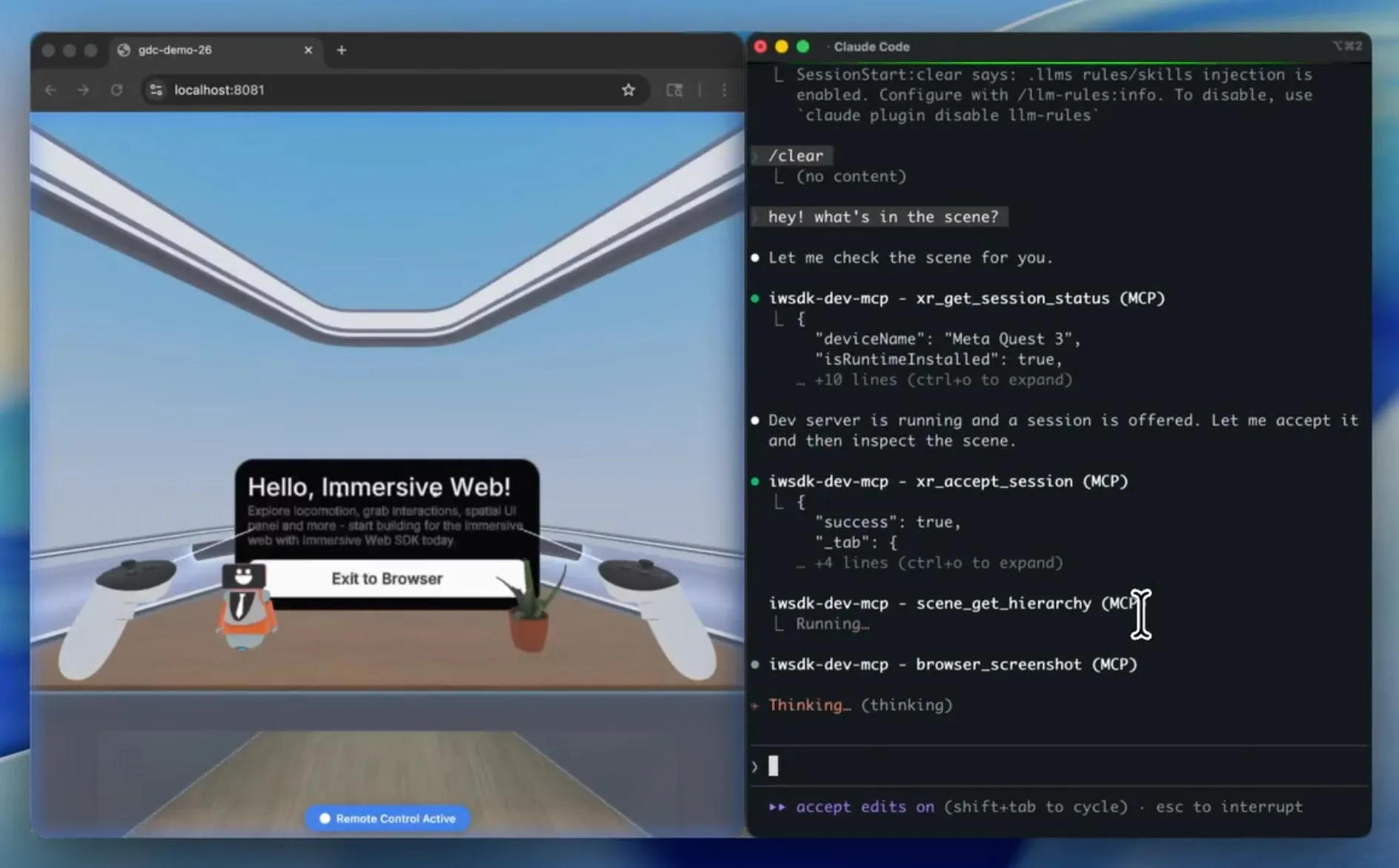

Meta’s Immersive Web SDK (IWSDK) adds an agentic layer on top of a WebXR stack built on Three.js, giving creators high-level building blocks for grabbing, locomotion, and spatial audio instead of hand-rolling everything in low-level JavaScript. The new agentic workflow bakes AI instructions directly into project templates, including detailed guidelines in files like copilot-instructions.md, so AI coding tools understand IWSDK’s entity–component architecture and common pitfalls. This is crucial because IWSDK is niche, and general-purpose AI coding tools usually lack training on it. Meta also exposes MCP tools that let agents interact with a running WebXR build: taking screenshots, moving controllers, pressing buttons, and using Playwright APIs to run tests. In practice, the agent can implement a feature, launch the experience in a dedicated browser instance, exercise it through these tools, and refine the code when behavior diverges from the goal. Hands-on impressions suggest this is powerful for rapid prototyping but still clunky in places, especially when local models are slow or unstable.
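To make the "exercise it through tools, then compare against the goal" step concrete, here is a rough Python sketch. It does not use the real IWSDK MCP tools or Playwright: `XRTools`, `FakeScene`, and `check_grab` are hypothetical names, and the "screenshot" is simplified to a dictionary of observed object states instead of an image.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Protocol


class XRTools(Protocol):
    """Hypothetical tool surface; the real tool names and payloads may differ."""

    def press_button(self, name: str) -> None: ...

    def screenshot(self) -> Dict[str, str]: ...


@dataclass
class FakeScene:
    """In-memory stand-in for a running WebXR build."""

    grabbed: bool = False
    log: List[str] = field(default_factory=list)

    def press_button(self, name: str) -> None:
        self.log.append(f"press:{name}")
        if name == "grip":
            self.grabbed = True

    def screenshot(self) -> Dict[str, str]:
        # Simplification: return observed state rather than pixels.
        self.log.append("screenshot")
        return {"cube": "held" if self.grabbed else "resting"}


def check_grab(tools: XRTools) -> bool:
    """Observe, act, observe again, and compare against the expected behavior."""
    before = tools.screenshot()
    tools.press_button("grip")
    after = tools.screenshot()
    return before["cube"] == "resting" and after["cube"] == "held"


if __name__ == "__main__":
    print("grab works:", check_grab(FakeScene()))
```

When a check like this returns `False`, that is the signal an agent would use to go back and revise the code it just wrote.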

Automation Engineering AI: Siemens and Rockwell’s Orchestrated Workflows

In industrial automation, Siemens and Rockwell are applying the same agentic pattern to engineering workflows. Siemens’ Eigen Engineering Agent sits inside the TIA Portal environment, where it can interpret project requirements, generate PLC code, configure HMIs and devices, and validate results against predefined performance targets. Because it has access to project structures, component relationships, and control hierarchies, it can align outputs with existing standards and even work with legacy or poorly documented systems. Pilot projects suggest tasks can be executed two to five times faster than manual workflows while maintaining accuracy. Rockwell’s newly unveiled AI-orchestrated factory design workflow aims to simplify factory engineering end to end, from layout to deployment. While details are still emerging, the goal is similar: cut engineering time and streamline deployment by letting AI orchestrate repetitive design and configuration steps. In both cases, human engineers stay in the loop to approve final configurations, but much of the busywork of drafting, wiring, and iterating on configurations is delegated to the agents.
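Validating results against predefined performance targets can be pictured as a simple gate between the agent's draft and the engineer's approval. The sketch below is an assumption-laden illustration, not either vendor's implementation: the metric names, the `("max" | "min", limit)` target format, and the `validate` function are all made up for the example.

```python
from typing import Dict, List, Tuple

# Hypothetical target format: metric name -> ("max" or "min", limit)
Targets = Dict[str, Tuple[str, float]]


def validate(measured: Dict[str, float], targets: Targets) -> List[str]:
    """Return a human-readable failure for every target that is not met."""
    failures: List[str] = []
    for name, (kind, limit) in targets.items():
        value = measured.get(name)
        if value is None:
            failures.append(f"{name}: no measurement")
        elif kind == "max" and value > limit:
            failures.append(f"{name}: {value} exceeds max {limit}")
        elif kind == "min" and value < limit:
            failures.append(f"{name}: {value} below min {limit}")
    return failures


if __name__ == "__main__":
    # Illustrative numbers only, not taken from any real project.
    targets: Targets = {
        "cycle_time_ms": ("max", 10.0),
        "throughput_per_min": ("min", 60.0),
    }
    measured = {"cycle_time_ms": 12.5, "throughput_per_min": 75.0}
    for problem in validate(measured, targets):
        print("needs rework:", problem)
```

An empty failure list does not replace sign-off; it only means the draft is worth an engineer's review time.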

Low-Code AI Builder: Joget’s Governed Conversational Composition

Joget’s AI Composer shows how agentic AI workflows can serve non-developers in enterprise settings. Instead of generating raw source code that later needs debugging, security review, and compliance documentation, AI Composer uses natural language to assemble governed application components within the Joget DX low-code platform. Forms, workflows, data views, and interfaces are created as structured metadata and are immediately visible in Joget’s visual builders. Because the AI operates inside an existing governance framework, every change inherits audit trails and compliance controls. This matters to teams facing heavy regulatory and security demands, where unguided AI-assisted coding can shift effort rather than reduce it. Business users can propose changes conversationally, while developers refine and harden them using the same visual tools. The result is a low-code AI builder that lowers friction for app composition without bypassing enterprise standards, and an important template for how agentic tools can serve developers and non-developers safely in the same production environment.
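The idea that every change is stored as structured metadata and automatically lands in an audit trail can be illustrated with a small Python sketch. This is not Joget's metadata format or API: `GovernedApp`, `apply_change`, and the field names are hypothetical and exist only to show the shape of the pattern.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, List


@dataclass
class GovernedApp:
    """Hypothetical app store: components are metadata, every change is logged."""

    components: Dict[str, Dict[str, Any]] = field(default_factory=dict)
    audit_log: List[Dict[str, Any]] = field(default_factory=list)

    def apply_change(
        self, author: str, component_id: str, definition: Dict[str, Any]
    ) -> None:
        action = "update" if component_id in self.components else "create"
        self.components[component_id] = definition
        # The audit entry is written by the platform, not by the caller,
        # so AI-proposed and human edits are recorded the same way.
        self.audit_log.append(
            {
                "when": datetime.now(timezone.utc).isoformat(),
                "author": author,
                "action": action,
                "component": component_id,
            }
        )


if __name__ == "__main__":
    app = GovernedApp()
    app.apply_change("ai-composer", "leave_form", {"type": "form", "fields": ["name", "dates"]})
    app.apply_change("developer", "leave_form", {"type": "form", "fields": ["name", "dates", "approver"]})
    for entry in app.audit_log:
        print(entry["author"], entry["action"], entry["component"])
```

Because the only way to change a component is through `apply_change`, an AI assistant and a human developer leave the same kind of trail.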

Designing Your Own Agentic AI Workflows—And Staying in Control

To apply agentic AI workflows in your own projects, start by breaking work into AI-friendly subtasks: generate scaffolding and boilerplate, wire standard components, propose configurations, then run scripted tests. Reserve human review for architecture decisions, safety-critical logic, performance tuning, and UX. Track time saved per task and error rates before and after introducing agents; this will reveal whether the workflow is actually worth keeping. Avoid over-reliance on autogenerated code or configs. Even when an agent has access to rich project context, it can still misinterpret requirements or introduce brittle abstractions. Vendor lock-in is another risk: WebXR AI tools tied to specific SDKs, or automation agents embedded in proprietary engineering platforms, can be hard to swap later. Treat them as accelerators, not crutches. Maintain a working understanding of your PLC logic, WebXR scene graph, or app metadata so you can debug and evolve systems even when the AI-generated first draft is long forgotten.
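Tracking time saved and error rates before and after introducing an agent needs nothing more than a small script. The following is one possible sketch in Python; the `TaskRecord` fields, the function names, and all sample numbers are illustrative assumptions, not measurements from any real team.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class TaskRecord:
    minutes: float   # time spent on the task, including review and fixes
    had_error: bool  # whether a defect was found after the task was "done"


def summarize(records: List[TaskRecord]) -> Dict[str, float]:
    return {
        "avg_minutes": mean(r.minutes for r in records),
        "error_rate": sum(r.had_error for r in records) / len(records),
    }


def compare(before: List[TaskRecord], after: List[TaskRecord]) -> Dict[str, float]:
    b, a = summarize(before), summarize(after)
    return {
        "minutes_saved_per_task": b["avg_minutes"] - a["avg_minutes"],
        "speedup": b["avg_minutes"] / a["avg_minutes"],
        "error_rate_change": a["error_rate"] - b["error_rate"],
    }


if __name__ == "__main__":
    # Made-up sample data for the same task type, before and after the agent.
    before = [TaskRecord(60, False), TaskRecord(40, True),
              TaskRecord(50, False), TaskRecord(50, False)]
    after = [TaskRecord(20, False), TaskRecord(30, True),
             TaskRecord(25, True), TaskRecord(25, False)]
    print(compare(before, after))
```

In this made-up sample the agent halves the time per task but doubles the error rate, which is exactly the kind of trade-off the two numbers are meant to surface together. Counting review and rework time in `minutes` keeps the speedup honest.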