The Hidden Cost of Clinging to Web Scraping

For years, web scraping has been the default way to inject live information into applications and AI systems. But as sites evolve, the strategy is showing its age. HTML structures change without warning, layouts are redesigned, and new anti-bot defenses appear overnight. Each tweak can silently break a scraper, leaving teams scrambling to debug parsers and rotate proxies just to restore basic functionality. What starts as a quick script often turns into a fragile production dependency. As usage grows, so do headaches: escalating IP blocks, CAPTCHAs, and inconsistent output formats that undermine downstream pipelines. This “scraping tax” drains engineering time that should be spent improving products, not firefighting infrastructure. In a landscape where AI tools depend on reliable real-time data access, brittle scrapers are less a clever hack and more a liability waiting to surface at scale.
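To make the "silent break" concrete, here is a minimal sketch using only Python's standard library. The markup, the `result-title` class name, and the `scrape_titles` helper are all hypothetical, invented for illustration; real result pages are far more complex, but the failure mode is the same: a renamed class produces an empty list rather than an error.

```python
from html.parser import HTMLParser


class TitleScraper(HTMLParser):
    """Collects text inside <h3> tags carrying a given CSS class (hypothetical layout)."""

    def __init__(self, css_class):
        super().__init__()
        self.css_class = css_class
        self._inside = False
        self.titles = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs; value may be None
        classes = (dict(attrs).get("class") or "").split()
        if tag == "h3" and self.css_class in classes:
            self._inside = True
            self.titles.append("")

    def handle_endtag(self, tag):
        if tag == "h3":
            self._inside = False

    def handle_data(self, data):
        if self._inside:
            self.titles[-1] += data


def scrape_titles(html, css_class="result-title"):
    """Return the text of every matching heading; returns [] if nothing matches."""
    parser = TitleScraper(css_class)
    parser.feed(html)
    parser.close()
    return [title.strip() for title in parser.titles]


# Two versions of the same (made-up) page: only the class name differs.
OLD_LAYOUT = '<div><h3 class="result-title">First hit</h3><h3 class="result-title">Second hit</h3></div>'
NEW_LAYOUT = '<div><h3 class="r-title-v2">First hit</h3><h3 class="r-title-v2">Second hit</h3></div>'

print(scrape_titles(OLD_LAYOUT))  # ['First hit', 'Second hit']
print(scrape_titles(NEW_LAYOUT))  # [] -- no exception, just missing data
```

Nothing in the second call signals a failure, which is why this kind of breakage tends to be noticed downstream, when a pipeline starts receiving empty results.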

APIs Like SerpApi: Clean, Structured Search Data on Demand

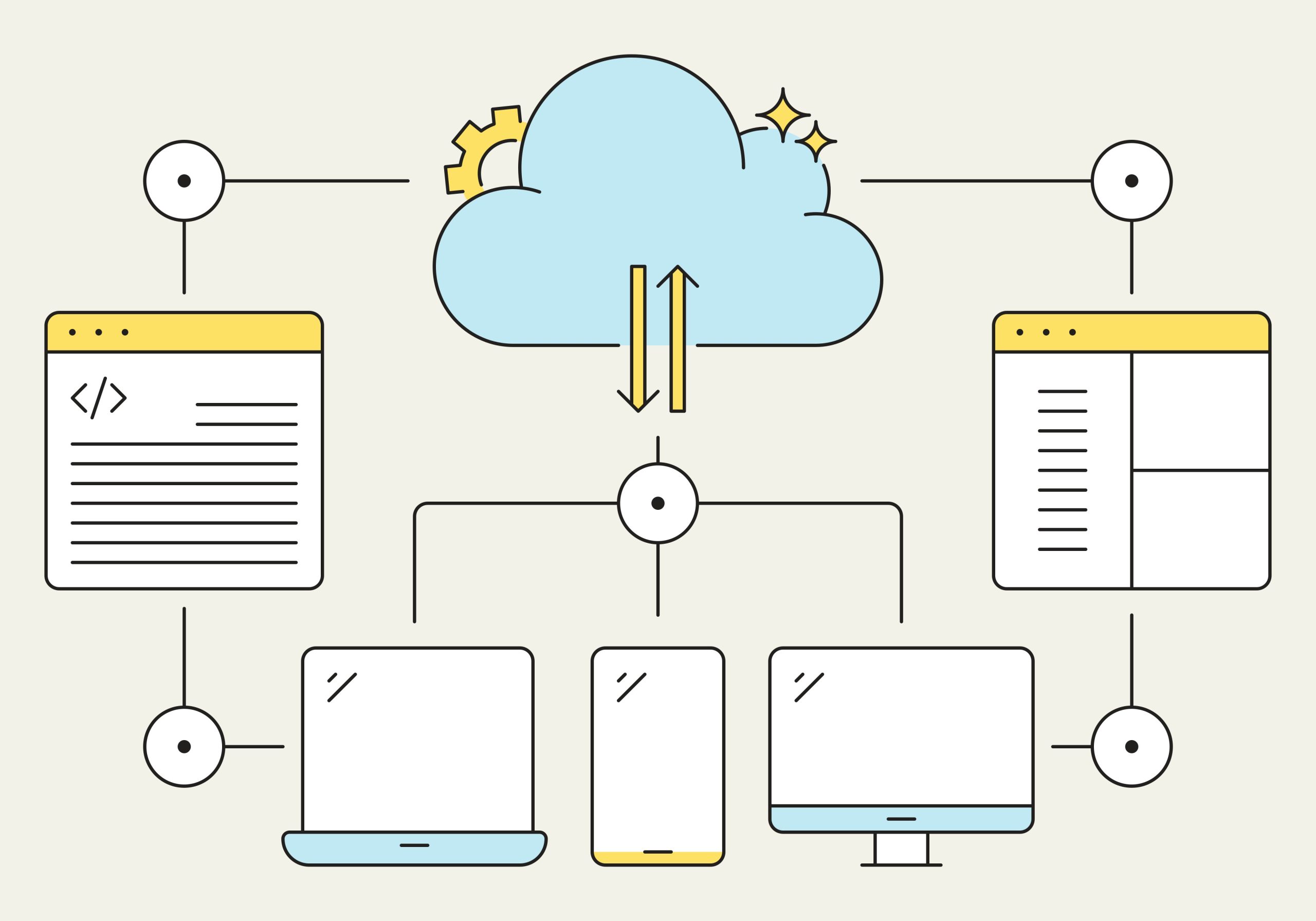

Search-focused APIs are emerging as a leading web scraping alternative for teams that need dependable data feeds. SerpApi positions itself as a search engine API layered over services like Google, Bing, Amazon, and dozens more, returning results as structured JSON instead of raw HTML. The platform absorbs the messy work: it manages proxies, solves CAPTCHAs, and continuously tracks layout changes so developers don’t have to rebuild scrapers every time a search page shifts. For AI and app developers, this means replacing brittle parsing logic with a single, stable endpoint that delivers consistent, real-time data access. Whether it’s web results, maps data, shopping listings, or AI overviews, the same pattern applies: call an endpoint, receive normalized fields suitable for immediate use in agents, pipelines, or dashboards. The complexity stays behind the API boundary, freeing teams to focus on features rather than infrastructure triage.
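The "call an endpoint, receive normalized fields" pattern might look roughly like the sketch below. Treat the details as assumptions to check against the provider's current documentation: the endpoint URL, the `engine`, `q`, and `api_key` parameters, and the `organic_results` field names reflect our understanding of SerpApi's public interface, while the helper names and the sample payload are made up for illustration.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed endpoint; confirm against the provider's documentation.
SEARCH_ENDPOINT = "https://serpapi.com/search.json"


def build_search_url(query, api_key, engine="google"):
    """Build the request URL (parameter names are assumptions, see lead-in)."""
    params = {"engine": engine, "q": query, "api_key": api_key}
    return f"{SEARCH_ENDPOINT}?{urlencode(params)}"


def fetch_results(query, api_key, engine="google", timeout=10):
    """Perform the HTTP call and decode the JSON body (needs network and a valid key)."""
    with urlopen(build_search_url(query, api_key, engine), timeout=timeout) as resp:
        return json.load(resp)


def extract_organic(payload):
    """Reduce a response to the handful of fields a pipeline typically needs."""
    return [
        {
            "position": item.get("position"),
            "title": item.get("title"),
            "link": item.get("link"),
            "snippet": item.get("snippet"),
        }
        for item in payload.get("organic_results", [])
    ]


# Hypothetical payload in the assumed shape, so the parsing step runs offline.
SAMPLE_PAYLOAD = {
    "organic_results": [
        {"position": 1, "title": "Example A", "link": "https://example.com/a", "snippet": "First."},
        {"position": 2, "title": "Example B", "link": "https://example.com/b"},
    ]
}

for row in extract_organic(SAMPLE_PAYLOAD):
    print(row["position"], row["title"], row["link"])
```

Using `.get()` for every field keeps the extraction tolerant of results that omit an optional key such as `snippet`, so the consuming code sees `None` instead of raising.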

AI Teams Are Burning Months on Scrapers They Don’t Need

As AI products move from prototypes to production, the limitations of scraping become painfully clear. Early experiments often rely on quick scripts tied to specific result pages. Once usage scales, those scripts evolve into sprawling scraper frameworks that demand constant care. Engineers juggle proxy networks, chase down intermittent failures, and patch parsers whenever a search engine tweaks its interface. The opportunity cost is enormous: instead of refining models, improving latency, or building better user experiences, teams are stuck just keeping data flows alive. Platforms like SerpApi flip this dynamic. They offer a single integration that abstracts away IP blocking, CAPTCHAs, and layout volatility, providing a predictable contract for data retrieval. For AI agents that need fresh context and live search, a dedicated search engine API is often the simplest way to ensure continuity, accuracy, and reproducibility without burning months on maintenance work.

Reducing Legal and Technical Risk with Purpose-Built Data APIs

Beyond operational fragility, scraping raises legal and compliance concerns that many teams underestimate. Terms of service, robots.txt rules, and unclear data provenance can complicate how scraped content is stored and used, especially in regulated industries. Purpose-built APIs help mitigate this risk by offering defined, contractual access paths and consistent output. Instead of reverse-engineering public interfaces, teams rely on documented endpoints that are intentionally designed for programmatic consumption. This stability is crucial for production systems that must be auditable and defensible over time. When an API provider monitors upstream changes and maintains compatibility, downstream teams gain not just technical resilience but also a clearer compliance story. In effect, specialized APIs turn a grey-area scraping practice into a managed service with known behavior, making them an increasingly attractive web scraping alternative for organizations that prioritize governance as much as raw data.

Why Professional Investigators Need More Than Google and Scrapers

The shift from ad hoc scraping to professional data tools is especially evident in investigative work. Public search engines prioritize relevance for casual users, not completeness or auditability for fraud investigators, law enforcement, or corporate risk teams. A single name search can surface millions of loosely related results, with no clear boundary indicating when the search is truly complete. Personalization and ranking algorithms further distort what each user sees, making it harder to verify that critical records were not missed. Large portions of public records and licensing data never surface cleanly through ordinary search at all. Investigators instead rely on specialized platforms that aggregate trusted sources, maintain an auditable trail, and expose structured fields tuned to investigative workflows. In this context, both manual Googling and one-off scrapers fall short; professional data tools and APIs provide the precision, consistency, and coverage that high-stakes investigations demand.