From Developer Framework to AI-Powered WebXR Creation

Meta’s latest update to its open-source Immersive Web SDK (IWSDK) marks a shift from a developer-first tool to a broader WebXR development platform that embraces non-coders. Originally introduced to streamline core VR tasks such as physics, hand tracking, movement, grab interactions, and spatial UI, the SDK aimed to let creators focus on ideas instead of low-level engineering. Now Meta has layered in an “agentic workflow” that turns the SDK into a kind of AI-powered VR builder. Instead of manually wiring every interaction, creators can describe behaviors and scenes in natural language while AI coding assistants generate, test, and refine the underlying code. Because IWSDK targets WebXR, the resulting experiences run directly in a browser on both desktop and VR headsets and are reachable through a simple URL. This combination of AI assistance and web-based deployment pushes the SDK beyond a traditional framework and toward a no-code VR creation environment.

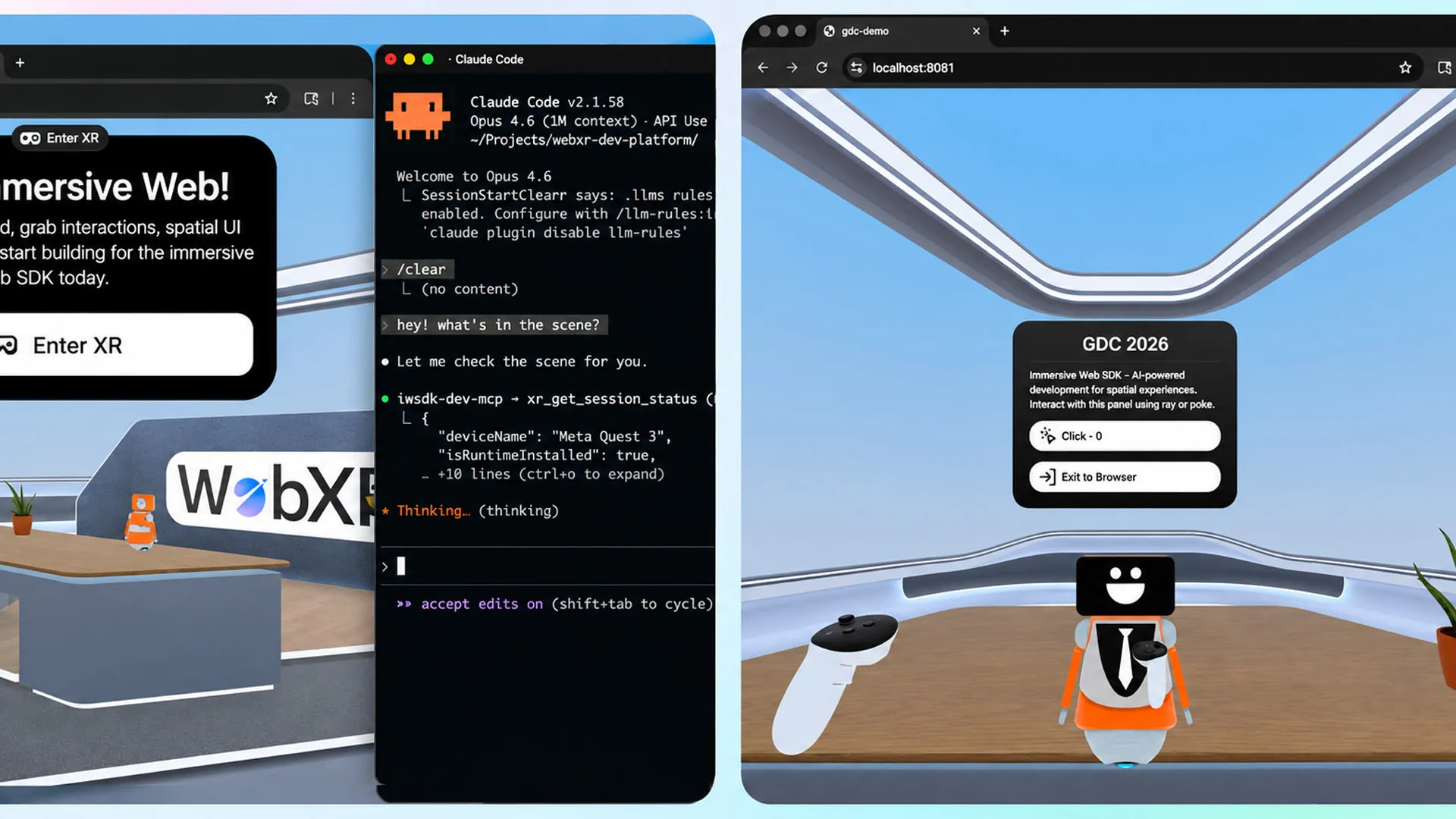

How Agentic AI Workflows Lower the Barrier for Non-Coders

The new agentic workflow in the Meta Immersive Web SDK is designed to do more than generate snippets of code. Meta describes it as a closed-loop system in which AI coding assistants such as Claude Code, Cursor, GitHub Copilot, and Codex automatically generate, test, and validate code until it behaves as intended. Non-programmers can therefore focus on describing scenes, interactions, and user journeys instead of wrestling with syntax or debugging. The AI effectively acts as an autonomous collaborator, iterating on the project within IWSDK’s structure. Because common VR mechanics (such as object grabbing, spatial interfaces, and hand-tracking input) are already encapsulated in the framework, the AI can rapidly assemble them into complete experiences. The result is a set of WebXR development tools that is approachable for designers, artists, educators, and domain experts who understand what they want to build but lack traditional engineering skills.
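The closed loop described above (generate, test, feed the result back, repeat) can be sketched in a few lines of TypeScript. This is only an illustration of the general pattern, not Meta's implementation: `Agent`, `Validator`, `closedLoop`, and the toy stand-ins are hypothetical names that do not come from IWSDK or from any AI assistant's API, and the "watering can" prompt is an invented example.

```typescript
// Hypothetical types for illustration; none of these names come from IWSDK.
type TestReport = { passed: boolean; feedback: string };

interface Agent {
  // Produces code from a natural-language prompt plus feedback from the last attempt.
  generate(prompt: string, feedback: string | null): string;
}

type Validator = (code: string) => TestReport;

interface LoopResult {
  code: string;
  attempts: number;
  passed: boolean;
}

// Generate code, validate it, and feed failures back until it passes
// or the attempt budget runs out.
function closedLoop(
  agent: Agent,
  validate: Validator,
  prompt: string,
  maxAttempts = 5
): LoopResult {
  let feedback: string | null = null;
  let code = "";
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    code = agent.generate(prompt, feedback);
    const report = validate(code);
    if (report.passed) {
      return { code, attempts: attempt, passed: true };
    }
    feedback = report.feedback; // the next attempt sees why this one failed
  }
  return { code, attempts: maxAttempts, passed: false };
}

// Toy stand-ins so the sketch runs on its own: the "agent" only adds a
// grab() call once it has received feedback, and the "validator" only
// checks that the call is present.
const toyAgent: Agent = {
  generate: (prompt, feedback) =>
    feedback === null ? `// TODO: ${prompt}` : `grab(); // ${prompt}`,
};

const toyValidate: Validator = (code) =>
  code.includes("grab()")
    ? { passed: true, feedback: "" }
    : { passed: false, feedback: "no grab interaction found" };

const result = closedLoop(toyAgent, toyValidate, "make the watering can grabbable");
```

In a real workflow the validator would be something like a test suite or an in-browser check rather than a string match, and the attempt budget keeps the loop from running indefinitely when the agent cannot converge.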

Rebuilding Project Flowerbed: A Proof of Concept for AI VR Production

To showcase what an AI-powered VR builder can achieve, Meta revisited its 2022 VR gardening experience, Project Flowerbed, originally composed of tens of thousands of lines of custom code. Using the Immersive Web SDK’s agentic workflow and existing art assets, the company reports that the entire application was recreated in just 15 hours. Meta stresses that this is not about fixing typos or auto-generating boilerplate; the AI produced a full, interactive VR experience for the web. This demonstration suggests that complex, content-rich WebXR projects can be iterated far more quickly when AI handles the repetitive and structural coding work. For teams with limited engineering resources, it hints at a future where rebuilding, localizing, or expanding VR experiences becomes a largely AI-assisted process, with human creators focusing on narrative, interaction design, and visual polish rather than implementation details.

Democratizing WebXR and Expanding the Immersive Creator Base

The combination of no-code VR creation workflows and browser-based deployment could significantly expand the pool of WebXR creators. Because experiences built with the Meta Immersive Web SDK can be tested instantly in a browser, there are no lengthy compile times or complex build pipelines. Distribution is similarly streamlined: a single URL can reach desktop users and headset owners alike, sidestepping app stores and downloads. Meta notes that more than one million monthly users already access WebXR content on Quest, signaling a ready audience for web-based immersive experiences. By lowering technical barriers and offering the SDK as open source under an MIT license, Meta positions WebXR as a more inclusive platform. Educators, brands, and independent creators can experiment with interactive VR content without investing in large engineering teams, potentially leading to a more diverse and experimental ecosystem of browser-delivered immersive experiences.
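How one URL can serve both desktop and headset visitors comes down to feature detection in the page itself. The sketch below uses the standard WebXR Device API call `navigator.xr.isSessionSupported("immersive-vr")`; the helper `chooseEntryMode`, the `EntryMode` labels, and the small `XRSystemLike` interface are assumptions made for illustration and are not part of IWSDK.

```typescript
// Minimal structural type covering the one WebXR Device API method used here.
interface XRSystemLike {
  isSessionSupported(mode: "immersive-vr" | "immersive-ar" | "inline"): Promise<boolean>;
}

// Hypothetical labels: "flat-3d" means render the scene in an ordinary page.
type EntryMode = "immersive-vr" | "flat-3d";

// chooseEntryMode is a hypothetical helper, not an IWSDK function.
// It takes a navigator-like object so it can be exercised outside a browser.
async function chooseEntryMode(nav: { xr?: XRSystemLike }): Promise<EntryMode> {
  if (!nav.xr) {
    return "flat-3d"; // browser has no WebXR support at all
  }
  try {
    const supported = await nav.xr.isSessionSupported("immersive-vr");
    return supported ? "immersive-vr" : "flat-3d";
  } catch {
    // The check can reject, for example when a permissions policy blocks it.
    return "flat-3d";
  }
}
```

In a browser, the page would pass the global `navigator` object (assuming DOM typings that include WebXR) and then either offer an "Enter VR" button or fall back to a regular on-screen 3D view.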