From Flat-Rate Comfort to Usage-Based Reality

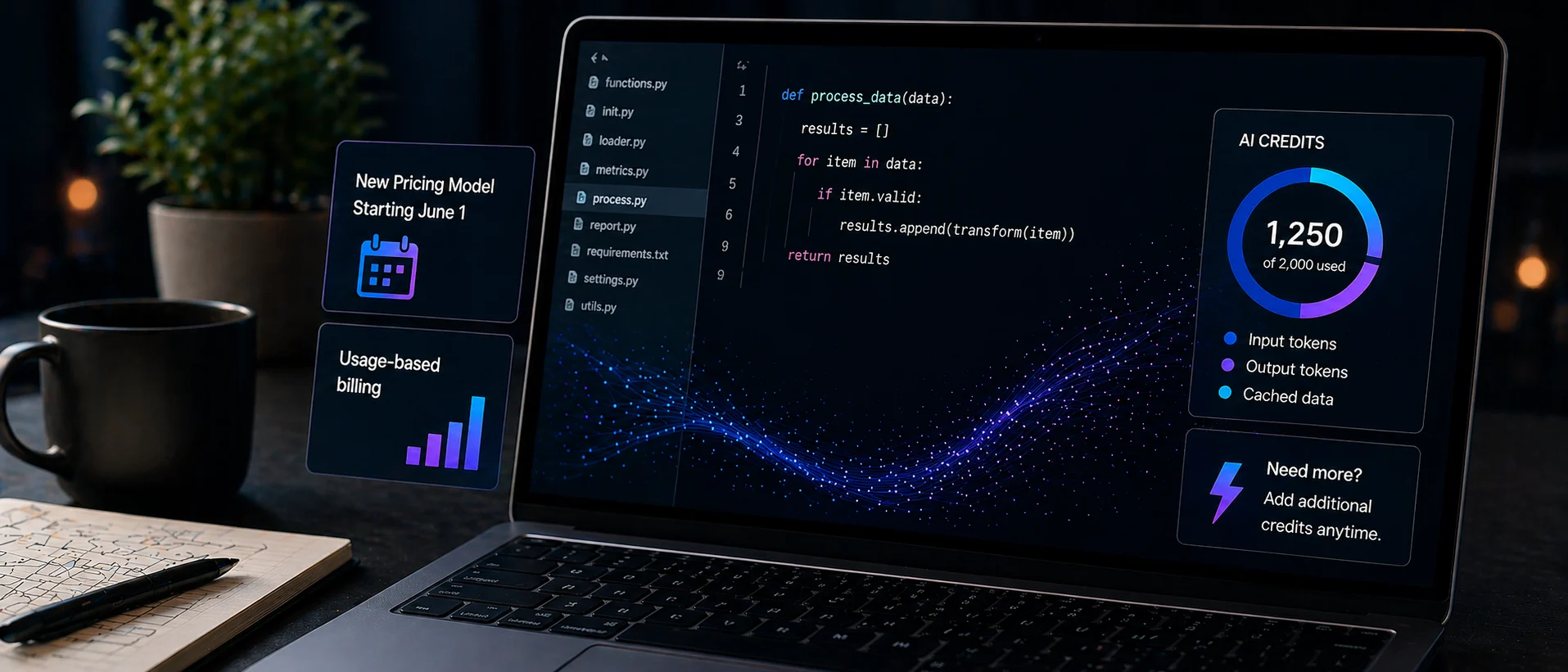

GitHub Copilot is moving from flat-rate plans to usage-based billing built around AI credits, a significant change in how AI coding tools are sold. Starting June 1, Copilot’s existing Pro, Pro+, Business, and Enterprise tiers will keep their current monthly subscription prices, but what each subscription includes will change. Instead of loosely defined premium request units, users will receive a monthly pool of AI credits tied directly to token usage, including input, output, and cached data processed by Copilot’s models. Once those credits are exhausted, additional capacity can be purchased on demand. The change is driven by rising inference and compute costs as Copilot evolves from simple inline suggestions into agentic workflows that can operate across entire repositories for extended periods. In effect, GitHub is aligning its commercial model with the cost of running modern generative AI workloads. [1][2]

What the New GitHub Copilot Pricing Means Day to Day

Under the new GitHub Copilot pricing structure, developers still pay a fixed monthly subscription, but their actual AI usage is now metered. Each individual, business, or enterprise plan receives a monthly allocation of AI credits proportional to its subscription tier, and these credits are consumed based on token usage. Crucially, GitHub says basic capabilities such as code completions and editing suggestions will not draw down credits, while more advanced, agentic activities such as Copilot code review and multi-hour autonomous sessions will. When credits run out, usage stops unless more capacity is purchased; this replaces the earlier fallback mechanisms that allowed reduced functionality beyond the limits. For existing monthly subscribers, the transition will be automatic; annual subscribers keep the old model until renewal. GitHub will also offer a billing preview tool so customers can simulate their future costs before the switch, helping teams understand and plan their AI consumption. [1][2]
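To make the metering rules concrete, here is a minimal Python sketch of how a credit pool of this kind could behave. It is an illustration only, not GitHub's implementation: the per-token rates, the feature names, and the `CreditMeter` class are all hypothetical, since the article gives no actual rates or API. It also assumes a request that would overdraw the pool is refused outright.

```python
from dataclasses import dataclass

# Hypothetical rates: credits charged per 1,000 tokens of each kind.
# These numbers are invented for illustration and are NOT GitHub's figures.
ASSUMED_RATES = {"input": 1.0, "output": 4.0, "cached": 0.25}

# Features the article says do not draw down credits (names are assumptions).
UNMETERED_FEATURES = {"completion", "edit_suggestion"}


class CreditsExhausted(Exception):
    """Raised when a metered request would exceed the remaining credits."""


@dataclass
class CreditMeter:
    balance: float  # credits remaining in the monthly pool

    def cost(self, feature, input_tokens=0, output_tokens=0, cached_tokens=0):
        """Return the credit cost of one request; unmetered features are free."""
        if feature in UNMETERED_FEATURES:
            return 0.0
        weighted = (
            input_tokens * ASSUMED_RATES["input"]
            + output_tokens * ASSUMED_RATES["output"]
            + cached_tokens * ASSUMED_RATES["cached"]
        )
        return weighted / 1000

    def charge(self, feature, **tokens):
        """Deduct the cost of a request, or refuse it if credits have run out."""
        needed = self.cost(feature, **tokens)
        if needed > self.balance:
            raise CreditsExhausted(
                f"{feature} needs {needed} credits, {self.balance} left"
            )
        self.balance -= needed
        return needed

    def top_up(self, credits):
        """Model an on-demand purchase of additional capacity."""
        self.balance += credits
```

With a pool of 10 credits, `CreditMeter(balance=10.0).charge("completion", input_tokens=5000)` costs nothing, while a `"code_review"` request with 2,000 input, 1,000 output, and 4,000 cached tokens costs 7 credits under these assumed rates. A second identical review raises `CreditsExhausted` until `top_up` is called.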

Budget Shock: How Developers and Teams May Need to Adapt

For individual developers, usage-based billing introduces a new budgeting challenge: heavy AI use can push costs above the subscription price, while lighter use stays within the included allocation. Power users who rely on long Copilot sessions for refactoring, repository-wide analysis, or intensive code review could see higher monthly bills once their AI credits are depleted and they opt to buy more. On the other hand, developers who mostly use lightweight inline suggestions may stay within their allocations with minimal impact. In organizations, the shift reshapes cost management for AI coding tools. Enterprise customers gain more granular financial controls: they can pool unused credits across teams and define spending thresholds for users and cost centers, deciding whether usage can exceed those limits. This adds predictability but also forces engineering leaders to actively monitor AI consumption, set policies for agentic features, and weigh productivity gains against newly visible compute costs. [1][2]
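The enterprise controls described above, a shared pool plus per-cost-center thresholds with an overage policy, can be sketched in a few lines of Python. This is a hedged illustration under stated assumptions: `CostCenter`, `OrgPool`, and every number used with them are hypothetical and do not reflect GitHub's actual admin settings or API.

```python
from dataclasses import dataclass, field


@dataclass
class CostCenter:
    name: str
    threshold: float      # spending threshold in credits (hypothetical unit)
    allow_overage: bool   # policy: may usage exceed the threshold?
    spent: float = 0.0


@dataclass
class OrgPool:
    pooled_credits: float                        # credits shared across all teams
    centers: dict = field(default_factory=dict)  # cost center name -> CostCenter

    def add_center(self, center):
        self.centers[center.name] = center

    def spend(self, center_name, credits):
        """Try to spend from the shared pool; return True if the spend is allowed."""
        center = self.centers[center_name]
        over_threshold = center.spent + credits > center.threshold
        if over_threshold and not center.allow_overage:
            return False  # blocked by the cost center's own policy
        if credits > self.pooled_credits:
            return False  # the shared pool itself is exhausted
        center.spent += credits
        self.pooled_credits -= credits
        return True
```

In this model, a team with a 30-credit threshold and `allow_overage=False` is stopped once its next request would pass 30 credits, even if the organization's pool still has credits left. A team with `allow_overage=True` can keep drawing on unused pooled credits until the pool is empty.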

A Turning Point for AI Coding Tools and Usage-Based Billing

GitHub’s move is widely seen as a signal that the era of cheap, flat-rate AI coding may be ending. Under Copilot’s old model, a quick chat and a multi-hour autonomous coding session cost the user the same, with GitHub absorbing much of the rising inference cost. Tying pricing to token-based AI credits reflects a broader industry trend: as agentic AI coding tools become more powerful and resource-intensive, vendors are under pressure to align revenue with actual compute usage. Other AI providers have already begun quietly trimming flat-rate usage or reconsidering their consumer plans as long-running agents drive token consumption sharply higher. Going forward, developers should expect AI coding assistants to be priced more like cloud infrastructure: metered, transparent, and potentially expensive for heavy workloads. This could encourage more efficient prompts, smarter tool selection, and a renewed focus on cost-aware AI engineering practices. [1][2]