Google’s $40 Billion Anthropic Bet: Chips, Capacity and Cloud Lock‑In

Google’s planned Google Anthropic investment is structured as a staged, strategic wager on where AI workloads will live. Anthropic says Google is investing USD 10 billion (approx. RM46 billion) in cash at a USD 350 billion (approx. RM1.61 trillion) valuation, with a further USD 30 billion (approx. RM138 billion) contingent on performance milestones. In parallel, Google Cloud is committing 5 gigawatts of computing capacity over five years, with more potentially to follow, tying Anthropic’s future model training and serving tightly to Google’s infrastructure. The deal deepens an earlier arrangement with Broadcom around custom chips, and formalises Anthropic as a flagship customer for Google’s cloud AI chips and services. Even as Anthropic also secures capital from Amazon, the Google partnership effectively guarantees that Claude models will be first‑class citizens on Google Cloud, reinforcing long‑term cloud commitments and giving Google a powerful showcase for enterprise AI infrastructure at hyperscale.

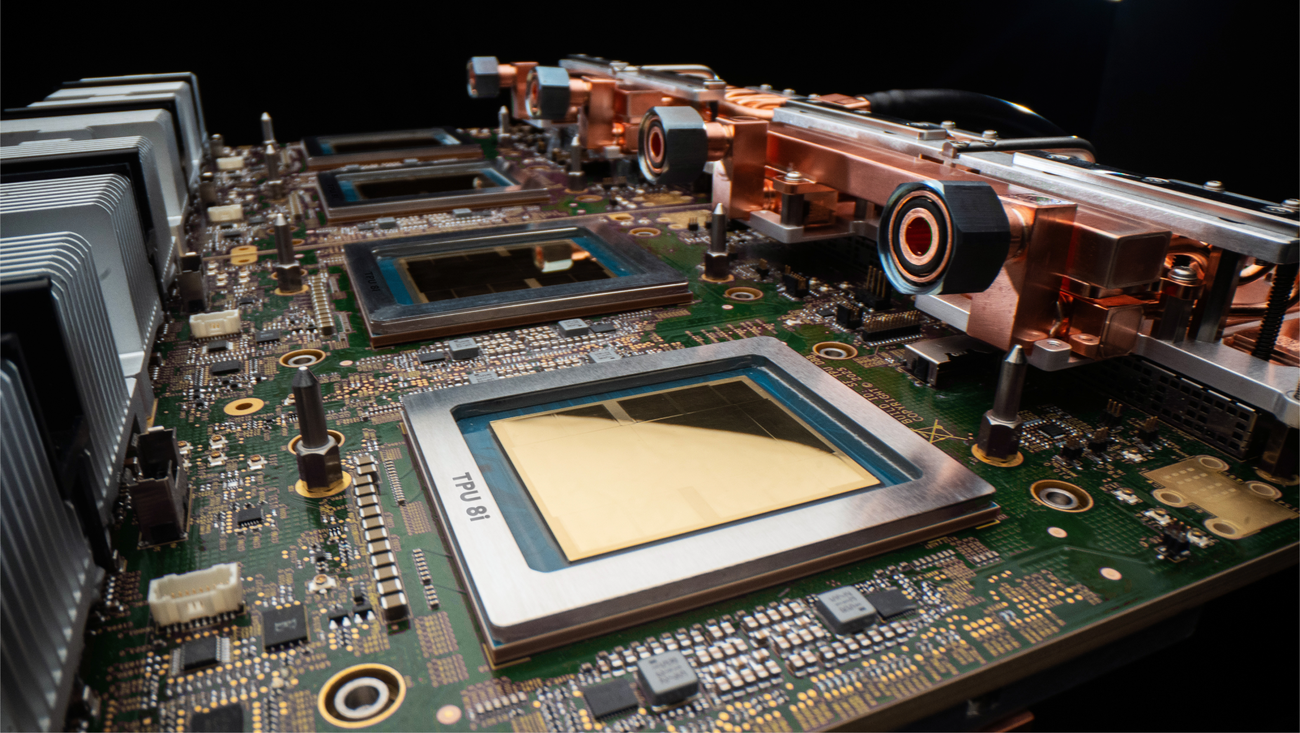

Inside the Eighth Generation TPU: Architected for the Agentic Era

Google’s eighth generation TPU is framed as a platform for the ‘agentic era’ of AI, where long‑running, reasoning‑heavy agents dominate workloads. The family splits into TPU 8t, focused on training, and TPU 8i, tuned for inference, both co‑designed alongside Google’s Gemini models. New Boardfly interconnect topology targets the communication demands of advanced reasoning models, while expanded SRAM in TPU 8i is sized around the key‑value cache footprint of large‑scale inference. A new Virgo network fabric is engineered for trillion‑parameter training, and both chips now sit on Google’s Axion ARM‑based CPU hosts, optimising the full system stack. Google highlights up to 2x better performance‑per‑watt versus the prior Ironwood generation, critical as data‑centre power, not silicon, becomes the hard constraint. Native support for JAX, PyTorch, SGLang and vLLM, plus bare‑metal access, positions the eighth generation TPU as a flexible, cloud AI chip for both frontier labs and enterprises deploying production‑grade AI agents.

Countering Microsoft–OpenAI and Amazon–Nvidia with Claude on TPUs

Pairing Anthropic’s Claude models with Google’s eighth generation TPU stack is central to Google’s strategy in the AI compute race. Anthropic is already a major user of Google chips, and the expanded deal ensures its next‑wave agents, such as Claude Code, are closely aligned with Google Cloud’s roadmap. This gives Google a direct analogue to Microsoft’s preferred access to OpenAI on Azure and Amazon’s deep alignment with Nvidia and Anthropic on AWS. By running Claude and Gemini across both custom TPUs and high‑end Nvidia GPUs, Google can offer enterprises portfolio‑level choice: cost‑optimised TPU 8i inference for high‑volume agent traffic, and Nvidia Rubin or Blackwell for specialised or highly portable workloads. For Google Cloud, Claude on TPUs becomes a differentiated pillar in its enterprise AI infrastructure pitch, complemented by open frameworks, bare‑metal access and sovereign‑grade deployments via Google Distributed Cloud for highly regulated sectors in Asia and beyond.

Implications for the AI Chip Landscape and Enterprise Buyers

Google’s push into custom cloud AI chips places it in more direct competition with Nvidia’s dominant GPUs and emerging ASIC vendors. Internal TPUs, co‑designed with partners like Broadcom and potentially Marvell, let Google tune every layer—networking, memory, power—for AI at scale, undercutting generic GPU deployments on performance‑per‑watt. At the same time, Google is not abandoning Nvidia: new A5X bare‑metal instances with Vera Rubin GPUs and Virgo networking promise up to 10x lower inference cost per token and 10x higher token throughput per megawatt over prior generations. For startups and enterprises, this arms race brings both opportunity and risk. They gain cheaper, faster options for training and inference, plus enhanced compliance via confidential computing and sovereign clouds. But deep discounts tied to specific chips or models increase vendor lock‑in. Malaysian firms must weigh immediate savings and performance against long‑term portability when standardising on Google TPUs, Nvidia‑centric stacks or rival hyperscaler ecosystems.

What’s Next for Inference Costs and Regional Platform Choices

Over the next two to three years, Google’s Anthropic partnership and eighth generation TPU rollout are likely to accelerate a price war in AI inference and training. As hyperscalers integrate custom accelerators, optimise power usage and scale optical‑grade networking fabrics like Virgo, the cost per token for common workloads should fall sharply. For Malaysian and regional enterprises, that means generative and agentic AI becoming economically viable across more use cases—from BPO automation to software development and financial services—without prohibitive infrastructure bills. Google’s mix of TPUs, Nvidia‑based A5X instances and distributed cloud options will appeal to organisations balancing cost, data‑residency and compliance. Yet competing offers from Microsoft–OpenAI and Amazon–Nvidia will be equally aggressive. The winners among local adopters will be those who design multi‑cloud, model‑agnostic strategies from the outset, avoiding hard dependence on a single provider’s silicon while still exploiting the rapid improvements in performance and enterprise AI infrastructure economics.