From Manual Crosshairs to Autonomous Drone Targeting

Military drone technology is rapidly shifting from operator-driven video feeds to systems that can find and follow targets on their own. At the center of this shift are autonomous drone targeting capabilities that hold a steady lock on moving vehicles, individuals, or key infrastructure without constant joystick input. AI tracking systems, now embedded on small vision and compute modules, can analyze video in real time, recognize a designated target, and keep it framed even as it changes direction or passes briefly behind obstacles. This autonomy is not about removing human oversight but about reducing operator workload in high-pressure missions, where attention is split across multiple drones, radios, and sensors. By letting the onboard AI handle the routine task of maintaining visual lock, crews can focus on higher-level tactical decisions, coordination with ground forces, and compliance with the rules of engagement.

BAE and Vantor: High-Accuracy Targeting Without GPS

One of the biggest obstacles for drones in modern conflict is operating in GPS-denied environments, where spoofing and jamming undermine traditional navigation and targeting. BAE Systems’ Geospatial eXploitation Products (GXP) and Vantor are tackling this with a new integration between the GXP software ecosystem and Vantor’s Raptor suite. Raptor Sync georegisters full-motion video from a drone’s onboard camera against Vantor’s 3D terrain data in real time, enabling accurate extraction of ground coordinates even when inertial sensors and metadata drift. By injecting corrected Key-Length-Value (KLV) metadata directly into the video stream at the edge, the system overrides faulty telemetry and restores weapon-quality coordinate accuracy, reportedly achieving an absolute accuracy within 3 meters. This approach keeps intelligence and targeting workflows functioning in contested electronic warfare environments, preserving operational tempo when conventional positioning systems can no longer be trusted.

AI Tracking Systems and Multi-Stream Video for Tactical Awareness

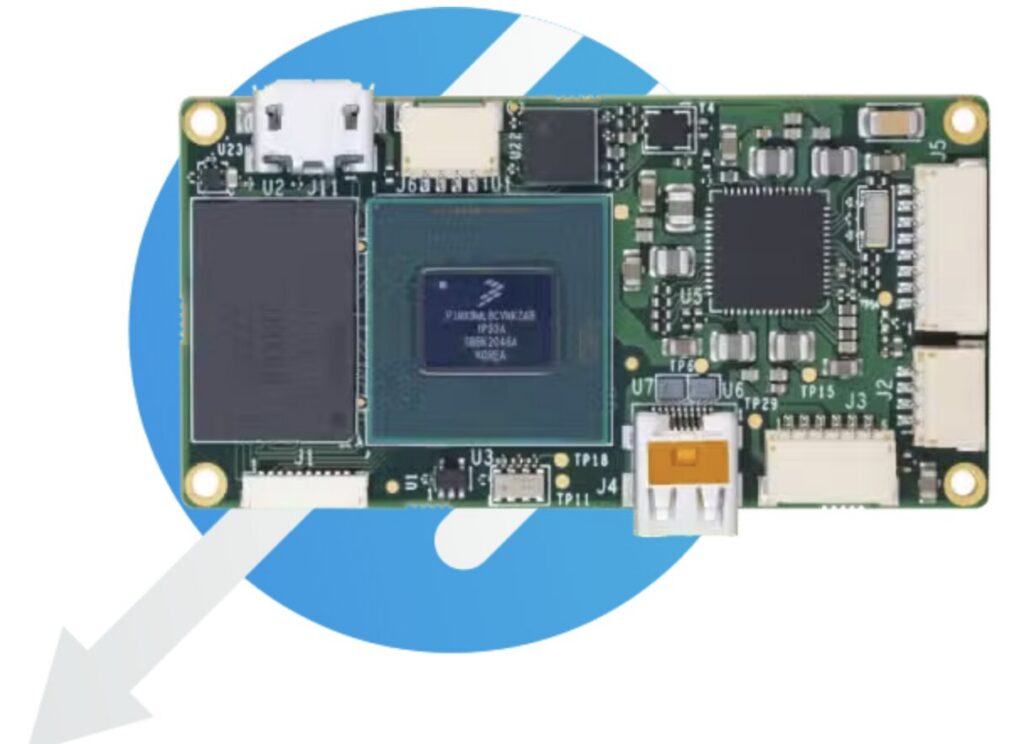

Autonomous drone targeting depends not only on precise coordinates, but also on rich, low-latency visual intelligence. Maris-Tech’s Jupiter platform family illustrates how AI tracking systems and onboard processing are converging. Jupiter-Drones can compress and stream live video from two cameras simultaneously, while Jupiter-AI expands this to multiple channels and adds a Hailo-8 processor for automated target detection and tracking. Smaller variants such as Jupiter-Nano and Jupiter Mini support real-time, multi-stream video on micro drones and other constrained platforms. This multi-camera approach gives operators and algorithms overlapping views of a scene, improving target identification and resilience when one sensor is degraded. With more intelligence pushed onto the drone itself, fleets can maintain continuous surveillance and autonomous lock-on in dynamic engagements, rather than depending on high-bandwidth links or external processing centers.

Contested Environments Drive a New Targeting Architecture

The combination of Vantor’s Raptor Sync and BAE’s GXP ecosystem points to a new architecture for operating in GPS-denied environments. Instead of assuming that raw telemetry is trustworthy, the system treats vision as the primary reference, continuously matching video against high-resolution 3D terrain models. Corrected metadata is injected into the video stream before exploitation, so downstream tools and analysts work from corrected coordinates rather than from drifting telemetry. At the same time, multi-domain interoperability allows data from different sensors and platforms to be fused, mitigating the limitations of any single asset. In effect, targeting confidence becomes largely a software problem, addressed by computer vision and geospatial analytics at the edge. This shift could allow drones with inexpensive, imperfect sensors to support precision tasks that previously required high-end, tightly calibrated platforms.

Reducing Operator Burden While Preserving Human Control

As autonomous drone targeting matures, a central goal is to offload cognitive burden without sidelining human decision-makers. AI tracking systems that automatically maintain lock-on, along with georegistration tools that correct metadata in real time, allow operators to manage more drones and more complex missions. Instead of manually steering sensors to compensate for metadata drift or to reacquire moving targets, crews can supervise autonomous behaviors, validate suggested coordinates, and authorize actions. This is especially critical in contested environments, where electronic warfare, cluttered airspace, and time-sensitive targets create intense pressure. By embedding more autonomy on the platform, militaries can sustain operational tempo, reduce fatigue, and maintain situational awareness across larger areas. At the same time, the human role shifts upward in the decision chain: from manipulating video feeds to orchestrating coordinated effects across a network of intelligent unmanned systems.