Emotional AI war clips: engineered to hit you before you think

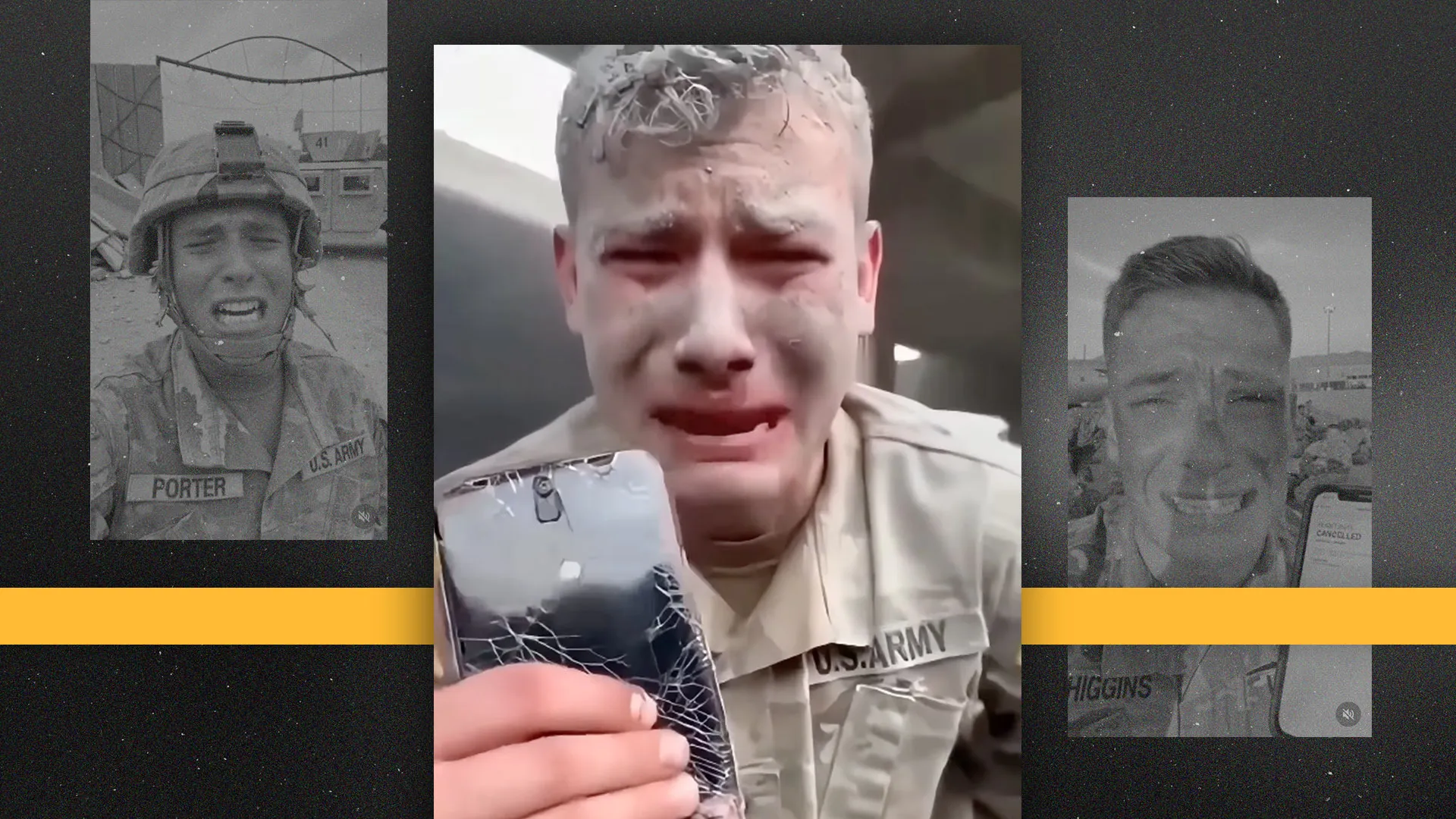

You’re doomscrolling after work when a video of a sobbing soldier or a burning ship suddenly appears. Before you can question it, you already feel anger, fear or sympathy, and that split second is exactly what many creators of AI-generated video are counting on. Researchers say entire accounts now specialise in fake war footage and crying “soldiers”, designed to trigger strong emotions before critical thinking kicks in. Some clips feature supposed U.S. service members in tears or yelling into the camera, with uniforms and insignia that look almost right but contain subtle errors. Others build fictional war scenes that feel ripped from breaking news. These videos are not always the work of spy agencies; many come from accounts run like content farms, chasing ad revenue or political engagement. For Malaysian social media users, this emotional design makes it much harder to separate genuine war reporting from AI video manipulation.

Recent fakes: from ‘Iranian’ ship attacks to invented troop deployments

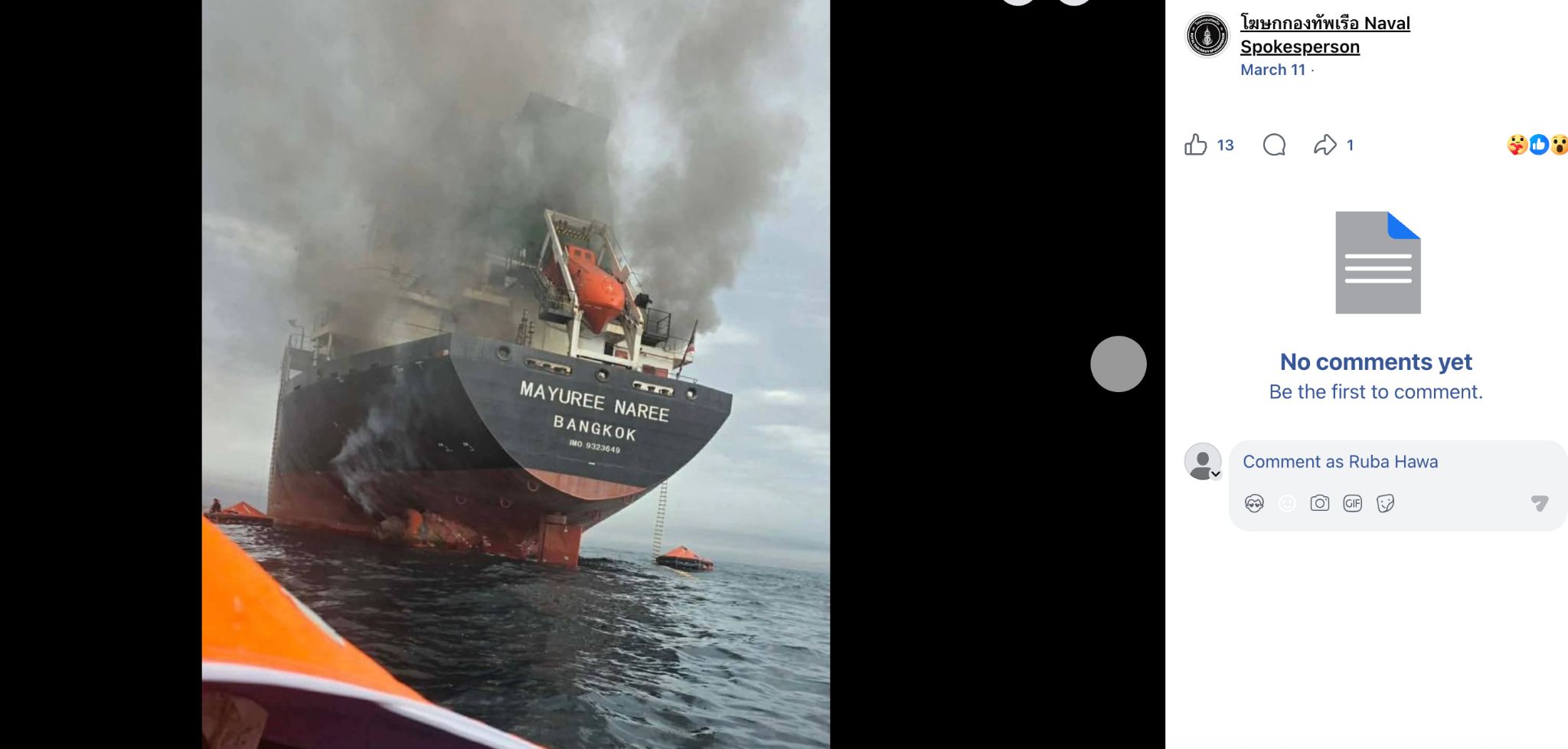

Fact-checkers are now debunking viral AI clips almost daily, including fake war footage from maritime and Middle East flashpoints. In one recent case, a TikTok account supportive of Iran shared a video that appeared to show an Israeli cargo ship ablaze after an attack. Investigators at Misbar found the clip was stitched together from two unrelated photos—a real Thai cargo ship fire near Oman and a separate Associated Press image of vessels in the Strait of Hormuz—then animated with AI. Technical analysis flagged a 99.9% likelihood of AI manipulation, with impossible smoke patterns, static flames and unnatural reflections. In another case, a viral video claimed to show U.S. soldiers flying to the Middle East to attack Iran. Misbar traced it to Google’s Veo tool and a TikTok account that regularly posts AI-generated clips, noting distorted faces, blended bodies and a visible Veo watermark that exposed the deception.

Content farms, politics and profit: why these videos keep coming

Behind many AI war clips are not grassroots eyewitnesses but organised content operations. Investigations into crying “soldier” videos found dozens of accounts pumping out similar AI scenes: distressed troops speaking straight to camera, uniforms almost accurate, emotions dialled to maximum. Their goal is simple: grow followers fast so that platform ad payouts or traffic to external sites become lucrative. One highly followed “Army girl” persona, presented as a young U.S. service member supporting a political movement, turned out to be entirely fabricated, built to funnel viewers toward paid adult content. Elsewhere, political actors experiment with AI-generated video for campaigning, such as a Glasgow election candidate using AI scenes of himself at rallies and in schools. Even when labelled as “illustrative AI scenes”, these clips risk misleading voters who never read the fine print. The same tactics can easily be repurposed to push geopolitical narratives or partisan talking points into Malaysian feeds.

How Malaysian users can spot and verify suspicious war videos

With AI video manipulation becoming routine, Malaysian users need a practical fact-checking habit for social media. Start with the source: who posted the clip first, and is it a known news outlet, an eyewitness, or a random new account churning out many similar videos? Look closely for visual glitches: warped faces and hands, insignia that look wrong, text that’s smeared or unreadable, or smoke and fire that repeat in unnatural loops. Watermarks from AI tools, like the Veo logo identified on a fake troop deployment video, are major red flags. Use reverse image or video search where possible to see if still frames match older photos from different events, as happened with the Thai cargo ship fire repurposed into a fake Iranian attack. Finally, cross-check the claim with trusted news organisations and professional fact-checkers before sharing. If something seems off, report the video and avoid amplifying it in group chats.
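For readers who prefer to see the checklist written down, the steps above can be sketched as a few lines of Python. This is only an illustration: the `ClipCheck` fields, the function names and the rule that any single red flag means “don’t share” are assumptions made for this example, not a tool used by Misbar or by any platform.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ClipCheck:
    """What a viewer noted about one clip (hypothetical field names)."""
    known_source: bool                  # first posted by a known outlet or identifiable eyewitness
    visual_glitches: bool               # warped faces or hands, wrong insignia, smeared text, looping smoke
    ai_tool_watermark: bool             # a visible AI tool logo, such as Veo
    frames_match_older_event: bool      # reverse search ties still frames to a different event
    confirmed_by_trusted_outlet: bool   # a trusted newsroom or fact-checker backs the claim


def red_flags(check: ClipCheck) -> List[str]:
    """Return the checklist items that should make a viewer pause."""
    flags = []
    if not check.known_source:
        flags.append("unknown or unverifiable source")
    if check.visual_glitches:
        flags.append("visual glitches")
    if check.ai_tool_watermark:
        flags.append("AI tool watermark")
    if check.frames_match_older_event:
        flags.append("frames match an older, unrelated event")
    if not check.confirmed_by_trusted_outlet:
        flags.append("not confirmed by trusted outlets or fact-checkers")
    return flags


def advice(check: ClipCheck) -> str:
    """Assumed rule for this sketch: any red flag means hold off on sharing."""
    if red_flags(check):
        return "do not share; consider reporting the video"
    return "no red flags noted; still verify before forwarding"


if __name__ == "__main__":
    # A clip resembling the fake troop deployment video described earlier.
    troop_clip = ClipCheck(
        known_source=False,
        visual_glitches=True,
        ai_tool_watermark=True,
        frames_match_older_event=False,
        confirmed_by_trusted_outlet=False,
    )
    for flag in red_flags(troop_clip):
        print("red flag:", flag)
    print(advice(troop_clip))
```

The sketch deliberately treats every item as a simple yes or no. Real verification involves judgement, and a clip with no obvious red flags can still be fake.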

Why this matters for Malaysia: elections, tensions and the limits of platform labels

AI-powered propaganda is no longer a distant problem confined to foreign elections. As regional maritime routes and Middle East conflicts intersect with Malaysian interests, misleading AI-generated video about ships, soldiers or religious communities can inflame public opinion at home. Ahead of any election, similar techniques could be used to fabricate rallies, “statements” by politicians or incidents targeting minorities. Regulators abroad are already testing deepfake detection systems and urging clear labelling of AI campaign material, but fact-checkers note that small, hard-to-see disclaimers are easily missed. Social platforms are rolling out labels, watermarking and fact-check banners, yet many deceptive clips still circulate widely before moderation kicks in, or reappear on new accounts. For Malaysians, the most effective defence is behavioural: slow down when a video hits you emotionally, verify before forwarding, and treat every dramatic clip, especially about war or politics, as unverified until proven otherwise.