Frontier AI Models Arrive With Bold Security Promises

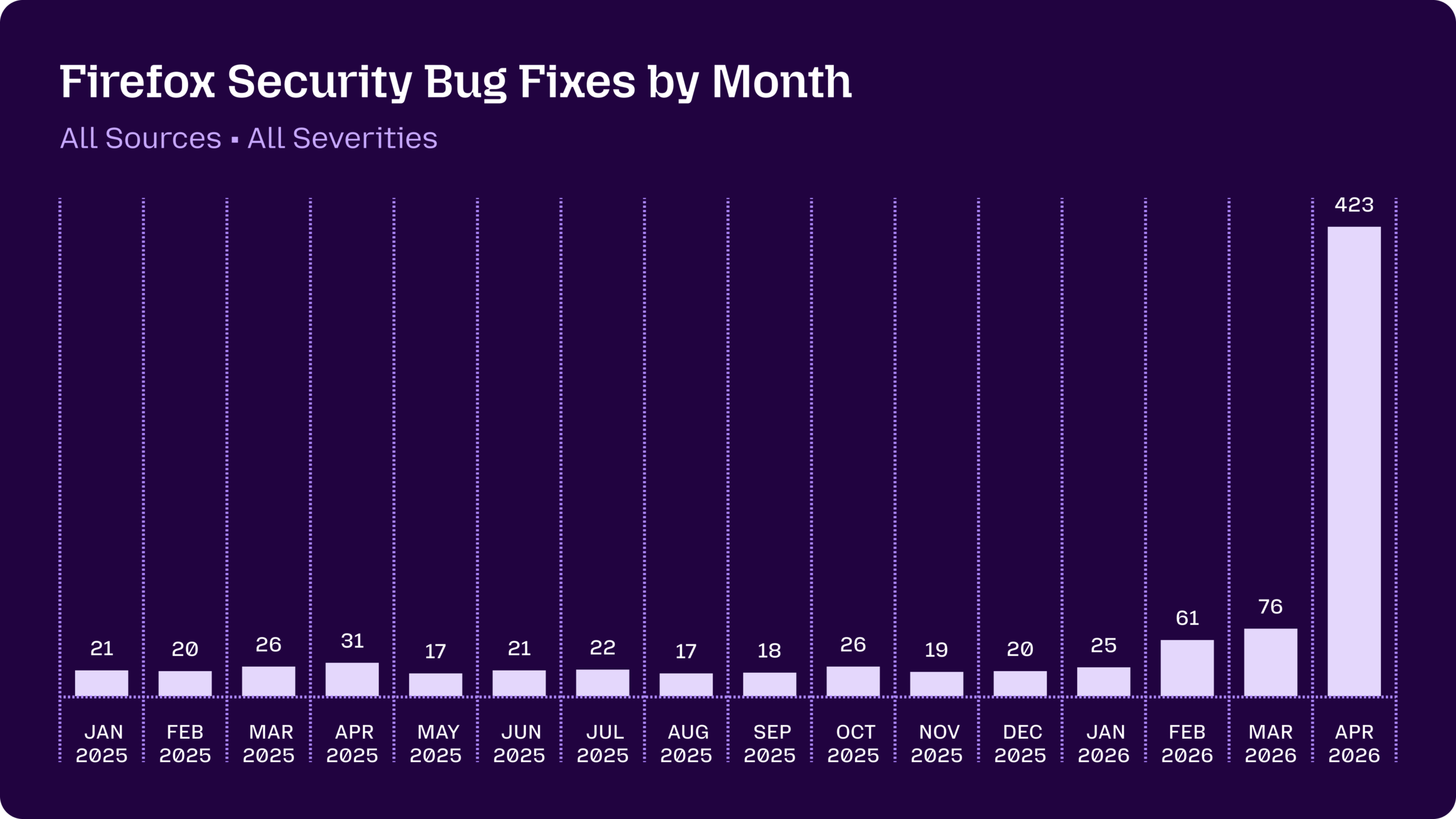

Frontier AI models are being marketed as transformative engines for vulnerability and threat detection, promising to harden the digital infrastructure that underpins research and industry. Anthropic’s Claude Mythos Preview, deployed through its Project Glasswing vetted-partner program, exemplifies this narrative. Mozilla’s Firefox team used Mythos and earlier Claude models to scan browser code and saw its output jump from a few dozen monthly bug fixes to 423 issues patched in April, after Mythos access began. Of those, Mythos alone surfaced 271 bugs, spanning defense-in-depth improvements, long-dormant code paths and lower-severity issues that human teams might never have prioritized. The roster of Glasswing partners (cloud providers, operating system maintainers, chip and networking vendors, browser developers and security firms) underscores the ambition: harden foundational systems at scale. Yet this surge in findings immediately raises harder questions for security leaders: which issues matter, what truly reduces risk, and how to measure cybersecurity ROI in an AI-first landscape.

Firefox vs. cURL: Two Case Studies, Two Very Different Stories

The Firefox and cURL experiences highlight the gap between the security promises made for frontier AI models and their real-world impact. In Firefox, Claude Mythos Preview helped uncover hundreds of issues, but only three of the April bugs warranted standalone CVEs, and the majority were low-severity or hardening fixes. For defenders, that still has value: shrinking the backlog of minor flaws leaves attackers fewer pieces to assemble into future exploit chains. By contrast, when cURL creator Daniel Stenberg had Mythos run against cURL’s thoroughly tested codebase, he received a report listing just five alleged security vulnerabilities. After several hours of review, his team confirmed only a single low-severity CVE; the rest were false positives, already documented behaviors, or simple non-security bugs. Stenberg concluded that Mythos is not a ground-breaking, game-changing model compared with existing AI tools. Together, these cases suggest that context, existing tooling and code maturity heavily influence how impressive AI vulnerability detection results really look.

Security Leaders Confront Hype, Backlogs and Unclear ROI

For CISOs and security engineering leaders, the mixed performance of AI-driven threat detection tools creates a strategic dilemma. Marketing narratives promise that access to Mythos-tier systems will redefine vulnerability management and cybersecurity R&D, yet early outcomes skew toward low-severity findings and incremental hardening. Experts such as those at the National Cybersecurity Alliance argue that this may still be useful: organizations often carry long-standing backlogs of "known but deprioritized" issues that never get attention because higher-severity vulnerabilities dominate. AI that quickly clears this long tail can, in theory, improve overall resilience and help prevent low-severity weaknesses from chaining into major incidents. However, integrating new AI scanners, triaging noisy reports and retraining teams all carry significant operational and opportunity costs. Without clear metrics showing reduced incident rates or faster remediation of critical flaws, security leaders are left questioning the true cybersecurity ROI of security initiatives built on frontier AI models.

Is AI-Driven Security a Breakthrough or a Branding Exercise?

The cURL episode has intensified debate over whether current advances in AI vulnerability detection are substantive or mainly branding. Stenberg, who has already run cURL through tools like AISLE, Zeropath and OpenAI Codex Security, notes that those systems collectively triggered hundreds of bug fixes and a dozen or more CVEs in under a year. Against that baseline, Mythos finding one additional low-severity CVE looks less like a revolution and more like another incremental tool. At the same time, programs such as Project Glasswing and OpenAI’s Trusted Access for Cyber are concentrating powerful models in the hands of a select group of defenders whose software makes up much of the world’s attack surface. If these models eventually automate not just scanning but exploitation workflows, they could reshape both offense and defense. For now, the industry sits in a liminal phase where AI-driven threat detection is neither empty hype nor a clear-cut breakthrough, just a promising, noisy work in progress.