Why Schools Are Rushing Toward AI—and Why Families Should Care

Schools are under pressure to do more with less: personalize learning, reduce teacher burnout, and manage endless paperwork. AI in schools promises quick help: tools that grade quizzes, draft lesson plans, offer on-demand tutoring, or automate admin tasks. When used well, these systems can free teachers to focus on relationships, provide extra practice for struggling students, and give quicker feedback. But speed can be dangerous. Past waves of classroom technology show how easily digital tools can drift from “helpful” to “harmful.” Some platforms rely on persuasive, game-like designs that keep students clicking without truly reading or thinking. These designs can be especially problematic for neurodivergent learners who may already struggle with attention and social engagement. Families and educators, therefore, need more than enthusiasm. They need an education AI framework that asks: Does this tool deepen real learning? Does it protect student data? And does it keep technology in a clearly supplemental role, rather than letting screens become the center of school life?

The Three Pillars of a Sustainable Education AI Framework

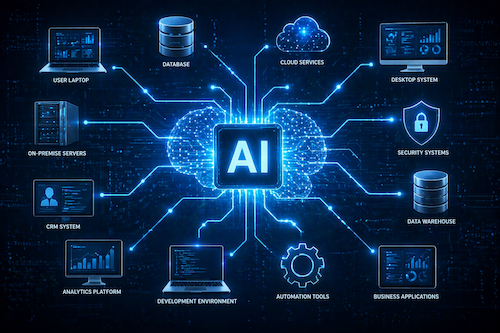

To move from quick experiments to long-term, safe practice, school districts need more than a list of apps. They need a sustainable education AI framework built on three pillars: strong governance, clear educational purpose, and robust data integrity protections. Strong school district governance means AI decisions are made by a cross-functional team—teachers, administrators, IT leaders, parents, and board members—who evaluate tools before they reach classrooms. Clear educational purpose ensures districts define the problem first and only adopt AI when it directly supports learning goals instead of chasing novelty. Robust data integrity protections cover both student data privacy and data quality, so AI systems get accurate information and do not leak or misuse it. Together, these pillars create a classroom technology policy that encourages careful experimentation while placing student well-being and meaningful learning at the center, not the software itself.

What AI Governance Looks Like in Everyday School Life

AI governance can sound abstract, but for parents and teachers it should look very concrete. A district with a serious classroom technology policy will have a standing AI governance team that sets rules for which tools are allowed, how they’re tested, and how results are monitored. This group reviews new platforms for alignment with curriculum, student data privacy requirements, and equity goals before students ever log in. It defines clear approval processes, communicates decisions openly, and explains why some tools are rejected or limited. Governance also includes ongoing review: Are students actually learning more, or just clicking faster? Are any groups of students being left behind or unfairly labeled by algorithms? When schools combine instructional expertise with technical insight on this team, teachers gain a safe space to try AI while knowing guardrails exist. For families, effective governance means fewer surprises, more transparency, and clear points of accountability when something goes wrong.

Judging Real Learning, Not Just Shiny Tools

Not every AI tool that looks innovative actually improves learning. Districts and educators should start with questions, not features: What specific problem does this AI address—reading comprehension, feedback speed, language support? Who benefits most, and how will we know it works? A strong education AI framework requires measurable outcomes, such as improved writing quality or reduced grading time that teachers then reinvest in direct student support. It also distinguishes between AI that substitutes for thinking and AI that scaffolds deeper thinking. If a platform encourages students to skim or guess, or rewards streaks instead of understanding, it risks repeating the pattern of game-like tools where students click to get through without absorbing content. Schools should pilot AI on a small scale, gather teacher and student feedback, and compare results with non-AI approaches. Only tools that demonstrably enhance human teaching—rather than replace it—should be scaled districtwide.

Protecting Student Data and Keeping Tech in Its Place: A Checklist for Families

AI systems depend on student data, which makes safeguards non-negotiable. Districts need clear data privacy agreements for every platform, detailed inventories of what information is collected, and strict controls on who can access it. Strong data infrastructure also means “clean” records and secure connections between systems, so AI tools receive accurate data and do not expose sensitive information. At the same time, schools must guard against over-automation: using AI to manage behavior, track every click, or replace human interactions. Technology should stay supplemental, supporting reading, writing, and face-to-face learning rather than becoming the main event.

When your school announces an AI initiative, parents and teachers can ask: Who approved this tool, and based on what criteria? What problem is it solving? What data does it collect, and where is it stored? How will success be measured? And most importantly: How does this keep teachers, not algorithms, at the heart of education?