The Measurement Gap: Faster Cycles, Little Proof

Many engineering leaders hear the same story: developers say AI coding tools make tasks feel faster, cycle times seem shorter, and backlogs look more manageable. Yet when you ask for proof, most teams lack a consistent engineering metrics framework that links AI usage to measurable outcomes in the codebase. Industry numbers are also noisy. Some trials report dramatically faster task completion, some forecasts project sizeable future gains, and some surveys show that only a minority of teams report high productivity benefits. These figures cover different scopes and time horizons, which makes them hard to compare or to use as a basis for estimating AI coding tools ROI. Meanwhile, finance teams and executives are asked to commit to large AI investments based on anecdotes and vendor claims. Closing this measurement gap requires moving beyond subjective reports to metrics that are repeatable, comparable over time, and grounded in actual delivery performance.

Why Strong Engineering Foundations Come First

Before tuning dashboards or calculating AI coding tools ROI, leaders need to ask a harder question: is the engineering system ready for AI to amplify it? Research from the DORA team frames AI as an amplifier rather than a magic fix. High-performing organisations with solid internal platforms, clear workflows, and healthy deployment practices tend to see AI magnify their strengths. Struggling teams, by contrast, often experience increased chaos as AI accelerates already fragile processes. The same research also highlights an instability tax: more code moving faster can overwhelm pipelines and manual review gates, driving up change failure rates and downtime. Without investments in continuous integration, automated testing, and small batch delivery, AI adoption can raise local productivity while harming overall stability. In other words, strong engineering foundations are not optional. They are prerequisites both for AI implementation success and for metrics that reflect real, sustainable improvements.

Using DORA Metrics to Track AI’s Impact

DORA metrics provide a proven starting point for developer productivity measurement in the AI era: deployment frequency, lead time for changes, change failure rate, and time to restore service. Rather than inventing new KPIs from scratch, leaders can first baseline these metrics before AI adoption, then track how they shift as tools roll out. The latest DORA research shows AI adoption correlating with higher throughput but also with lower delivery stability, underscoring the need to look at the full picture. Improvements in deployment frequency and lead time are valuable only if change failure rate and recovery times stay within acceptable boundaries. DORA’s value model links these delivery metrics to non-financial outcomes like developer and user experience, and then to financial outcomes such as cost savings and revenue growth. This creates a structured chain from AI usage to business value, instead of relying on isolated speed anecdotes.
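As a rough illustration of what baselining could look like, the sketch below summarises the four DORA metrics over one observation window. The `Deployment` record shape, the field names, and the choice of medians and a per-week rate are assumptions made for this example, not a DORA-defined schema; adapt them to whatever your CI/CD and incident tooling actually export.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape; map your own pipeline data onto it.
    committed_at: datetime                  # first commit of the change
    deployed_at: datetime                   # when the change reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def hours_between(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600


def dora_metrics(deployments: list[Deployment], window_days: int) -> dict[str, float]:
    """Summarise the four DORA metrics for one observation window."""
    if not deployments:
        raise ValueError("need at least one deployment in the window")
    failures = [d for d in deployments if d.failed]
    restore_hours = [
        hours_between(d.deployed_at, d.restored_at)
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deploys_per_week": len(deployments) / (window_days / 7),
        "median_lead_time_hours": median(
            hours_between(d.committed_at, d.deployed_at) for d in deployments
        ),
        "change_failure_rate": len(failures) / len(deployments),
        # 0.0 here means "no restored failures observed", not "instant recovery".
        "median_restore_hours": median(restore_hours) if restore_hours else 0.0,
    }


# Usage: compute once over a pre-AI window, then again for each post-rollout window.
start = datetime(2024, 1, 1)
baseline = dora_metrics(
    [
        Deployment(start, start + timedelta(hours=10)),
        Deployment(start, start + timedelta(hours=20), failed=True,
                   restored_at=start + timedelta(hours=22)),
    ],
    window_days=14,
)
```

Running the same function over equal-length windows before and after rollout keeps the comparison like-for-like, which matters more than the exact aggregation chosen.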

Commit-Level Analysis and the Role of ETV

While DORA metrics show system-level outcomes, some teams want a closer view of how AI changes day-to-day work. Commit-level metrics such as Engineering Throughput Value (ETV) offer one approach. ETV scores each commit against a team’s own pre-AI baseline, allowing leaders to see whether the code being produced after AI adoption represents real throughput gains or simply noise. This guards against AI washing, where unverified productivity claims are attributed to AI without clear evidence in the codebase or workflow changes. By comparing quarters before and after AI rollout, ETV can illuminate whether increased commit volume aligns with meaningful delivery improvements or rising instability. Combining a commit-focused lens like ETV with DORA’s system-level metrics helps clarify where AI is helping, where it is adding verification tax (the extra effort of reviewing and validating AI-generated code), and where additional investments in automation or training are needed to turn short-term spikes into durable gains.

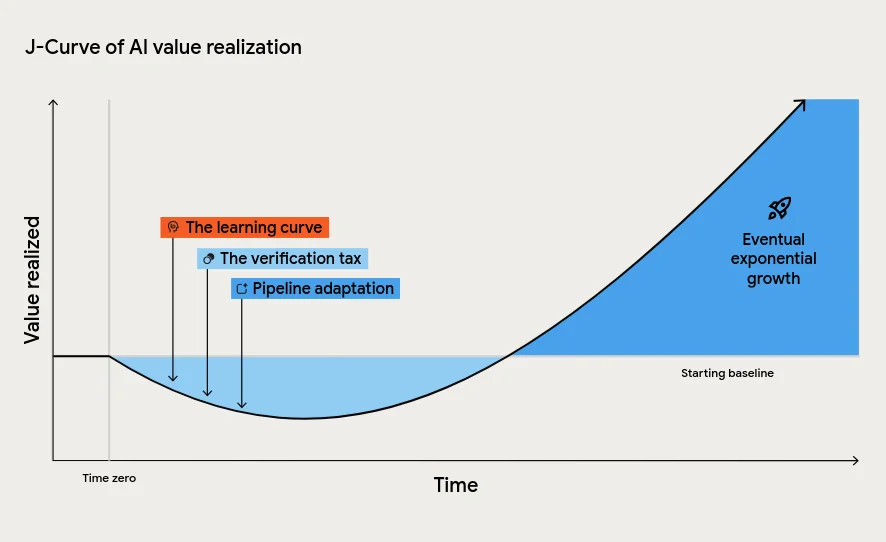

Distinguishing the J-Curve from Lasting ROI

A key challenge for leaders is distinguishing temporary productivity spikes from durable AI implementation success. DORA’s J-Curve of value realisation describes an early dip caused by learning curves, verification tax for AI-generated code, and downstream process changes. During this period, metrics may show mixed signals: local speed-ups alongside higher failure rates or slower approvals. The risk is misreading this dip as failure and cancelling investments just before long-term gains emerge. To avoid that, leaders should define a time-bound experiment window, track DORA metrics and commit-level indicators like ETV, and explicitly model expected shifts in each phase. The goal is not to maximise raw code output but to reduce bottlenecks in the overall delivery system. When throughput rises, stability holds or improves, and developers report better experience, you can more confidently conclude that AI coding tools ROI is real, sustained, and aligned with business value.
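One way to make the "throughput rises, stability holds" test explicit is a small comparison against the pre-AI baseline, as in the sketch below. The metric names, the 10% stability tolerance, and the three labels are all assumptions chosen for illustration; they are not thresholds published by DORA, and developer experience would still need to be assessed separately, for example through surveys.

```python
def assess_rollout(
    baseline: dict[str, float],
    current: dict[str, float],
    stability_tolerance: float = 0.10,  # assumed: allow up to 10% worsening
) -> str:
    """Classify a post-rollout metrics snapshot relative to the pre-AI baseline."""
    # Throughput counts as improved when deploys are more frequent
    # and lead time has not grown.
    throughput_up = (
        current["deploys_per_week"] > baseline["deploys_per_week"]
        and current["median_lead_time_hours"] <= baseline["median_lead_time_hours"]
    )
    # Stability "holds" if neither stability metric worsens beyond the tolerance.
    limit = 1 + stability_tolerance
    stability_holds = (
        current["change_failure_rate"] <= baseline["change_failure_rate"] * limit
        and current["median_restore_hours"] <= baseline["median_restore_hours"] * limit
    )
    if throughput_up and stability_holds:
        return "sustained gain"
    if throughput_up:
        return "speed-up with instability"
    return "no clear gain yet"


# Usage with made-up numbers for a pre-AI quarter and a later quarter.
before = {"deploys_per_week": 5, "median_lead_time_hours": 48,
          "change_failure_rate": 0.10, "median_restore_hours": 4}
after = {"deploys_per_week": 8, "median_lead_time_hours": 36,
         "change_failure_rate": 0.20, "median_restore_hours": 4}
print(assess_rollout(before, after))  # prints "speed-up with instability"
```

A "speed-up with instability" result early in the experiment window is consistent with the J-Curve dip, so it is a signal to keep measuring and fix bottlenecks, not necessarily to cancel the investment.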