AI Coding Tools Are Decoupling Code from Understanding

AI coding tools have made it dramatically faster to generate working code, but they have not made that code any easier to understand. Surveys cited by Octopus Deploy suggest juniors complete tasks up to 55% faster with AI assistance, while adoption of tools like Claude Code has surged, reaching double-digit usage globally in recent developer surveys. The result is a widening gap between code output and conceptual grasp. Senior engineers can often lean on years of architecture and domain experience to sanity-check AI-generated snippets. Newer developers lack that context, yet the tooling makes it trivial for them to produce sophisticated implementations they couldn’t design alone. This dynamic is creating a cohort of “expert beginners” who ship clean, test-passing code yet struggle to explain why it works or why, in subtle edge cases, it doesn’t. Productivity rises on paper, even as foundational debugging skills quietly erode.

Code Review Is Exposing a New Kind of Skills Gap

Engineering leaders are increasingly discovering the downside of AI-assisted development during code review. On the surface, changesets arrive fast, tests pass, and style guidelines are met. But deeper reviews occasionally uncover buried flaws, such as timing bugs that only surface when multiple conditions align precisely. When asked to defend or adjust such code, some juniors falter because they never truly authored the logic; they orchestrated AI suggestions instead. Managers describe this new profile as conscientious but opaque: developers who can refactor quickly yet cannot reason about failure modes without tool assistance. This is not just a competence issue; it shifts the burden of verification onto reviewers, who must now assume that any apparently clean diff might hide misunderstood abstractions. As AI accelerates output, code review becomes both more critical and more fragile, especially when teams mistake fast commits for genuine developer productivity.
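To make the idea of a timing bug concrete, here is a minimal Python sketch of a check-then-act flaw. It is a hypothetical illustration, not code from any team described here: the `Inventory` class and the helper names are invented, and a generator `yield` stands in for the moment when another request could run between a check and the update that depends on it.

```python
"""Hypothetical illustration: a check-then-act bug that a sequential test misses."""


class Inventory:
    def __init__(self, stock: int) -> None:
        self.stock = stock

    def reserve_steps(self, qty: int):
        """Reserve `qty` units, pausing between the check and the update.

        The yield marks the point where another request could run,
        for example between a database read and the write that follows it.
        """
        has_enough = self.stock >= qty  # check
        yield  # another request may interleave here
        if has_enough:
            self.stock -= qty  # act on a check that may now be stale
        return has_enough


def _finish(gen):
    """Drive a generator to completion and return its return value."""
    try:
        while True:
            next(gen)
    except StopIteration as stop:
        return stop.value


def run_sequential(inv: Inventory, quantities: list[int]) -> list[bool]:
    """Run each reservation to completion before starting the next."""
    return [_finish(inv.reserve_steps(qty)) for qty in quantities]


def run_interleaved(inv: Inventory, quantities: list[int]) -> list[bool]:
    """Let every reservation perform its check first, then apply all updates."""
    pending = [inv.reserve_steps(qty) for qty in quantities]
    for gen in pending:
        next(gen)  # advance to the yield: the check has run, the update has not
    return [_finish(gen) for gen in pending]
```

With one unit in stock and two requests for one unit each, `run_sequential` grants the first request and refuses the second, which is what a typical unit test would exercise. `run_interleaved` grants both and leaves the stock at -1. The diff looks clean and the test passes, yet the flaw is only visible to someone who can reason about what may happen between the check and the update.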

When AI Hits Its Limits: Long-Running Tasks and Complex Debugging

The weaknesses of AI assistants become particularly visible in long-running tasks and complex debugging scenarios. Research from Microsoft’s DELEGATE-52 benchmark shows that even advanced models degrade over multi-step workflows: across 20 delegated interactions, frontier systems lost on average a quarter of document content, and the average across all models tested was around half. While models performed comparatively better in programming tasks, they still exhibited catastrophic corruption in many domains, with errors often appearing suddenly rather than gradually. For debugging, this means AI may help diagnose straightforward issues but can introduce subtle corruption when asked to iteratively modify codebases or configuration artifacts. Developers who treat AI as an always-correct co-pilot risk inheriting these hidden flaws. When production systems misbehave weeks later, they may lack both the mental model and the clean history needed to trace failures back through layers of AI-influenced changes.

The Vanishing Training Ground for Debugging Skills

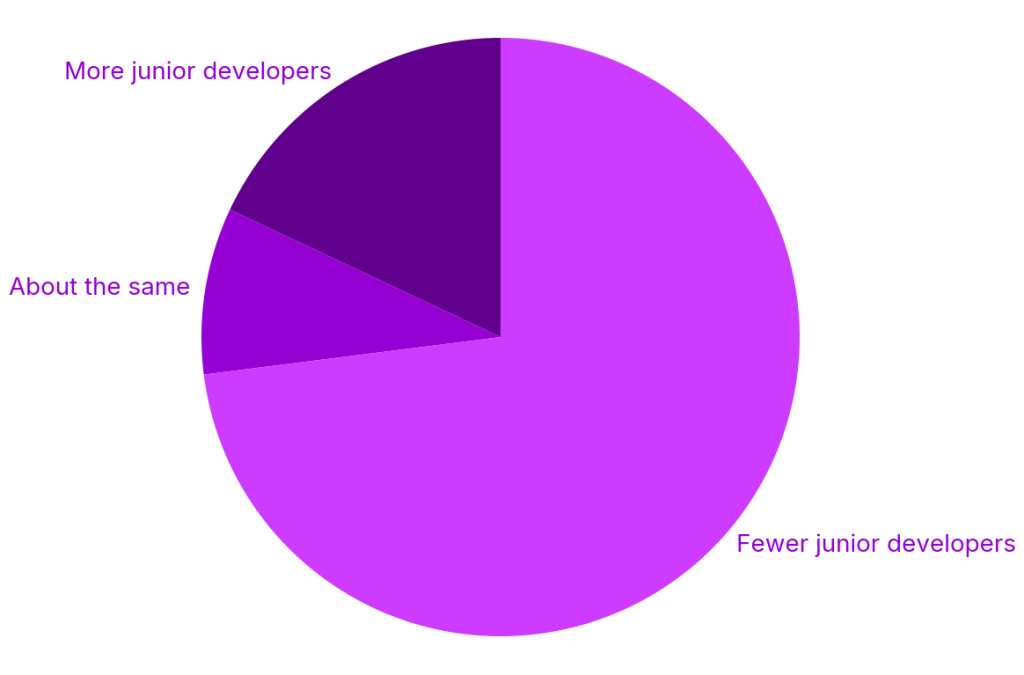

Just as AI coding tools are accelerating output, the traditional pipeline for growing debugging skills is shrinking. Industry analyses highlight sharp drops in entry-level tech roles and graduate positions over recent years, alongside a reported 73% of organizations reducing junior hiring. Many companies are redirecting investment from mentoring early-career developers toward purchasing AI tooling. This “seniors with AI” model assumes that experienced engineers, augmented by assistants, can replace much of the work once done by junior cohorts. But debugging expertise has historically been built through repeated exposure to messy, real-world failures, exactly the kind of work juniors used to cut their teeth on. Without those early opportunities, and with AI buffering them from wrestling directly with problems, newer developers risk plateauing quickly. The industry gains short-term throughput yet undermines the apprenticeship path that once produced resilient problem-solvers.

Balancing Productivity Gains with Core Competencies

Organizations face a strategic choice: embrace AI coding tools for their undeniable productivity benefits, or restrain them to protect foundational debugging skills. The answer has to be a balance. Leaders can treat AI output as a starting point, not a final product, requiring developers to explain, refactor, and test code without ongoing tool guidance. Code review processes may need explicit checks for reasoning, asking “why does this work?” as often as “does this pass tests?” Teams should also invest in structured debugging practice, from chaos exercises to postmortem-driven learning, to keep human problem-solving sharp. Opting out of AI entirely is risky too; senior engineers who ignore these tools may lose visibility into emerging coding patterns. The goal is not to reject automation but to design workflows where AI enhances developer productivity without hollowing out the critical thinking and debugging skills that keep complex systems reliable.