A Quiet Reveal Ahead of Google I/O 2026

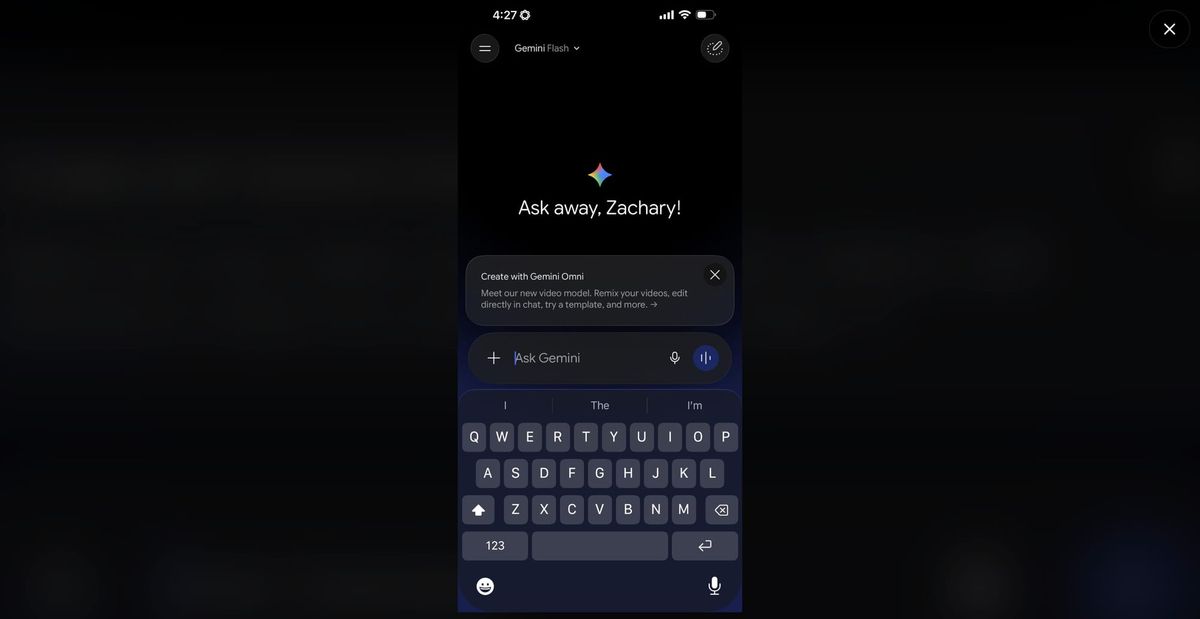

Gemini Omni’s video model surfaced quietly over the weekend, as screenshots of a revised Gemini interface began circulating on Reddit. A new model card labeled “Create with Gemini Omni” described it as a video model able to remix clips, edit directly in chat, and apply templates, lending weight to long-standing speculation that Google would unify video capabilities under the Gemini brand in time for Google I/O 2026. The appearance looks like either an accidental rollout or a tightly controlled A/B test, with only a subset of users seeing the new options and a fresh usage limits tab in settings. Early testers also noticed that video generation consumed credits quickly, suggesting a metered system similar to other Gemini features. If the exposure was deliberate, these hints point to a strategic soft launch: enough visibility to shape expectations and gather feedback, but still framed as a pre-keynote preview rather than a full public release.

Editing First: Chat-Based Control for Creators

Initial reactions suggest that Gemini Omni’s strength is not raw video generation but editing and control. Users report that while the model’s cinematic fidelity trails ByteDance’s Seedance 2, its ability to manipulate existing footage is unusually strong for a model at this early stage. Tasks that usually require timeline scrubbing and masking (such as removing watermarks, swapping objects in a scene, or rewriting shots via text prompts) appear to work directly inside a chat-style interface. For creators, that positions Gemini Omni as an editing layer on top of existing footage rather than a pure content generator. The approach mirrors Google’s Nano Banana image model, which launched with modest generation quality but quickly became a top-tier editing tool before being upgraded further. If Google runs the same playbook for video, Omni could become a go-to assistant for fast edits, revisions, and versioning long before it becomes a cinematic powerhouse.

How Gemini Omni Could Reshape Creator Workflows

For working creators, the promise of Gemini Omni lies in workflow compression. Instead of juggling dedicated AI video editing tools, traditional non-linear editors, and separate apps for things like watermark cleanup or object replacement, creators could route many tasks through a single Gemini conversation. Need a vertical cut for social, a color pass tuned to a specific mood, or a last-minute scene rewrite? In theory, those become chat prompts rather than manual edits. The new usage limits tab and the rapid credit consumption suggest Google is treating video as a heavier, more premium capability, but also as part of a broader Gemini surface that spans Android devices and the web. If Omni slots into Google’s existing creator ecosystem (Photos, Drive, YouTube, and mobile editing apps), it could enable automated video production flows that move from capture to cut to upload with far fewer context switches.

Competing in a Crowded Field of AI Video Editing Tools

Gemini Omni is arriving in a landscape already filled with AI video editing tools that emphasize visually striking generation. Early feedback indicates that Omni’s Flash-tier outputs do not yet match the cinematic bar set by leaders like Seedance 2, which may limit its immediate appeal among creators chasing the most photorealistic AI clips. However, Google appears to be betting on depth of integration and modality unification rather than headline-grabbing demos alone. By weaving video into the same Gemini environment that already handles text, images, and code, Google can offer creators a consistent interface for brainstorming, scripting, editing, and publishing. Tiered variants such as Flash and a likely Pro option could further segment use cases: fast, iterative edits on one side, heavier automated video production on the other. The real test will be whether this integrated strategy can offset any short-term quality gap in raw generation.

What to Watch for at Google I/O 2026

With Google I/O 2026 just around the corner, Gemini Omni’s early leak sets expectations for a bigger story about AI-native creation tools. Key questions remain: how deeply will Omni integrate with YouTube and Android camera apps, what safeguards will govern watermark removal and scene rewriting, and how will Google price and meter access given video’s heavy compute demands? Developers will also be watching for APIs that allow third-party apps to tap into Omni’s editing engine, potentially spreading its capabilities across existing creator tools instead of confining them to Gemini’s own interface. If Google follows through on its unified Gemini strategy, Omni could become the backbone of automated video production workflows that span ideation, capture, edit, and distribution. If the weekend’s exposure was a controlled leak rather than an accident, it suggests that Google is carefully shaping this narrative ahead of the keynote, positioning Omni as a foundational, long-term bet rather than a one-off demo.