Real-World Data: GPT-5.5 Costs 49–92% More Than GPT-5.4

OpenRouter’s April usage-log analysis shows a sharp rise in production costs when teams switch from GPT-5.4 to GPT-5.5. Across real customer traffic, effective costs climbed by between 49% and 92%, even though the expected increase had previously been estimated at only 19%. The gap shows why model cost comparisons must go beyond headline pricing. In practice, production workloads include variable prompts, multi-turn conversations, and retry loops that amplify subtle changes in model behavior. For prompts under 2,000 tokens, average cost per million tokens rose from USD 4.89 (approx. RM22.50) to USD 9.37 (approx. RM43.10), an increase of about 92%. For prompts between 50,000 and 128,000 tokens, costs increased from USD 0.74 (approx. RM3.40) to USD 1.10 (approx. RM5.05), an increase of about 49%. At the short-prompt end, GPT-5.5 nearly doubles effective spending per token, even before traffic scales fully.
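The 49% and 92% figures follow directly from the per-band costs quoted above. As a quick check, the minimal Python sketch below recomputes them. The dictionary keys and the helper name `pct_increase` are illustrative and are not part of OpenRouter's analysis.

```python
# Effective cost per million tokens in USD (GPT-5.4, GPT-5.5),
# as quoted from the April usage-log analysis.
observed = {
    "under 2,000 tokens": (4.89, 9.37),
    "50,000-128,000 tokens": (0.74, 1.10),
}


def pct_increase(old: float, new: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100.0


increases = {band: pct_increase(old, new) for band, (old, new) in observed.items()}

for band, pct in increases.items():
    print(f"{band}: +{pct:.0f}%")
```

Rounded to whole percentages, the short-prompt band comes out at 92% and the long-context band at 49%, matching the range in the headline.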

Why OpenAI’s Efficiency Claims Don’t Tell the Whole Story

OpenAI positions GPT-5.5 as more token-efficient than GPT-5.4, with similar per-token latency and shorter answers at very long context lengths. OpenRouter’s data, however, shows that efficiency gains in one band can hide cost inflation in another. For prompts above 10,000 tokens, GPT-5.5 generated 19–34% fewer completion tokens, which should help long-context jobs. But in the 2,000–10,000 token range, median completions grew by 52%, and that range matters for many enterprise workloads. The mismatch shows how vendor efficiency claims can diverge from actual deployment costs. Production workloads rarely live exclusively in the longest context windows that vendors highlight. Instead, they mix short and mid-range prompts, tool calls, and follow-up questions. In that mix, longer completions in the middle bands can quietly inflate GPT-5.5 bills despite the claims of overall token efficiency.

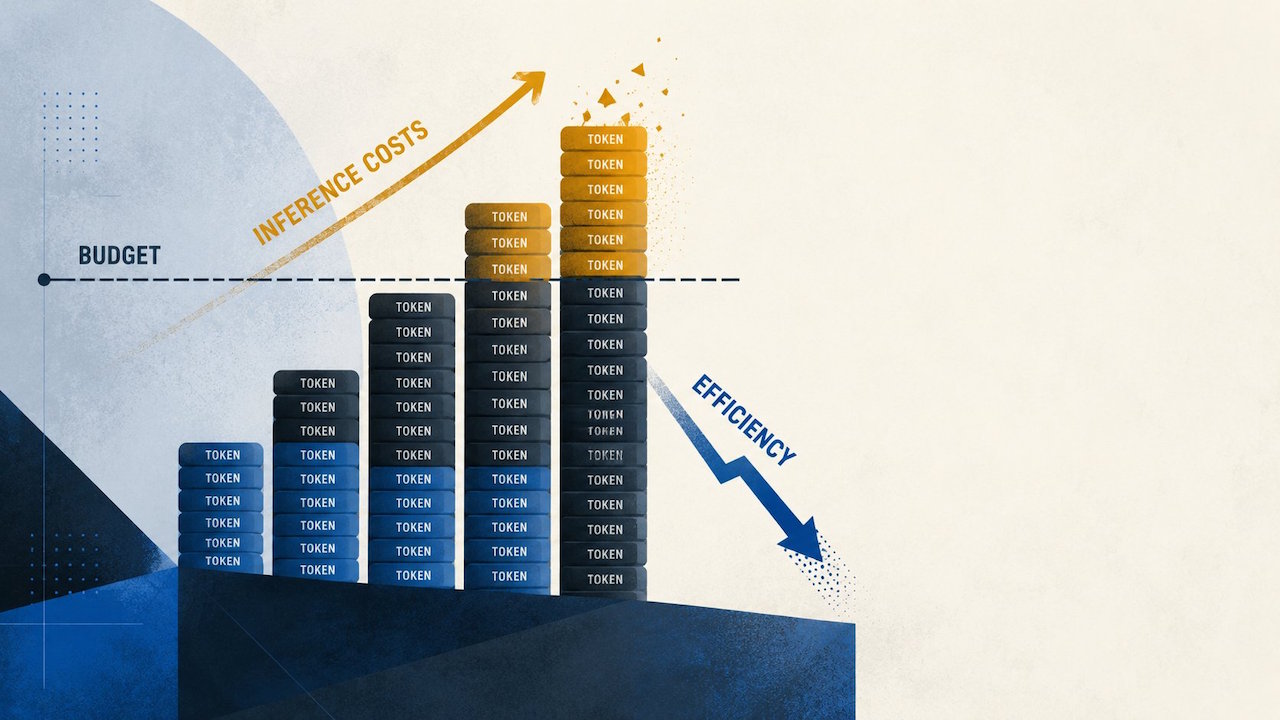

Completion Length Drift: The Hidden Driver of Budget Overruns

The OpenRouter analysis shows that small shifts in completion behavior can have an outsized budget impact. For prompts under 2,000 tokens, median completion length rose from 121 tokens on GPT-5.4 to 129 on GPT-5.5, a 7% increase. In the 2,000–10,000 token band, completions jumped from 140 to 213 tokens, a 52% increase. GPT-5.5 trims output in very long contexts, but the short and mid-range bands, where completions grew, are where many real applications sit. Retrieval assistants, coding copilots, workflow agents, and customer-support bots frequently operate with short prompts and repeated turns. Every tool retry, follow-up question, or retrieval reformulation adds completion tokens. Even if each response is only slightly longer, the effect compounds across millions of calls. The result is a steady rise in production costs that turns what looks like a modest per-request change into a structural cost issue for shared platforms.
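To see how a few extra tokens per response compound, the sketch below multiplies the median shifts quoted above by a call volume. The volume of 10 million calls per month per band is a hypothetical figure chosen for illustration, medians are used as a rough stand-in for averages, and the USD 30 rate is GPT-5.5's list price per million output tokens as quoted in this article.

```python
# Assumptions (not from the OpenRouter data): monthly call volume per band.
MONTHLY_CALLS = 10_000_000
# GPT-5.5 list price per million output tokens in USD, as quoted in this article.
GPT55_OUTPUT_USD_PER_M = 30.0

# Median completion length in tokens (GPT-5.4, GPT-5.5) per prompt band.
median_completion = {
    "under 2,000": (121, 129),
    "2,000-10,000": (140, 213),
}


def drift_cost(old_len: int, new_len: int,
               calls: int = MONTHLY_CALLS,
               usd_per_m: float = GPT55_OUTPUT_USD_PER_M) -> float:
    """USD cost of the extra completion tokens alone, at a fixed output rate."""
    extra_tokens = (new_len - old_len) * calls
    return extra_tokens / 1_000_000 * usd_per_m


for band, (old_len, new_len) in median_completion.items():
    growth = (new_len - old_len) / old_len * 100.0
    print(f"{band}: +{growth:.0f}% tokens, "
          f"about USD {drift_cost(old_len, new_len):,.0f} extra per month")
```

Under these assumptions the mid-range band adds 730 million output tokens a month, about USD 21,900 at the list rate, against roughly USD 2,400 for the short-prompt band. The calculation isolates length drift only and leaves out the change in per-token rates.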

From List Price to Real Bills: How Buyers Should Evaluate GPT-5.5

On paper, the list prices are straightforward. For short-context requests, GPT-5.4 was priced at USD 2.50 (approx. RM11.50) per million input tokens and USD 15 (approx. RM69.00) per million output tokens, while GPT-5.5 charges USD 5 (approx. RM23.00) and USD 30 (approx. RM138.00) respectively. GPT-5.5 Pro climbs higher still, at USD 30 (approx. RM138.00) per million input tokens and USD 180 (approx. RM828.00) per million output tokens. Yet the April usage data shows that list-price comparisons alone are a poor predictor of real deployment costs. Enterprise approval processes often start in finance with a rate card, but final bills are shaped by prompt length, completion drift, and user behavior after launch. Platform teams need to run narrow production tests on live traffic, capturing token usage across short and mid-range prompts before standardizing on GPT-5.5. Without that, OpenAI’s efficiency claims can mask recurring cost increases that only appear once the system is in full use.
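One way to run such a test is to log prompt and completion token counts per request, then total the cost by prompt-length band. The sketch below is a minimal version of that idea. The band boundaries follow the ones used in this article, the list prices are the ones quoted above, and the sample records are invented. It also ignores any discounts or surcharges a real bill may include, so it is a starting point and not a billing model.

```python
from collections import defaultdict

# List prices in USD per million tokens (input, output), as quoted above.
# The keys are plain labels, not API model identifiers.
LIST_PRICES = {
    "GPT-5.4": (2.50, 15.00),
    "GPT-5.5": (5.00, 30.00),
}


def band_for(prompt_tokens: int) -> str:
    """Assign a request to a prompt-length band."""
    if prompt_tokens < 2_000:
        return "under 2,000"
    if prompt_tokens <= 10_000:
        return "2,000-10,000"
    return "over 10,000"


def cost_by_band(records, model: str) -> dict:
    """Sum list-price cost in USD per band.

    records: iterable of (prompt_tokens, completion_tokens) pairs.
    """
    in_price, out_price = LIST_PRICES[model]
    totals = defaultdict(float)
    for prompt_tokens, completion_tokens in records:
        cost = (prompt_tokens * in_price + completion_tokens * out_price) / 1_000_000
        totals[band_for(prompt_tokens)] += cost
    return dict(totals)


# Invented sample of logged requests: (prompt_tokens, completion_tokens).
sample = [(800, 120), (1_500, 130), (6_000, 200), (40_000, 300)]

for model in LIST_PRICES:
    print(model, cost_by_band(sample, model))
```

Pricing the same records under both rate cards isolates the rate change. Capturing completion drift as well requires replaying the traffic through each model and logging the token counts each one actually produces.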

Strategic Model Choices: When GPT-5.5 Is Worth the Premium

Given the higher GPT-5.5 pricing, teams must be deliberate about where they deploy it. OpenRouter’s breakdown suggests the model is most economical on long-context tasks, where prompts exceed 10,000 tokens and shorter completions offset the higher per-token rates. In these scenarios, such as large document analysis or complex multi-step planning, GPT-5.5’s reduced output length can yield better value. By contrast, high-volume products that lean on short and mid-range prompts may see 49–92% higher effective costs. Organizations might respond by routing GPT-5.5 only to premium tiers, long-context jobs, or cases where its quality uplift is clearly measurable. At the same time, they can benchmark alternatives like Anthropic’s Claude Opus 4.7, especially since some comparisons have found GPT-5.5 base output pricing higher while input pricing remains similar. Ultimately, real workload testing, not marketing benchmarks, should guide whether GPT-5.5 becomes a default or a targeted, high-value option.
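The routing idea can be sketched as a small policy function. This is an illustration under stated assumptions: the 10,000-token threshold comes from the breakdown described above, the `premium_tier` flag and the fallback to GPT-5.4 are hypothetical choices, and the returned strings are plain labels and not API model identifiers.

```python
LONG_CONTEXT_THRESHOLD = 10_000  # prompt tokens; threshold taken from the article


def choose_model(prompt_tokens: int, premium_tier: bool = False) -> str:
    """Route to GPT-5.5 only for premium traffic or long-context prompts."""
    if premium_tier or prompt_tokens > LONG_CONTEXT_THRESHOLD:
        return "GPT-5.5"
    # Assumed default: keep short and mid-range prompts on the cheaper model.
    return "GPT-5.4"


# Example requests: (prompt_tokens, premium_tier).
for tokens, premium in [(1_200, False), (6_000, True), (45_000, False)]:
    print(tokens, premium, "->", choose_model(tokens, premium))
```

A production router would also need a measurable quality signal to justify the premium on specific tasks, which this sketch leaves out.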