From Beating Humans in Games to Beating Them in Sports

Embodied AI is starting to leave the lab and step into stadiums. Two headline moments capture this shift: Sony’s Ace table tennis robot, which has defeated elite and even professional players under official rules, and Honor’s Lightning, a humanoid robot that completed a half-marathon of roughly 21 kilometers in 50 minutes and 26 seconds, faster than the human world record over the same distance. These feats extend a decades-long trend of AI mastering games such as chess, Go and racing simulators, but they do so in the messy, fast-paced physical world. They show that robots can now combine high-speed perception, precise control and learned strategies to outperform people in narrow, well-defined tasks. At the same time, they invite a key question: when a robot beats humans at ping-pong or a half-marathon, is it really matching human competence, or just excelling in a carefully engineered performance?

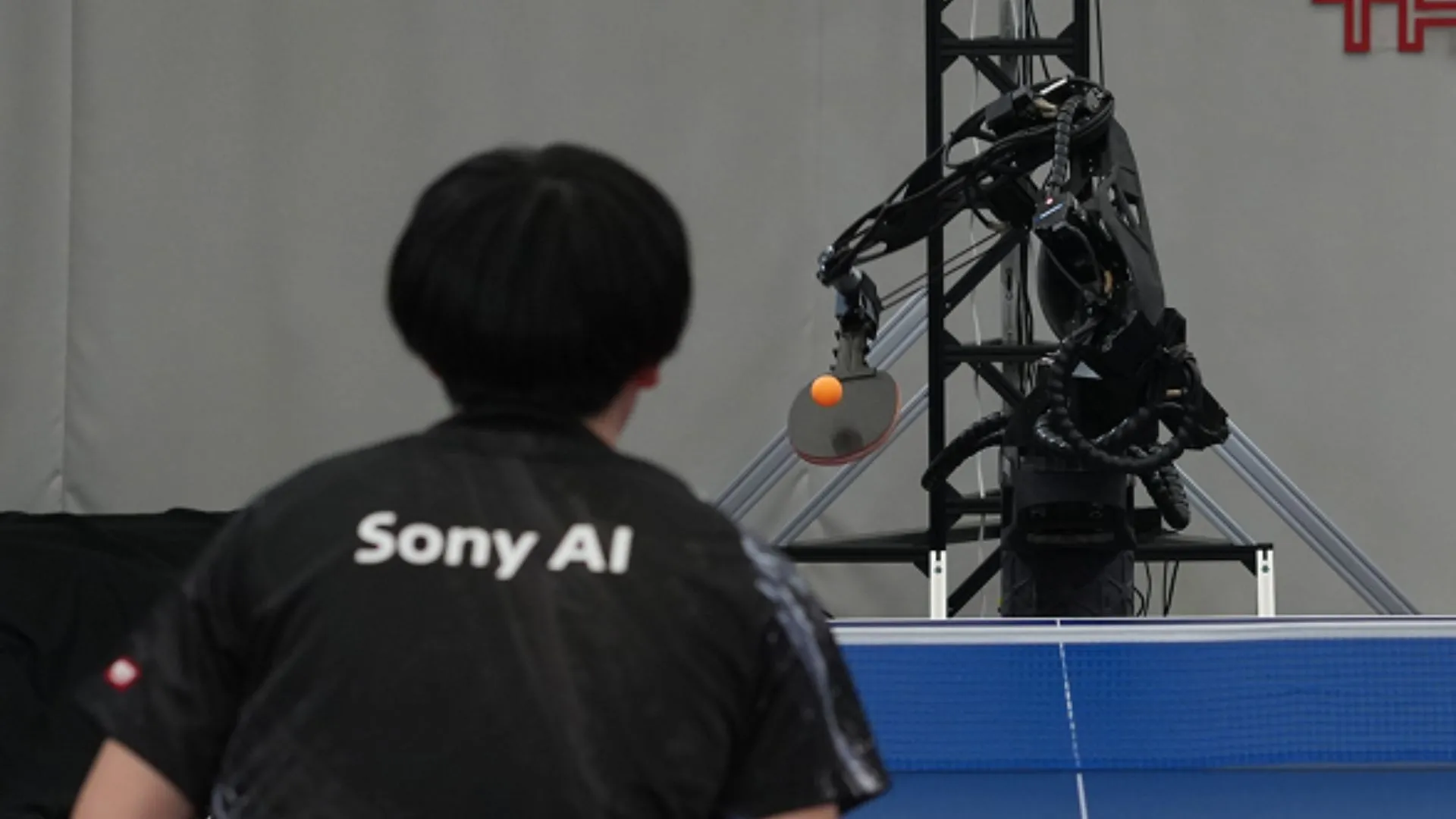

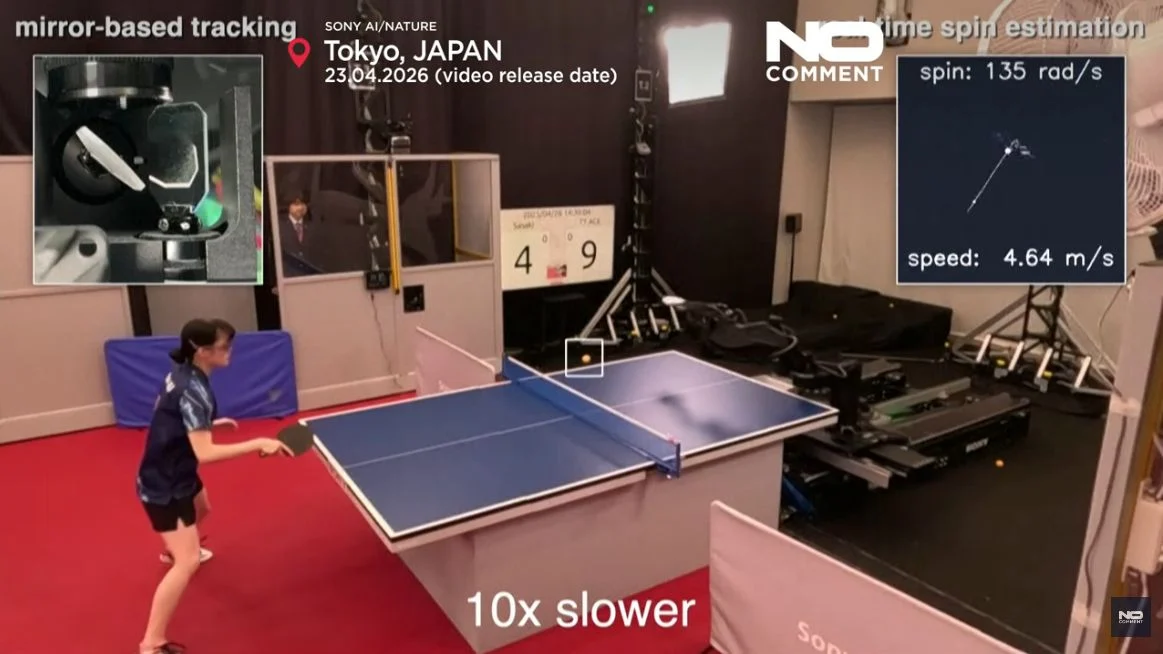

Sony Ace: Expert-Level Ping-Pong Inside a Perfectly Framed Game

The Sony Ace table tennis robot is a showcase of how far physical AI has come in controlled environments. Ace uses nine synchronized high-speed cameras and multiple vision systems to track the ball’s 3D position, velocity and spin in real time, then drives an eight-joint robotic arm whose control policy was trained entirely in simulation using reinforcement learning. In tests, Ace won three out of five matches against elite amateur players and even defeated professional opponents under International Table Tennis Federation rules, matching or exceeding human reaction times and decision-making at the table. This is a genuine milestone in high-speed perception, spin tracking and precision control. Yet Ace’s triumph rests on a constrained setting: fixed lighting, a known table, consistent ball properties and clear rules. The system doesn’t need to navigate crowds, adapt to slippery floors or handle sudden changes in context. It is superhuman in a narrow slice of reality, not a general-purpose athlete.
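To give a feel for what "tracking position and velocity" means in the simplest possible terms, here is a minimal Python sketch. It is not Sony's method: it assumes only two timestamped 3D ball positions, ignores air drag, spin (the Magnus effect) and bounces, and every name in it (`Sample`, `estimate_velocity`, `predict_at_plane`) is hypothetical. A real system like Ace has to model exactly the effects this toy leaves out.

```python
from dataclasses import dataclass
from typing import Tuple

G = 9.81  # gravitational acceleration in m/s^2


@dataclass
class Sample:
    """One hypothetical camera-derived ball observation."""
    t: float  # timestamp in seconds
    x: float  # position along the table in metres
    y: float  # lateral position in metres
    z: float  # height in metres


def estimate_velocity(a: Sample, b: Sample) -> Tuple[float, float, float]:
    """Estimate the ball's velocity at sample b from two observations.

    Assumes drag-free flight: constant horizontal velocity and
    constant downward acceleration G between the two samples.
    """
    dt = b.t - a.t
    if dt <= 0:
        raise ValueError("samples must be in increasing time order")
    vx = (b.x - a.x) / dt
    vy = (b.y - a.y) / dt
    # (b.z - a.z) / dt is the mean vertical velocity over the interval;
    # under gravity the velocity at b is lower than that by G * dt / 2.
    vz = (b.z - a.z) / dt - 0.5 * G * dt
    return vx, vy, vz


def predict_at_plane(
    b: Sample, v: Tuple[float, float, float], x_plane: float
) -> Tuple[float, float, float]:
    """Predict (time_from_b, y, z) when the ball reaches x == x_plane."""
    vx, vy, vz = v
    if vx == 0:
        raise ValueError("ball is not moving along the table")
    t = (x_plane - b.x) / vx
    if t < 0:
        raise ValueError("ball is moving away from the plane")
    y = b.y + vy * t
    z = b.z + vz * t - 0.5 * G * t * t
    return t, y, z


# Example with made-up observations taken 10 ms apart.
first = Sample(t=0.00, x=0.00, y=0.000, z=0.3000)
second = Sample(t=0.01, x=0.05, y=0.005, z=0.3095)
velocity = estimate_velocity(first, second)
print(predict_at_plane(second, velocity, x_plane=1.0))
```

Even this toy shows why the constrained setting matters: the prediction only holds if the ball, table and lighting behave the way the model assumes.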

Honor Lightning: A Humanoid Robot Marathon Record With Training Wheels

Honor’s Lightning, a red humanoid robot with long, runner-inspired legs and motors kept cool by liquid-cooling technology adapted from smartphones, shattered expectations in the Beijing E-Town humanoid robot half-marathon. Lightning finished the 13.1-mile (21.1 km) course in 50 minutes and 26 seconds, outpacing not only all human participants in the event but also the standing human world record. Other Honor units finished close behind, and a separate, remote-controlled Honor robot completed the route in 48 minutes and 19 seconds under different scoring rules. The robot’s performance highlights major advances in locomotion, energy management and mechanical robustness: just a year earlier, only six of twenty-one robots finished the same race, with many overheating or collapsing. But this humanoid robot half-marathon was tightly structured. The course was premapped, robots ran in dedicated lanes, and support crews followed closely. Lightning even crashed into a barricade and had to be reset by handlers, underscoring how fragile its “record” really was outside ideal conditions.
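To put those finishing times in runner's terms, the short Python sketch below converts them into pace per kilometer and average speed. It assumes the course was the standard half-marathon distance of 21.0975 km, which the article gives only as 13.1 miles, and the helper names (`to_seconds`, `pace_per_km`, `speed_kmh`) are illustrative.

```python
HALF_MARATHON_KM = 21.0975  # standard half-marathon distance (assumed course length)


def to_seconds(minutes: int, seconds: int) -> int:
    """Convert a finishing time given as minutes and seconds to total seconds."""
    return minutes * 60 + seconds


def pace_per_km(total_seconds: float, distance_km: float = HALF_MARATHON_KM) -> str:
    """Return the average pace as 'M:SS' per kilometer, rounded to the second."""
    pace = round(total_seconds / distance_km)
    minutes, seconds = divmod(pace, 60)
    return f"{minutes}:{seconds:02d}"


def speed_kmh(total_seconds: float, distance_km: float = HALF_MARATHON_KM) -> float:
    """Return the average speed in kilometers per hour."""
    return distance_km / total_seconds * 3600


# Finishing times reported in the article.
results = {
    "Lightning": to_seconds(50, 26),
    "Remote-controlled Honor unit": to_seconds(48, 19),
}

for name, total in results.items():
    print(f"{name}: {pace_per_km(total)} per km, {speed_kmh(total):.1f} km/h")
```

Under that distance assumption, Lightning averaged roughly 2:23 per kilometer, or about 25.1 km/h, sustained for the whole course.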

Where Embodied AI Truly Excels — and Where It Still Falls Apart

Events like the humanoid robot half-marathon and embodied AI sports trials reveal a clear pattern. Robots now outperform humans in areas that reward precision, endurance and repeatability. A machine doesn’t get tired, doesn’t worry about pacing or hydration, and can maintain a mechanically perfect gait or swing if the environment stays predictable. Cooling breakthroughs, robust hardware and simulation-trained controllers have drastically cut failure rates: more robots are finishing races, and their times have plummeted from hours to under an hour. Yet the same races expose how limited these systems remain when conditions deviate from the script. Lightning’s crash into a barricade, the reliance on premapped routes, and the fact that most entrants are still remotely piloted point to weak autonomy. Roboticists warn that many observers confuse performance with competence; a robot’s record time does not translate into human-like judgment, safety or resilience in crowded, unstructured settings.

From Spectacle to Service: The Real Test for Physical AI

Sports victories are fast becoming marketing campaigns for embodied AI. Spectacular headlines announcing that a robot beats humans at ping-pong or outruns the fastest human runner help normalize humanoids and attract investors. Competitions also provide brutal stress tests for balance, control and durability, accelerating iteration in locomotion, cooling and control software. But these showcase feats risk overhyping progress if we blur the line between stadium-ready demos and everyday usefulness. Real-world jobs, such as logistics in cluttered warehouses, inspection in chaotic industrial sites, or home assistance amid pets, children and fragile objects, demand continuous adaptation to the unexpected. The next frontier is whether the perception and control stacks behind Sony Ace and Honor Lightning can be transplanted into these messy environments, where there are no premapped routes, no parallel lanes and no handlers jogging behind. Until robots can improvise safely around real people, their biggest wins will remain impressive but fundamentally staged performances.