From Isolated Pipelines to a Connected Model Lifecycle

As organizations accumulate hundreds of datasets, models, and experiments, model lifecycle management quickly becomes a tangle of opaque pipelines and undocumented dependencies. Netflix’s engineering team argues that traditional tooling, built around linear workflows, cannot keep pace once multiple teams start reusing datasets, features, and workflows in different ways. Their response is the Model Lifecycle Graph, a metadata-centric view of ML systems that treats every ML asset (datasets, features, models, evaluations, workflows, and production services) as a node in a graph. Instead of seeing a model as the endpoint of a pipeline, engineers can inspect its full lineage, from upstream data sources through feature transformations to downstream consumers. This shift reframes ML infrastructure scaling as a graph problem: understanding and navigating relationships, rather than simply orchestrating steps, becomes the core capability for operating ML at enterprise scale.
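To make the lineage idea concrete, here is a minimal sketch of assets as typed nodes with "depends on" edges, plus an upstream traversal. It is an illustration only: the `Asset` and `LifecycleGraph` classes and the asset names are hypothetical and are not taken from Netflix’s implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """A node in the graph. `kind` might be dataset, feature, model, service."""
    kind: str
    name: str


class LifecycleGraph:
    """Hypothetical in-memory graph: each asset maps to what it depends on."""

    def __init__(self):
        self._upstream = {}

    def add_dependency(self, consumer, producer):
        # Record that `consumer` is built from (depends on) `producer`.
        self._upstream.setdefault(consumer, set()).add(producer)

    def lineage(self, asset):
        """Return every asset reachable upstream of `asset`."""
        seen = set()
        stack = [asset]
        while stack:
            current = stack.pop()
            for parent in self._upstream.get(current, ()):
                if parent not in seen:
                    seen.add(parent)
                    stack.append(parent)
        return seen


# Assumed example assets, chained dataset -> feature -> model -> service.
raw = Asset("dataset", "viewing_events")
feat = Asset("feature", "watch_time_7d")
model = Asset("model", "ranker_v2")
svc = Asset("service", "homepage_api")

graph = LifecycleGraph()
graph.add_dependency(feat, raw)
graph.add_dependency(model, feat)
graph.add_dependency(svc, model)

upstream_of_model = graph.lineage(model)  # the feature and the raw dataset
```

A production system would presumably keep this in a graph database or metadata service rather than in memory, but the traversal is the same in principle.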

Why a Graph Suits Enterprise ML Infrastructure Scaling

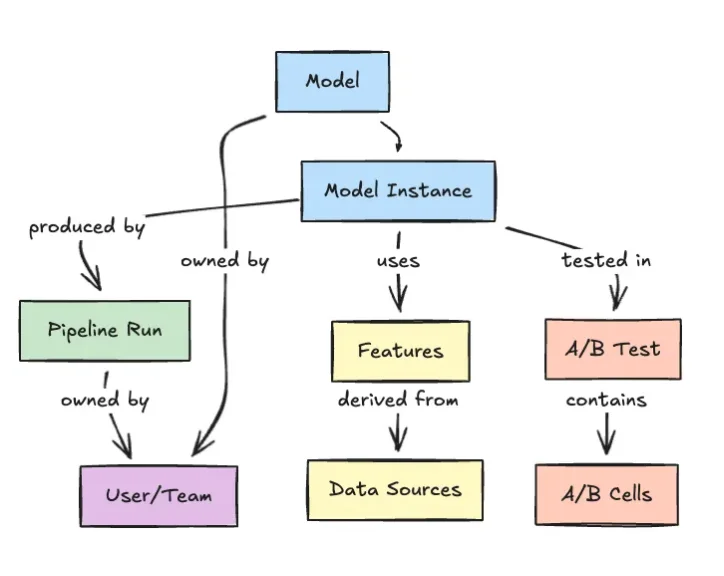

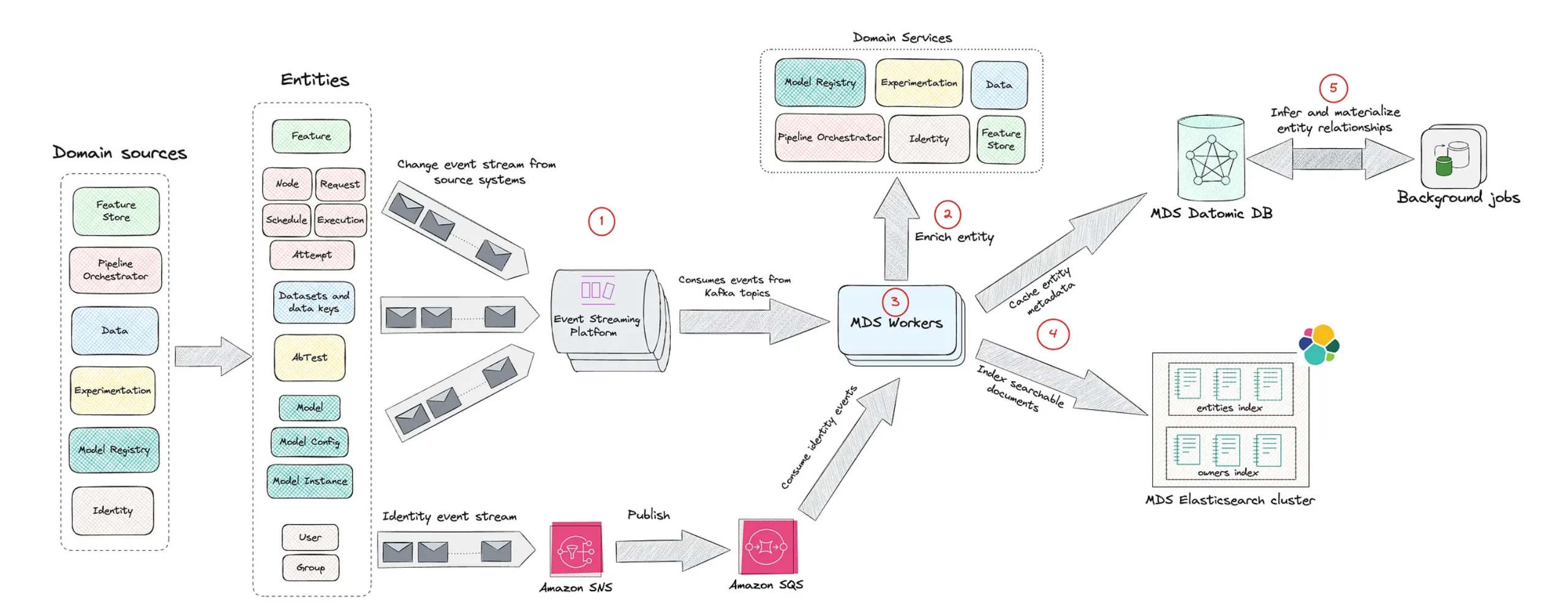

Netflix’s Model Lifecycle Graph is designed around the reality that ML assets rarely exist in isolation. A single recommendation model might draw from several datasets, use shared feature stores, run through multiple evaluation workflows, and power multiple production services, all evolving independently. Modeling these connections as traversable graph edges gives platform teams and practitioners a more faithful representation of how the system behaves over time. It supports impact analysis when a dataset changes, lineage tracking for governance and compliance, and dependency visualization for operational readiness. Compared with pipeline-centric views, the graph better reflects cross-cutting concerns like feature reuse, multi-model experiments, and shared infrastructure. For enterprise ML operations, this architecture turns previously implicit coupling into explicit structure, making it easier to reason about blast radius, technical debt, and long-term maintainability as ML systems grow in size and complexity.
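Impact analysis of the kind described above can be sketched as a breadth-first walk over "consumed by" edges. The edge map, asset identifiers, and the `blast_radius` function below are assumptions made for illustration and do not reflect Netflix’s actual schema or API.

```python
from collections import deque

# Hypothetical edges: each producer maps to its direct consumers.
DOWNSTREAM = {
    "dataset:viewing_events": ["feature:watch_time_7d", "feature:genre_affinity"],
    "feature:watch_time_7d": ["model:ranker_v2"],
    "feature:genre_affinity": ["model:ranker_v2", "model:search_v1"],
    "model:ranker_v2": ["service:homepage_api"],
    "model:search_v1": ["service:search_api"],
}


def blast_radius(changed, edges):
    """Return {asset: hops} for every asset downstream of `changed`."""
    hops = {}
    queue = deque([(changed, 0)])
    while queue:
        node, dist = queue.popleft()
        for consumer in edges.get(node, []):
            # Skip assets already reached by a path that is no longer.
            if consumer not in hops and consumer != changed:
                hops[consumer] = dist + 1
                queue.append((consumer, dist + 1))
    return hops


impacted = blast_radius("dataset:viewing_events", DOWNSTREAM)

# Production services that would need attention if the dataset changes.
services = sorted(a for a in impacted if a.startswith("service:"))
```

Recording the hop count alongside each impacted asset is one way to separate direct consumers from transitive ones when deciding who to notify first.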

Enabling Discoverability, Reuse, and Self-Service ML

Beyond dependency tracking, Netflix positions the Model Lifecycle Graph as a way to democratize machine learning across engineering teams. By capturing models, datasets, and features in a unified, queryable graph, the platform doubles as an internal catalog for ML assets. Data scientists can discover existing datasets and features, inspect how they are used in production models, and reuse proven components instead of rebuilding them. Engineers gain visibility into ownership, evaluations, and deployment contexts, improving governance and accountability without requiring centralized gatekeeping. This self-service orientation reduces duplication of effort and shortens the path from idea to production-ready model, while still preserving traceability and lifecycle control. In effect, the graph becomes both a map and a shared language for enterprise ML operations, aligning experimentation, deployment, and maintenance around a common representation of how machine learning truly flows through the organization.
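The catalog role can be illustrated with a small query over asset metadata: find features that already feed production models, ranked by how widely they are reused. The `CatalogEntry` fields, the sample entries, and `find_reusable` are hypothetical and stand in for whatever query interface the real platform exposes.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """Assumed metadata record for one ML asset."""
    name: str
    kind: str
    owner: str
    used_by: list = field(default_factory=list)  # names of direct consumers


# Made-up catalog contents for illustration.
CATALOG = [
    CatalogEntry("watch_time_7d", "feature", "personalization",
                 ["ranker_v2", "search_v1"]),
    CatalogEntry("genre_affinity", "feature", "personalization", ["ranker_v2"]),
    CatalogEntry("draft_embedding", "feature", "research"),
    CatalogEntry("viewing_events", "dataset", "data-platform", ["watch_time_7d"]),
]


def find_reusable(catalog, kind, min_consumers=1):
    """Return entries of `kind` with enough consumers, most reused first."""
    matches = [
        entry for entry in catalog
        if entry.kind == kind and len(entry.used_by) >= min_consumers
    ]
    return sorted(matches, key=lambda entry: len(entry.used_by), reverse=True)


proven = find_reusable(CATALOG, "feature")
names = [entry.name for entry in proven]
owners = {entry.name: entry.owner for entry in proven}
```

Returning the owner with each match reflects the point about accountability: a data scientist who finds a reusable feature also learns which team to contact about it.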

Part of a Broader Shift to Metadata-Centric ML Platforms

Netflix’s approach echoes a broader movement toward metadata-first platforms in both data and software engineering. Systems like LinkedIn’s DataHub and lineage initiatives such as OpenLineage also model datasets, pipelines, and ownership as graph structures, while ML platforms like Uber’s Michelangelo have long emphasized lifecycle management, feature reuse, and reproducibility. Even internal developer portals such as Spotify’s Backstage rely on graph-like catalogs to represent services and infrastructure. Netflix’s Model Lifecycle Graph brings this philosophy squarely into machine learning systems architecture, prioritizing traceability and institutional visibility over short-term experimentation convenience. As ML becomes embedded across more products and workflows, this signals a shift: metadata, lineage, and structured model lifecycle management are turning into core architectural requirements. For enterprises grappling with ML infrastructure scaling, Netflix’s graph suggests that success will hinge less on adding yet another pipeline, and more on deeply understanding how everything already connects.